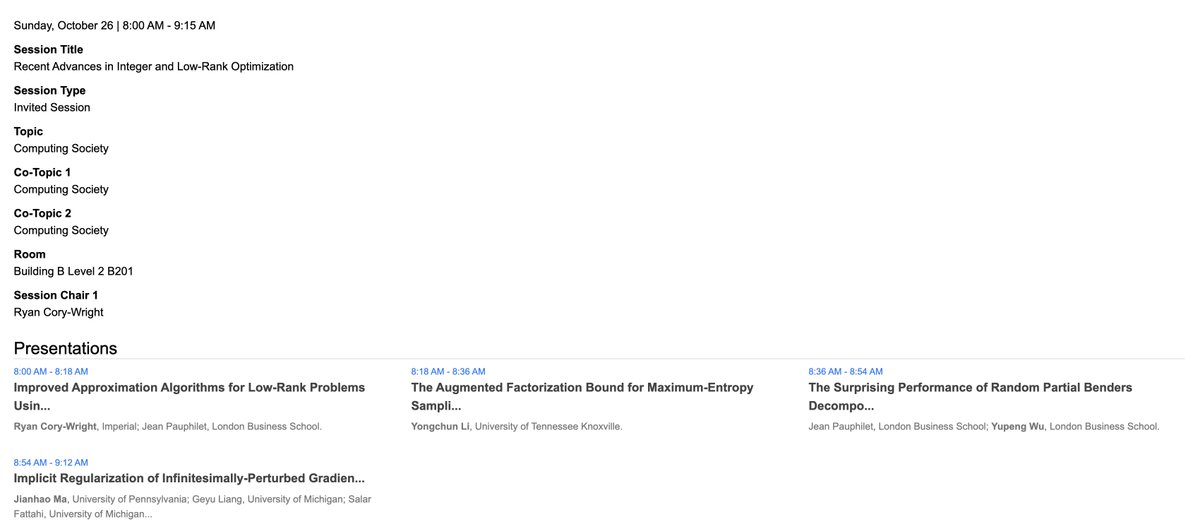

Pinned Tweet

New paper 🚨 optimization-online.org/2025/01/improv… “Improved Approximation Algorithms for Low-Rank Problems Using Semidefinite Optimization” (w/ Jean Pauphilet)

Inspired by Goemans-Williamson’s success in binary quadratic optimization, we generalize their approach to semi-orthogonal and low-rank matrices.
