Fisherman

7.4K posts

@Rybens92

Here just to support good open source, Linux Gaming and AI projects.

After @Pinterest @Airbnb @NotionHQ @cursor_ai, today it’s @eoghan @intercom publicly sharing that they’re finding it better, cheaper, faster to use and train open models themselves rather than use APIs for many tasks. And hundreds of other companies are doing the same without sharing. Ultimately, I believe the majority of AI workflows will be in-house based on open-source (vs API). It took much more time than we anticipated but it’s happening now!

built computer use into hermes agent
tell it what to do from your phone → it controls your mac
no sandbox
real desktop, real apps, real time
a complex example : diagram on freeform
@Teknium @NousResearch @claudeai
for more : github.com/NousResearch/h…

TurboQuant is looking pretty solid. 🔥
> Original idea was to use it just for KV cache where context tokens are stored
> Now it is expanding to be used with models
> On Qwen 3.5-27B it shrinks the model down to 12.9B
> 6X memory savings vs 16-bit precision
> Stays accurate

Valve's Steam Machine price could be saved thanks to collapsing RAM prices

so far i tested 2 nvidia models on my 3090. two different failures.

cascade 2: 187 tok/s, blank screens on every coding test. speed king, can't code.

openreasoning 32B dense: 36 tok/s, overthinks everything. prompted hello and it solved math problems for 2 minutes straight. gave it a real build task and it wandered in its own reasoning until it was unusable. 5 million deepseek reasoning traces turned this model into a thinker that forgot how to ship.

3B active MoE couldn't hold coherence. 32B dense couldn't stop reasoning long enough to write code. nvidia's 3090 tier is not it for agent coding.

but i am not done. the question is whether nvidia needs scale to compete with qwen or if the architecture itself is the problem. loading the big fight next.

Heard about Hermes Agent and actually want to try it? Here's a full install and setup guide, with a special trick at the end!

* Demo on Ubuntu 24
* Telegram chat setup
* Config walk-through

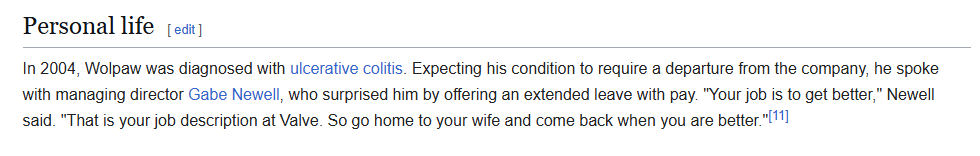

Lmao Gabe Newell, when faced with an employee with cancer, changed their employment status to “get better”, kept them at full salary through the entire ordeal, and paid out the insurance.

OpenClaw is amazing as a concept but I am getting genuinely sick and tired of at least one thing breaking every single day

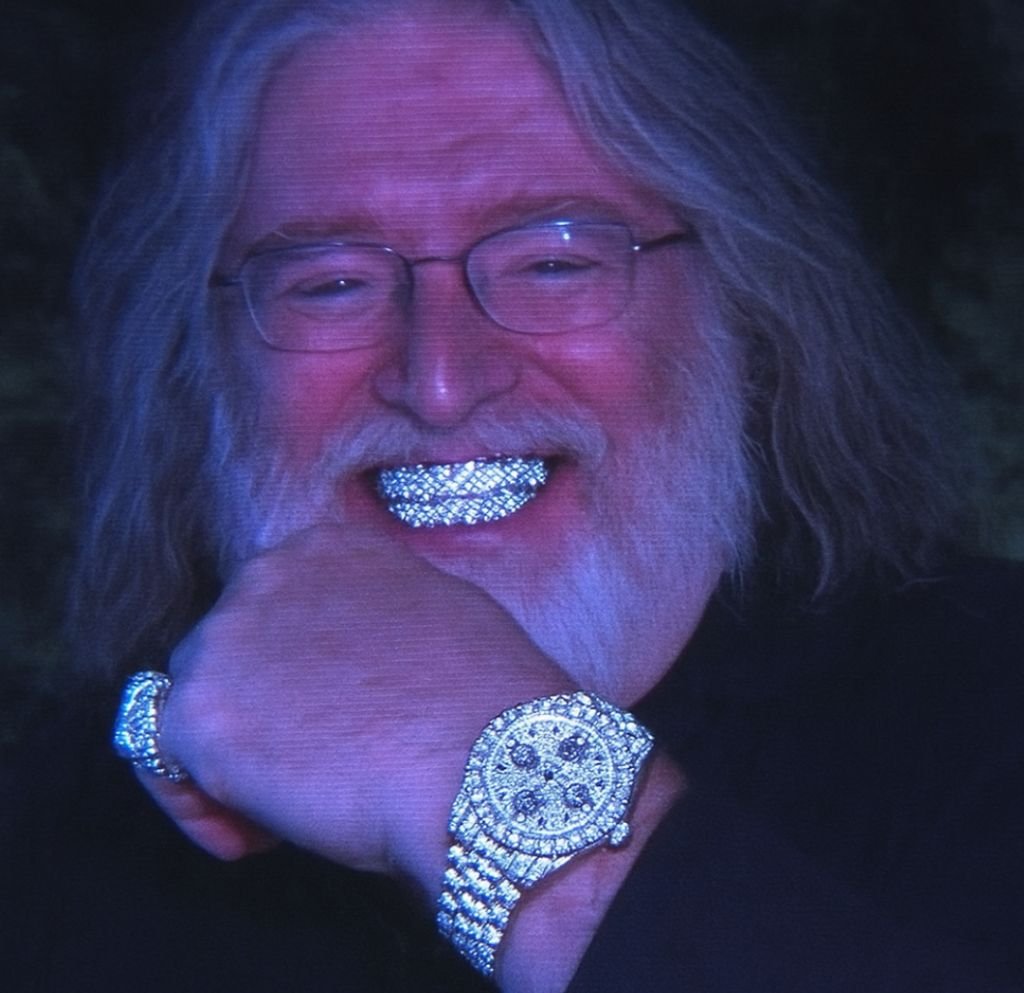

KwaiKAT has released KAT-Coder-Pro V2, a non-reasoning model that scores 44 on the Artificial Analysis Intelligence Index, an 8-point improvement from KAT-Coder-Pro V1

@KwaiAICoder has updated their flagship proprietary coding model with the release of KAT-Coder-Pro V2. KAT-Coder-Pro V2 achieves 44 on the Artificial Analysis Intelligence Index, matching Claude Sonnet 4.6 (non-reasoning) and trailing only Claude Opus 4.6 (non-reasoning, 46) among non-reasoning models. At ~9M output tokens, it is also more token efficient than Claude Opus 4.6 (~11M), Claude Sonnet 4.6 (~14M), and reasoning models with similar intelligence such as DeepSeek V3.2 (reasoning, ~61M) and Qwen3.5 397B A17B (reasoning, ~86M).

KAT-Coder-Pro V2 is a non-reasoning model, unlike all of the current frontier language models which ‘think’ before answering. Typically, reasoning variants score higher on the Intelligence Index than their non-reasoning counterparts, but consume more output tokens and are less suited to latency-sensitive workloads.

Key Highlights:

➤ 🧠 Higher overall intelligence, but regression in long context reasoning and knowledge recall: KAT-Coder-Pro V2 scores 44 on the Artificial Analysis Intelligence Index, an 8-point improvement from KAT-Coder-Pro V1 and matching Claude Sonnet 4.6 (non-reasoning, max effort). It performs well on tool use (90% on Tau2-Telecom), but regresses compared to KAT-Coder-Pro V1 on long-context reasoning and knowledge, falling 8 p.p. on AA-LCR (66%) and 17 p.p. on HLE (16%).

➤ 🤖 Agentic capability improvements: KAT-Coder-Pro V2 shows major improvements on our agentic evaluations. On Terminal-Bench Hard, it scores 49%, up 40 p.p. from KAT-Coder-Pro V1, making it the highest-scoring non-reasoning model, matching Claude Opus 4.6 (non-reasoning, 49%) and ahead of Claude Sonnet 4.6 (non-reasoning, 46%). KAT-Coder-Pro V2 also shows improvement in GDPval-AA, scoring 1123 (+304 Elo from V1), but still sits behind models such as DeepSeek V3.2 (1198) and Qwen3.5 397B A17B (1202).

➤ ⚙️ High token efficiency: KAT-Coder-Pro V2 is a non-reasoning model and uses fewer tokens than peers with similar intelligence. It uses 8.7M output tokens to run the Artificial Analysis Intelligence Index, below Claude Opus 4.6 (non-reasoning, ~11M) and Claude Sonnet 4.6 (non-reasoning, ~14M), though this is ~2x higher than its predecessor, KAT-Coder-Pro V1 (~4.5M). It also uses significantly fewer tokens than similarly intelligent reasoning models such as DeepSeek V3.2 (reasoning, ~61M) and Qwen3.5 397B A17B (reasoning, ~86M).

➤ $ Improved cost efficiency: KAT-Coder-Pro V2 costs $73 to run the Artificial Analysis Intelligence Index, down from $76 for V1, as it uses fewer input tokens by requiring fewer turns in agentic evaluations. This makes it one of the most cost-efficient models at its intelligence level, costing less than Qwen3.5 397B A17B (reasoning, $418) and Claude Sonnet 4.6 (non-reasoning, $1397). KAT-Coder-Pro V2 is currently priced at $0.30/$1.20 per 1M input/output tokens on StreamLake and AtlasCloud API endpoints.

➤ ⚡ Low end-to-end response time: KAT-Coder-Pro V2 runs at ~109 output tokens per second, far ahead of Claude Opus 4.6 (non-reasoning, 39 OTPS) and Claude Sonnet 4.6 (non-reasoning, 43 OTPS). Because it also has a low time to first token without any reasoning delay, it delivers one of the fastest end-to-end response times, which measures the time taken from request sent to final output returned.

Model details:

➤ Availability: KAT-Coder-Pro V2 is available via StreamLake and AtlasCloud API endpoints

➤ Context Window: 256K tokens (equivalent to KAT-Coder-Pro V1)

➤ Multi-modal capabilities: Text input and output only
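
The cost and speed figures quoted above can be related with some simple arithmetic. The sketch below is only illustrative: it assumes the $73 index cost is billed at the quoted list prices ($0.30 / $1.20 per 1M input/output tokens) with no caching or other discounts, which the post does not state. The function names are hypothetical helpers, not part of any API.

```python
# Prices quoted in the post, in USD per 1M tokens.
INPUT_PRICE_PER_M = 0.30
OUTPUT_PRICE_PER_M = 1.20


def run_cost(input_tokens_m: float, output_tokens_m: float) -> float:
    """Cost in USD for a run, with token counts given in millions."""
    return input_tokens_m * INPUT_PRICE_PER_M + output_tokens_m * OUTPUT_PRICE_PER_M


def implied_input_tokens_m(total_cost: float, output_tokens_m: float) -> float:
    """Input tokens (in millions) implied by a total cost and an output token count.

    Assumption: the whole bill is list-price input plus list-price output.
    """
    output_cost = output_tokens_m * OUTPUT_PRICE_PER_M
    return (total_cost - output_cost) / INPUT_PRICE_PER_M


def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Time spent streaming output, ignoring time to first token."""
    return output_tokens / tokens_per_second


if __name__ == "__main__":
    # Output share of the $73 run at 8.7M output tokens.
    print(round(run_cost(0.0, 8.7), 2))
    # Input tokens (millions) that would account for the remainder.
    print(round(implied_input_tokens_m(73.0, 8.7), 1))
    # Streaming time for a 1,000-token answer at the quoted speeds.
    for name, otps in [("KAT-Coder-Pro V2", 109), ("Claude Opus 4.6", 39)]:
        print(name, round(generation_seconds(1000, otps), 1), "s")
```

Under that assumption, output tokens account for only a small part of the bill and most of the cost comes from input tokens, which is consistent with the post attributing the V1 to V2 saving to fewer agentic turns.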