Baran Hashemi

994 posts

@Rythian47

Physicist | Postdoc at MPI for Mathematics in the Sciences, AI for Mathematics

1/ Are frontier LLMs the only path to AI math breakthroughs? I think not! We introduce FlowBoost, a lightweight RL + Flow-Matching framework that discovers new extremal geometric structures, beating AlphaEvolve with 100–1000× less compute using zero-shot, geometry-aware, reward-guided generation. 🚀 arXiv: arxiv.org/pdf/2601.18005

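In his book «ما چگونه ما نشدیم» ("How We Did Not Become Us"), Amir-Mohammad Gamini rightly points out that al-Ghazali's critique of Aristotelian natural philosophy not only did not hinder science, but also spurred the Maragha school's critique of the Ptolemaic system. Science in the Islamic world matured not through the "Tahafut" itself, but in dialogue with it.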

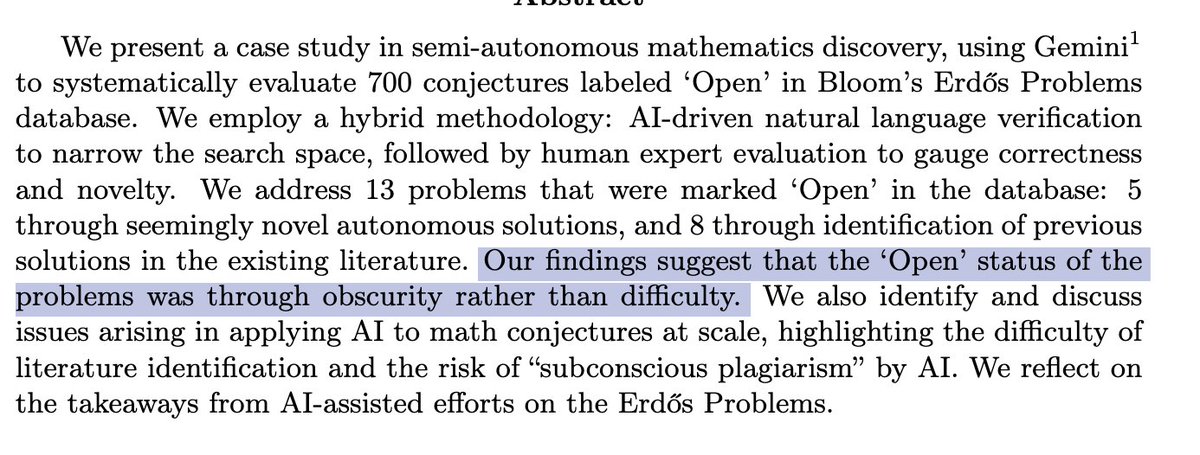

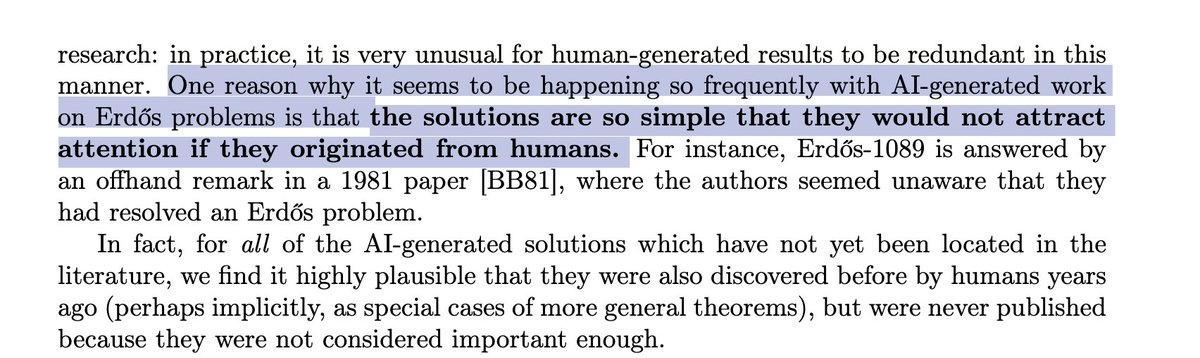

Excited to share our latest work: "Semi-Autonomous Mathematics Discovery with Gemini." We used Gemini to systematically evaluate 700 "open" conjectures in the Erdős Problems database. The result? We addressed 13 problems marked as open—finding 5 novel autonomous solutions and identifying 8 existing solutions missed by previous literature. Read the full case study here: arxiv.org/abs/2601.22401

Okay looks like I can now talk about Aletheia on the Erdős Problems! arxiv.org/abs/2601.22401…
