Pinned Tweet

Generate PROMPTS like a PRO 😹 👇

#stablediffusion #aipromptgenerator

🔖 Bookmark (save) for future reference.

🩷 Like if you loved it.

🖊️ Comment with suggestions or if you are getting any errors.


Stable Diffusion Tutorials

2.4K posts

@SD_Tutorial

👉 Local installation of AI models 👉 ComfyUI workflows 👉 Tutorials (image gen, video gen). Follow the website 👇👇

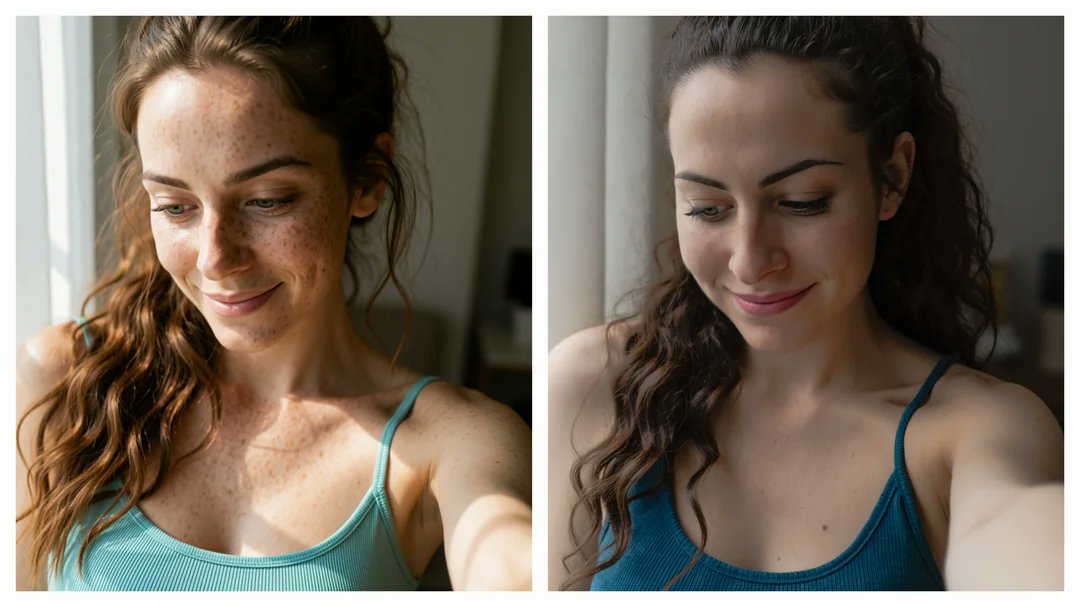

FLUX.2 Klein + AsymFlow with no VAE:
- Builds hyper-realistic images directly in pixel space rather than in compressed latents
- Sharper textures and superior fidelity
- 40% faster
- Low-rank noise parameterization solves high-dimensional
- Comfy support incoming 👇

hanshengchen.com/asymflow/

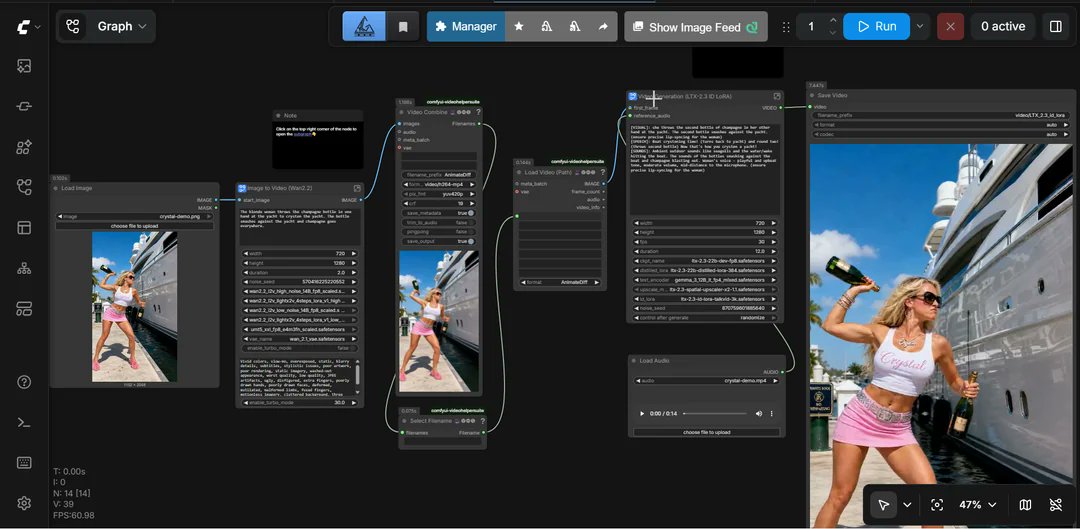

LTX 2.3 😉🎶🎵 Music Video 📹 Creator in ComfyUI. These workflows give creators a fast, fully automated setup for building cinematic music video clips. HF Repo: 👇 huggingface.co/vrgamedevgirl8…