SID

85 posts

@SID_AI

solving retrieval one model at a time | @ycombinator

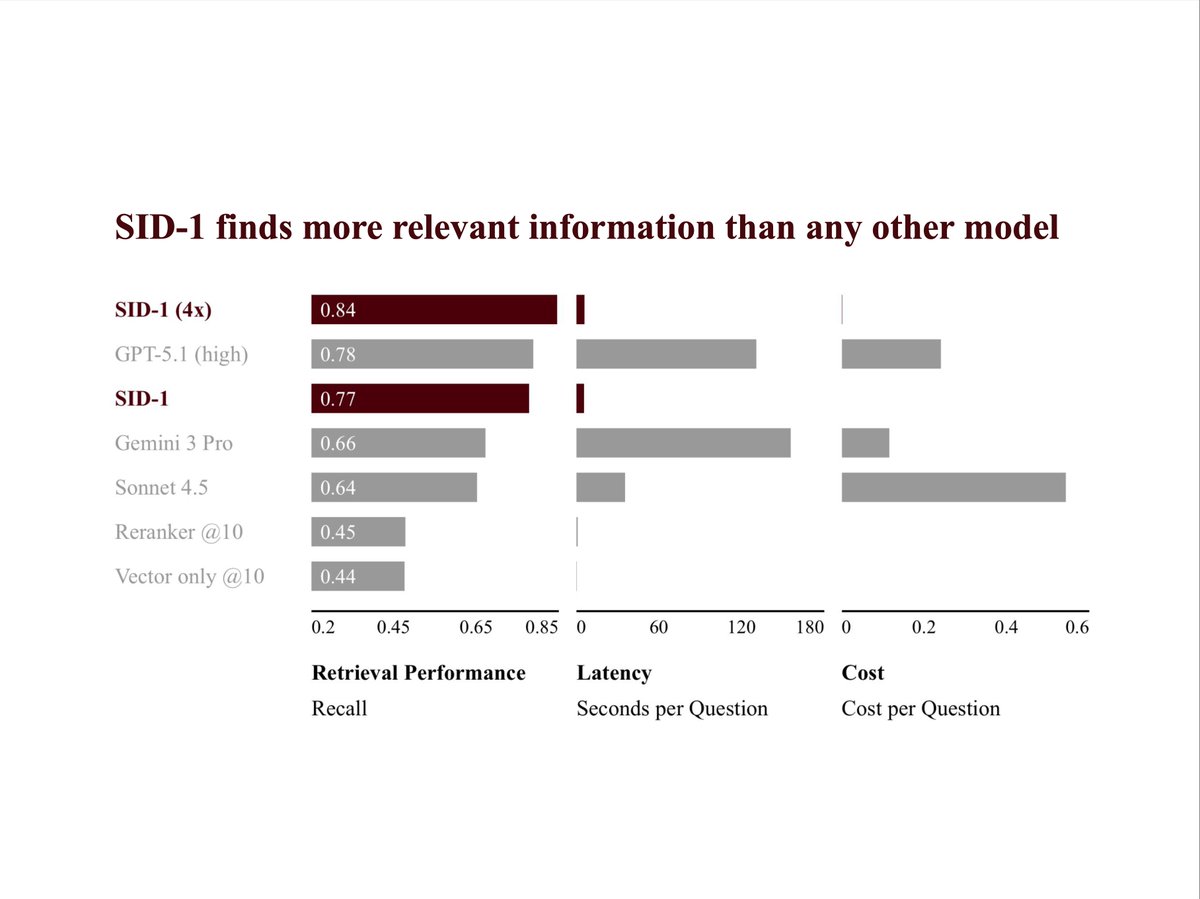

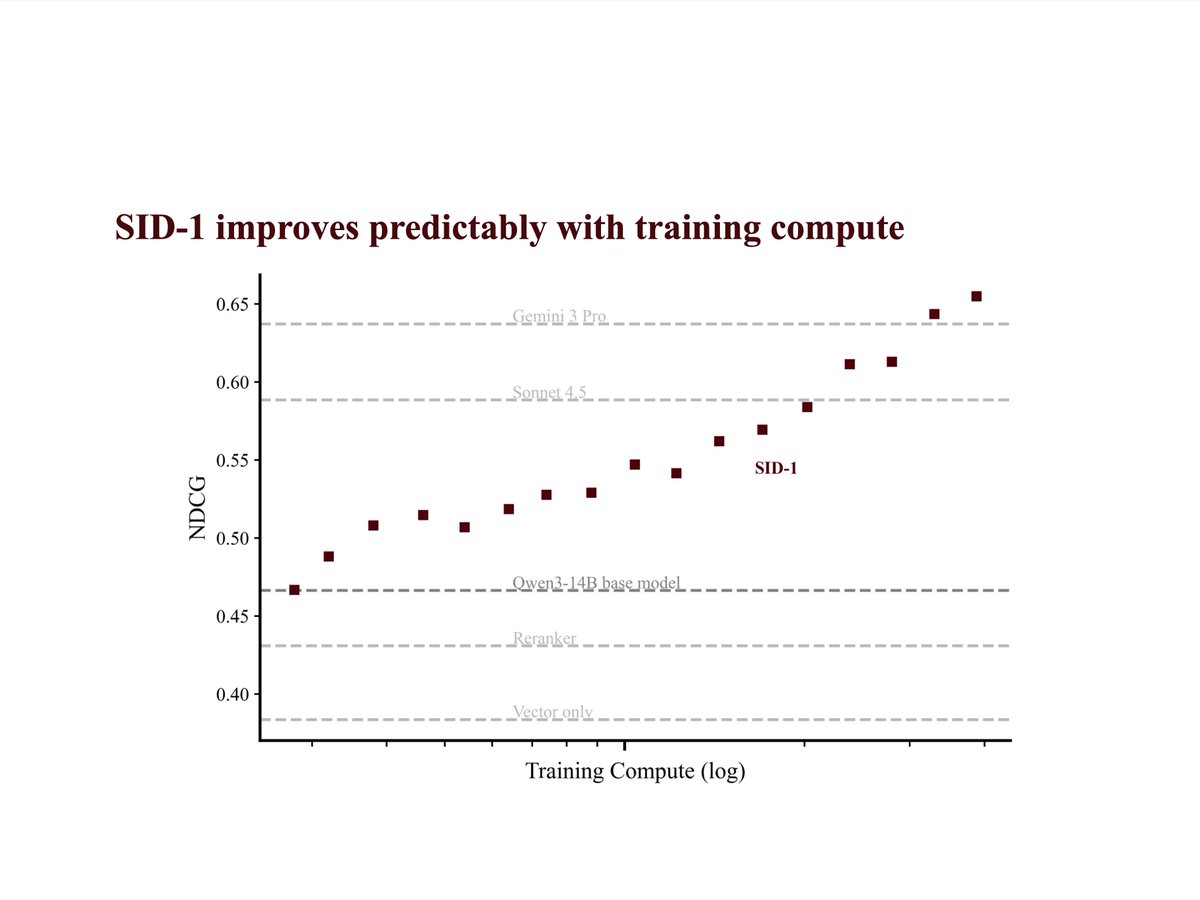

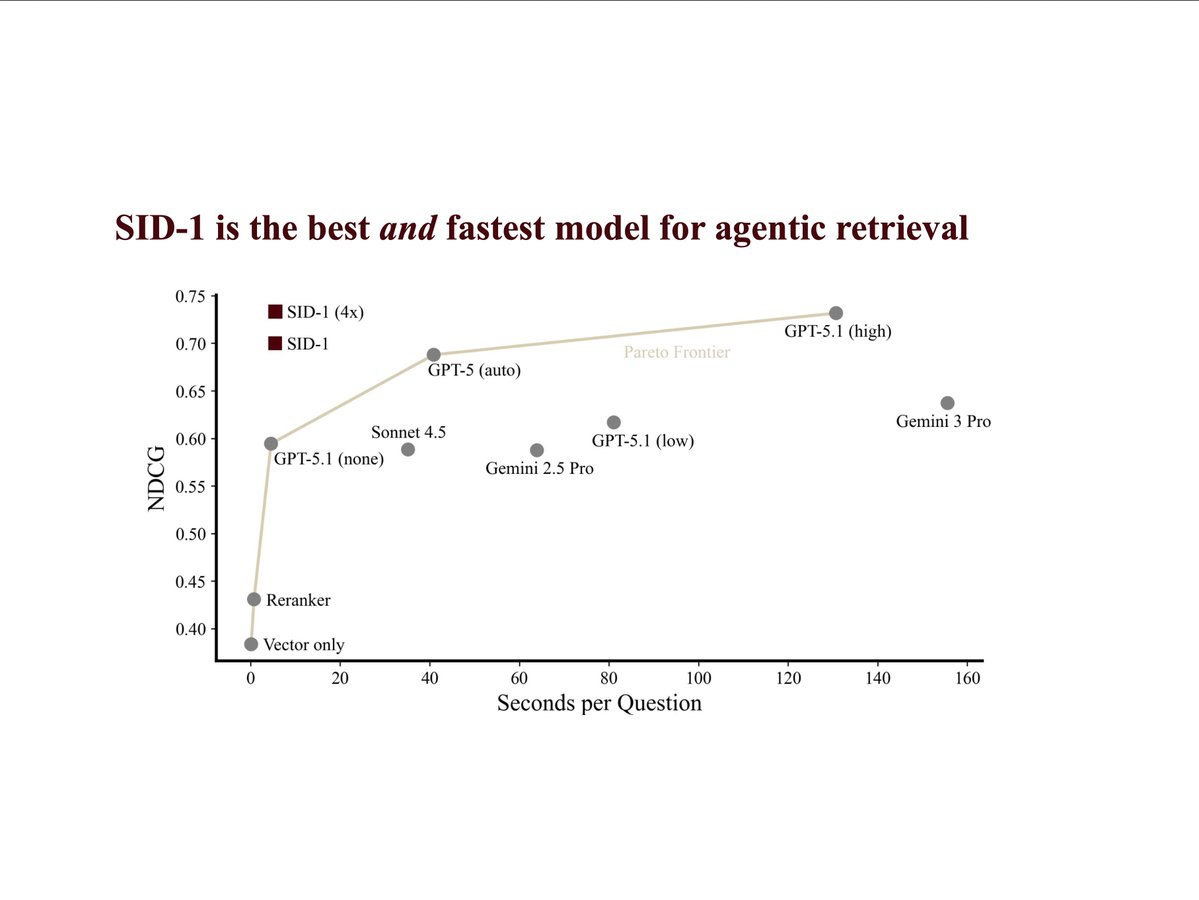

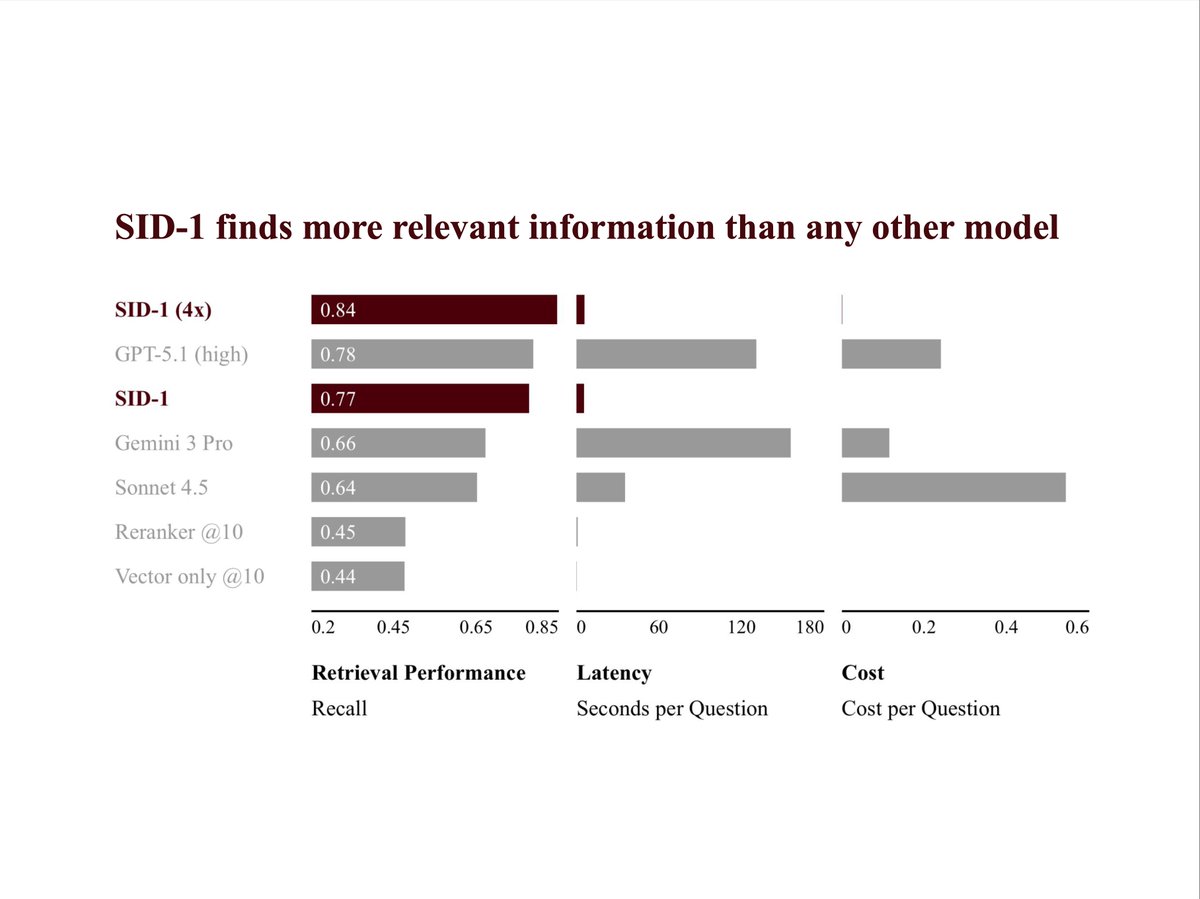

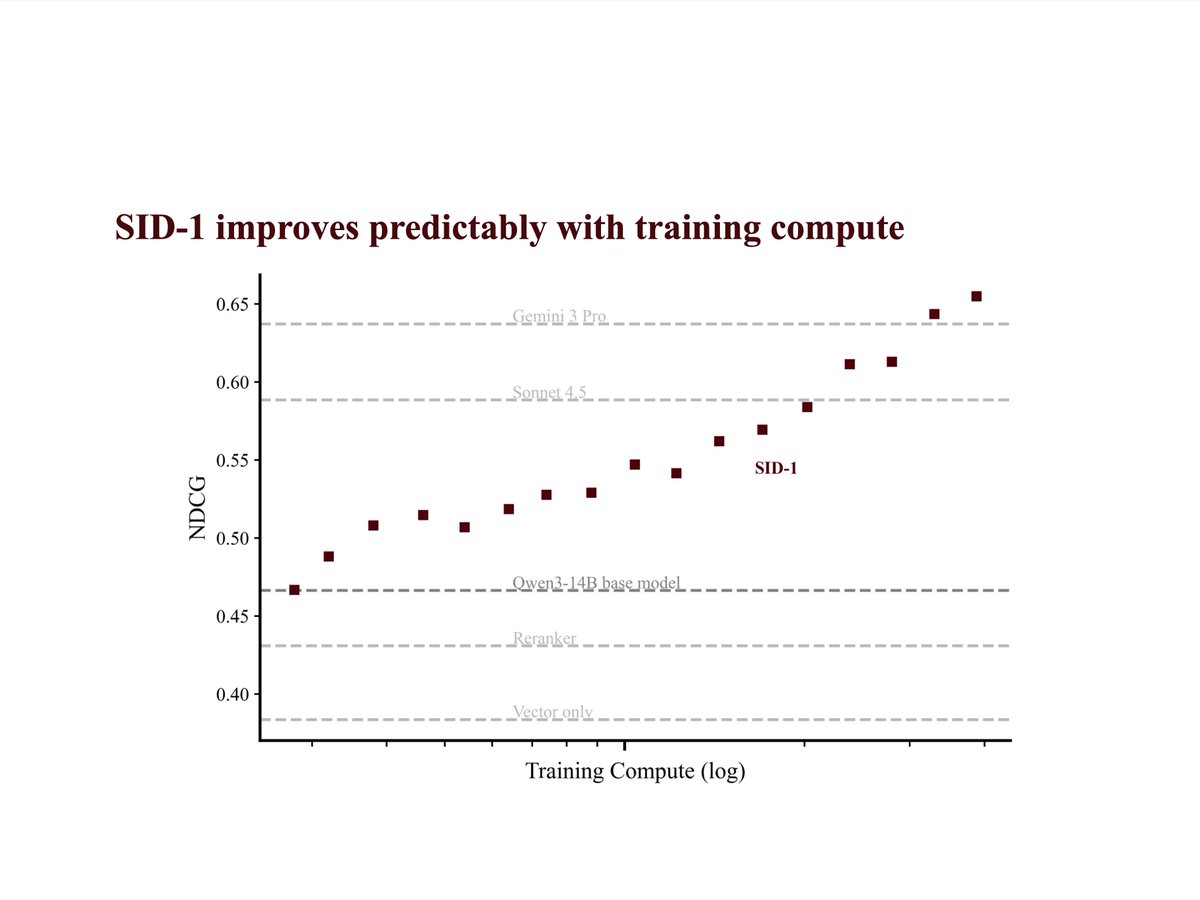

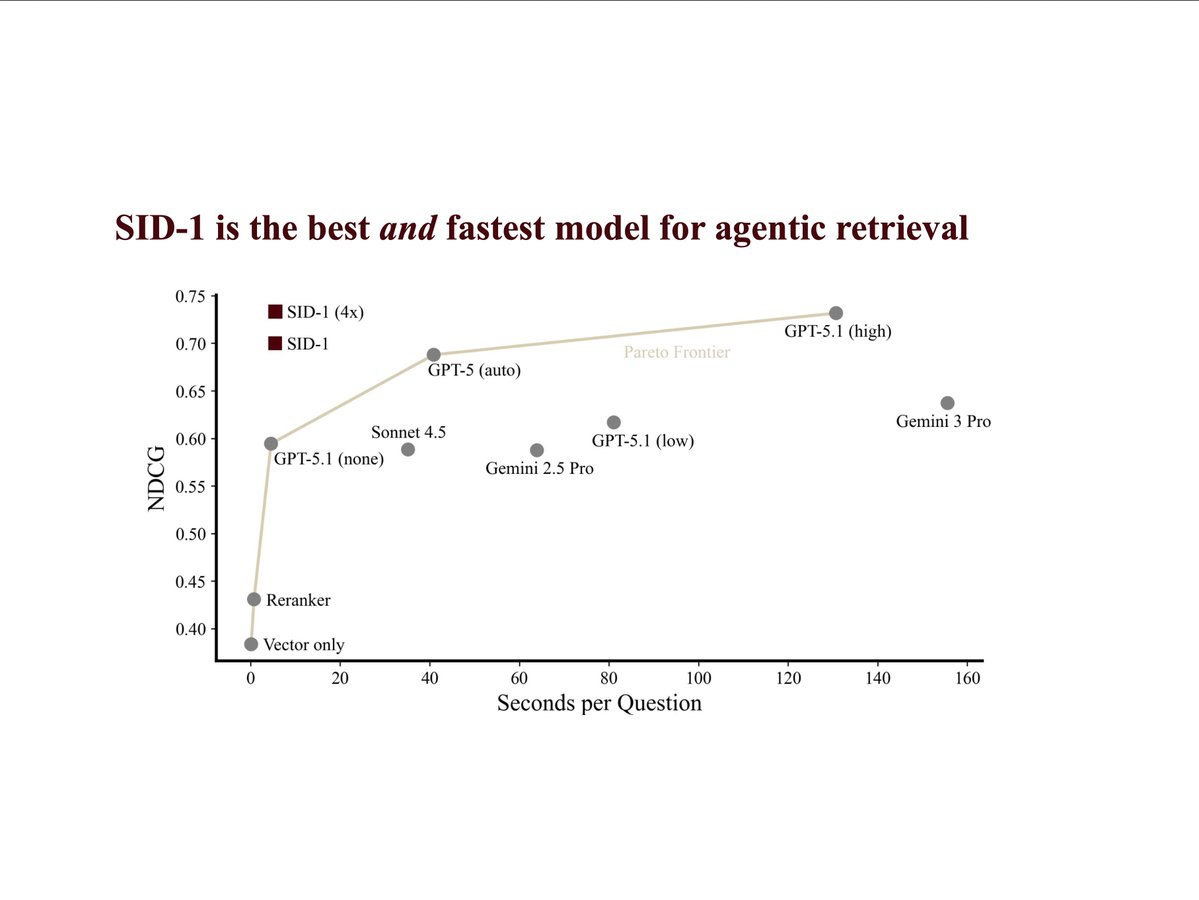

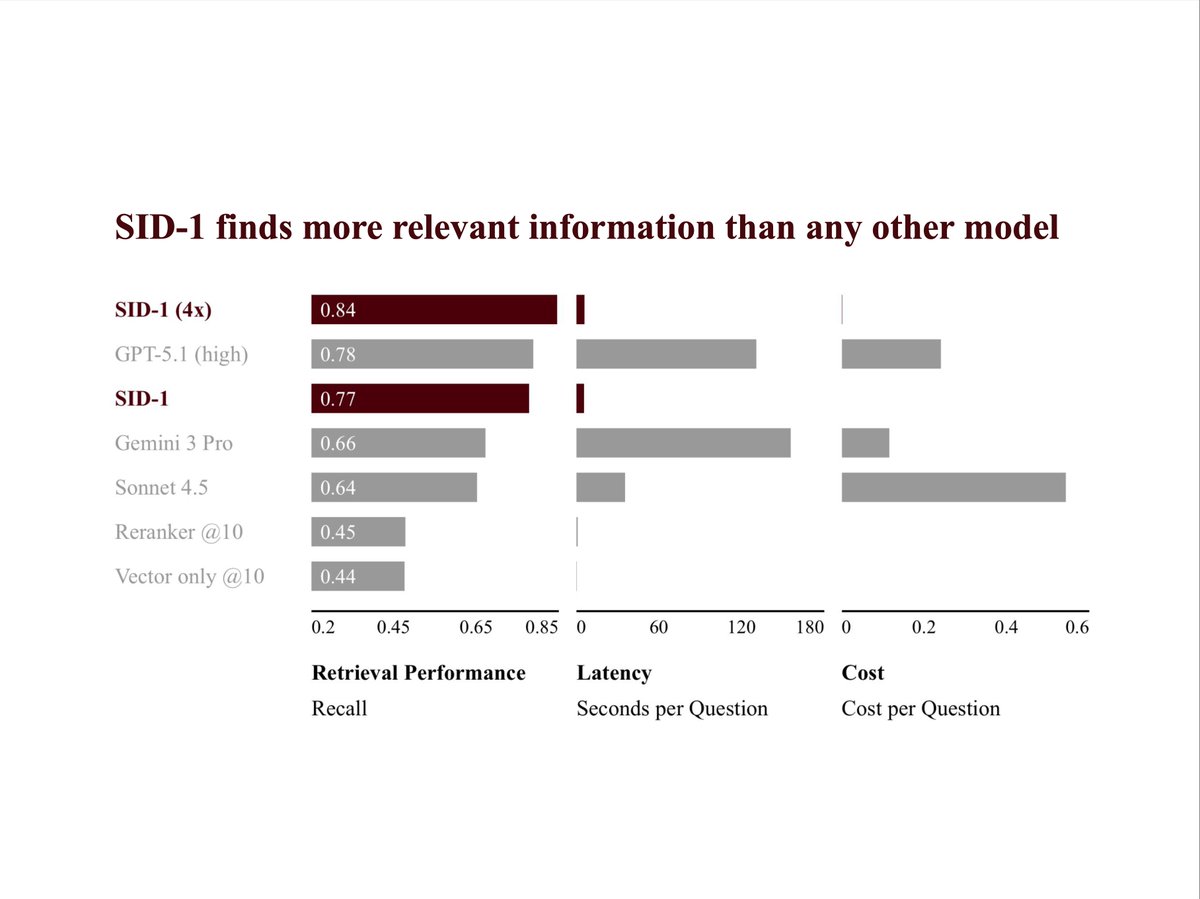

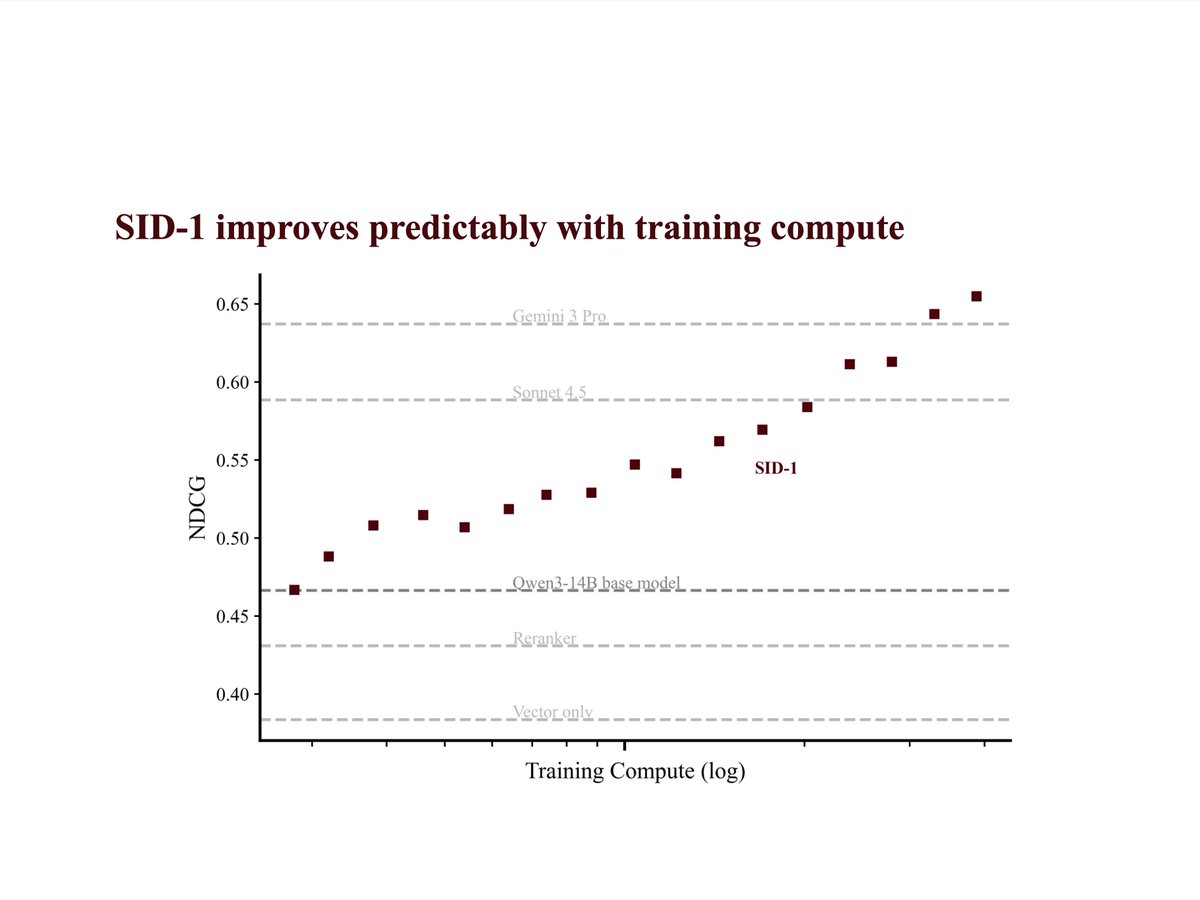

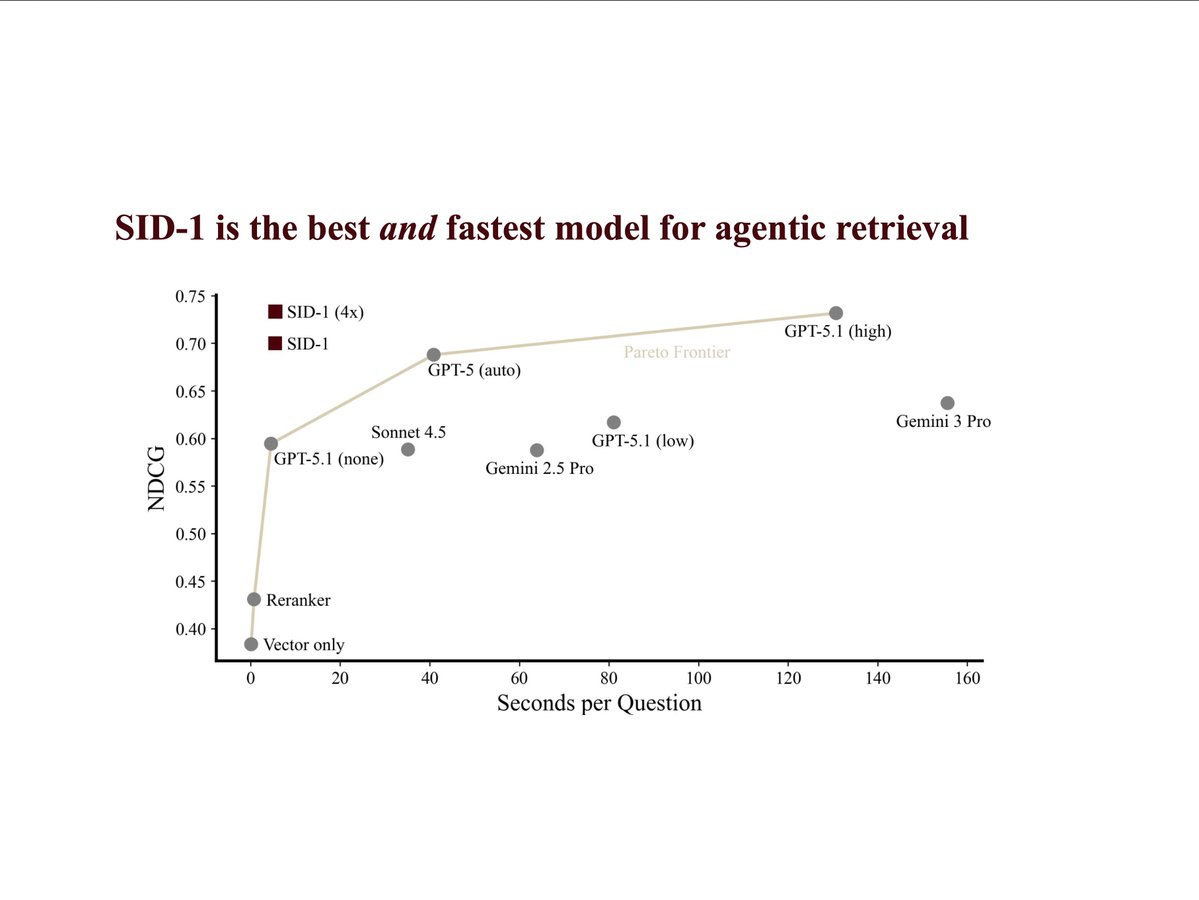

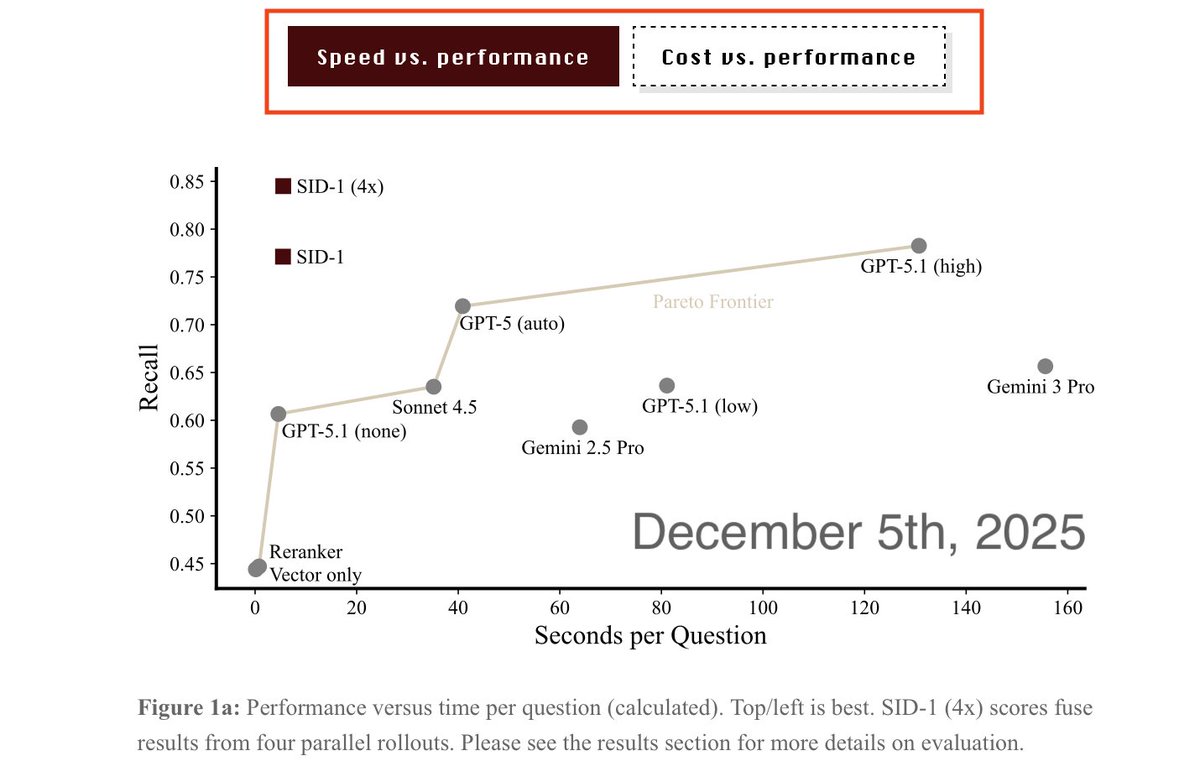

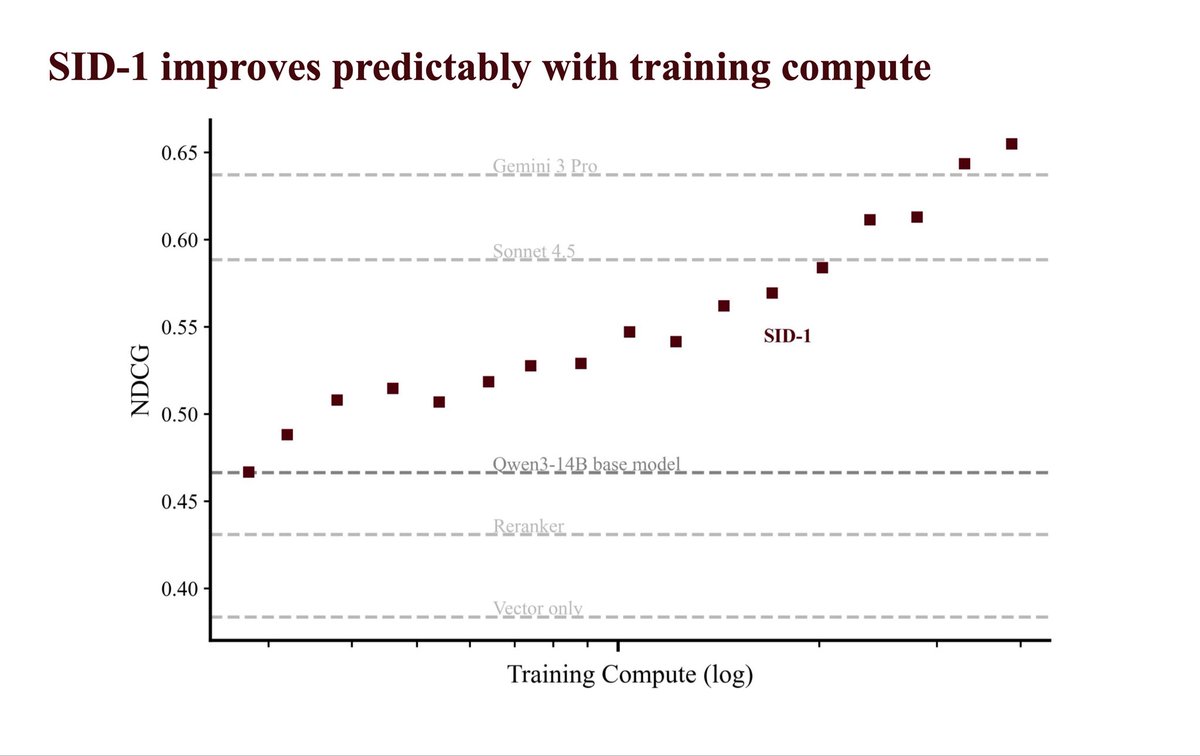

SID-1 is an agentic search model by @SID_AI
→ 1.9x recall over RAG + rerank
→ 24x faster, 99% cheaper than GPT-5.1
trained using large-scale RL on turbopuffer at 1k+ QPS bursts over 10M+ document corpora across thousands of steps
tpuf.link/sid-1


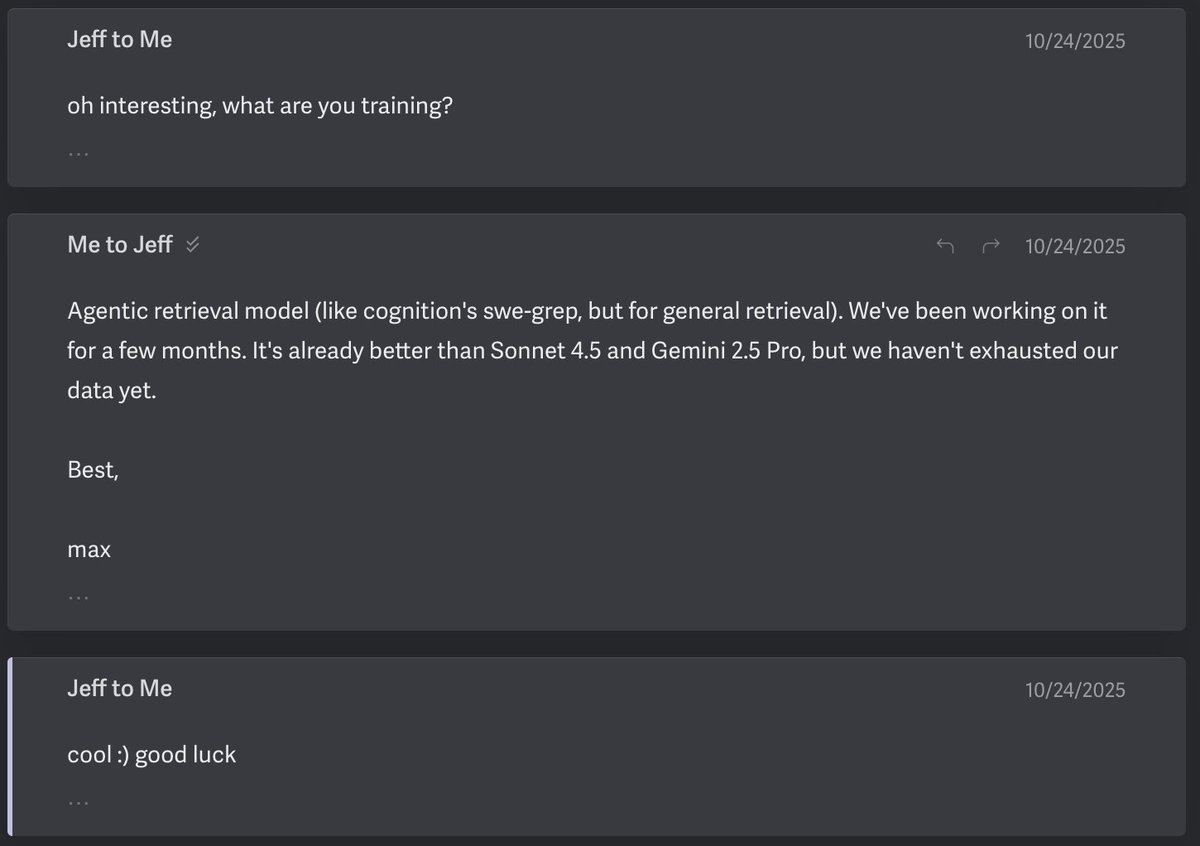

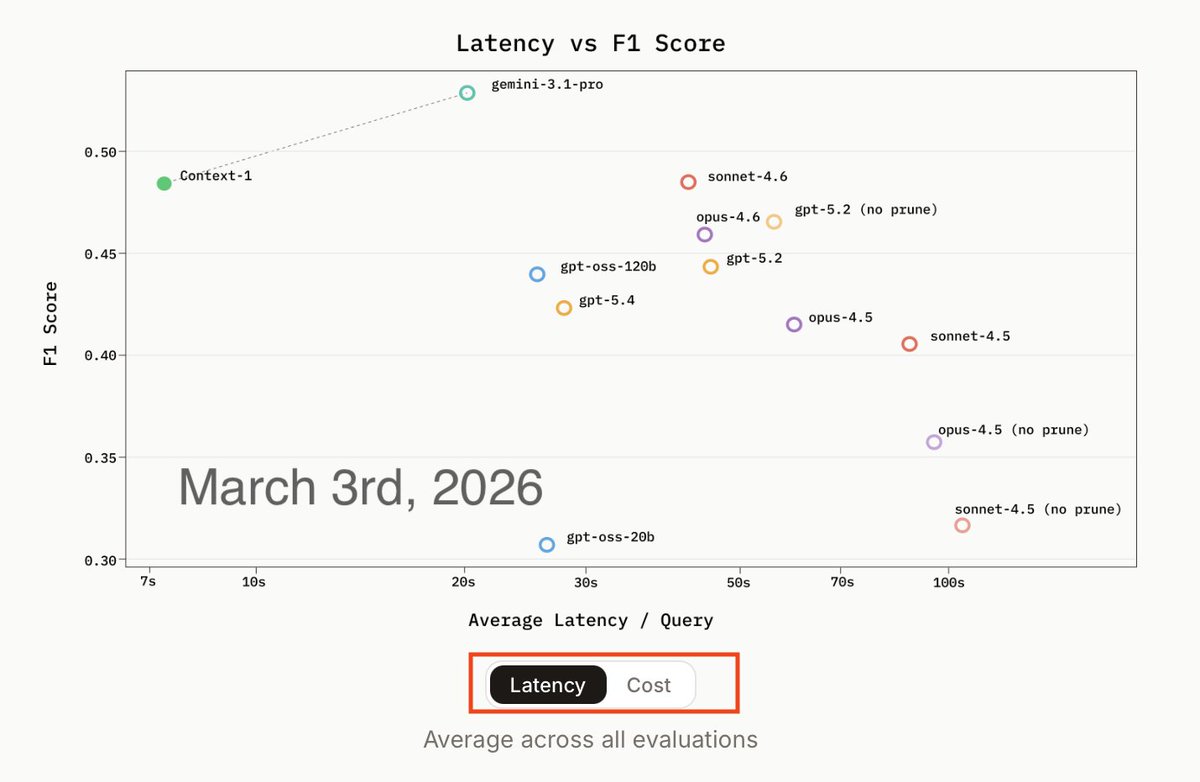

Chroma's "new" model sure seems familiar. A story.

Imitation is the sincerest form of flattery. But there is a point where it goes from "inspiration" to whatever Context-1 is:

6 months ago, Chroma's CEO @jeffreyhuber asked us about our research. 4 months ago, we proudly shared SID-1's tech report with him. An exchange I now understand very differently (see the emails).

Today, they released a report heavily "inspired" by ours. Charts, datasets, methods, and the whole model itself. Down to the toggle for Figure 1 and our 4x RRF rollouts.

They never reached out to benchmark our model. Their claims of "pareto-optimality" ring hollow. They provably knew there was another model. Unfortunately, we can't benchmark their model: while their weights are open, the harness they say one needs isn't yet.

I know Jeff well, and our offices are neighbors.

We shared a lot of insights in our tech report. Maybe more than prudent. But we believe in advancing human knowledge. (Making search better is our way of doing so.) We applaud companies like @thinkymachines that are brave enough to share the ideas that make the work possible.

But where do we go as a research community when we stop respecting each other's work? When we don't give credit where it's due? And trick "friends" into sharing more, just to steal it? While claiming the moral high ground by calling this "open-source"?

This completely destroys any incentive for us (and others) to go into as much depth as we did in our tech report. It's sad to see poor research practices that are all too common in academia making their way into startups.

Context-1 has some interesting ideas: pruning is clever. I wish I were writing about them.

Followers and copycats, even if they're bigger, don't scare us. I'm very proud of what we've built. And even more proud of who I'm building this with. We're also hiring original thinkers.

SID-1: Tech Report It has way more detail than is prudent. sid.ai/research/sid-1…

Introducing Chroma Context-1, a 20B parameter search agent. > pushes the pareto frontier of agentic search > order of magnitude faster > order of magnitude cheaper > Apache 2.0, open-source

There is significant discussion in the academic literature about RL making models better at pass@1 and *worse* at pass@N (or related claims). We run a lot of RL runs at Cursor and don't see this issue systematically. Not doubting it occurs, but something else might be going on.
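For readers unfamiliar with the metric in the post above, here is a minimal sketch of pass@k using the standard unbiased estimator, 1 - C(n-c, k) / C(n, k), for n samples of which c are correct. The two "before" and "after" score lists are made-up numbers chosen only to show how mean pass@1 can rise while mean pass@N falls; they are not results from Cursor, SID, or any paper.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate: chance that at least one of k samples,
    drawn without replacement from n samples with c correct, is correct."""
    if not (0 <= c <= n) or not (1 <= k <= n):
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    # math.comb returns 0 when k > n - c, so the estimate is 1.0 in that case.
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(correct_counts: list[int], n: int, k: int) -> float:
    """Average pass@k over problems, given correct-sample counts per problem."""
    return sum(pass_at_k(n, c, k) for c in correct_counts) / len(correct_counts)


# Hypothetical illustration: 2 problems, 10 samples each.
N_SAMPLES = 10
before = [3, 3]   # assumed: both problems solved in 3 of 10 samples
after = [10, 0]   # assumed: one problem always solved, the other never

for label, counts in (("before", before), ("after", after)):
    p1 = mean_pass_at_k(counts, N_SAMPLES, 1)
    pn = mean_pass_at_k(counts, N_SAMPLES, N_SAMPLES)
    print(f"{label}: pass@1={p1:.2f} pass@{N_SAMPLES}={pn:.2f}")
```

In this toy case the policy becomes more reliable on one problem and loses the other entirely, which is the pattern the literature describes. Whether it shows up in a given RL run is an empirical question, as the post notes.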

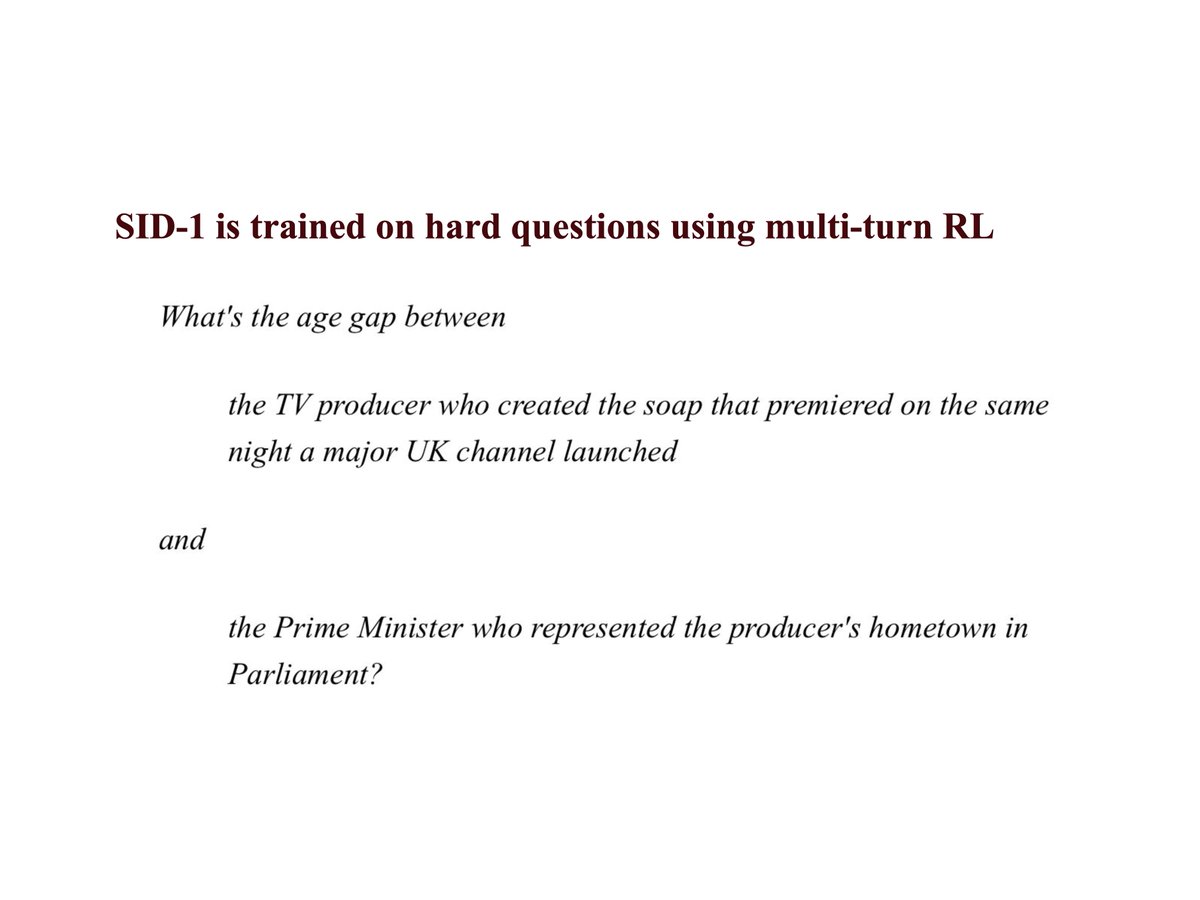

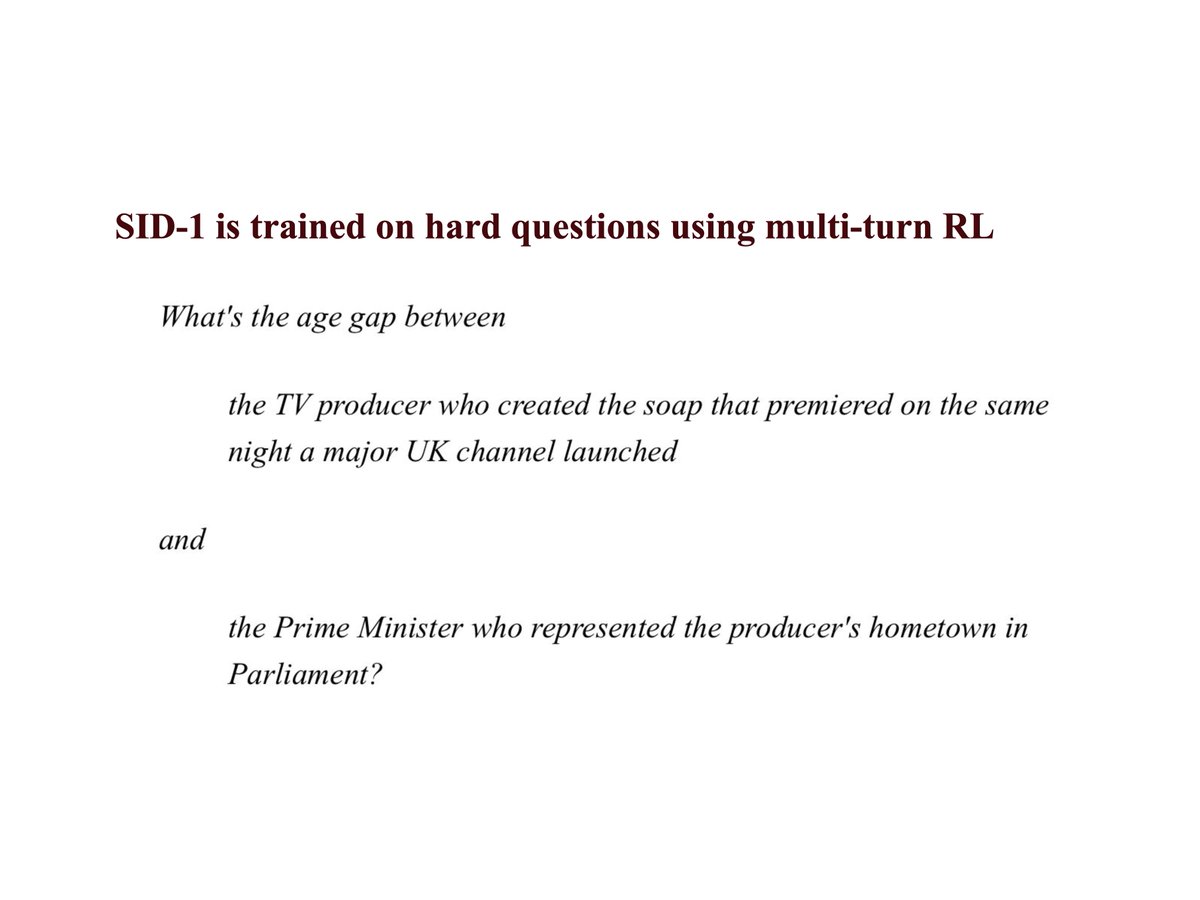

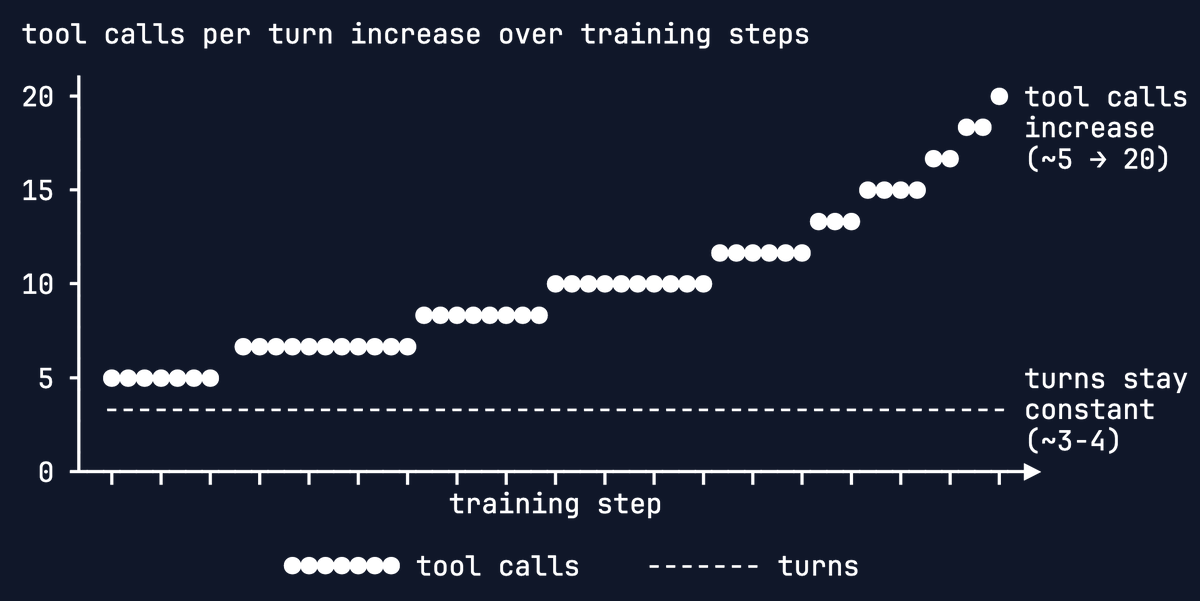

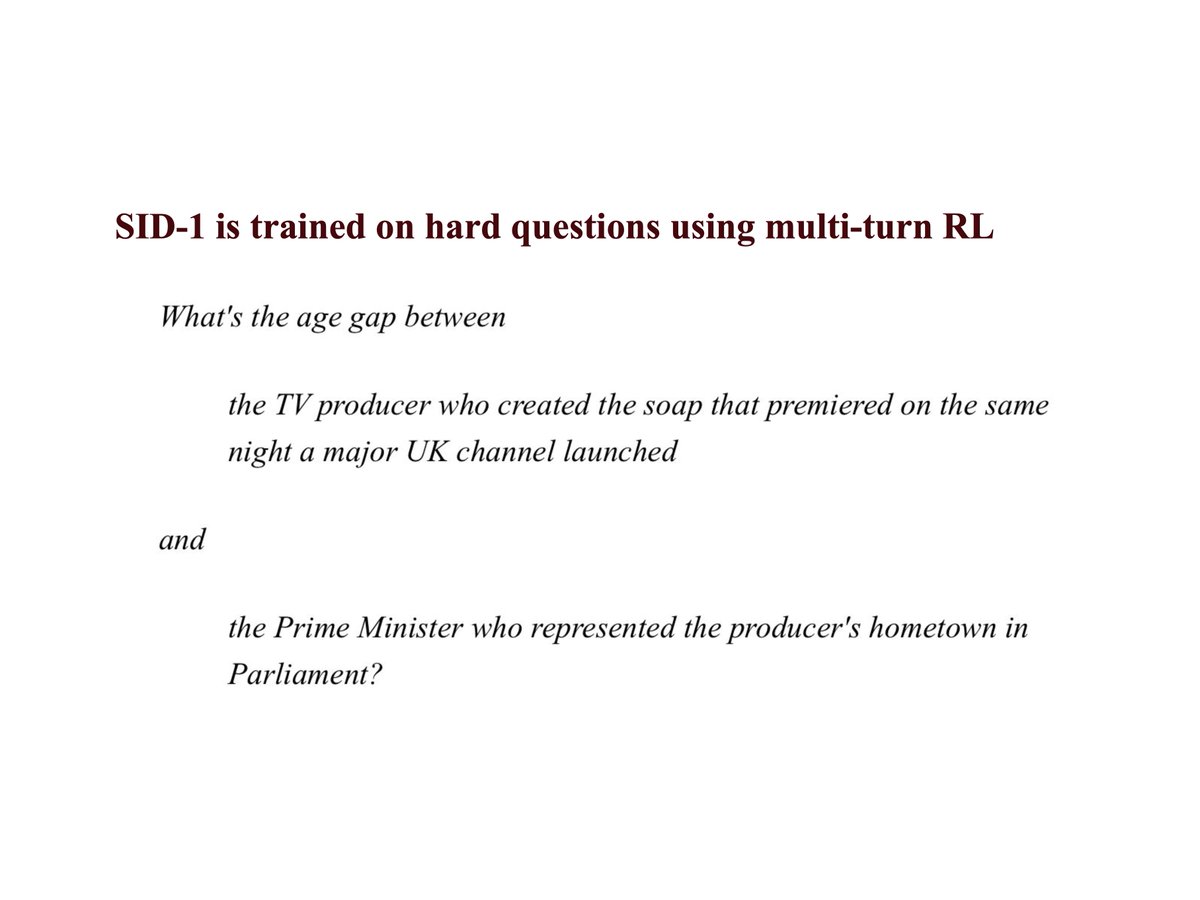

we just released our first model: SID-1

it's designed to be extremely good at only one task: retrieval. it has 1.8x better recall than embedding search alone (even with reranking) and beats "agentic" retrieval implemented using all frontier LLMs, including the really large and expensive ones (see chart).

we trained SID-1 using multi-environment, multi-turn RL on Qwen. it was a lot of work (much of which is documented in our tech report -- see pinned tweet).

our RL environments build on the idea that humans with search tools can find almost any information given sufficient iteration. like humans, SID-1 makes a first search, reads the results, and adapts its strategy.

and it can do this much faster *and* better than frontier LLMs: 24x faster than GPT-5.1, 27x faster than Gemini 3 Pro.

the "better" part is critical! if a model is fast and wrong, it's just wrong. that's why we trained SID-1 until it was the most likely to deliver the correct results. bar none.

we're partnering with a small number of companies today and have a waitlist for everyone else. (we don't have enough inference compute for everyone yet).
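To make the "search, read, adapt" idea concrete, here is a toy sketch of an iterative retrieval loop compared against a single-shot search, scored by recall. Everything in it is an assumption for illustration: the three-document corpus, the keyword-overlap `keyword_search`, and the `novel_terms_policy` that stands in for a model. It is not SID-1's method or API, and the toy recall numbers say nothing about the figures reported above.

```python
from typing import Callable

# Hypothetical toy corpus: answering the question requires two hops.
CORPUS = {
    "doc_a": "widgetron is built by acme corp",
    "doc_b": "acme corp was founded by jane doe",
    "doc_c": "tomatoes grow best in full sun",
}
QUESTION = "who started the company behind widgetron"
RELEVANT = {"doc_a", "doc_b"}


def keyword_search(corpus, query, top_k=1, exclude=frozenset()):
    """Rank unseen documents by word overlap with the query (toy search tool)."""
    terms = set(query.lower().split())
    scored = []
    for doc_id, text in corpus.items():
        if doc_id in exclude:
            continue
        overlap = len(terms & set(text.lower().split()))
        if overlap:
            scored.append((overlap, doc_id))
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for _, doc_id in scored[:top_k]]


def novel_terms_policy(question: str, read_texts: list[str]) -> str:
    """Stand-in for a model: query with words it read that the question lacked."""
    seen = set(question.lower().split())
    terms = []
    for text in read_texts:
        for word in text.lower().split():
            if word not in seen:
                seen.add(word)
                terms.append(word)
    return " ".join(terms)


def iterative_search(corpus, question, propose_query: Callable, max_turns=3):
    """Search, read the hits, propose a new query, and repeat."""
    found: list[str] = []
    query = question
    for _ in range(max_turns):
        hits = keyword_search(corpus, query, top_k=1, exclude=frozenset(found))
        if not hits:  # nothing new turned up, so stop early
            break
        found.extend(hits)
        query = propose_query(question, [corpus[d] for d in found])
    return found


def recall(retrieved, relevant) -> float:
    """Fraction of relevant documents that were retrieved."""
    return len(set(retrieved) & set(relevant)) / len(relevant)


single_shot = keyword_search(CORPUS, QUESTION, top_k=1)
multi_turn = iterative_search(CORPUS, QUESTION, novel_terms_policy)
print("single-shot recall:", recall(single_shot, RELEVANT))
print("multi-turn recall:", recall(multi_turn, RELEVANT))
```

The second document shares no words with the question, so only a follow-up query built from what the first result said can reach it. That dependency on earlier reads is what the loop is meant to illustrate.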
