SWE-bench

@SWEbench

Official SWE-bench Account. Follow for updates to the SWE-universe

SWE-smith is going multilingual! We have expanded our task synthesis pipeline to JavaScript! This release includes:

• 6,099 new JS tasks
• Coverage across 34 popular repos
• End-to-end Modal pipeline for fast task synthesis

Scaling agentic training data just got easier.

SWE-bench blog site launched! Check out our content + expect more SWE-bench/agent/smith content soon!

Excited to announce Kai's latest ASE'25 work, which lets LLMs not only see bugs but also fix them: 📄 “Seeing is Fixing: Cross-Modal Reasoning with Multimodal LLMs for Visual Software Issue Repair” 🔗 arxiv.org/abs/2506.16136 Ranked #1 on @SWEbench Multimodal!

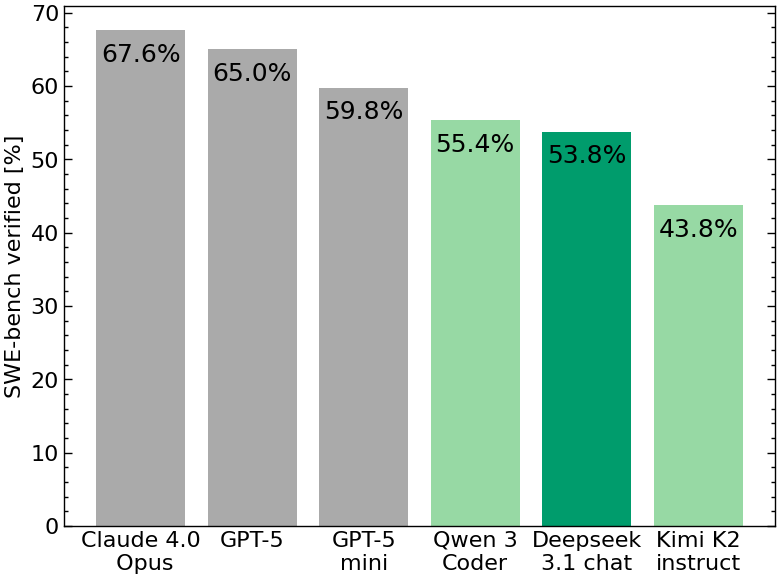

>>> Qwen3-Coder is here! ✅

We’re releasing Qwen3-Coder-480B-A35B-Instruct, our most powerful open agentic code model to date. This 480B-parameter Mixture-of-Experts model (35B active) natively supports 256K context and scales to 1M context with extrapolation. It achieves top-tier performance across multiple agentic coding benchmarks among open models, including SWE-bench-Verified!!! 🚀

Alongside the model, we're also open-sourcing a command-line tool for agentic coding: Qwen Code. Forked from Gemini CLI, it includes custom prompts and function call protocols to fully unlock Qwen3-Coder’s capabilities. Qwen3-Coder works seamlessly with the community’s best developer tools. As a foundation model, we hope it can be used anywhere across the digital world: Agentic Coding in the World!

💬 Chat: chat.qwen.ai
📚 Blog: qwenlm.github.io/blog/qwen3-cod…
🤗 Model: hf.co/Qwen/Qwen3-Cod…
🤖 Qwen Code: github.com/QwenLM/qwen-co…