Ziteng Sun

83 posts

@SZiteng

Responsible and efficient AI. Topics: LLM efficiency; LLM alignment; Differential Privacy; Information Theory. Research Scientist @Google; PhD @Cornell

I'm hiring a student researcher to work on RL and RLM-flavored things. DM me if interested

Google Student Researcher Program 2026 is now OPEN! Work on REAL AI/ML projects with:

- Google Research
- DeepMind
- Google Cloud

Open to: Bachelors / Masters / PhD

Duration: 3–12 months

Deadline: March 31

If you're serious about AI, this is your shot. Apply here google.com/about/careers/…

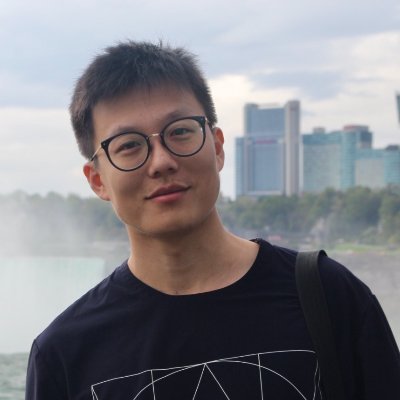

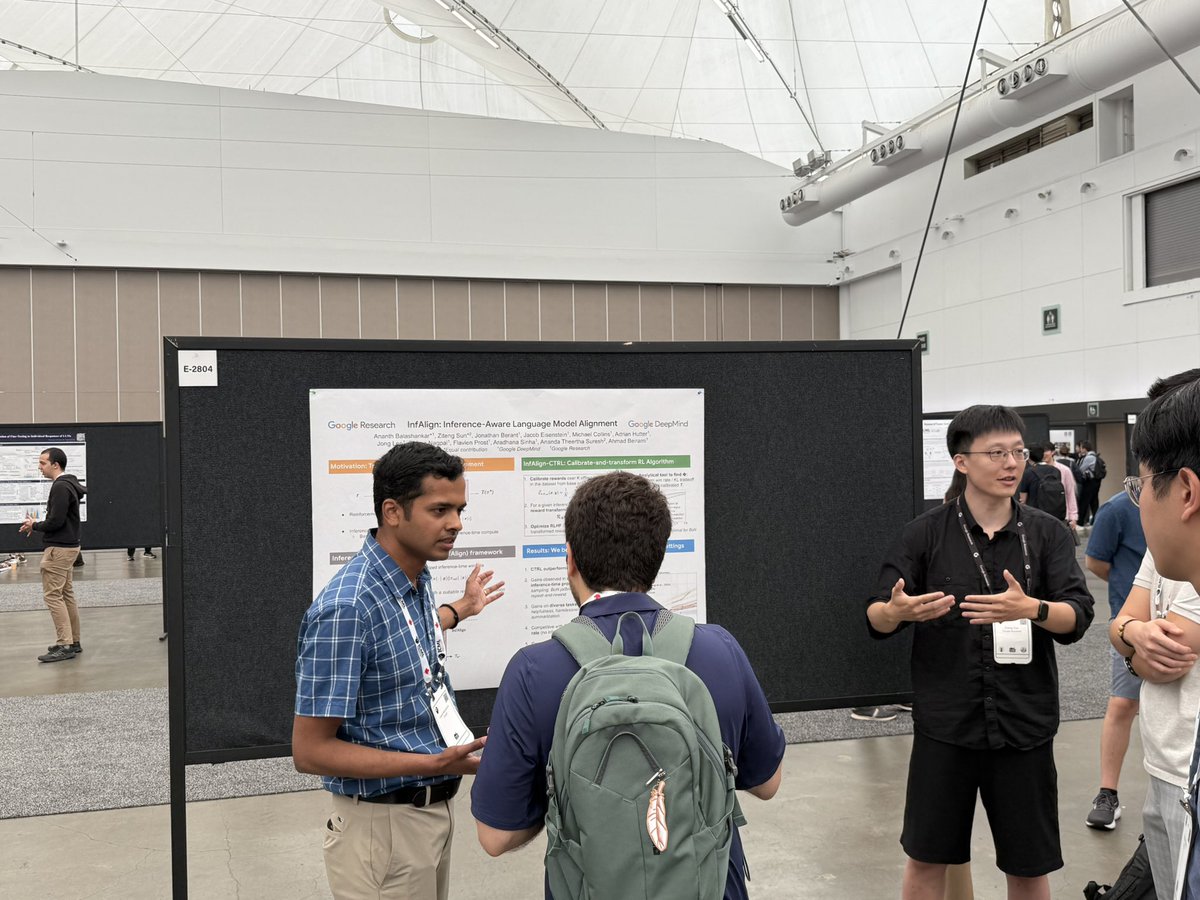

[Thu Jul 17] w/ @ananthbshankar & @jacobeisenstein, we present a reinforcement learning framework in view of test-time scaling. We show how to optimally calibrate & transform rewards to obtain optimal performance with a given test-time algorithm. x.com/SZiteng/status…

Inference-time procedures (e.g. Best-of-N, CoT) have been instrumental to the recent development of LLMs. The standard RLHF framework focuses only on improving the trained model, which creates a train/inference mismatch. Can we align our model to better suit a given inference-time procedure? We answer this affirmatively. Check out the thread below.

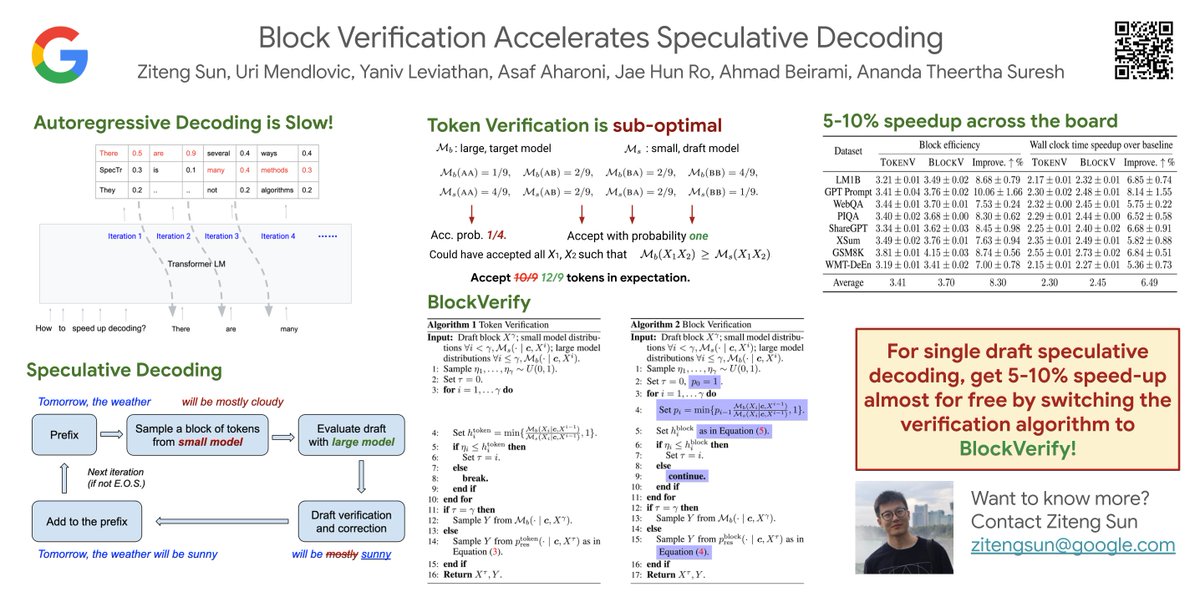

Friday 10am, I will present @SZiteng's paper on **block verification for speculative decoding** (w/ @th33rtha) x.com/SZiteng/status…