James Liu

@JamesLiuID

@AnthropicAI, prev @MIT, @togethercompute

Today, we're proud to announce @inferact, a startup founded by creators and core maintainers of @vllm_project, the most popular open-source LLM inference engine. Our mission is to grow vLLM as the world's AI inference engine and accelerate AI progress by making inference cheaper and faster.

The Challenge

Inference is not solved. It's getting harder. Models grow larger. New architectures proliferate: mixture-of-experts, multimodal, agentic. Every breakthrough demands new infrastructure. Meanwhile, hardware fragments: more accelerators, more programming models, and more combinations to optimize.

The capability gap between models and the systems that serve them is widening. Left this way, the most capable models remain bottlenecked, with the full scope of their capabilities accessible only to those who can build custom infrastructure. Close the gap, and we unlock new possibilities.

And the problem is growing. Inference is shifting from a fraction of compute to the majority: test-time compute, RL training loops, synthetic data.

We see a future where serving AI becomes effortless. Today, deploying a frontier model at scale requires a dedicated infrastructure team. Tomorrow, it should be as simple as spinning up a serverless database. The complexity doesn't disappear; it gets absorbed into the infrastructure we're building.

Why Us

vLLM sits at the intersection of models and hardware: a position that took years to build. When model vendors ship new architectures, they work with us to ensure day-zero support. When hardware vendors develop new silicon, they integrate with vLLM. When teams deploy at scale, they run vLLM, from frontier labs to hyperscalers to startups serving millions of users.

Today, vLLM supports 500+ model architectures, runs on 200+ accelerator types, and powers inference at global scale. This ecosystem, built with 2,000+ contributors, is our foundation. We've been stewards of this engine since its first commit. We know it inside out. We deployed it at frontier scale, in research and in production.

Open Source

vLLM was built in the open. That's not changing. Inferact exists to supercharge vLLM adoption. The optimizations we develop flow back to the community. We plan to push vLLM's performance further, deepen support for emerging model architectures, and expand coverage across frontier hardware. The AI industry needs inference infrastructure that isn't locked behind proprietary walls.

Join Us

Through the open source community, we are fortunate to work with some of the best people we know. For @inferact, we're hiring engineers and researchers to work at the frontier of inference, where models meet hardware at scale. Come build with us.

We're fortunate to be supported by investors who share our vision, including @a16z and @lightspeedvp who led our $150M seed, as well as @sequoia, @AltimeterCap, @Redpoint, @ZhenFund, The House Fund, @strikervp, @LaudeVentures, and @databricks.

- @woosuk_k, @simon_mo_, @KaichaoYou, @rogerw0108, @istoica05 and the rest of the founding team

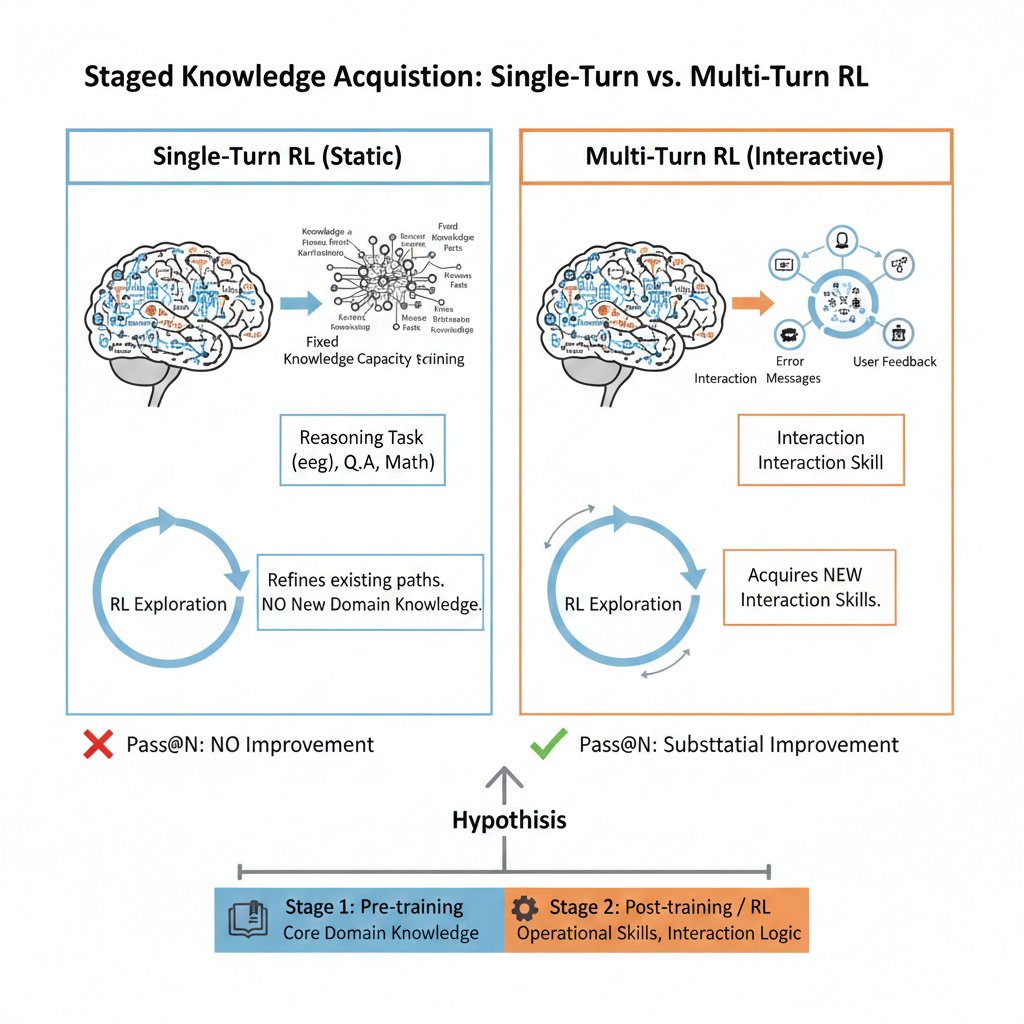

@srush_nlp Yeah, in multi-turn RL experiments, we actually see pass@N increase with the number of training steps. Maybe you can take a look at our discussion. x.com/ShengjieWa3406…

Claude 4.5 Opus will be completely irrelevant to the market situation if it's still at the same price

Generalists are useful, but it’s not enough to be smart. Advances come from specialists, whether human or machine. To have an edge, agents need specific expertise, within specific companies, built on models trained on specific data. We call this Specific Intelligence. It's what we're building at Applied Compute.

We unlock the latent knowledge inside a company, use it to train custom models, and deploy an in-house agent workforce that reports to your team. We work with sophisticated companies that have already captured early gains from general models, like @cognition, @DoorDash, and @mercor_ai. They’re pulling even further ahead with proprietary in-house agents that don’t need to wait for the next public model release. Together, we are building and validating models and agents in days instead of months, achieving state-of-the-art performance on customer evals.

Our team has high density and low latency. Our founders all worked on different parts of this problem while they were researchers at OpenAI: @ypatil125 as a key member on the agentic software engineer effort (Codex), @rhythmrg as a core contributor to the first RL-trained reasoning model (o1), and @lindensli as a core contributor on ML systems and infrastructure for RL training. Two-thirds of the team are former founders, and everyone brings a deep technical background, from top AI researchers to Math Olympiad winners.

We are backed by $80M in funding from Benchmark, Sequoia, Lux, Elad Gil, Victor Lazarte, Omri Casspi, and others. With their support, we are growing the team, scaling deployments, and bringing to market the first generation of agent workforces built on specific models.

In short:
1. We are building Specific Intelligence for specific work at specific companies.
2. That will power in-house agent workforces to support their human bosses.
3. That in turn will unlock AI’s full potential through humanity’s greatest engine of progress: thriving corporations in a free market.

What if scaling the context windows of frontier LLMs is much easier than it sounds? We’re excited to share our work on Recursive Language Models (RLMs), a new inference strategy where LLMs can decompose and recursively interact with input prompts of seemingly unbounded length, as a REPL environment. On the OOLONG benchmark, RLMs with GPT-5-mini outperform GPT-5 by over 110% (more than double!) on 132k-token sequences and are cheaper to query on average. On the BrowseComp-Plus benchmark, RLMs with GPT-5 can take in 10M+ tokens as their “prompt” and answer highly compositional queries without degradation, even outperforming explicit indexing/retrieval. We link our blog post, (still very early!) experiments, and discussion below.

I’m thrilled to announce @reductoai’s $75M Series B led by @a16z, which brings our total funding to $108M. Just five months after our Series A, we've surpassed 1 billion pages processed and grown our monthly volume 6x. We now process hundreds of millions of pages every month for some of the world's best AI teams. Here's what we've learned and where we're headed 🧵

⚡️ Efficiency Gains 🤖 DSA achieves fine-grained sparse attention with minimal impact on output quality — boosting long-context performance & reducing compute cost. 📊 Benchmarks show V3.2-Exp performs on par with V3.1-Terminus. 2/n