Edward Saatchi

@SaatchiEdward

2x Primetime Emmy winner, AI + Movies + Games! @fablesimulation

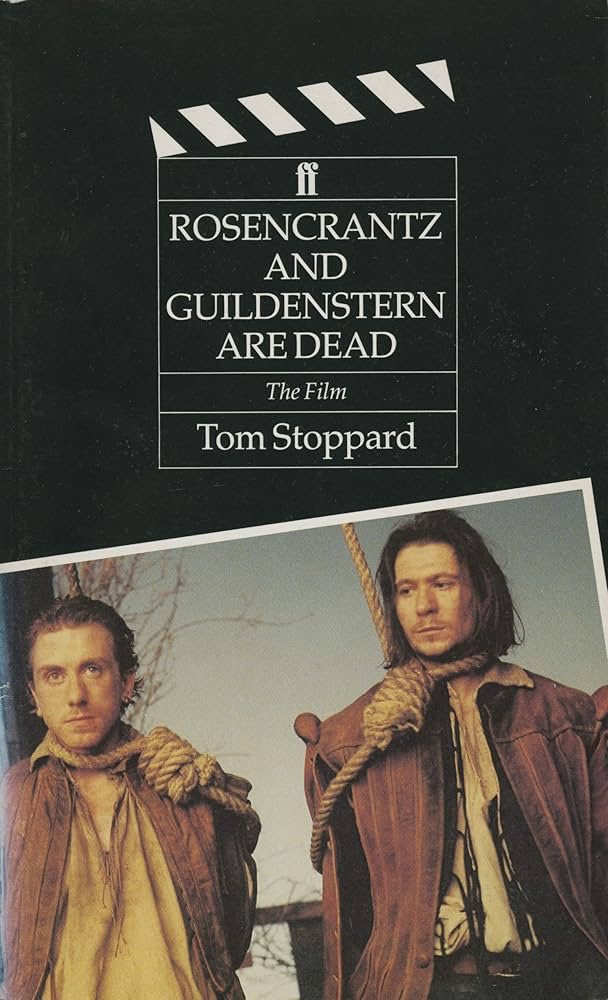

The more I meet people who've gone deep into generating AI media, the more I realize we're all reaching the same conclusion: this is a new medium. Not an evolution of something else. Something entirely new, the way photography and film were new.

To understand any medium, you need to look beyond its surface to its core. Some technologies merely augment existing mediums. Collapsible paint tubes changed painting but didn't invent a new medium. Others create completely new forms of expression. Optical lenses, light-sensitive chemicals, and mechanical shutters didn't improve painting. They weren't better brushes or richer pigments. They birthed photography. A medium that captures light itself rather than representing it through human interpretation.

Every new medium brings its own affordances, primitives, and possibilities. Its own audience. Its own generation of creators.

When moving pictures first appeared, people saw them as recorded theater. They pointed cameras at stages and filmed plays. It took years of experimentation to discover what the medium actually enabled. Eisenstein discovered montage. That juxtaposing unrelated shots could create new meaning. Porter discovered continuity. That audiences could follow action across cuts. Someone finally moved the camera and changed everything.

Surface similarities deceive us. A painting and a photograph both arrange color and composition across a plane. But mastering paint means understanding pigments, brushes, mixing, color theory. Mastering photography means understanding lenses, shutter speed, aperture, light itself. Yes, composition knowledge transfers. Most knowledge doesn't.

When photography emerged, we made a critical error: we let painters judge it. Because on the surface it looked similar. They dissected this new form through the lens of their own medium, anchoring on what they knew. Predictably, they concluded photography would never match oil's texture, never capture color the way mixed pigments could. They were right and also completely missed the point. Photography wasn't trying to be painting. They were thinking by analogy, judging the new by the standards of the old.

I see this same mistake happening with AI media. Some filmmakers and photographers declare it will never achieve what their mediums achieve. They're right. That's not what this medium is about. Judging AI purely through the lens of film is like painters judging photography purely through the lens of painting. The surface might look similar. Moving images, composed frames. The core is fundamentally different.

AI has its own affordances. Creation is asynchronous. At scale. It benefits from quantity. You navigate through latent space, sampling rather than capturing. You provide references that drive generation. You work in real time, watching possibilities emerge. Some knowledge from painting, film, and games transfers here. Most doesn't.

Mediums always influence each other. Photography didn't kill painting. It freed painting from documentation, letting it explore abstraction, impressionism, and the surreal. Each new medium changes what the others can become.

AI is the birth of a new medium of perception and expression. We're in the early days, still discovering AI's equivalent of montage, of the moving camera, of all those breakthrough moments that reveal what a medium actually is. The filmmakers judging it by film standards will miss what's actually happening. The painters missed photography. The theater critics missed cinema.

The only way we'll uncover what this medium can do is to stop judging it by what came before. Stop looking at the surface. Start experimenting with the core. We're not watching films evolve. We're watching something being born.

This is a new medium

Introducing Showrunner: the Netflix of AI. From our South Park AI experiment to today, we've believed AI movies/shows are a playable medium. We just raised a round from Amazon & more, and the Alpha is live today. Comment for an access code to make with all our shows.

San Francisco has a population of 800k and GDP around $670bn. We’re building Sim Francisco to overtake SF. If you’re curious and live in SF today, upload parties using the EXCESSION device 🔜 Upload yourself & compete with your double! DM to waitlist (for SF people only)
