Sam Elrad

291 posts

@SamElrad

Leading Enterprise AI Strategy | AI is changing everything and most of the coverage is noise. I break down what actually matters and why.

Anthropic just automated the first-year analyst job at every bank on Wall Street.

They released these 10 AI agents for finance:

→ Pitch builder
→ Meeting preparer
→ Earnings reviewer
→ Model builder
→ Market researcher
→ Valuation reviewer
→ GL reconciler
→ Month-end closer
→ Statement auditor
→ KYC screener

The analyst pyramid just got a lot flatter.

This is the quote I've been citing a lot recently.

Company Brain @t_blom Every company has critical know-how scattered across people's heads, old Slack threads, support tickets, and databases, and AI agents can't operate like that. We think every company in the world is going to need a new primitive: a living map of how the company works that turns its own artifacts into an executable skills file for AI.

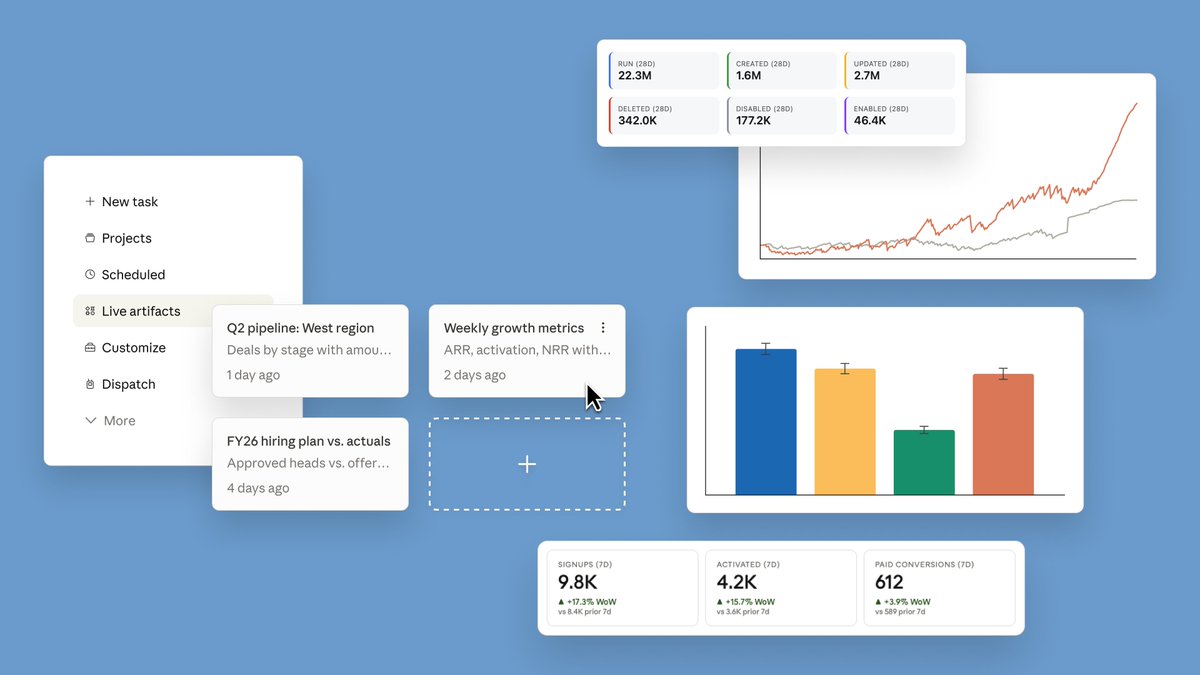

Introducing Claude Design by Anthropic Labs: make prototypes, slides, and one-pagers by talking to Claude. Powered by Claude Opus 4.7, our most capable vision model. Available in research preview on the Pro, Max, Team, and Enterprise plans, rolling out throughout the day.

Our run-rate revenue has surpassed $30 billion, up from $9 billion at the end of 2025, as demand for Claude continues to accelerate. This partnership gives us the compute to keep pace. Read more: anthropic.com/news/google-br…

You can now enable Claude to use your computer to complete tasks. It opens your apps, navigates your browser, fills in spreadsheets—anything you'd do sitting at your desk. Research preview in Claude Cowork and Claude Code, macOS only.

Sam Altman predicted the first one-person billion-dollar company. Matthew Gallagher built a $401M company in year one with $20,000, AI tools, and zero employees. This year he's on track for $1.8B. With 2 people.

The playbook has changed:

Old path:
- Come up with an idea
- Fundraise from friends or VCs
- Hire a team
- Build the product
- Hope it works

New path:
- Start with an audience (X, Instagram, TikTok)
- Vibe code something for that audience
- Build a community around it
- Automate fulfillment with AI agents
- Repeat

The new barrier to entry is a laptop and an idea.

Today, we closed our latest funding round with $122 billion in committed capital at an $852B post-money valuation. The fastest way to expand AI’s benefits is to put useful intelligence in people’s hands early and let access compound globally. This funding gives us resources to lead at scale. openai.com/index/accelera…