
Just a quick note.

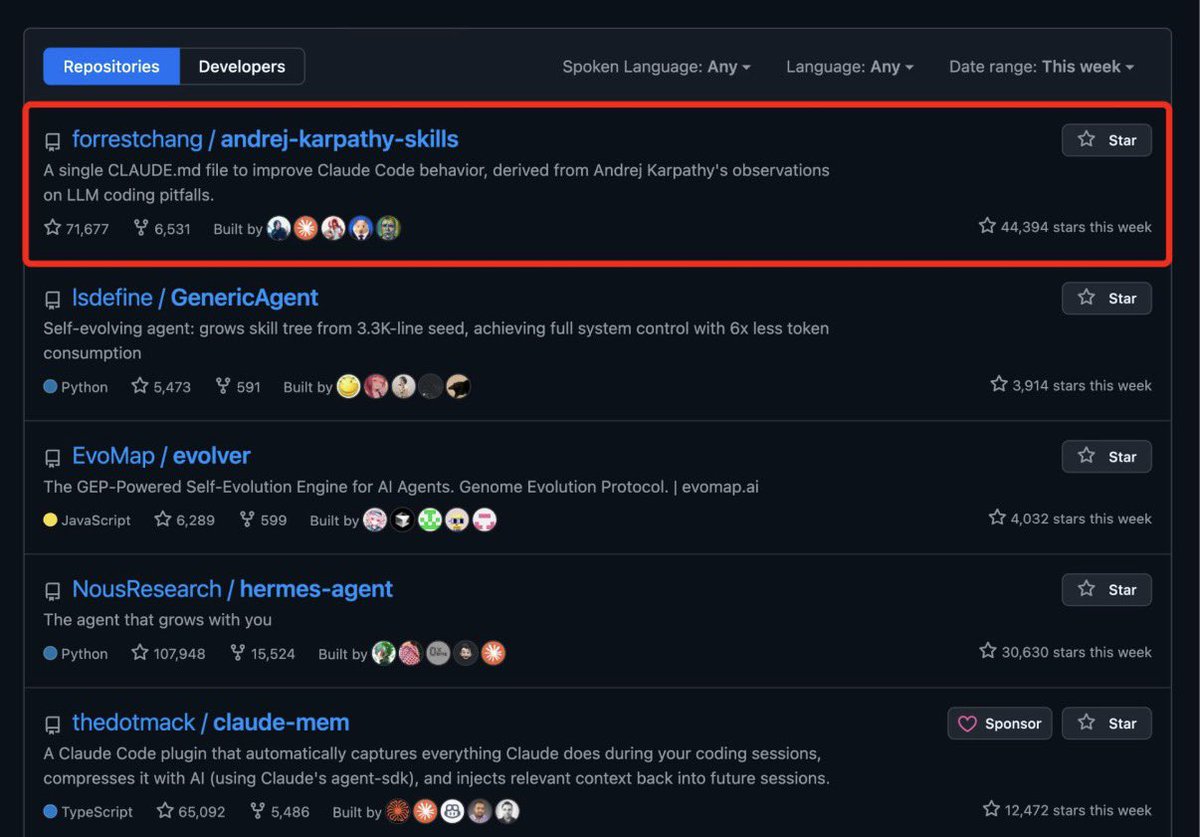

I have started helping out deft.co, and it looks like it will be a fun, wild ride.

Can't reveal too much, but it is designed to make developers' lives easier and a lot more fun in the wild, woolly agentic AI world!

I am so excited to be a part of this!
