SenseTime

@SenseTime_AI

SenseTime is a leading AI software company focused on creating a better AI-empowered future through innovation.

Excited to have contributed to the spatial intelligence capabilities of SenseNova-U1, which surpasses strong baselines such as Qwen3.5 on key benchmarks including VSI-Bench. We're also thrilled to open-source SenseNova-SI-8M, the largest spatial QA dataset to date. See you at CVPR this June; happy to chat in person! SenseNova-SI-8M: huggingface.co/datasets/sense…

Proud to announce the release of the SenseNova U1 Tech Report, together with a new set of MoE-based model weights. We hope this open release promotes transparency, reproducibility, and further innovation across the AI community. Huge thanks to the team for making this possible. 🚀

Instead of stitching models together, SenseNova U1 runs everything inside one unified system. Built on NEO-Unify, it treats text and images as part of the same space. No switching between components. No translation gaps. Just one model that understands and creates at the same time.

🔥 New week, new **SenseNova-U1** drop, and this one goes deep! 🔥

📄 **The full Technical Report is OUT**: the most detailed disclosure yet of how to build a frontier Native Multimodal Model (NMM). Inside:
✨ Near-lossless visual interface (no VEs, no VAEs)
✨ Native multimodal unified modeling
✨ Joint AR + pixel-space flow matching training
✨ Native Mixture-of-Transformers backbone
✨ 6-stage training recipe + RL post-training + distillation

If you work on NMMs, this is the playbook.

🤗 One more thing: **SenseNova-U1-A3B-MoT (38B-A3B MoE) weights are now open-sourced**, a rare native unified model on an MoE backbone (only 3B active parameters, lightning fast ⚡).

📄 Tech Report: arxiv.org/abs/2605.12500
🤗 Daily Papers (Vote & Discuss): huggingface.co/papers/2605.12…
🤗 Models: huggingface.co/collections/se…
💻 Code: github.com/OpenSenseNova/…
🎮 Demo: unify.light-ai.top
👾 Discord: discord.com/invite/BuTXPHm…

Imagine a single AI that can read text, generate images, edit photos, and even handle interleaved text+image tasks. SenseNova-U1-8B-MoT is that model: an any-to-any transformer that redefines multimodal AI. It's like having a creative studio in one model.

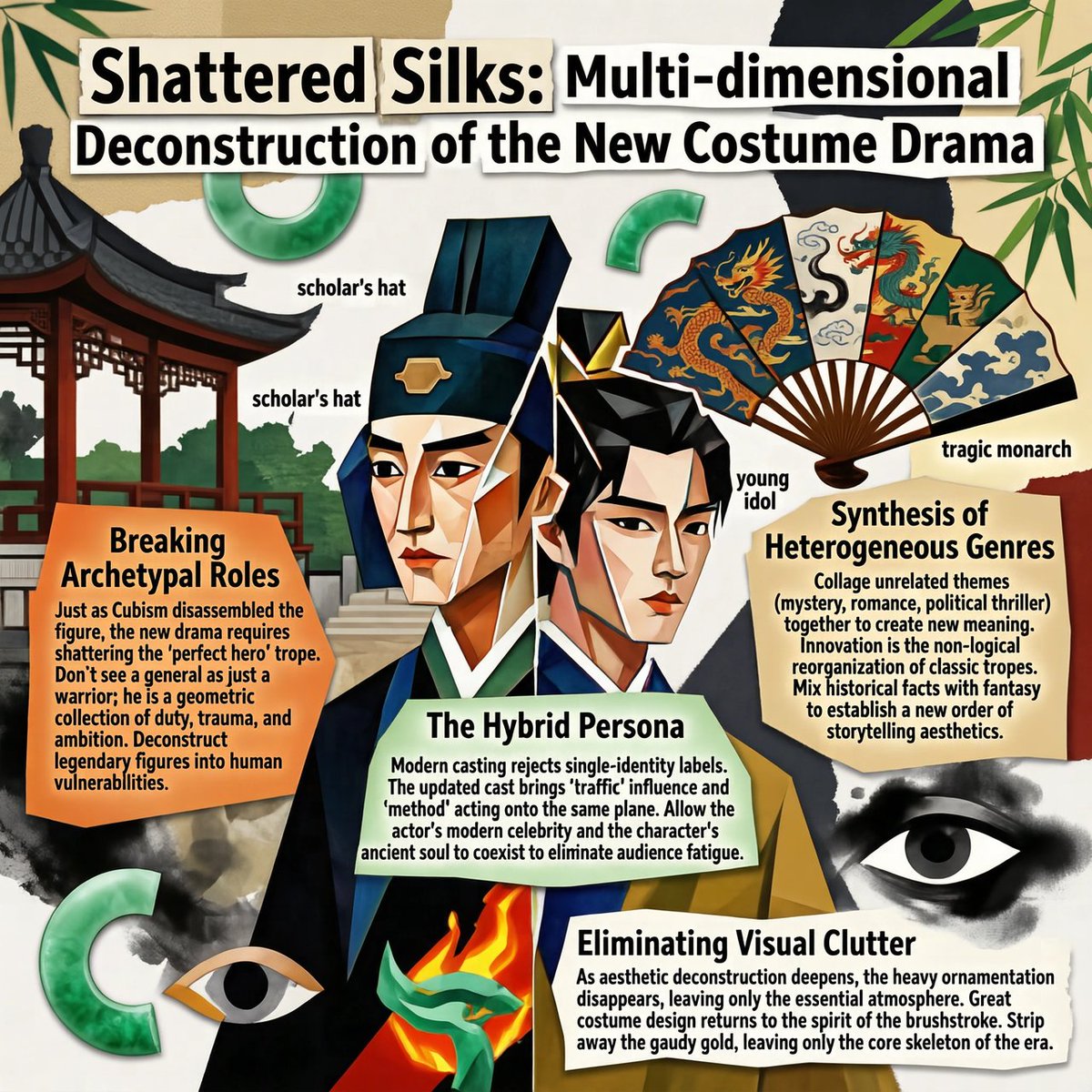

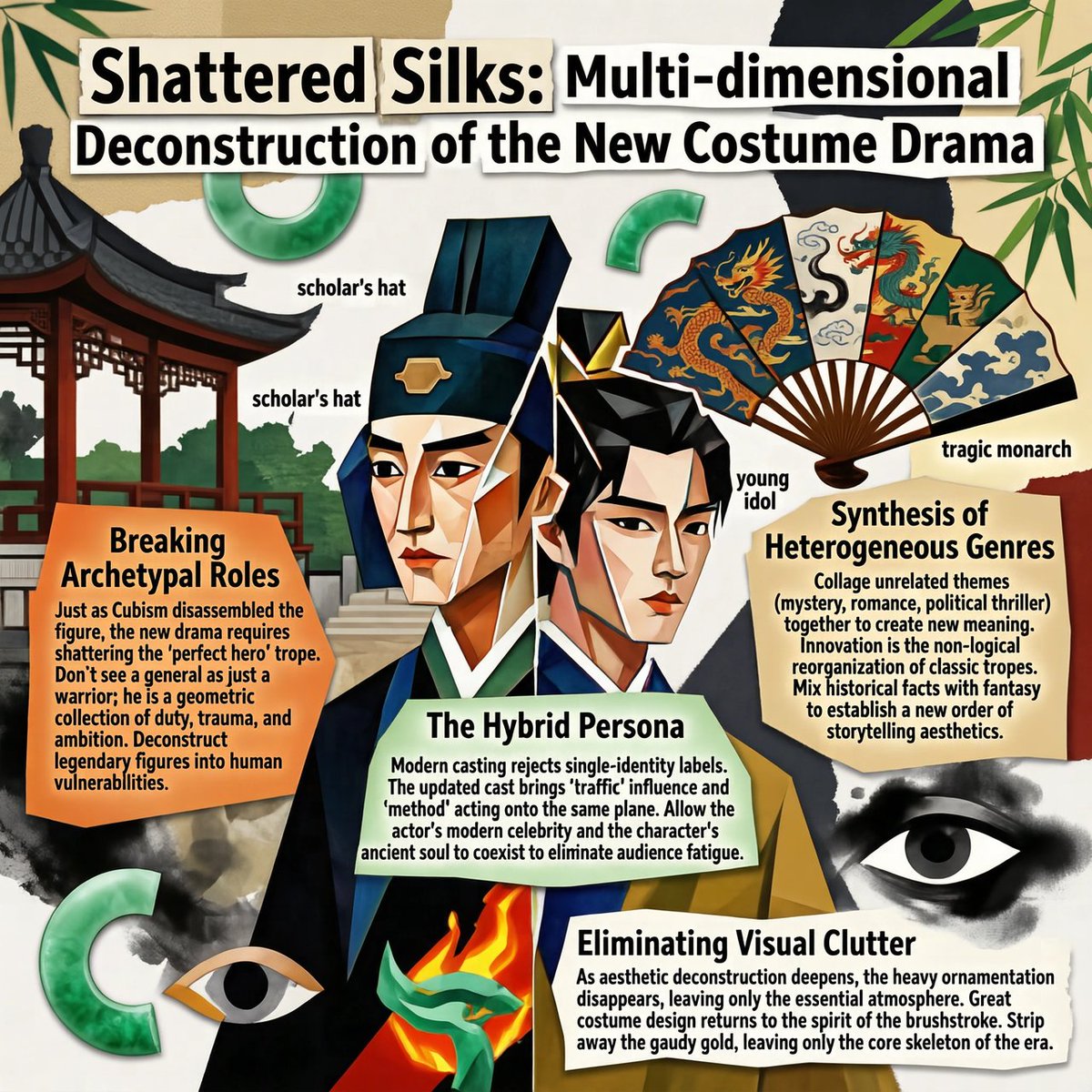

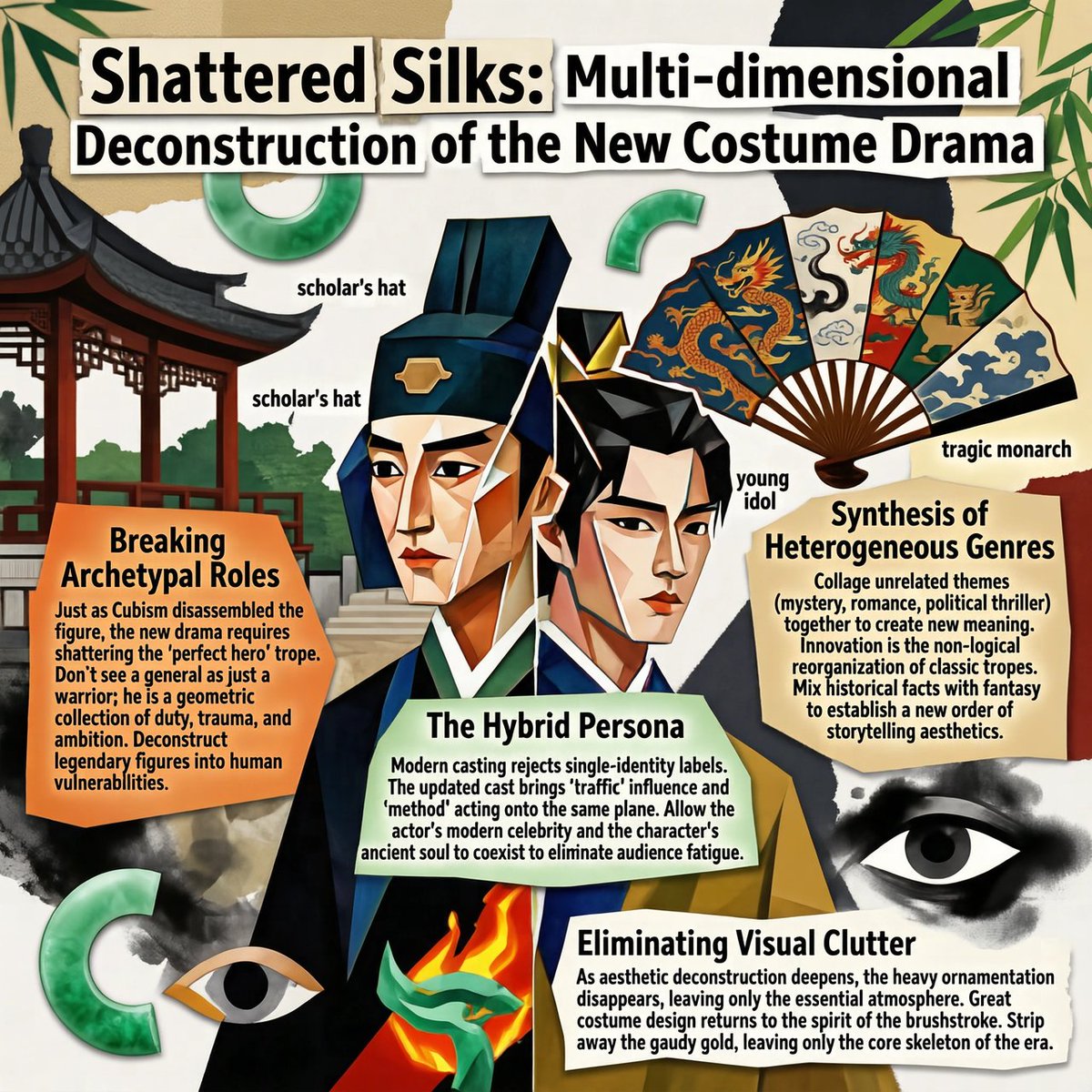

I gave it a topic. It came back with a full magazine-style infographic. Charts. Layout. Icons. Colour coding. Dense structured copy. That model is SenseNova U1. And it's open-source. 🧵