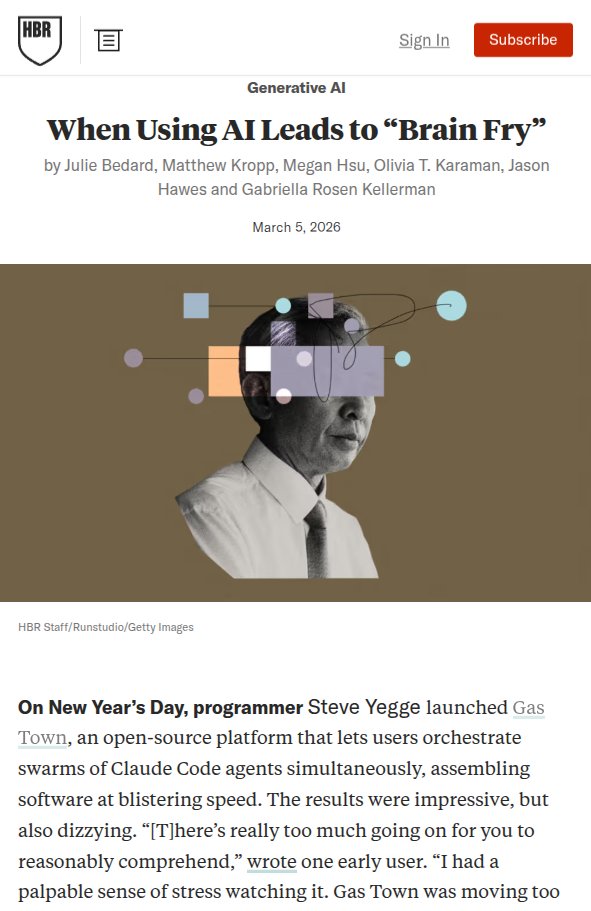

Evals are the new PRD. The companies building AI products that actually work are running 12.8 eval experiments per day.

Here is the playbook with @ankrgyl, Founder and CEO of @braintrust ($800M valuation, behind Vercel, Replit, Ramp, Zapier, Notion, Airtable):

⏱ 1:43 Why vibe checks stop scaling
⏱ 6:35 Evals are the new PRD
⏱ 8:45 The Claude Code evals controversy
⏱ 18:48 Building an eval live from zero
⏱ 29:51 Connecting Linear MCP and iterating
⏱ 39:12 Why you need evals that fail
⏱ 43:36 Offline vs online evals
⏱ 47:40 Three mistakes killing eval culture

The core framework: every eval is exactly three things. A set of inputs your product needs to handle. A task that takes those inputs and generates outputs. A scoring function that produces a number between 0 and 1.

We built one from scratch on camera. Score went from 0 to 0.75 in under 20 minutes.
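
To make the three-part framework concrete, here is a minimal sketch in plain Python. It is not the eval built in the video and does not use the Braintrust SDK. The names `Case`, `task`, `exact_match`, and `run_eval` are hypothetical, the data is made up, and the task is a stand-in for what would normally be a model call.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Case:
    """One row of the dataset: an input plus the output we expect."""
    input: str
    expected: str


def task(text: str) -> str:
    """Hypothetical stand-in for the product under test (normally an LLM call)."""
    return text.strip().lower()


def exact_match(output: str, expected: str) -> float:
    """Scoring function: returns a number between 0 and 1."""
    return 1.0 if output == expected else 0.0


def run_eval(
    cases: List[Case],
    task_fn: Callable[[str], str],
    scorer: Callable[[str, str], float],
) -> float:
    """Run the task on every input and return the mean score."""
    scores = [scorer(task_fn(c.input), c.expected) for c in cases]
    return sum(scores) / len(scores) if scores else 0.0


# Made-up inputs; the last two are written to fail on purpose.
cases = [
    Case("  Hello ", "hello"),
    Case("WORLD", "world"),
    Case("Foo Bar", "foo-bar"),
    Case("A  B", "a b"),
]

score = run_eval(cases, task, exact_match)
print(f"mean score: {score:.2f}")
```

Two of the four cases fail by design, so the mean score is 0.50. Iterating on `task` until more cases pass, while adding new cases that still fail, is the loop the framework is meant to support.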