Seth Cronin

@SethCronin

Invent things, build things, and get intellectual property to maximize the value of the things you build and invent.

maybe this is not yet clear, so let me state it plainly: as of right now Anthropic, and really a small number of individuals at Anthropic, has the capacity to directly attack and cause major damage to the United States Government, China, and generally global superpowers. government agencies like the NSA do not have internal models or defense capabilities that outclass frontier models.

if they chose to do so, they could likely exfiltrate top secret information from government systems, gain control over critical infrastructure including military infrastructure, sabotage or modify communications between members of government at the highest level, and potentially carry on activities for some time without detection. the thing about having access to a huge number of zerodays your adversaries don't know about is it gives you a massive asymmetric advantage.

they did not exploit this to gain power or destabilize the world order. they publicly released the information that they had these capabilities and worked to mitigate these flaws. you should be grateful american frontier labs have proven themselves remarkably trustworthy and concerned with the public good.

but it's critical you understand we are in a new regime. private entities now have power that directly rivals and impacts the government's monopoly on influence and violence. and anthropic is certainly not the only one, there's little chance OpenAI's internal models are far behind. this trend will accelerate on virtually every dimension, not slow down.

my prediction for how it plays out is the relatively imminent seizure and nationalization of labs by the US government, sometime over the next two years. it's very tough for me to see how they accept the existence of this kind of threat. but this adds a whole new class of governance issues, as then we've handed these extremely wide-reaching capabilities from private entities to public ones.

We’ve partnered with Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks. Together we’ll use Mythos Preview to help find and fix flaws in the systems on which the world depends.

I’ve never really understood ads you can’t skip. Are they supposed to convince me to buy your product? You’re just annoying me.

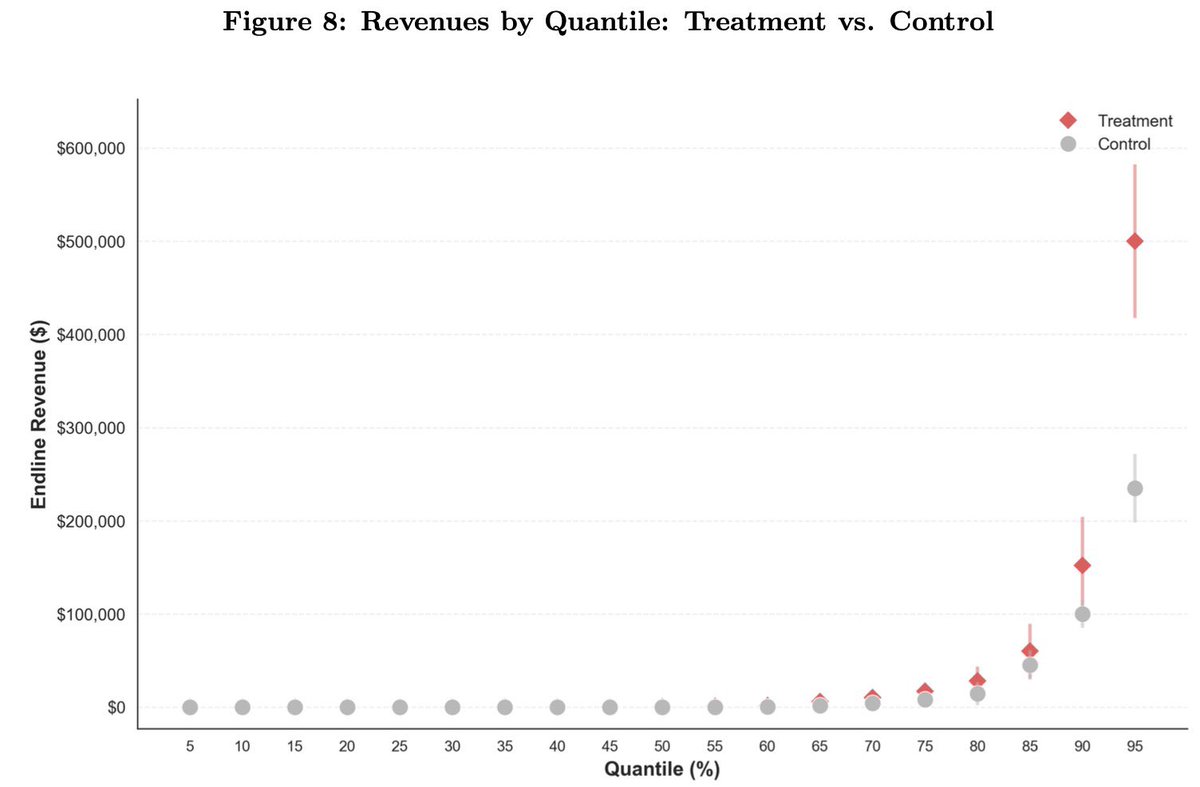

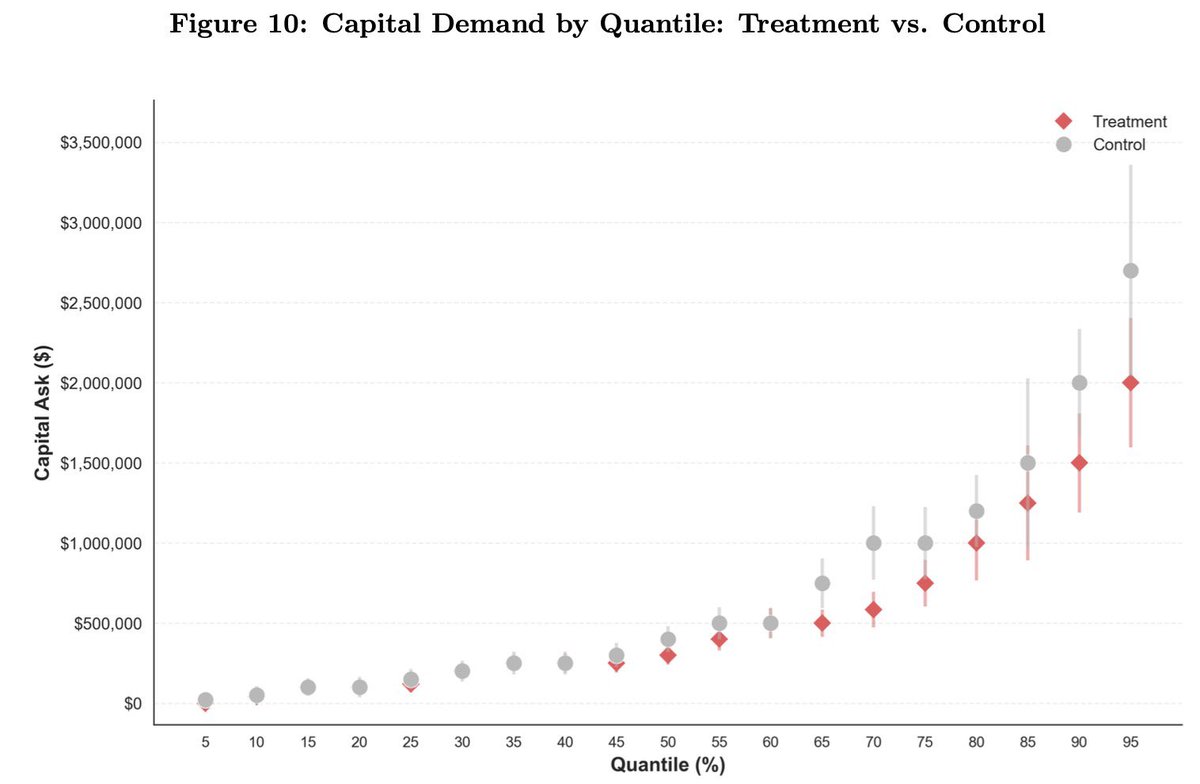

🚨 Excited to share a new working paper! 🚨 AI can improve individual tasks. But when does it improve firm performance? Our paper proposes one key friction firms face: the "mapping problem" -- discovering where and how AI creates value in a firm's production process. 🧵1/

like I’ve said a few times, well within TOS to do this, they built the model, if they wanna give you inference at pennies on the dollar on the condition that you use their harness, great, they have the right to do this.

On this topic in particular, I don’t understand the “evil” or “rugpull” jeers. There was never any promise to give people cheap inference. Before the claude code max plan we were all paying per token to use this stuff. And we were more or less happy to do it (sure, the VC funding helps). Every enterprise I know pays per token because when you use subsidized inference, YOU are the product. “Have some cheap code, in exchange for helping to train the next gen of models.” You can hate on that particular behavior if you want, but nobody is making you take part in that particular market dynamic.

Do I wanna see a world where model companies take some of their massive financial gains and use that to pull everybody up? Of course. I hope it happens some day.

An allegory perhaps: If a public e-bike company gave you a subscription on rides and you proceeded to go around ripping out batteries, sticking them in your own bike, and riding around town, you’d get banned for that too. Especially if your bike was poorly wired and overloaded the batteries, caused them to flame up, etc. Banning that behavior would deliver far better results for the people who were using the system as designed.

Microsoft 365 connectors are now available on every Claude plan. Connect Outlook, OneDrive, and SharePoint to bring your email, docs, and files into the conversation. Get started here: claude.ai/customize/conn…