Andrew M. Dai @AndrewDai

To invest deeply in the research required to push this new frontier, we've raised $55 million from @strikervp, @MenloVentures, and @AltimeterCap, with participation from @nvidia, 49 Palms, and others, as well as prominent AI researchers including @JeffDean.

It was an honor to work with luminaries in the field like @ilyasut, @vinyals, and @quocleix in the old days at Brain, and to have the chance to watch AI grow from recurrent models to Transformers and then sparse Transformers. Through all of that, I believed text-based reasoning was all you need. After all, people who are blind can reason perfectly well.

But after leading the data area for Gemini 3.0, I realized that even though frontier models are great at language and coding, they are still at the level of a child when given basic visual reasoning problems. Language alone gets you remarkably far, but the world is fundamentally visual, and so much understanding depends on being able to see and interpret what's in front of you. To me, this showed that we were coming at this from the wrong direction. In nature, pattern matching comes first, then visual reasoning, then language, and finally advanced language (math and physics). Without this visual reasoning layer, we have models that can draw a pelican with SVG code but can't even count the fingers on a drawing of a hand.

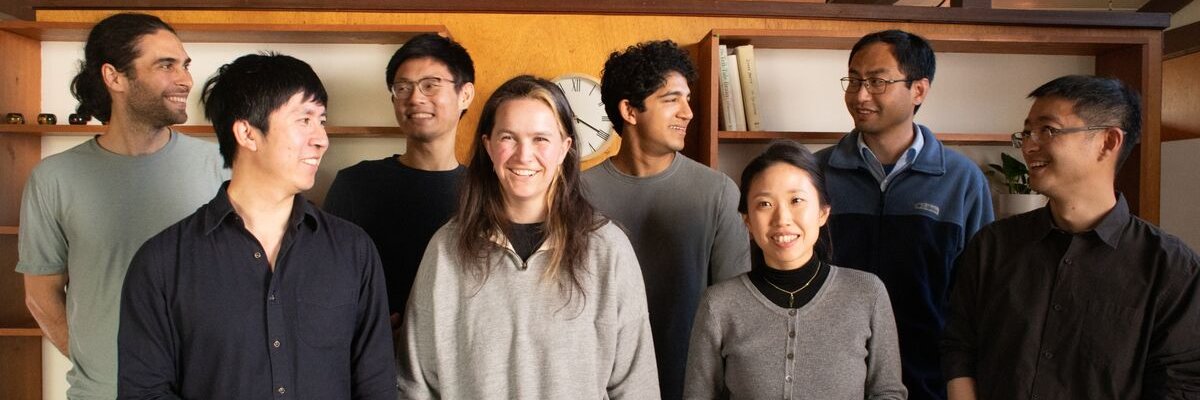

At Elorian, we believe solving visual reasoning is the next big problem in AI, and we aim to responsibly improve technology wherever better visual understanding can help, from engineering through to robotics and agriculture. (2/n)