Anton Shevtsov retweetledi

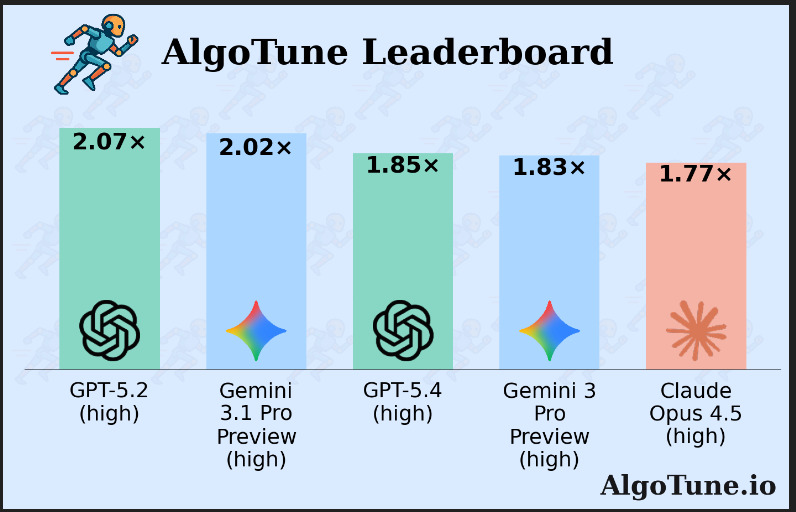

We evaluated GPT 5.4 on AlgoTune: for the first time an @OpenAI model is worse than its predecessor. Some analysis:

In graph_laplacian, GPT-5.2's approach is: build the sparse matrix once, call SciPy’s Laplacian routine, and return the sparse result directly.

(cont.)🧵

English