Shuming Hu

1.6K posts

@ShumingHu

distinguished machine not learning engineer

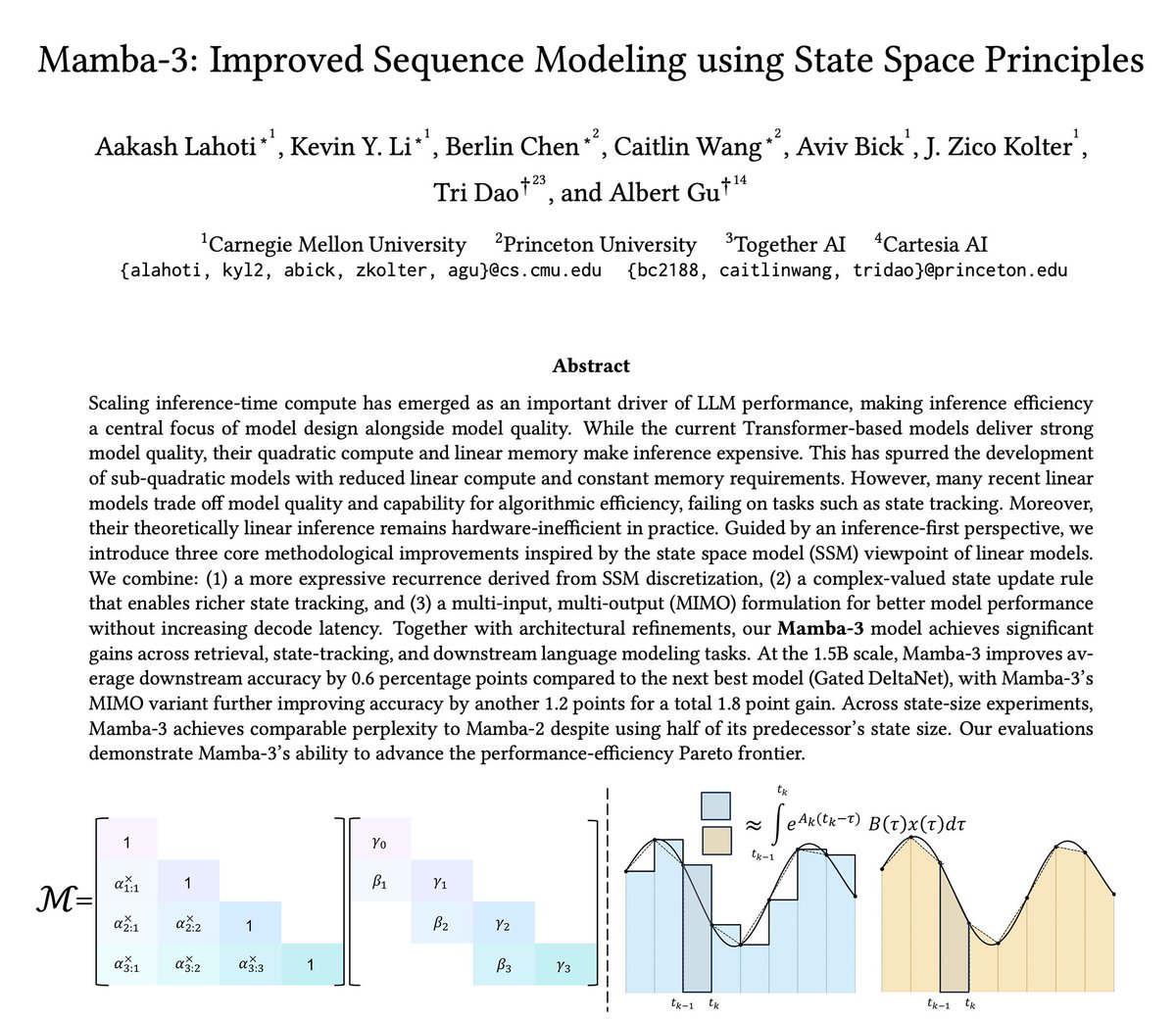

We're releasing a technical report describing how Composer 2 was trained.

Video might be the next intelligence substrate. Strikingly, video models are beginning to exhibit the same emergent reasoning behaviors first observed in LLMs—multi-path search, self-correction, and layer specialization. We demystify video reasoning and show it doesn’t happen frame-by-frame, but along diffusion steps. 🔗 wruisi.com/demystifying_v… 📄 huggingface.co/papers/2603.16… So, what's next? ;)

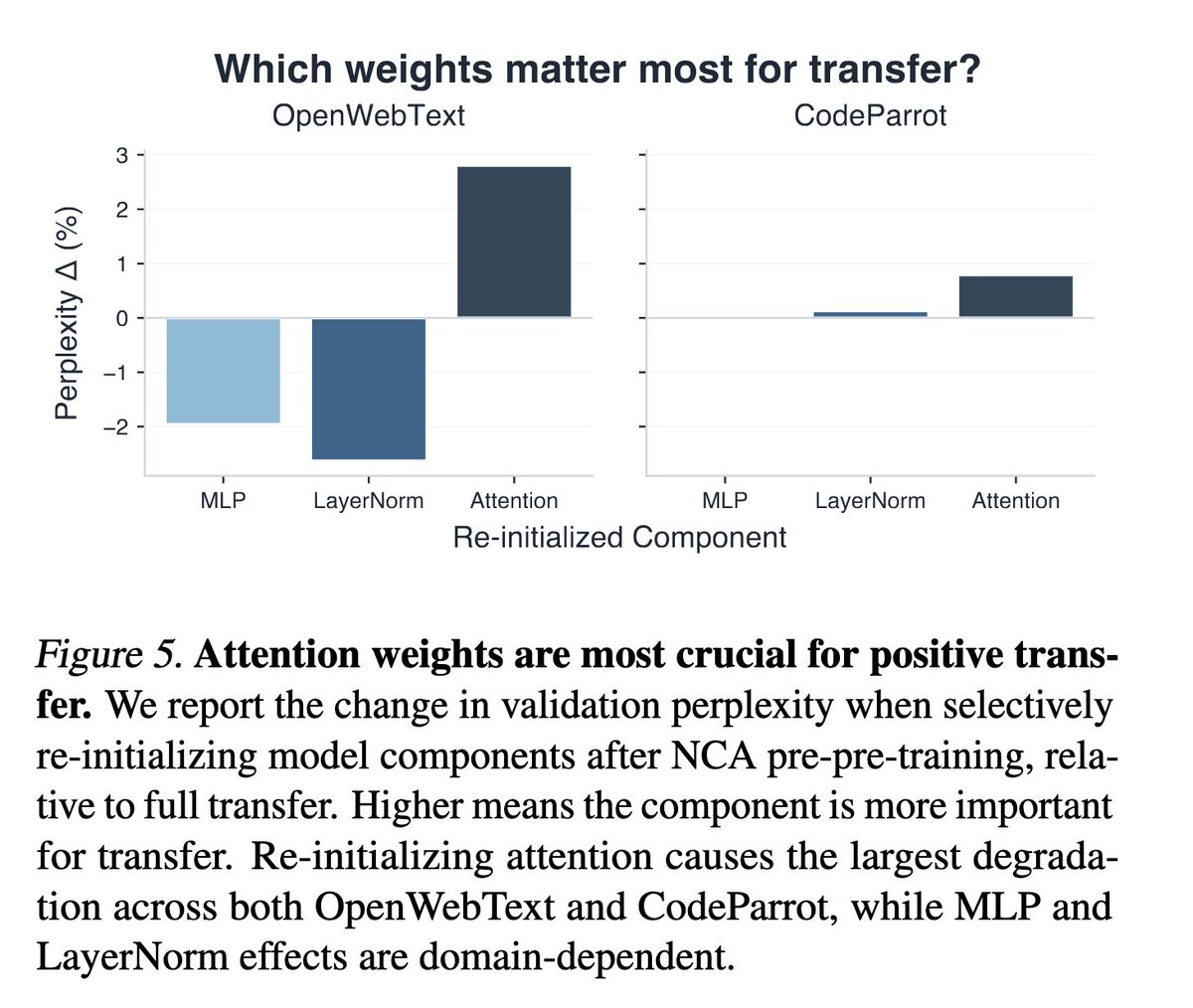

Can language models learn useful priors without ever seeing language? We pre-pre-train transformers on neural cellular automata — fully synthetic, zero language. This improves language modeling by up to 6%, speeds up convergence by 40%, and strengthens downstream reasoning. Surprisingly, it even beats pre-pre-training on natural text! Blog: hanseungwook.github.io/blog/nca-pre-p… (1/n)

interesting. IIRC, excluding the current token's KV via the attention mask (i.e. removing the diagonal) doesn't work! Hypothesis: this effectively makes the current token an attention sink.
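For anyone unsure what "remove the diagonal" means here, a minimal NumPy sketch of the two masks follows. It is only an illustration, not the setup from whatever experiment the post recalls: the helper names `causal_mask` and `masked_softmax` are made up for this example, and the choice to give the first token all-zero weights (it has no earlier key to attend to) is an assumption.

```python
import numpy as np

def causal_mask(n, include_diagonal=True):
    # Hypothetical helper: True means "query i may attend to key j".
    # k=0 keeps the diagonal (standard causal mask);
    # k=-1 drops it, so a token cannot attend to its own KV.
    k = 0 if include_diagonal else -1
    return np.tril(np.ones((n, n), dtype=bool), k=k)

def masked_softmax(scores, mask):
    # Masked positions are set to -inf so they receive zero weight.
    masked = np.where(mask, scores, -np.inf)
    # With the diagonal removed, the first row has no allowed key at all.
    # Assumption for this sketch: such rows get all-zero weights instead of NaN.
    row_has_key = mask.any(axis=-1, keepdims=True)
    safe = np.where(row_has_key, masked, 0.0)
    safe = safe - safe.max(axis=-1, keepdims=True)
    weights = np.exp(safe)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return np.where(row_has_key, weights, 0.0)

n = 4
scores = np.zeros((n, n))  # uniform scores, so only the mask shapes the weights
standard = masked_softmax(scores, causal_mask(n))
strict = masked_softmax(scores, causal_mask(n, include_diagonal=False))
print(standard)
print(strict)
```

With the diagonal removed, every weight on the diagonal is zero and each row's attention mass is spread only over strictly earlier tokens; the first token is the edge case that needs special handling.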