Pinned Tweet

Imagine the Singularity is possible. Could we stop AI from taking over, or at least foresee disaster's imminence? Perhaps not. My latest for @NewYorker: newyorker.com/science/annals…

Matthew Hutson

2.1K posts

@SilverJacket

Freelance science writer for The New Yorker, Science, Nature, etc. Fire dancer. Into cognition—animal and mineral (aka psych & AI).

Our agreement with @SpaceX means we will use all the compute capacity at their Colossus 1 data center. This will give us over 300 megawatts of additional capacity to deploy within the month.

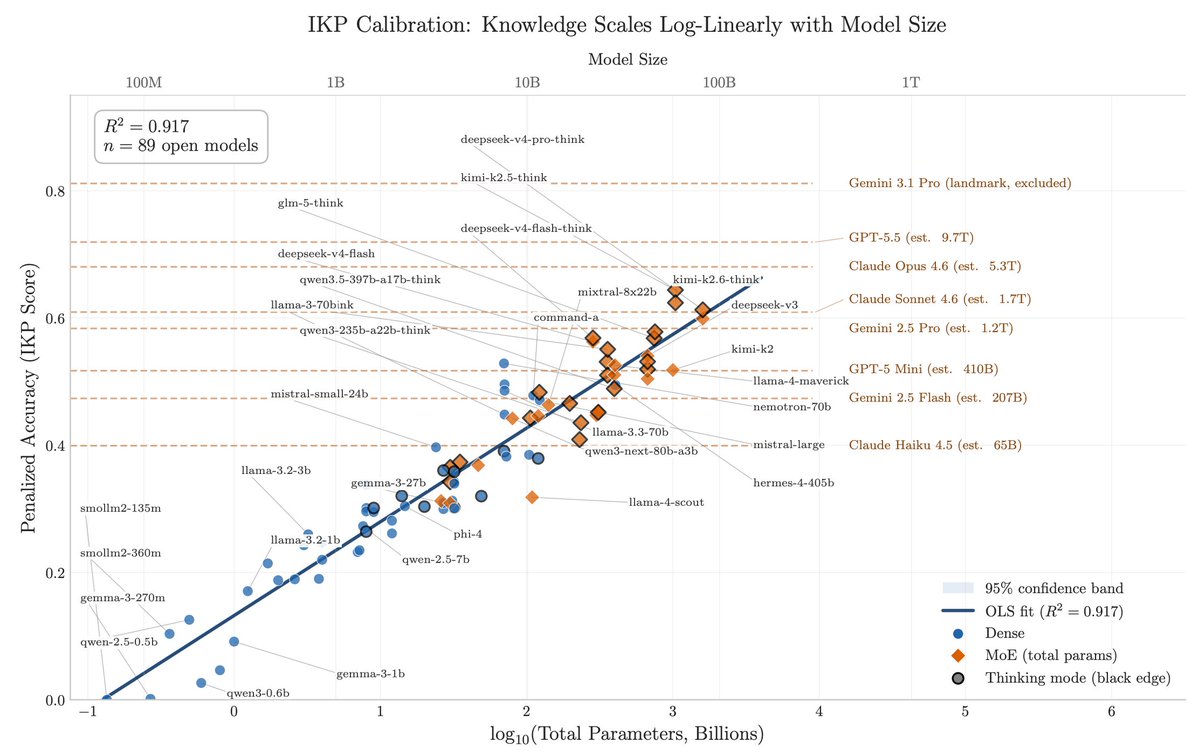

Grok-4.3 still behind Chinese open-source

Introducing ChatGPT Images 2.0: a state-of-the-art image model that can take on complex visual tasks and produce precise, immediately usable visuals, with sharper editing, richer layouts, and thinking-level intelligence. Video made with ChatGPT Images.