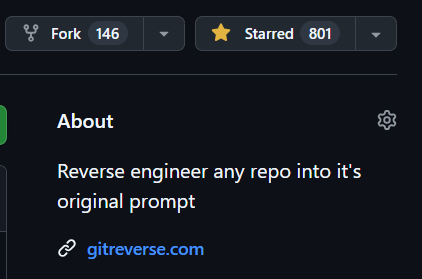

Lovepreet Singh

@SinghDevHub

backend + ml | ex @cred_club • cs @iitgn

Unpopular opinion: most agent evals are theatre.

You run them once before deployment. Each run takes 800ms+ because another LLM is judging your LLM. And the most annoying part: nothing tells you where in the chain things went wrong. I wasted a lot of time in this loop.

Then I came across @FutureAGI_, which brings 5 different tools under one umbrella. Best part: they open sourced their entire platform, and the eval layer is noticeably different.

It is multimodal, working on text, images, audio, and PDFs. It is not an LLM-as-judge that adds latency but an agent with memory and tools. The biggest win is the set of learned classifiers trained on real production failure patterns, which run evals at low cost. It also evaluates the full reasoning chain, not just the final response.

Check it out → github.com/future-agi/fut…
Try it here → shorturl.at/PRSGX
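A minimal sketch of the two ideas above, assuming nothing about how Future AGI actually implements them: a cheap learned classifier scores every step of an agent trace, so a failure is pinned to a specific step instead of being judged only at the final answer. The classifier here is a plain perceptron over bag-of-words features (a swapped-in technique chosen for brevity), and `StepFailureClassifier`, `first_failing_step`, and the toy training examples are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Optional


def features(text: str) -> Counter:
    """Bag-of-words features: lowercased, whitespace-split token counts."""
    return Counter(text.lower().split())


@dataclass
class StepFailureClassifier:
    """Hypothetical linear classifier that flags a single reasoning step as a failure."""
    weights: dict = field(default_factory=dict)
    bias: float = 0.0

    def score(self, text: str) -> float:
        # Linear score: bias + sum(weight[token] * count); positive means "failure".
        return self.bias + sum(self.weights.get(tok, 0.0) * n for tok, n in features(text).items())

    def is_failure(self, text: str) -> bool:
        return self.score(text) > 0

    def fit(self, examples: list[tuple[str, bool]], epochs: int = 10) -> "StepFailureClassifier":
        # Classic perceptron updates; label True means the step was a known failure.
        for _ in range(epochs):
            for text, failed in examples:
                y = 1 if failed else -1
                if y * self.score(text) <= 0:  # misclassified or on the decision boundary
                    for tok, n in features(text).items():
                        self.weights[tok] = self.weights.get(tok, 0.0) + y * n
                    self.bias += y
        return self


def first_failing_step(clf: StepFailureClassifier, steps: list[str]) -> Optional[int]:
    """Score every step of the chain, not just the final answer; return the first flagged index."""
    for i, step in enumerate(steps):
        if clf.is_failure(step):
            return i
    return None


# Toy, hand-written labels standing in for real production failure logs (hypothetical).
TRAINING = [
    ("tool call failed with error", True),
    ("retrieved documents about refunds", False),
    ("parser error in tool arguments", True),
    ("answer cites retrieved documents", False),
]

clf = StepFailureClassifier().fit(TRAINING)
trace = [
    "retrieved documents about billing",
    "tool returned an error",
    "answer apologizes to user",
]
print(first_failing_step(clf, trace))  # → 1
```

Scoring a step is a dictionary lookup and a sum, with no extra model call, which is the cost argument the post makes against LLM-as-judge. A real system would train on far more data with a stronger model than this toy perceptron.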

TOMORROW: Design, build, and validate entire systems from a single spec 🏗️ Hands-on Stay COTI session with @TraycerAI, the AI workflow for spec-first engineering teams. 📅 Tuesday April 28th @ 2:00 PM UTC ▶️ Live here on X & YouTube 🔗 youtube.com/live/Hto3p42mQ…