Sipeed

5.3K posts


@SipeedIO

AIoT open-source hardware platform

People's Republic of China · Joined September 2018

240 Following · 24.8K Followers

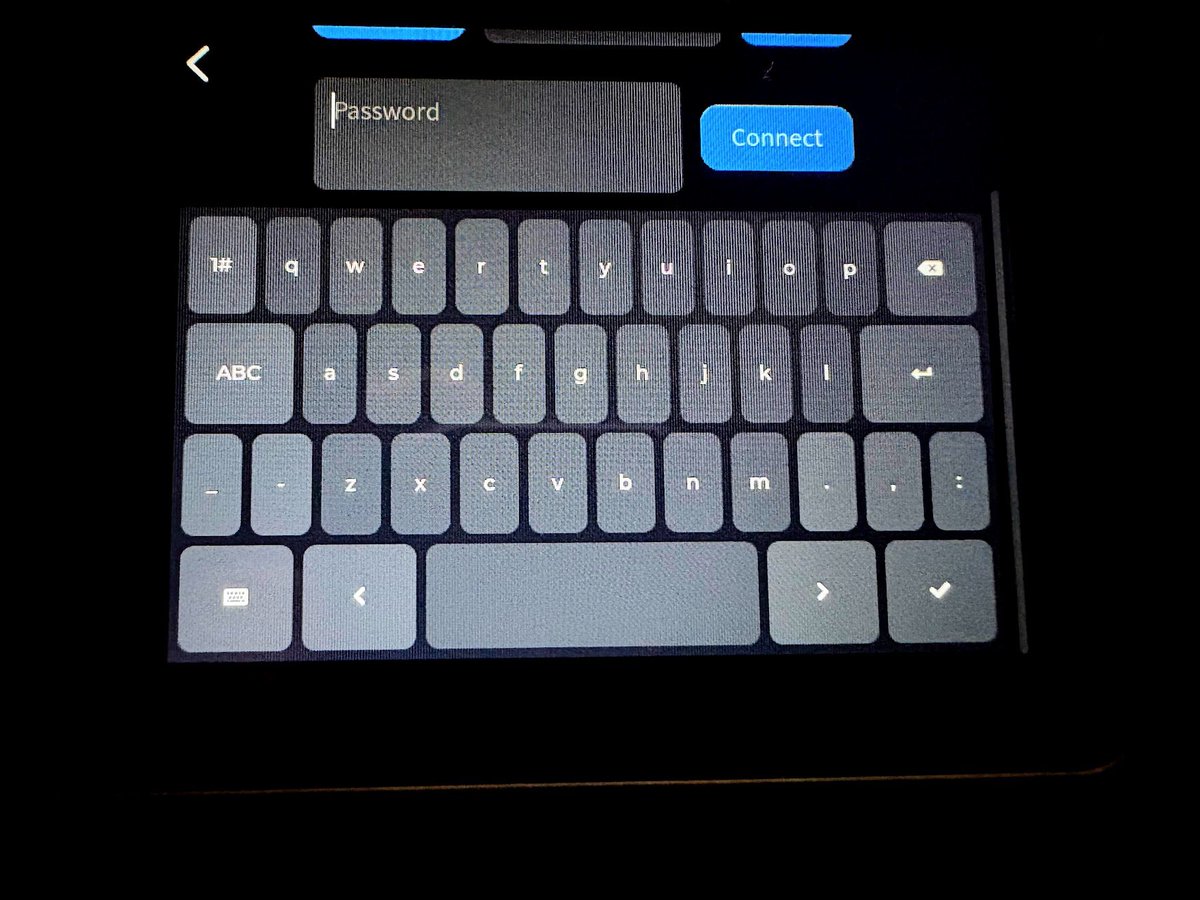

@UmbraAtrox_ Hi, here is the DNS setting guide (section "Setting DNS"): wiki.sipeed.com/hardware/en/kv…


@SipeedIO NanoKVM PCIe, firmware 2.3.6. The issues I encountered: the OLED doesn't turn off despite the 30 s timeout; DNS servers from DHCP are ignored (hardcoded instead); udhcpc's default.script appends an interface comment to resolv.conf, which breaks DNS lookup; and HID becomes unresponsive.


@TheCareyHolzman The KVM Pro runs at 45 ℃, so this Type-C version can't be that small; a smaller one would run even hotter.


@SipeedIO I don't know. How hot does the NanoKVM Pro get? Because whatever temperature that is, it's very hot!


@wolfyportal_r18 A and B are big enough to cool down; C is too small and will get very hot. And since it's used as a KVM, it should sit on the desktop and never move...


@SipeedIO A and B have heat sinks, but C doesn't.

Is that what you mean? But the small one has excellent portability.

Even sticking a heat sink on the outside would still be good.


@KrisDarbyshire We support the OS04A10 (1/1.8"). Other sensors need ISP fine-tuning, which is hard.


@SipeedIO Wow, cool! Now I only need to write a custom driver and rebuild the entire kernel 😅 Have any drivers other than the GC4653 and OV5647 been included, say, in a MAIXCAM build? I love the size and capabilities of this board, but the camera options leave much to be desired! Thx


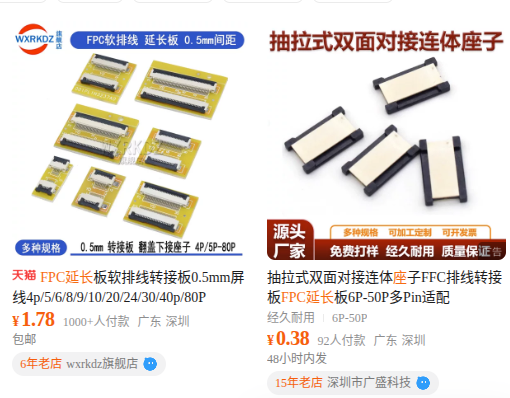

@SipeedIO Is this connector going to happen? (github.com/sipeed/sipeed_…) "Raspberry Pi Camera Adapter Ribbon Cable (Coming Soon)"


@KrisDarbyshire RVNano's camera pin order is the same as the RPi camera's, so you can use a normal 22-pin FPC. A complementary FPC cable is included with the GC4653 camera accessories.
