Saya.writes

409 posts

@SiyaWrites

Developer | Writer | Fintech Enthusiast | Stoic Founder: https://t.co/xxh0PQ7VG1

Pretoria, South Africa · Joined December 2019

95 Following · 26 Followers

Saya.writes retweeted

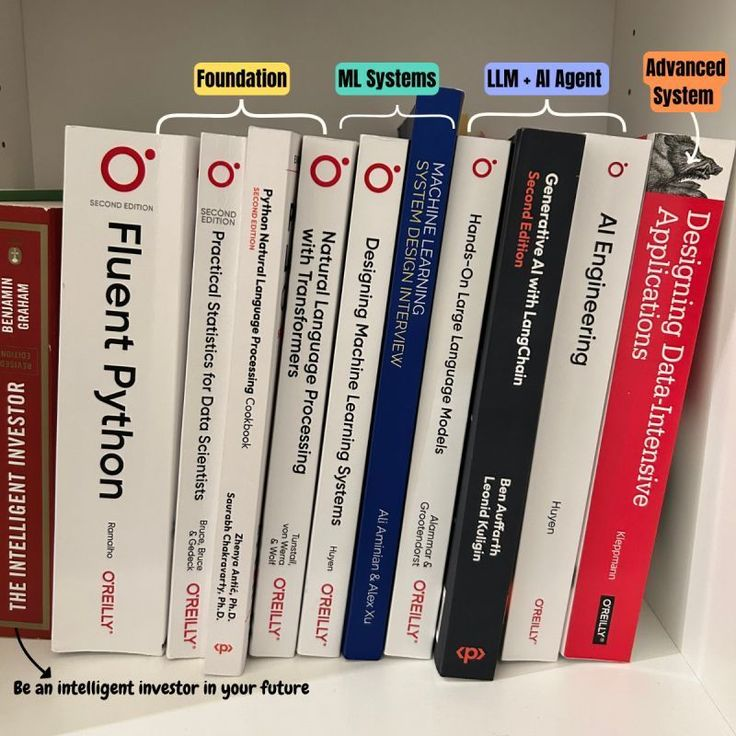

You only need to read four books to truly get what’s going on in data science and AI:

• Designing Machine Learning Systems by Chip Huyen

• AI Engineering by Chip Huyen

• Practical Statistics for Data Scientists by Peter Bruce, Andrew Bruce, and Peter Gedeck

• Hands-On Machine Learning with Scikit-Learn, Keras, and TensorFlow by Aurélien Géron

If you read these four technical books and then read these four books on business value and leadership, you’ll be well on your way to career success:

• The Lean Startup

• Good Strategy Bad Strategy

• The First 90 Days

• The Hard Thing About Hard Things

Any more you'd add?

Saya.writes retweeted

2001: Learn SQL → get a job

2005: SQL + Excel → get a job

2010: SQL + Python + Stats → get a job

2015: SQL + Python + Stats + ML → get a job

2020: SQL + Python + Stats + ML + A/B Testing + Dashboards → get a job

2026:

SQL + Python + Stats + ML + A/B Testing + Dashboards

* Data Engineering

* System Design

* LLMs

* AI Agents

* MLOps

* Cloud (AWS/GCP/Azure)

* Data Pipelines

* Streaming (Kafka/Spark)

* Experimentation Platforms

* Business Understanding

* Communication Skills

* Domain Expertise

* “Ownership mindset”

* “Startup hustle”

* 5 YOE

→ Entry-level role

Somewhere along the way…

“entry-level” stopped meaning entry.

If you’re feeling overwhelmed, you’re not alone.

The bar didn’t just rise…

it multiplied.

Saya.writes retweeted

start robotics in simulation before hardware.

hardware slows you down. simulation lets you think.

how to actually do it:

• pick a simulator → Gazebo or Isaac Sim. both give you physics, sensors, and robots out of the box

• learn the middleware → use ROS 2. nodes, topics, transforms. this is how real robots are wired

• spawn a simple robot → differential drive or a basic arm. don’t chase complexity early

• run teleop first → control it with keyboard/joystick. verify commands → motion works

• add sensors → camera, lidar. inspect topics, visualize in rviz

• close the loop → write a node that reads sensors and publishes cmd_vel. simple obstacle avoidance is enough

• log + replay → record bags, debug offline, iterate faster
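a minimal sketch of the "close the loop" step, kept free of ROS imports so the logic can be tested on its own. everything here is an assumption for illustration: the function name `avoid_obstacles`, the thresholds, and the idea that `ranges` covers only the front arc, ordered right to left the way a `LaserScan` sweeps counterclockwise. in a real ROS 2 node this would be called from the scan callback and the result published as a `Twist` on cmd_vel.

```python
import math
from typing import Sequence, Tuple


def avoid_obstacles(
    ranges: Sequence[float],
    stop_distance: float = 0.5,   # meters; hypothetical threshold
    cruise_speed: float = 0.2,    # m/s forward when the path is clear
    turn_rate: float = 0.8,       # rad/s when turning away from an obstacle
) -> Tuple[float, float]:
    """Return (linear_x, angular_z) for a differential-drive robot.

    `ranges` is assumed to hold front-arc lidar distances in meters,
    ordered from the robot's right (index 0) to its left (last index).
    """
    # Simulated lidars often report inf or nan for "no return"; ignore those.
    valid = [(i, r) for i, r in enumerate(ranges) if math.isfinite(r)]
    if not valid:
        return cruise_speed, 0.0

    idx, nearest = min(valid, key=lambda pair: pair[1])
    if nearest > stop_distance:
        return cruise_speed, 0.0

    # Obstacle on the right half: turn left (positive angular z).
    # Obstacle on the left half: turn right (negative angular z).
    mid = (len(ranges) - 1) / 2
    angular = turn_rate if idx <= mid else -turn_rate
    return 0.0, angular


if __name__ == "__main__":
    print(avoid_obstacles([2.0, 2.0, 2.0]))  # clear path
    print(avoid_obstacles([0.3, 2.0, 2.0]))  # obstacle on the right
```

keeping the decision logic in a plain function also fits the log + replay step: recorded scans can be fed through it offline without starting the simulator.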

why this works:

• crashes are free

• iteration is fast

• you debug logic, not wires

once the behavior is stable in sim, move to hardware.

simulation isn’t a shortcut.

it’s the fastest path to understanding.

Saya.writes retweeted

𝐖𝐡𝐚𝐭 𝐢𝐬 𝐌𝐂𝐏 (𝐌𝐨𝐝𝐞𝐥 𝐂𝐨𝐧𝐭𝐞𝐱𝐭 𝐏𝐫𝐨𝐭𝐨𝐜𝐨𝐥)?

Most AI agents are trapped inside their own walls.

MCP is the protocol that connects them to the outside world: data sources, tools, and workflows.

𝐖𝐡𝐚𝐭 𝐢𝐬 𝐌𝐂𝐏?

• MCP is an open-source standard that connects AI applications to external systems like data sources, tools, and workflows.

• It enables seamless integrations, allowing AI models like ChatGPT to access data, use tools, and perform tasks like web app creation or database queries.

• MCP simplifies development, reducing complexity and time by providing a standardized way to connect AI systems to various resources.

• It enhances AI capabilities, making models more powerful and personalized by allowing them to interact with external systems and data on behalf of users.

𝐁𝐞𝐟𝐨𝐫𝐞 𝐌𝐂𝐏

LLM → Slack, Google Drive, GitHub (separate connections for each).

Every integration is custom. Every tool requires its own API client. Every agent reinvents the wheel.

𝐀𝐟𝐭𝐞𝐫 𝐌𝐂𝐏

LLM → Unified API (MCP) → Slack, Google Drive, GitHub.

One protocol. One connection layer. Every tool accessible through a standardized interface.

𝐇𝐨𝐰 𝐌𝐂𝐏 𝐖𝐨𝐫𝐤𝐬?

User → User Query → MCP Client → Invoke Graph → LangGraph → Route Request → OpenAI GPT → Tool Decision → Call MCP Tool → MCP Server → External API Call → External APIs → API Response → MCP Server → Tool Result → OpenAI GPT → Generate Response → MCP Client → Natural Language Response → Final Result User → Agent Response → User.

𝐓𝐡𝐞 𝐅𝐥𝐨𝐰

1. User sends a query to the MCP Client.

2. MCP Client invokes LangGraph to route the request.

3. OpenAI GPT makes a tool decision and calls the MCP Tool.

4. MCP Server makes an external API call to the appropriate service (Slack, Google Drive, GitHub, etc.).

5. External API returns a response to the MCP Server.

6. MCP Server sends the tool result back to OpenAI GPT.

7. OpenAI GPT generates a natural language response.

8. MCP Client delivers the final result to the user.
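Steps 3 to 6 above can be sketched as a toy, in-process exchange. This is an illustration under stated assumptions, not the official MCP SDK: the message shapes are modeled on MCP's JSON-RPC 2.0 `tools/list` and `tools/call` methods, while the `search_issues` tool, the `handle_request` and `call_tool` helpers, and the canned result are hypothetical. A real server would also perform an initialization handshake and run over a transport such as stdio or HTTP.

```python
import json
from typing import Any, Callable, Dict

# Registry of tools the toy server exposes (hypothetical helper).
TOOLS: Dict[str, Callable[..., str]] = {}


def tool(name: str):
    """Decorator that registers a function under a tool name."""
    def register(fn):
        TOOLS[name] = fn
        return fn
    return register


@tool("search_issues")
def search_issues(query: str) -> str:
    # Stand-in for the external API call in step 4; returns canned text.
    return f"2 open issues match '{query}'"


def _reply(req_id: Any, key: str, payload: Dict[str, Any]) -> str:
    return json.dumps({"jsonrpc": "2.0", "id": req_id, key: payload})


def handle_request(raw: str) -> str:
    """Server side: decode one JSON-RPC request and return the response."""
    req = json.loads(raw)
    method, req_id = req.get("method"), req.get("id")
    if method == "tools/list":
        return _reply(req_id, "result", {"tools": [{"name": n} for n in sorted(TOOLS)]})
    if method == "tools/call":
        params = req.get("params", {})
        fn = TOOLS.get(params.get("name"))
        if fn is None:
            return _reply(req_id, "error", {"code": -32602, "message": "Unknown tool"})
        text = fn(**params.get("arguments", {}))
        return _reply(req_id, "result", {"content": [{"type": "text", "text": text}], "isError": False})
    return _reply(req_id, "error", {"code": -32601, "message": "Method not found"})


def call_tool(name: str, arguments: Dict[str, Any], request_id: int = 1) -> Dict[str, Any]:
    """Client side: build a tools/call request and decode the response."""
    request = json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    })
    return json.loads(handle_request(request))


if __name__ == "__main__":
    print(call_tool("search_issues", {"query": "login bug"}))
```

The point of the sketch is the shared envelope: the client needs no tool-specific code, only a tool name and a dictionary of arguments.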

Before MCP, every agent built its own integrations. After MCP, every agent shares the same connection layer.

MCP is the protocol that turns isolated AI models into connected AI agents.

𝐀𝐫𝐞 𝐲𝐨𝐮 𝐛𝐮𝐢𝐥𝐝𝐢𝐧𝐠 𝐀𝐈 𝐚𝐠𝐞𝐧𝐭𝐬 𝐰𝐢𝐭𝐡 𝐜𝐮𝐬𝐭𝐨𝐦 𝐢𝐧𝐭𝐞𝐠𝐫𝐚𝐭𝐢𝐨𝐧𝐬 𝐨𝐫 𝐰𝐢𝐭𝐡 𝐌𝐂𝐏?

♻️ Repost this to help your network get started

Cc: respective author.

Saya.writes retweeted

Learn Coding by playing games

1. Kubernetes

k8sgames.com

2. DevOps

devops.games

3. Linux

overthewire.org

4. Git

ohmygit.org

5. Python

codecombat.com

6. CSS & HTML

codepip.com

7. Cybersecurity

picoctf.org

8. Mobile Coding (like Duolingo)

sololearn.com

9. For Complete Beginners

scratch.mit.edu

10. 25+ Programming Languages

codingame.com

Saya.writes retweeted

AI concepts developers should know:

1. RAG (Retrieval-Augmented Generation)

↳ Retrieves relevant external data to ground model responses.

2. MCP (Model Context Protocol)

↳ A standard for connecting models to external tools, data, and context.

3. Model Routing

↳ Dynamically selecting the best model for a given task.

4. Embeddings

↳ Turning data into vectors so models can search and compare meaning.

5. Context Windows

↳ The amount of information a model can process at once.

6. Evals

↳ Measuring the quality, reliability, and behavior of AI systems.

7. Multi-Agent Systems

↳ Multiple agents collaborating to solve complex tasks.

8. A2A (Agent-to-Agent)

↳ How agents communicate and coordinate with each other.

9. Memory & State Management

↳ Persisting and retrieving context across interactions.
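Concepts 1 and 4 can be sketched together in a few lines. This is a toy under loud assumptions: the "embedding" is a plain word-count vector rather than a learned dense vector, and `embed`, `cosine`, `retrieve`, `build_prompt`, and the sample documents are all hypothetical names and data chosen for illustration. A real system would call an embedding model and a vector store, but the retrieve-then-ground shape is the same.

```python
import math
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    # Toy embedding: word counts. Real embeddings are learned dense vectors.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: List[str], k: int = 1) -> List[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, docs: List[str]) -> str:
    # RAG step: put the retrieved text in front of the question so the
    # model answers from the supplied context.
    context = "\n".join(retrieve(query, docs))
    return f"Context:\n{context}\n\nQuestion: {query}"


DOCS = [
    "rosbag records topics for offline replay",
    "mcp connects models to external tools",
    "embeddings turn text into vectors",
]

if __name__ == "__main__":
    print(build_prompt("what connects models to tools", DOCS))
```

Swapping `embed` for a real embedding model changes the quality of the ranking, not the structure of the pipeline.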

Understanding these concepts is one thing. Seeing them work together is where it clicks.

Oracle has a great guide that walks you through building a scalable multi-agent RAG system → lucode.co/multi-agent-ra…

Their free DeepLearning course on building memory-aware agents also puts these concepts into action: lucode.co/building-memor…

What else should be on the list?

——

♻️ Repost to help others learn AI.

🙏 Thanks to @Oracle for sponsoring this post.

➕ Follow me (Nikki Siapno) to improve at AI engineering.

Saya.writes retweeted

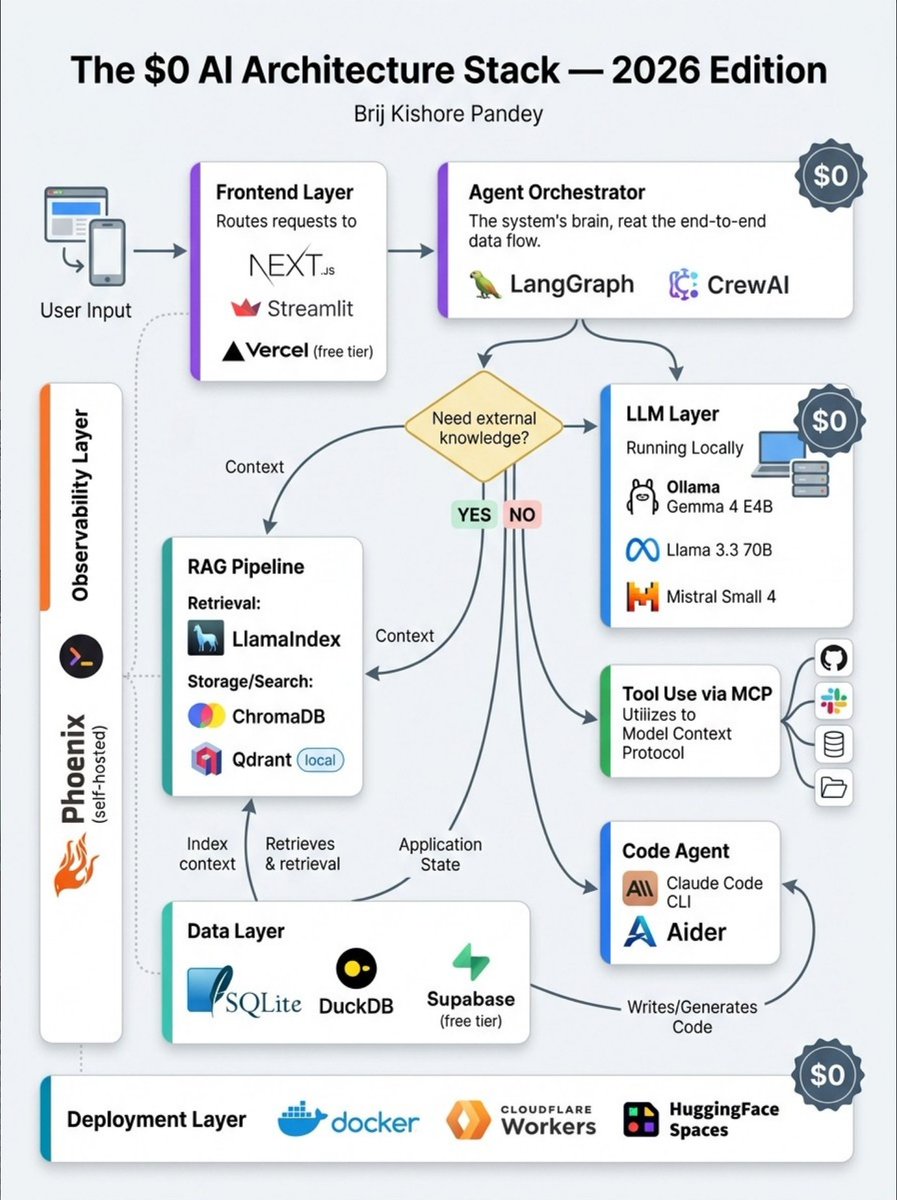

You don't need to spend a single dollar to build a production AI system in 2026.

Here's the full stack:

→ LLM: Ollama + Gemma 4 / Llama 3.3 / Mistral Small 4 (local, free)

→ Orchestration: LangGraph / CrewAI (open source)

→ RAG: LlamaIndex + ChromaDB / Qdrant (local)

→ Tool Layer: MCP — the open protocol connecting agents to everything

→ Code Agent: Claude Code CLI / Aider

→ Frontend: Next.js + Vercel free tier / Streamlit

→ Data: SQLite / DuckDB / Supabase free tier

→ Observability: Langfuse / Phoenix (self-hosted)

→ Deploy: Docker / Cloudflare Workers / HuggingFace Spaces

Total cost → $0.

The tools are free.

The architecture knowledge is what's valuable.

Save this for your next build 🔖

Credit: codewithbrij

#AIArchitecture #AgenticAI #LLM #Ollama #Gemma4 #LangGraph

Saya.writes retweeted

Congratulations to the newly appointed HoD for the Gauteng Provincial Department of Roads & Transport, Mr. J. Makhafola.

We join the Gauteng Government and Transport stakeholders in wishing you the best in your new role.

@Dotransport @CityTshwane @City_Ekurhuleni

Saya.writes retweeted

The WORST part about tech right now? Nobody understands how their own apps work anymore.

We are officially entering the era of "Fear-Driven Development."

Here is how it happens:

You need to set up a complex background job system. You ask an AI agent to build it using Google Cloud Task Queue and Firebase.

It spits out 800 lines of perfect, functional Python and configuration logic in 12 seconds.

You deploy it. It works. The product managers cheer.

Six months later, the system starts silently dropping events under heavy load.

You open the codebase. You stare at the 800 lines.

You realize you have absolutely no idea how the routing logic actually works. You didn't struggle through the documentation. You didn't build the mental model.

You are suddenly terrified to change a single variable. You didn't write the app—you just approved the Pull Request.

We used to build software block by block. Now we just summon it, and hope the spell doesn't wear off.

If you want to survive this next decade of engineering, make this your golden rule:

Never deploy AI-generated code that you couldn't explain on a whiteboard.

You don't own the code you didn't architect. You are just renting it from the AI.
