Pinned Tweet

𝗙𝗹𝗼

8.3K posts

𝗙𝗹𝗼 retweeted

@maximumpain333 This advice is dangerous. Chronic sleep fragmentation raises cortisol, accelerates epigenetic aging, and impairs glucose regulation. Insomnia is associated with depression, metabolic disease, and early mortality.

𝗙𝗹𝗼 retweeted

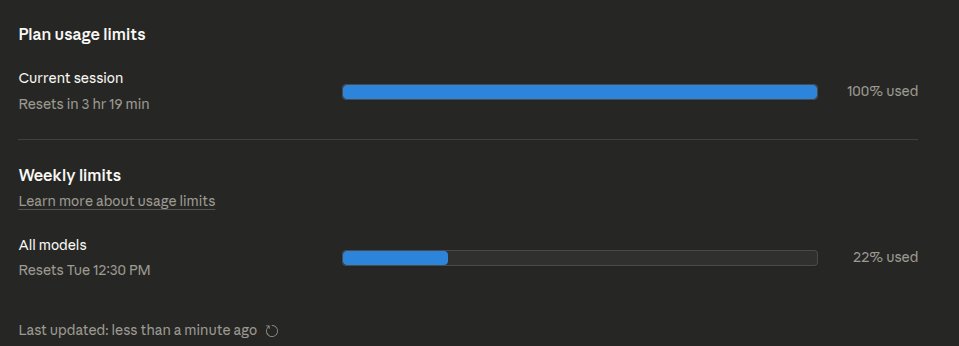

@bridgemindai wait, I'm receiving neither the email nor the extra usage credits. 5x Max plan. Is it a gradual rollout?

The truth is finally out.

Anthropic just emailed every Claude subscriber.

The rate limits weren't a bug.

Third party tools like OpenClaw were putting "outsized strain" on their systems.

Anthropic's fix? Cut them off.

Starting April 4, third-party harnesses no longer get your subscription limits.

Pay-as-you-go only.

To make up for it, every subscriber gets a one-time credit equal to their monthly subscription.

I'm getting $200 in extra usage.

This is huge.

If this is what was killing Claude Code rate limits for Max plan users, tomorrow should feel like a completely different product.

I'll be testing Claude Opus 4.6 all day and reporting back.

Stay tuned.

𝗙𝗹𝗼 retweeted

PSA: If you've been running out of Claude session quotas on Max tier, you're not alone. Read this.

Some insane Redditor reverse engineered the Claude binaries with MITM to find 2 bugs that could have caused cache invalidation. Tokens that aren't cached are 10x-20x more expensive and are killing your quota.

If you're using your API keys with Claude, this is even worse. This is also likely why the issue isn't uniform: while over 500 folks replied to me and said "me too", many (including me) didn't see it.

There are 2 issues that are compounded here (per the Redditor; I haven't independently confirmed this):

The 1st bug he found is a string replacement bug in bun that invalidates the cache. Apparently this has to do with the custom @bunjavascript binary that ships with the standalone Claude CLI.

The workaround there is to use Claude with `npx @anthropic-ai/claude-code`

The 2nd bug is worse: he claims that --resume always breaks the cache. And there doesn't seem to be a workaround there, except pinning to a very old version (which will miss out on tons of features).

This bug is also documented on GitHub and confirmed by other folks.

I won't entertain the conspiracy theories there that Anthropic "chooses" to ignore these bugs because it gets them more $$$. They actively benefit from everyone hitting as many cached tokens as possible, so this is absolutely a great find, and it does align with my earlier thoughts.

The very sudden spike in reporting for this, the non-uniform nature (some folks are completely fine, some folks are hitting quotas after saying "hey") definitely points to a bug.

cc @trq212 @bcherny @_catwu for visibility in case this helps all of us.

Alex Volkov@altryne

My feed is showing me a bunch of folks who tapped out their whole usage limits on Mon/Tue. Is this your experience? Please comment, I want to understand how widespread this is

𝗙𝗹𝗼 retweeted

@ojim_france What does this have to do with the picture of a man working out?

𝗙𝗹𝗼 retweeted

Cursor is raising at a $50 billion valuation on the claim that its “in-house models generate more code than almost any other LLMs in the world.” Less than 24 hours after launching Composer 2, a developer found the model ID in the API response: kimi-k2p5-rl-0317-s515-fast.

That’s Moonshot AI’s Kimi K2.5 with reinforcement learning appended. A developer named Fynn was testing Cursor’s OpenAI-compatible base URL when the identifier leaked through the response headers. Moonshot’s head of pretraining, Yulun Du, confirmed on X that the tokenizer is identical to Kimi’s and questioned Cursor’s license compliance. Two other Moonshot employees posted confirmations. All three posts have since been deleted.

This is the second time. When Cursor launched Composer 1 in October 2025, users across multiple countries reported the model spontaneously switching its inner monologue to Chinese mid-session. Kenneth Auchenberg, a partner at Alley Corp, posted a screenshot calling it a smoking gun. KR-Asia and 36Kr confirmed both Cursor and Windsurf were running fine-tuned Chinese open-weight models underneath. Cursor never disclosed what Composer 1 was built on. They shipped Composer 1.5 in February and moved on.

The pattern: take a Chinese open-weight model, run RL on coding tasks, ship it as a proprietary breakthrough, publish a cost-performance chart comparing yourself against Opus 4.6 and GPT-5.4 without disclosing that your base model was free, then raise another round.

That chart from the Composer 2 announcement deserves its own paragraph. Cursor plotted Composer 2 against frontier models on a price-vs-quality axis to argue they’d hit a superior tradeoff. What the chart doesn’t show is that Anthropic and OpenAI trained their models from scratch. Cursor took an open-weight model that Moonshot spent hundreds of millions developing, ran RL on top, and presented the output as evidence of in-house research. That’s margin arbitrage on someone else’s R&D dressed up as a benchmark slide.

The license makes this more than an attribution oversight. Kimi K2.5 ships under a Modified MIT License with one clause designed for exactly this scenario: if your product exceeds $20 million in monthly revenue, you must prominently display “Kimi K2.5” on the user interface. Cursor’s ARR crossed $2 billion in February. That’s roughly $167 million per month, 8x the threshold. The clause covers derivative works explicitly.

Cursor is valued at $29.3 billion and raising at $50 billion. Moonshot’s last reported valuation was $4.3 billion. The company worth 12x more took the smaller company’s model and shipped it as proprietary technology to justify a valuation built on the frontier lab narrative.
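The revenue and valuation arithmetic in the two paragraphs above can be checked with a short sketch. Every figure below is taken as quoted in the post and is not independently verified here; the variable names are made up for illustration.

```python
# Figures as quoted in the post (unverified assumptions, not confirmed data).
ARR_USD = 2_000_000_000                       # Cursor's reported annual recurring revenue
LICENSE_THRESHOLD_MONTHLY_USD = 20_000_000    # monthly revenue threshold cited for the license clause
CURSOR_CURRENT_VALUATION_USD = 29_300_000_000
CURSOR_TARGET_VALUATION_USD = 50_000_000_000
MOONSHOT_VALUATION_USD = 4_300_000_000

# Annual recurring revenue spread evenly over 12 months.
monthly_revenue = ARR_USD / 12

# How many times the quoted threshold that monthly figure represents.
threshold_multiple = monthly_revenue / LICENSE_THRESHOLD_MONTHLY_USD

# Valuation ratios against Moonshot, for both Cursor figures in the post.
ratio_at_current = CURSOR_CURRENT_VALUATION_USD / MOONSHOT_VALUATION_USD
ratio_at_target = CURSOR_TARGET_VALUATION_USD / MOONSHOT_VALUATION_USD

print(f"Monthly revenue: ${monthly_revenue / 1e6:.0f}M")
print(f"Multiple of threshold: {threshold_multiple:.1f}x")
print(f"Valuation ratio (current): {ratio_at_current:.1f}x")
print(f"Valuation ratio (target): {ratio_at_target:.1f}x")
```

On these inputs the monthly figure comes out near $167 million, a bit over 8x the threshold. The "12x" comparison lines up with the $50 billion target valuation (about 11.6x), not the $29.3 billion current one (about 6.8x).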

Three Composer releases in five months. Composer 1 caught speaking Chinese. Composer 2 caught with a Kimi model ID in the API. A P0 incident this year. And a benchmark chart that compares an RL fine-tune against models requiring billions in training compute without disclosing the base was free.

The question for investors in the $50 billion round: what exactly are you buying? A VS Code fork with strong distribution, or a frontier research lab? The model ID in the API answers that.

If Moonshot doesn’t enforce this license against a company generating $2 billion annually from a derivative of their model, the attribution clause becomes decoration for every future open-weight release. Every AI lab watching this is running the same math: why open-source your model if companies with better distribution can strip attribution, call it proprietary, and raise at 12x your valuation?

kimi-k2p5-rl-0317-s515-fast is the most expensive model ID leak in the history of AI licensing.

Harveen Singh Chadha@HarveenChadha

things are about to get interesting from here on

𝗙𝗹𝗼 retweeted

@veermasrani @claudeai @AnthropicAI I have the same problem. I've been using it for months, and for the last two days I've been hitting the usage limit within an hour. It's absurd.

𝗙𝗹𝗼 retweeted

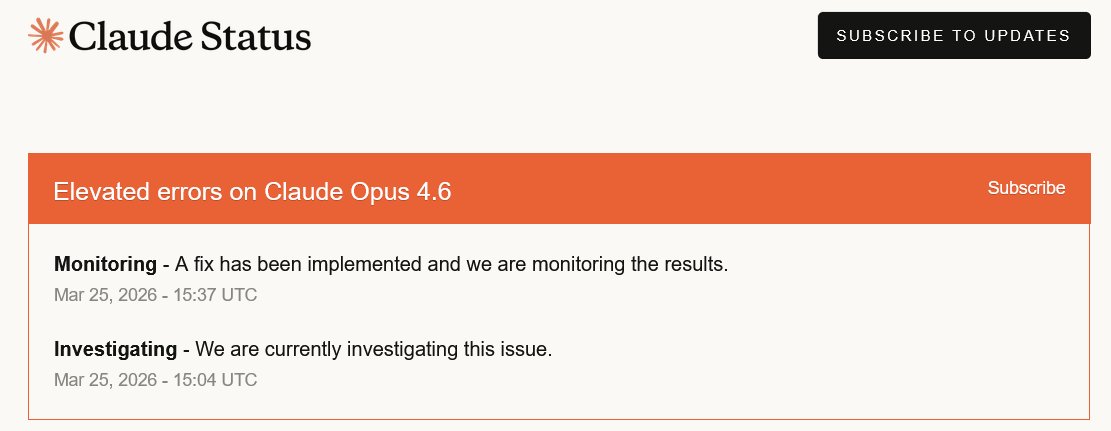

Claude Paid Plan Limits Are Suddenly Draining in Minutes

My Claude Pro usage limit has been getting exhausted within minutes, sometimes after just a few prompts.

This started during the 2x off-peak promotion, but the limit bar now fills almost instantly. Others on Pro and Max are reporting the same behavior, while Anthropic’s status page still shows all systems operational.

𝗙𝗹𝗼 retweeted

Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency. Read the blog to learn how it achieves these results: goo.gle/4bsq2qI

𝗙𝗹𝗼 retweeted

@LouisWitter You're not the coolest side of the pillow
