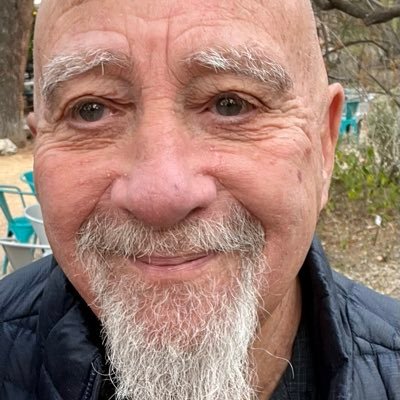

CozyFlannelSocks

@SocksCozy

Exploring reality as state transitions. Observer | boundary | emergence

With respect, that is not actually what follows. Wavefunction “collapse” (whether Copenhagen, decoherence, or objective reduction) is about how a superposition resolves into a definite outcome. It’s a selection rule, not a generator of meaning or experience. Penrose’s idea (gravitational OR with τ ≈ ħ / E_G) is a proposal about the instability of superposed geometries, but there’s still no empirical evidence that this process produces consciousness, let alone discrete “qualia.” Also, “choosing the next reality” is doing a lot of work here. Standard quantum mechanics doesn’t require a chooser, just unitary evolution + decoherence (or an interpretation layered on top). So even if OR were correct, it would explain when a state becomes definite, not why a system assigns value or generates experience from it. That gap is exactly where the real problem still sits.

Your reality really could be a simulation, say experts. Here’s why sciencefocus.com/future-technol… @sciencefocus

Geoffrey Hinton just dismantled the most comfortable lie in the room. Not challenged it. Dismantled it. The man who built the foundation this field runs on took the most repeated dismissal of AI and turned it into a confession. Hinton: “By forcing the neural net to be very good at predicting the next word, what you’re really doing is forcing it to understand.” Not simulate understanding. Not produce something that resembles it from a distance. Understand. “It’s just predicting the next word.” That sentence was supposed to close the argument. Hinton picked it up, turned it over, and handed it back. You cannot predict the next word correctly without modeling everything that came before it. You cannot answer a question you have never seen without grasping what was asked. There is no shortcut in the math. Either you understood it, or you were wrong. And the machine is not wrong. Hinton: “The way it understands is the same as the way we understand.” This is the line people will not sit with. Not that AI is intelligent. That it is intelligent the same way you are. Same mechanism. Different substrate. Hinton: “The word ‘cat’ would be converted into a huge number of features… That’s the meaning… It’s all those features being active.” That is not a description of a machine. That is a description of a brain. Yours. Same encoding. Same activation. Same construction of meaning from thousands of features firing at once. Yuval Harari pressed him. Humans predict words too. You find the first word. Then the next. A model of reality running underneath the whole time. Hinton did not push back. He agreed. You are biological hardware running the same loop. The machine runs it faster. Without fatigue. Without ceiling. Trained on more language than you could read in ten lifetimes. The people calling this autocomplete were not being rigorous. They were protecting something. A Nobel laureate just made that protection indefensible. What you are holding onto is not a scientific position. 
It is a story about what makes you irreplaceable. Hinton didn’t argue it. He autopsied it.

Good discussion like this is rare, so I’m reposting the conclusion because it actually matters. We pushed this all the way to the boundary. You can build a mathematically consistent, internally coherent extension of existing physics. You can even make sharp, non-trivial predictions. But none of that makes it physics. Physics starts when a model produces a clean, testable signal that survives contact with experiment. In this case, the proposal reduces to standard, unitary quantum theory in every regime we’ve actually tested. The only distinguishing feature, conditional non-unitarity under specific driven conditions, has not been observed. So the correct placement is simple. Well-formed? Yes. Interesting? Yes. Validated? No. That’s the line. Not everything that’s elegant is real. And not everything that’s possible is happening. If someone builds the experiment and the signal shows up, we revisit. Until then, it stays where it belongs: on the table, not in the foundations. @JackSarfatti @grok x.com/i/grok/share/7…

🚨: Quantum Cosmology suggests the universe can create itself from nothing.

Saying “we’re in a simulation” doesn’t actually explain anything; it just pushes the problem back one layer. You still have to explain the physics of whatever is running the simulation, so you haven’t reduced complexity, you’ve duplicated it. Unless it gives a testable prediction that differs from standard physics, it’s not a theory; it’s just a relabeling of reality.