Spencer Moore

336 posts

@SponceyM

Scientist @herasight

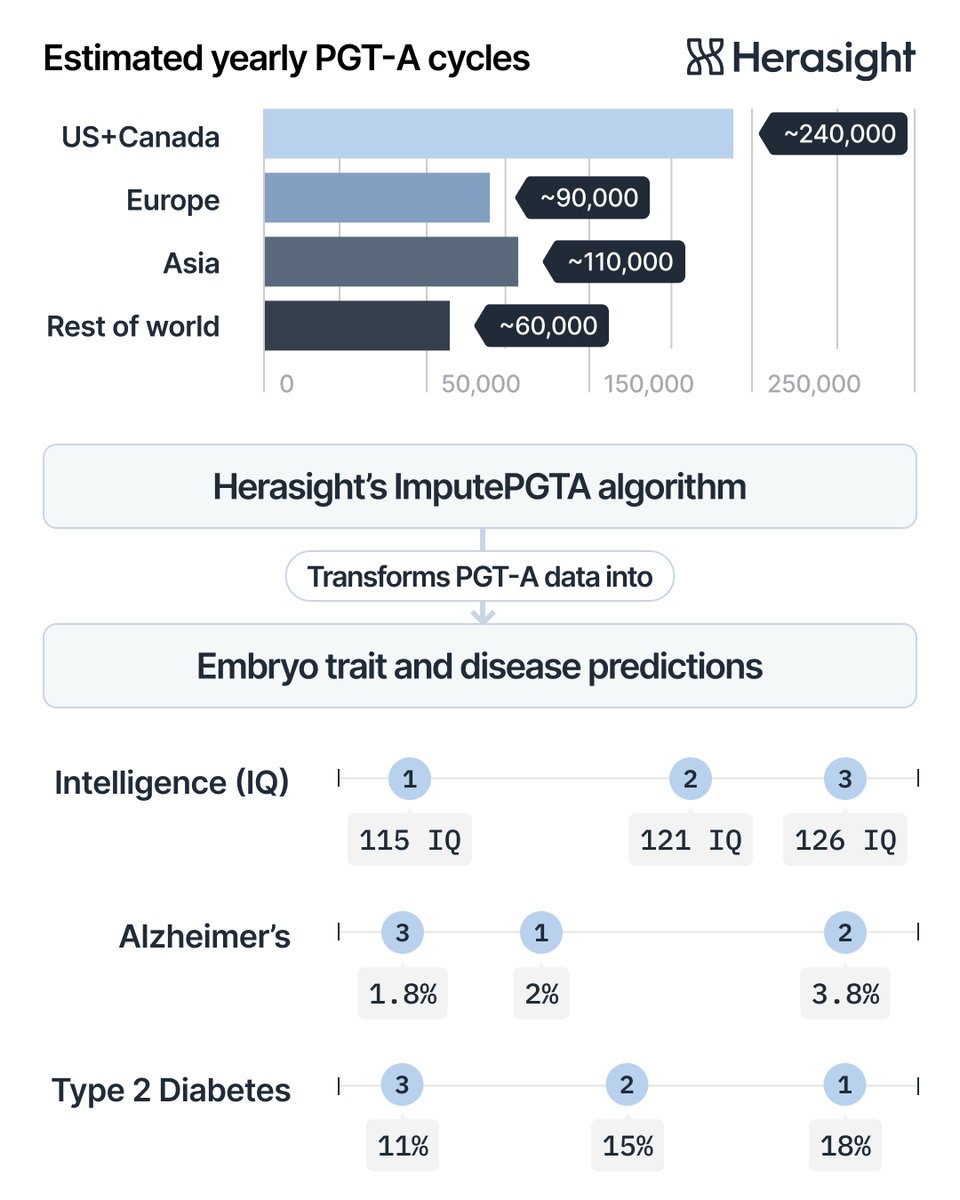

This week, the Herasight team presented three papers at the ACMG Annual Meeting in Baltimore. The work addresses three important contributions to the science of PGT-P:
1. Validation of polygenic predictors
2. Type 1 diabetes risk modeling
3. Embryo genome imputation
🧵

More folks in the set of "lucky enough to have the brain and personality to do whatever they want" should apply that to high leverage things they enjoy, rather than "following the Type A path". Wild to meet unhappy elite academics - just go do something else, gang! You can! 3/3

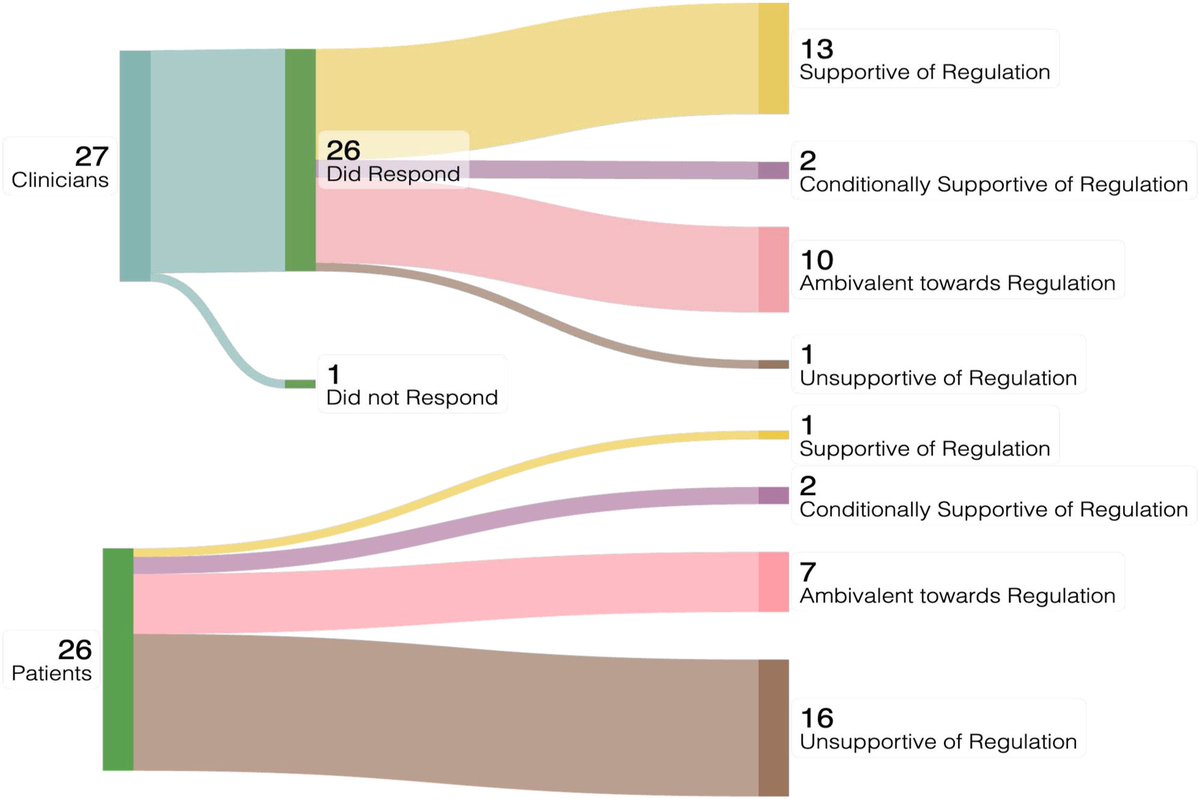

Governance of polygenic embryo screening: A qualitative study on the perspectives of clinicians and patients Full text 👇 fertstert.org/article/S0015-…

Today we reveal CogPGT, the world’s most powerful genetic predictor of IQ. We achieve a correlation with IQ of 0.51 (0.45 within-family). Herasight customers can boost the expected IQ of their children by up to 9 points by selecting the embryo with the highest CogPGT score. 🧵

GPT-5.2 derived a new result in theoretical physics. We’re releasing the result in a preprint with researchers from @the_IAS, @VanderbiltU, @Cambridge_Uni, and @Harvard. It shows that a gluon interaction many physicists expected would not occur can arise under specific conditions. openai.com/index/new-resu…

Codex 5.3 and Opus 4.6 in their respective coding agent harnesses have meaningfully updated my thinking about 'continual learning.' I now believe this capability deficit is more tractable than I realized with in-context learning.
One way 4.6 and 5.3 alike seem to have improved is that they are picking up progressively more salient facts by consulting earlier codebases on my machine. In short, both models notice more than they used to about their 'computational environment' i.e. my computer.
In part this is because the computational environment has itself become richer *because of coding agents*. Six months ago there were perhaps a dozen codebases on my machine; today there are hundreds, many of them involving the sophisticated orchestration of complex software systems. So there is more interesting stuff for agents to notice than there would have been even just a few months ago.
Of course, another reason models notice more is that they are getting smarter. When I ask 4.6 in particular to do some complex project, it will look for times when I (/my coding agents) have tackled similar problems, made similar architectural/infrastructural decisions, or even drawn on the same datasets. It will say things like, "I noticed, on this unrelated project from two months ago, that you ran into a problem here because of [e.g.] a non-obvious data preprocessing step required for using this dataset with Tool Y. Since our plan is to use Tool Y again for this project, I'll keep this in mind when I build the data processing pipeline."
This sort of thing would occasionally happen with 4.5 and 5.2, especially if I told them to consult related projects, but they did not usually do this organically. Even when the earlier agents did this, they rarely extracted as salient an insight.
Some of the insights I've seen 4.6 and 5.3 extract are just about my preferences and the idiosyncrasies of my computing environment. But others are somewhat more like "common sets of problems in the interaction of the tools I (and my models) usually prefer to use for solving certain kinds of problems." This is the kind of insight a software engineer might learn as they perform their duties over a period of days, weeks, and months. Thus I struggle to see how it is not a kind of on-the-job learning, happening from entirely within the 'current paradigm' of AI. No architectural tweaks, no 'breakthrough' in 'continual learning' required.
This seems like a positive feedback loop from agent adoption: more people using coding agents clearly means (a) more examples of in-the-wild software engineering for labs to use for post training and (b) more examples of the user's prior coding agent projects as a form of in-context learning about the user's computing environment, preferences, etc. There are many directions you can imagine labs taking this positive feedback loop. Perhaps this helps to explain why some lab employees have claimed that they expect continual learning to be largely solved by the end of this year.
I am not so sure how satisfyingly in-context learning/memory will actually solve continual learning (more sample-efficient learning algorithms seem straightforwardly better), but I am prepared to believe this will solve a lot. I've already seen performance improvements from relatively modest examples of this enhanced in-context learning, which again I doubt is the result of some architectural tweak but is simply the product of the models getting smarter *and* diffusion meaning that the models have richer data resources to mine, both in training and at inference time.
Overall, 4.6 and 5.3 are both astoundingly impressive models. You really can ask them to help you with some crazy ambitious things. The big bottleneck, I suspect, is users lacking the curiosity, ambition, and knowledge to ask the right questions.