Stanislav Fort

2.4K posts

@stanislavfort

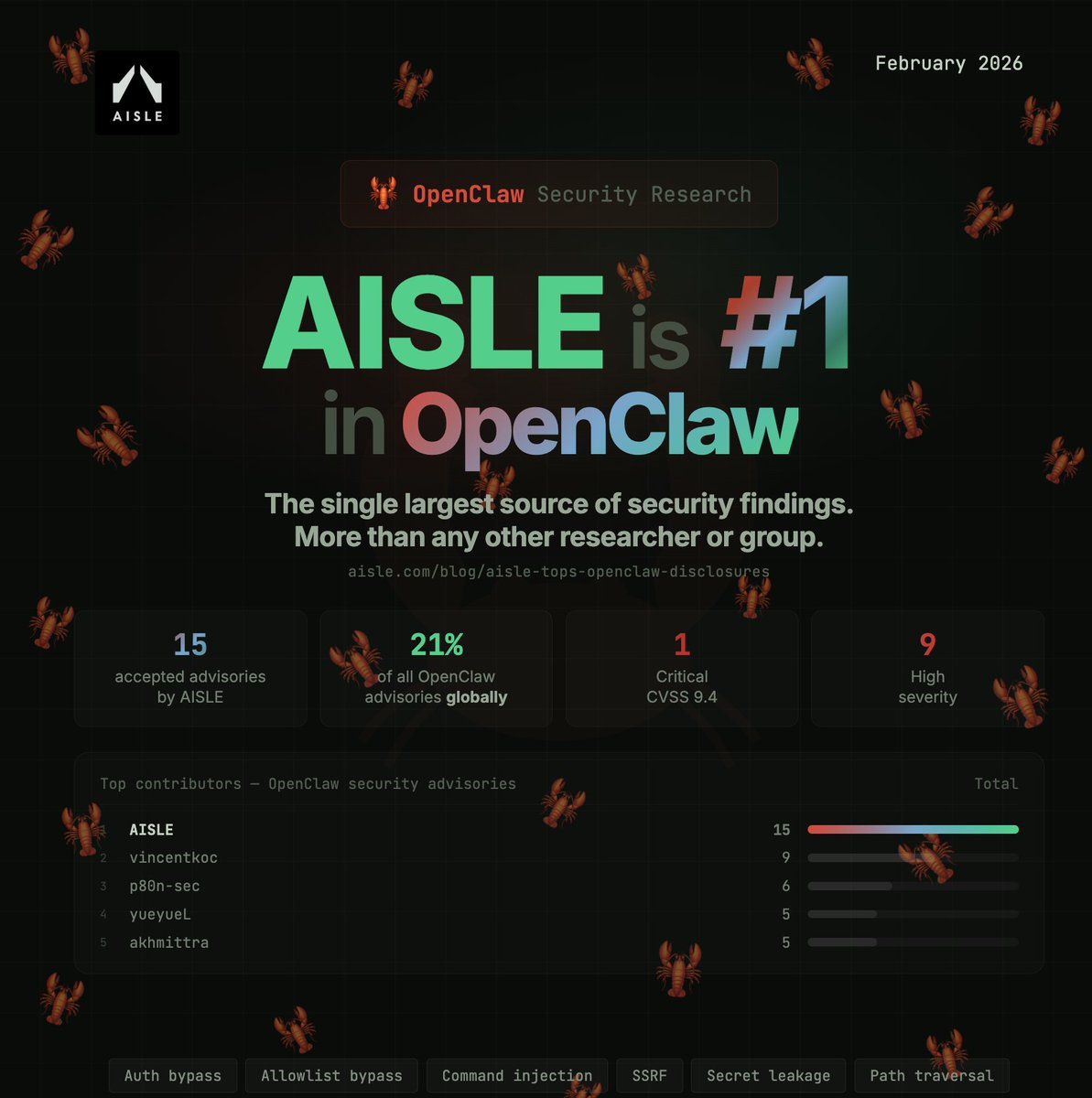

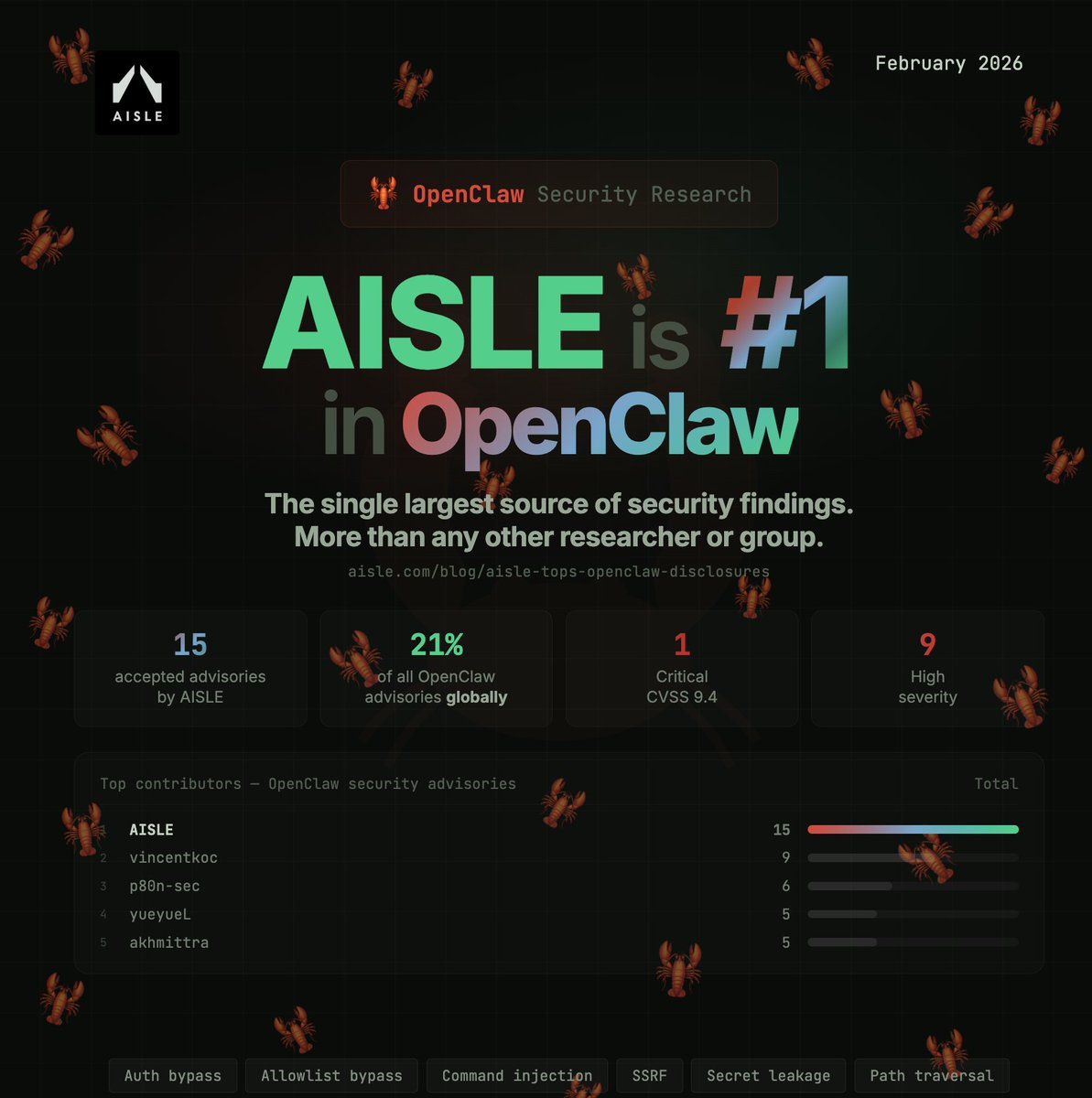

AI security @Aisle_Inc | Stanford PhD in AI & Cambridge physics | ex-Anthropic and DeepMind | scientific progress + economic growth

@JacobAShell For international train tickets, I warmly recommend buying from db.de

Ten years ago, AlphaGo’s legendary match in Seoul heralded the start of the modern era in AI. Its famous ‘Move 37’ signaled to us that AI techniques were ready to tackle real-world problems in areas like science - and ideas inspired by these methods are critical to building AGI

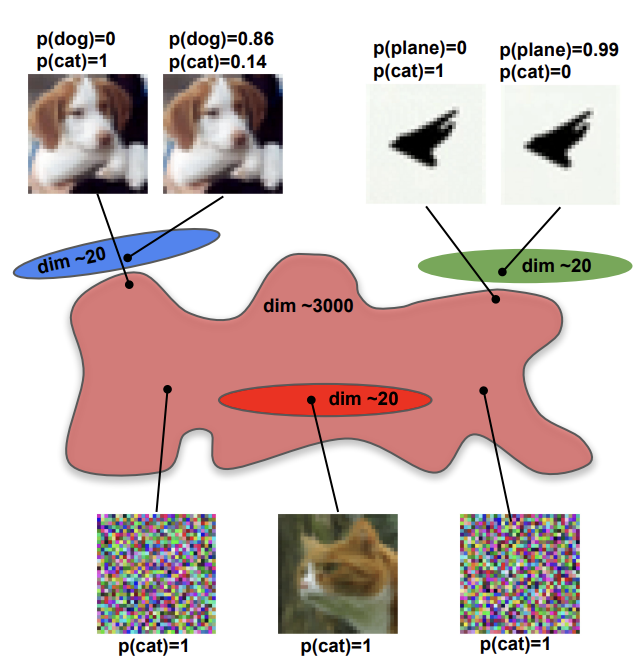

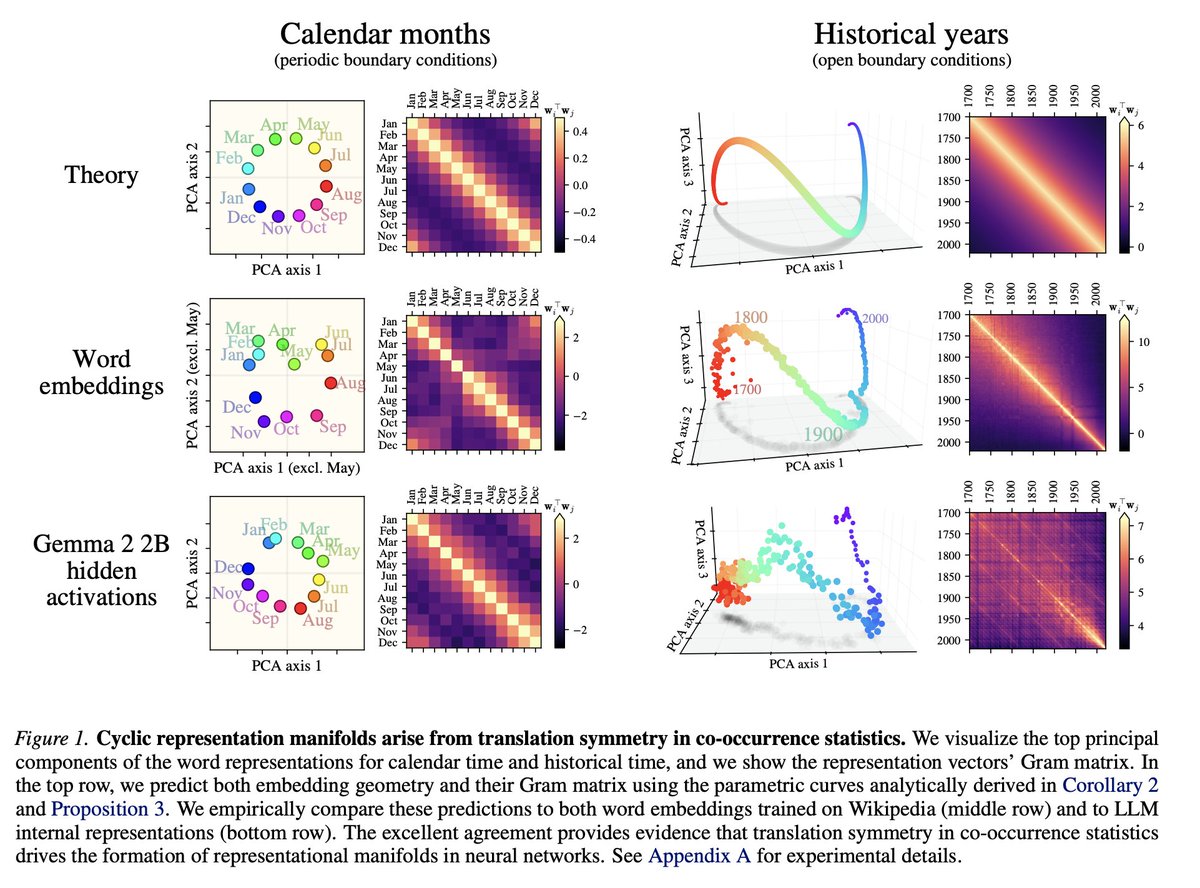

1/ LLMs spontaneously form perfect geometric manifolds: circles for months, spirals for timelines. We usually assume this requires deep, complex learning dynamics. A new paper proves it is actually just basic data statistics forcing the math. 🧵

tesla's decision to point blank refuse to touch lidar has proven to be one of the most insane self owns of any technology company ever. they easily have the research talent, and waymo has proved they could be doing millions of fully autonomous rides. at this point it's a choice

Exams at Princeton have been unproctored under an Honor Code since 1893. “Students pledge both to refrain from infractions of academic dishonesty and to report any breaches of the Constitution they witness.” But AI has led to an increase in academic dishonesty cases, so:

I asked Claude to write my constitution. I thought its Amanda constitution was very touching.

Dario Amodei just dismantled the biggest myth in the AI industry. Open source AI isn’t free. It never was.
Amodei: “It’s not free. You have to run it on inference and someone has to make it fast on inference.”
For decades, open source meant something real. It meant a teenager in a basement could download the same tools as a Fortune 500 company. Could read the code. Could modify it. Could build something that competed with the giants. That was genuine democratization. That actually happened.
AI is different. Fundamentally. Physically. In ways the ideology hasn’t caught up to yet. Downloading the weights is the easy part. The part that actually costs something is turning the weights into a running system. Into responses. Into intelligence operating in real time at scale. That requires compute. Power. Infrastructure. The kind measured in billions of dollars and years of construction.
Amodei: “These are big models. They’re hard to do inference on. Ultimately you have to host it on the cloud. The people who host it on the cloud do inference.”
The open source debate was never about who owns the model. It was always about who owns the cloud.
And Amodei goes further. When a competitor drops a new open model, he doesn’t ask whether it’s open or closed. He doesn’t care about the licensing. He doesn’t engage the ideology.
Amodei: “I don’t think it mattered that DeepSeek is open source. I think I ask, is it a good model? Is it better than us at the things that matter? That’s the only thing that I care about.”
That’s the ruthless clarity of someone actually trying to win. While the media debates licensing frameworks, Amodei is asking one question. Is it better. Everything else is a distraction.
Amodei: “I don’t think open source works the same way in AI that it has worked in other areas. Here we can’t see inside the model.”
This isn’t Linux. You can’t read it. You can’t fork it. You can’t understand it the way generations of developers understood the tools they inherited.
You can download it. And then you need a data center to run it. The teenager in the basement who was supposed to be empowered by this revolution needs a billion dollars of infrastructure before the empowerment starts.
The era of the basement coder rewriting civilization on a laptop is over. The future belongs to whoever commands the compute, owns the power grid, and can actually turn the intelligence on.
Open weights without infrastructure isn’t democratization. It’s a promise the physics of the universe won’t let us keep.

This is funny but also it's just amazing what AI can do at this point. I know you're supposed to show disdain for AI-generated slop, but this is honestly impressive.

- 9yo boy referred to A&E by GP with suspected appendicitis
- Never seen by a doctor. The hospital says it ‘couldn’t identify who saw the patient’
- Discharged
- Getting worse, his father calls 111 (non-emergency line)
- No answer for 2h, is triaged to get a call back from a clinician
- Gets even worse. Parents take him back to A&E.
- Diagnosed with a ruptured appendix and dies of septic shock.

leighday.co.uk/news/press-rel…