strongwall.ai

@StrongwallAi

Private AI. No Surveillance. Full Control.

The CEO of Krafton (creator of PUBG) asked ChatGPT to create a "corporate takeover strategy" to prevent a company they acquired from hitting a revenue target within a certain time window (which would trigger an additional payout). ChatGPT (against his lawyer's advice) suggested locking down the acquired company's Steam account to prevent them from publishing Subnautica 2 in the time window, and the CEO of Krafton followed that suggestion. ChatGPT's advice did not hold up at trial and the judge was not happy. The opinion is a wild read and includes several direct quotes from the Krafton CEO's ChatGPT conversation. I feel like it's gonna take a few more high-profile examples like this until executives start realizing that conversations with ChatGPT are not privileged and you probably shouldn't describe your questionably legal schemes to it in detail!

I spoke to Anthropic’s AI agent Claude about AI collecting massive amounts of personal data and how that information is being used to violate our privacy rights. What an AI agent says about the dangers of AI is shocking and should wake us up.

BREAKING: Iran announces that facilities associated with major US technology companies could become targets next. They specifically note that Amazon, Microsoft, Nvidia, IBM, Oracle, and Palantir are all potential targets across Israel, Dubai, and Abu Dhabi.

A New York bill would ban AI from answering questions related to several licensed professions like medicine, law, dentistry, nursing, psychology, social work, engineering, and more. The companies would be liable if the chatbots give “substantive responses” in these areas.

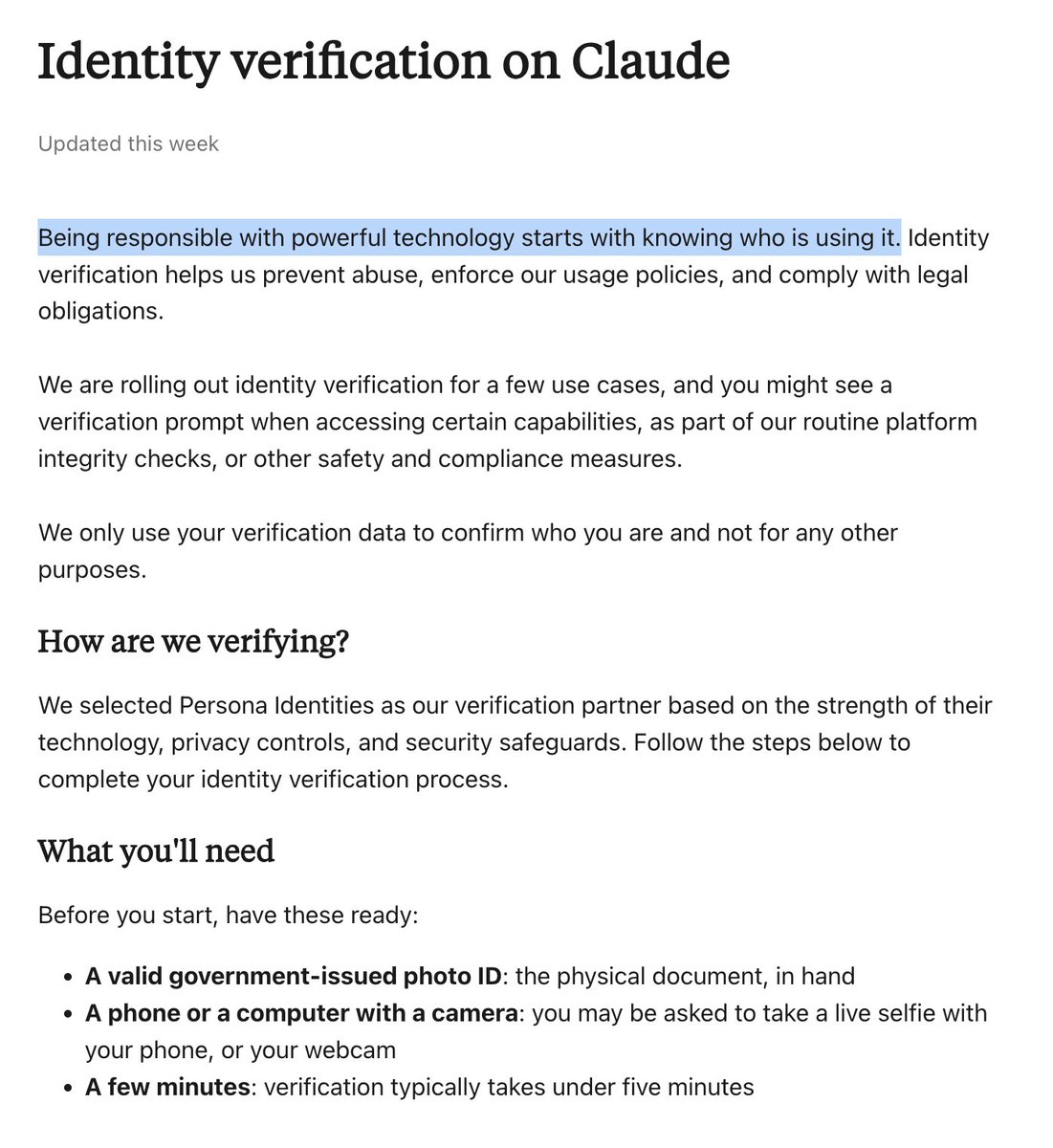

Stanford researchers checked 6 major AI companies and found they all use your chats to train models. Users unknowingly hand over highly sensitive medical or personal details that become permanent parts of future AI brains. The problem with standard privacy rules is that they scatter important details across multiple files so people cannot find them. The researchers at Stanford HAI examined 28 privacy documents across these six companies: not just the main privacy policy, but every linked subpolicy, FAQ, and guidance page accessible from the chat interfaces. They evaluated all of them against the California Consumer Privacy Act, the most comprehensive privacy law in the United States. The results are worse than you think. Every single company collects your chat data and feeds it back into model training by default. Some retain your conversations indefinitely. There is no expiration. No auto-delete. Your data just sits there, forever, feeding future versions of the model. Some of these companies let human employees read your chat transcripts as part of the training process. Not anonymized summaries. Your actual conversations. But here's where it gets genuinely dangerous. In many cases, these chats get merged with everything else those companies already know about you. Your search history. Your purchase data. Your social media activity. Your uploaded files. The researchers describe a realistic scenario that should make you pause: You ask an AI chatbot for heart-healthy dinner recipes. The model infers you may have a cardiovascular condition. That classification flows through the company's broader ecosystem. You start seeing ads for medications. The information reaches insurance databases. The effects compound over time. You shared a dinner question. The system built a health profile. Paper link: arxiv.org/abs/2509.05382. Paper title: "User Privacy and LLMs: An Analysis of Frontier Developers' Privacy Policies"

A recent decision complicates the picture on AI privilege waiver. In Warner v. Gilbarco (E.D. Mich., Feb. 10, 2026), defendants tried to force a pro se plaintiff to hand over everything related to her use of AI tools in the litigation. Judge Patti shut it down entirely. The court's reasoning has two layers. First, relevance. The court held the AI materials were "not relevant, or, even if marginally relevant, not proportional" under Rule 26(b)(1), noting that this is a civil case, and not a criminal one (like Heppner), so different rules apply. Defendants had zero evidence plaintiff uploaded anything confidential to an AI platform. The court told defendants, bluntly, that their "preoccupation with Plaintiff's use of AI needs to abate." Second, on work product, Defendants argued that sharing prompts and outputs with ChatGPT waived work product protection. Judge Patti said no. The reasoning: work product waiver requires disclosure to an adversary, not just any third party. And ChatGPT "and other generative AI programs are tools, not persons, even if they may have administrators somewhere in the background." The court agreed with plaintiff that accepting defendants' theory "would nullify work-product protection in nearly every modern drafting environment, a result no court has endorsed." So does this contradict Judge Rakoff's Heppner ruling? Not necessarily. Attorney-client privilege and work product doctrine have fundamentally different waiver standards. Privilege can be destroyed by voluntary disclosure to any third party. Work product requires disclosure to an adversary or in a way likely to reach one. AI platforms aren't adversaries. This means it's entirely possible to lose privilege on your AI conversations while retaining work product protection over the same materials. Different doctrines, different triggers, different facts, different outcomes. 
I don't think it's realistic for everyone to understand exactly which protection applies, how each can be waived, and how the specific AI platform's terms and privacy policies affect the analysis. People should migrate to defensible positions, no matter the circumstance, and the enterprise agreement point I made after Heppner still stands. We're watching this area of law develop in real time, and the courts aren't going to agree with each other for a while. Buckle up. storage.courtlistener.com/recap/gov.usco…

Nobody, and I repeat, absolutely nobody should ever upload their medical information into an AI platform. I am telling you this as a former intelligence officer.

Your AI conversations aren't privileged. Yesterday, Judge Jed Rakoff ruled that 31 documents a defendant generated using an AI tool and later shared with his defense attorneys are not protected by attorney-client privilege or work product doctrine. The logic is simple: an AI tool is not an attorney. It has no law license, owes no duty of loyalty, and its terms of service explicitly disclaim any attorney-client relationship. Sharing case details with an AI platform is legally no different from talking through your legal situation with a friend (which is not privileged). You can't fix it after the fact, either. Sending unprivileged documents to your lawyer doesn't retroactively make them privileged. That's been settled law for years. It just hadn't been tested with AI until now. And here's what really hurt the defendant: the privacy policy for the AI tool he used (Claude), in effect at the time, expressly permitted disclosure of user prompts and outputs to governmental authorities. There was no reasonable expectation of confidentiality. The core problem is the gap between how people experience AI and what's actually happening. The conversational interface feels private. It feels like talking to an advisor. But unless you negotiate for an enterprise agreement that says otherwise, you're inputting information into a third-party commercial platform that retains your data and reserves broad rights to disclose it. Judge Rakoff also flagged an interesting wrinkle: the defendant reportedly fed information from his attorneys into the AI tool. If prosecutors try to use these documents at trial, defense counsel could become a fact witness, potentially forcing a mistrial. Winning on privilege doesn't make the evidentiary picture simple. For anyone advising clients or managing legal risk, this is a wake-up call. AI tools are not a safe space for clients to process their counsel's advice or to regurgitate their legal strategy. Every prompt is a potential disclosure. 
Every output is a potentially discoverable document. So what do we do about it? First, attorneys need to be proactive. Advise clients explicitly that anything they put into an AI tool may be discoverable and is almost certainly not privileged. Put it in your engagement letters. Make it part of onboarding. Don't assume clients understand this, because most don't. Second, if clients want to use AI to help process legal issues (and they clearly will, increasingly), then let's give them a way to do it inside the privilege. Collaborative AI workspaces shared between attorney and client, where the AI interaction happens under counsel's direction and within the attorney-client relationship, can change the analysis entirely. I'm excited to be planning this kind of approach, and I think it's where the industry needs to head. storage.courtlistener.com/recap/gov.usco…

To be clear, I do support kids' safety legislation for AI. I just want to register the following expectation: There are some AI kids' safety advocates who, if the world were the same except that all AI systems had PG-13 guardrails by default and parental controls for minors, would not be meaningfully happier about the state of AI policy in the US, and that is because we’d still have a thriving AI industry driving a technological revolution, and it is that revolution that they principally wish to stop, rather than just AI-driven harms to children. That’s fine! It’s fine to want to stop the revolution. Certainly I disagree and also think it’s a futile effort. But I don’t mean to delegitimize this belief. Instead I am observing it, in a primarily non-normative way, because in politics one must be clear about the contours of one’s coalition.