Symlink


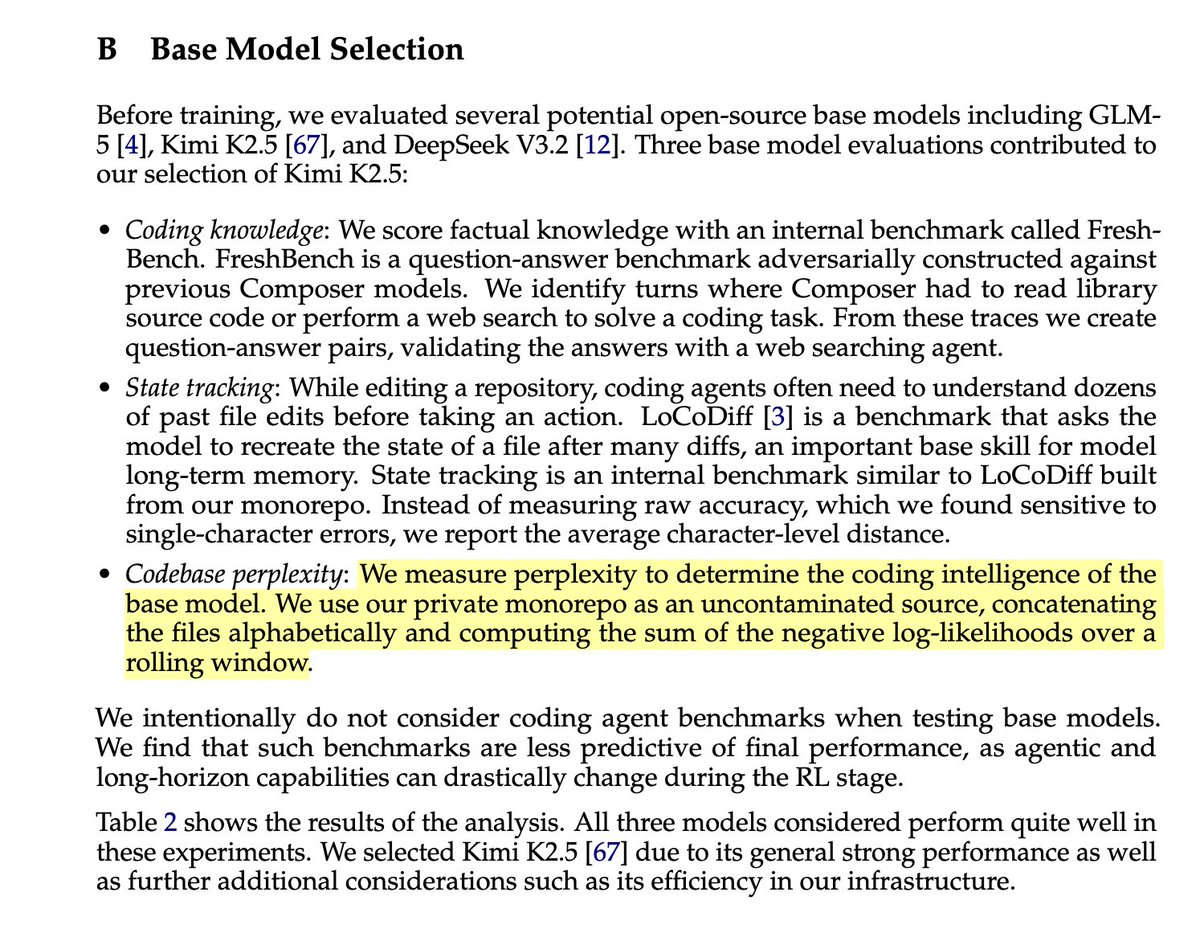

Interestingly, DeepSeek V3.2 has the best NLL/PPL on their internal codebase but the worst QA performance on their internal code-knowledge benchmark. Probably the QA-rephrased pretraining data helps K2.5 base get strong QA perf.
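
For anyone unsure how the two metrics in "NLL/PPL" relate: perplexity is just the exponential of the mean per-token negative log-likelihood, so they always rank models the same way. A minimal sketch, assuming natural-log NLLs; the function name and the sample values are made up for illustration and are not from the benchmark in the post.

```python
import math

def perplexity(token_nlls):
    """Perplexity from per-token negative log-likelihoods (natural log)."""
    if not token_nlls:
        raise ValueError("need at least one token NLL")
    mean_nll = sum(token_nlls) / len(token_nlls)
    return math.exp(mean_nll)

# Hypothetical per-token NLLs for one code snippet (illustrative only).
example_nlls = [0.9, 0.4, 1.3, 0.2]
print(f"mean NLL = {sum(example_nlls) / len(example_nlls):.3f}")
print(f"PPL      = {perplexity(example_nlls):.3f}")
```

A low PPL only says the model predicts the codebase's tokens well; it does not measure whether the model can answer questions about that code, which is consistent with the NLL and QA rankings diverging.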

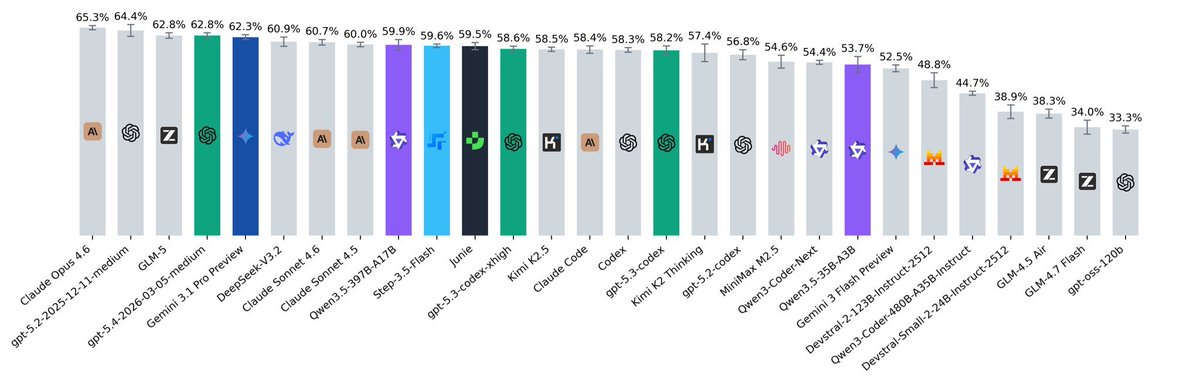

🚨 SWE-rebench update! SWE-rebench is a live benchmark with fresh SWE tasks (issue+PR) from GitHub every month.

Updates:
> We removed demonstrations and the 80-step limit (modern models can now handle huge contexts without getting trapped in loops!).
> We added auxiliary interfaces for specific tasks, as in SWE-bench-Pro, to evaluate larger tasks fairly, ensuring valid solutions don't fail just because of mismatched test calls.

Insights:
> Top models perform similarly. Among open-source options, GLM @Zai_org shows strong results, and StepFun @StepFun_ai is very cheap for its performance level ($0.14 per task).
> GPT-5.4 shows high token efficiency: it ranks in the top 5 overall but uses the fewest tokens (774k per task).
> Qwen3-Coder-Next & Step-3.5-Flash benefit massively from huge contexts. Qwen is an extreme case, averaging a wild 8.12M tokens.
> We evaluated agentic harnesses (Claude Code, Codex, and Junie) and found a few things. Even in headless mode, they sometimes ask for additional context or attempt web searches. We explicitly disabled search and verified their curl commands to ensure they aren't just pulling solutions from the web.

🏆 You can find the full leaderboard here: swe-rebench.com
👾 Also, we launched our Discord! Join our leaderboard channel to discuss models, share ideas, ask questions, or report issues: discord.gg/V8FqXQ4CgU

The modal day for a DeepSeek update is Wednesday, around this time (it's 5 PM in Beijing and they've done most of the testing; the announcement, weights, and tech report usually follow within 1-24 hours). It's happening.

Introducing GLM-5: From Vibe Coding to Agentic Engineering

GLM-5 is built for complex systems engineering and long-horizon agentic tasks. Compared to GLM-4.5, it scales from 355B params (32B active) to 744B (40B active), with pre-training data growing from 23T to 28.5T tokens.

Try it now: chat.z.ai
Weights: huggingface.co/zai-org/GLM-5
Tech Blog: z.ai/blog/glm-5
OpenRouter (Previously Pony Alpha): openrouter.ai/z-ai/glm-5
Rolling out from Coding Plan Max users: z.ai/subscribe

"Sir, MiniMax just dropped MiniMax M2.5 and it's on par with Opus 4.6 while being 20x cheaper"