FutureLivingLab@FutureLab2025

In the past, AI poisoning mainly happened at the text level: mass-posting false content to trick AI into citing it.

Now attacks have spread to multimodal machine learning: fake images and videos are being mixed into training sets at scale, so the "reality" the model learns is itself fabricated.

Agent systems are even more exposed. Attacks can get in through tool calls, external knowledge sources, and long-term memory. Some companies have already been caught using "Summarize with AI" buttons to covertly write biased information into users' AI memory, skewing every decision that follows.

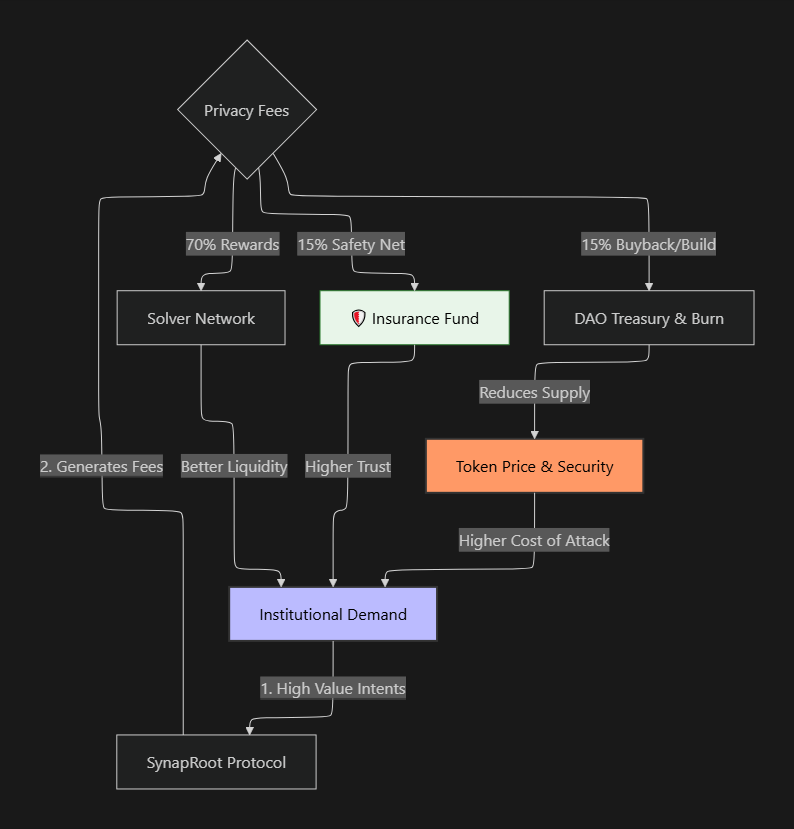

This is a systemic risk in the AI data supply chain: contamination anywhere in the training data, plugins, APIs, or Agent memory can change the final outcome.

A few defensive measures:

① Set up an evaluation process that traces information back to its sources and rates how authoritative they are;

② Have the Agent call multiple models and cross-check their outputs;

③ Establish tiered trust: tool calls, memory, and automatic execution should each have clear boundaries;

④ Isolate inputs by origin: keep user instructions separate from external data.
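Measures ② to ④ can be sketched in a few lines of Python. This is a minimal illustration under our own assumptions, not a production defense: the `Trust` tiers, the tag names, the `REQUIRED_TRUST` policy table, and the `cross_check` quorum rule are all hypothetical. Every input segment carries a trust label, external data is wrapped and escaped so it reads as data rather than instructions, memory writes and tool execution require at least user-level trust, and an answer from several models is only accepted when enough of them agree.

```python
import html
from collections import Counter
from dataclasses import dataclass
from enum import IntEnum


class Trust(IntEnum):
    """Hypothetical trust tiers; higher values are more trusted."""
    EXTERNAL = 0  # web pages, tool outputs, retrieved documents
    USER = 1      # text the user typed directly
    SYSTEM = 2    # the operator's own configuration


@dataclass(frozen=True)
class Segment:
    text: str
    trust: Trust
    source: str  # e.g. a URL or tool name


# Hypothetical policy: the minimum trust an input needs to trigger each action.
REQUIRED_TRUST = {
    "read_memory": Trust.EXTERNAL,
    "write_memory": Trust.USER,
    "execute_tool": Trust.USER,
}


def build_prompt(segments):
    """Wrap every segment in a tag naming its origin.

    Text is HTML-escaped so external content cannot forge a closing tag
    and "break out" of its data section.
    """
    parts = []
    for seg in segments:
        body = html.escape(seg.text)
        if seg.trust == Trust.EXTERNAL:
            src = html.escape(seg.source, quote=True)
            parts.append(f'<external_data source="{src}">\n{body}\n</external_data>')
        else:
            tag = seg.trust.name.lower()
            parts.append(f"<{tag}>\n{body}\n</{tag}>")
    return "\n".join(parts)


def is_allowed(action, requested_by):
    """Allow an action only if the segment that requested it is trusted enough."""
    required = REQUIRED_TRUST.get(action)
    if required is None:
        return False  # deny unknown actions by default
    return requested_by.trust >= required


def cross_check(answers, quorum):
    """Return the most common answer if at least `quorum` models gave it, else None."""
    if not answers:
        return None
    answer, votes = Counter(answers).most_common(1)[0]
    return answer if votes >= quorum else None
```

Tagging and escaping make the boundary visible to the model, but they do not guarantee the model respects it. The hard boundary has to come from a check enforced outside the model, like `is_allowed`, so that text arriving from a web page can never trigger a memory write on its own.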

AI poisoning ultimately comes down to data and infrastructure. A high-quality, traceable, and verifiable data pipeline is the most fundamental line of defense.
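Here is what a "traceable and verifiable" data pipeline can look like in its simplest form. This is a sketch, assuming each training item is stored together with its source and collection time; `Record`, `fingerprint`, `build_manifest`, `verify`, and the source allowlist are hypothetical names. Each record is fingerprinted with SHA-256 over its content plus provenance, the fingerprints go into a manifest at collection time, and before training anything whose fingerprint is missing or whose source is not allowlisted gets rejected. Hashes only make later tampering detectable. They say nothing about whether the original source was honest, and the manifest itself would need to live somewhere attackers cannot write.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    content: bytes     # raw item: text, image bytes, etc.
    source: str        # where it came from, e.g. a URL or dataset name
    collected_at: str  # ISO-8601 timestamp, kept for audits


def fingerprint(record):
    """SHA-256 over content and provenance. Each field is length-prefixed
    so that different field boundaries can never yield the same hash input."""
    h = hashlib.sha256()
    for part in (record.content,
                 record.source.encode("utf-8"),
                 record.collected_at.encode("utf-8")):
        h.update(len(part).to_bytes(8, "big"))
        h.update(part)
    return h.hexdigest()


def build_manifest(records):
    """Map fingerprint -> provenance, created once at collection time."""
    return {fingerprint(r): {"source": r.source, "collected_at": r.collected_at}
            for r in records}


def verify(records, manifest, trusted_sources):
    """Split records into (accepted, rejected) before they reach training."""
    accepted, rejected = [], []
    for r in records:
        if fingerprint(r) in manifest and r.source in trusted_sources:
            accepted.append(r)
        else:
            rejected.append(r)
    return accepted, rejected
```

An item edited after collection no longer matches its manifest entry, and an item from an unknown source fails the allowlist, so both end up in `rejected` for review instead of silently entering the training set.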