Tianqing Fang

70 posts

@TFang229

Researcher @TencentGlobal AI Lab | PhD @HKUST (@HKUSTKnowComp) | Former intern @epfl_en, @CSatUSC, @NVIDIAAI

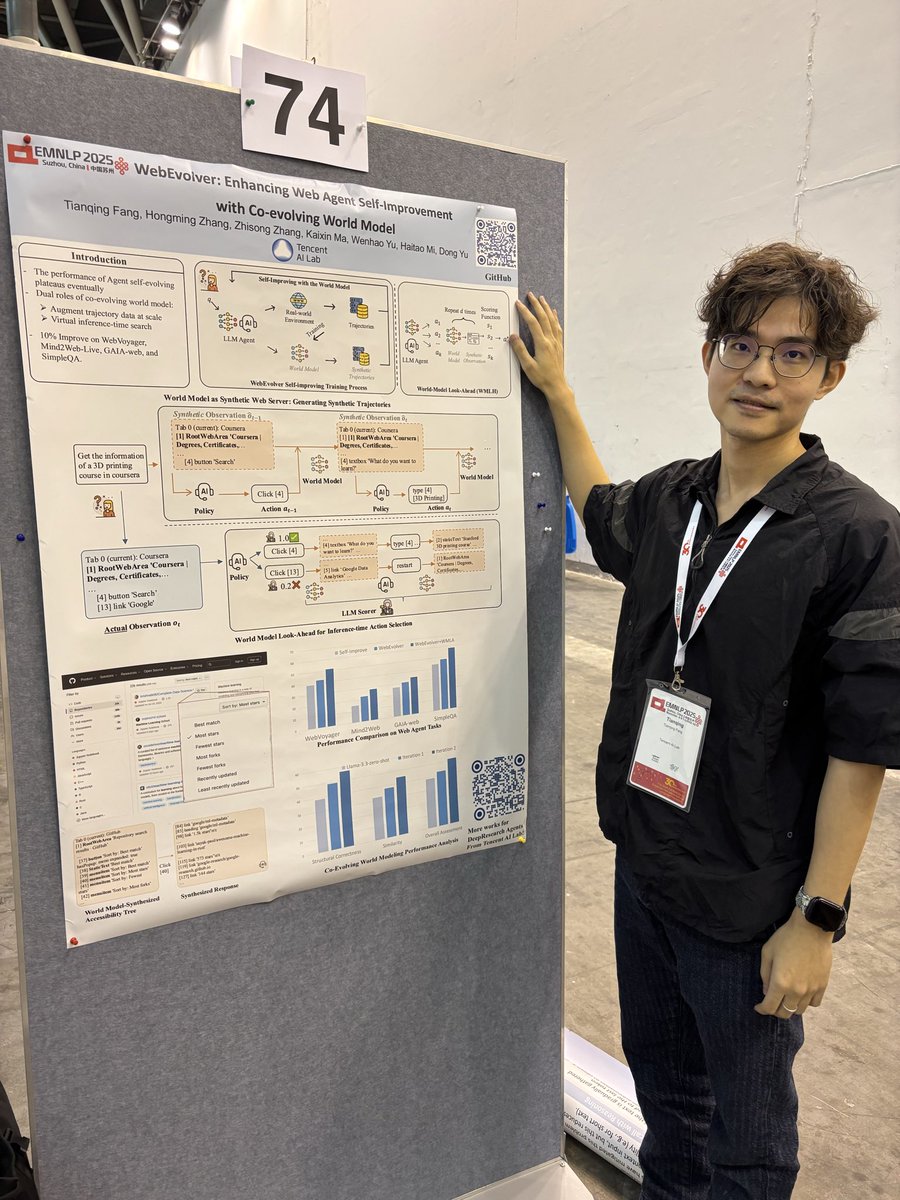

🚀 Check out our paper from Tencent AI Lab: WebEvolver: Enhancing Web Agent Self-Improvement with Coevolving World Model! We present a world model-driven framework for self-improving web agents that addresses critical challenges in self-training, such as limited exploration and performance plateaus.

🔍 Key Innovations:
- Co-Evolving World Model: The world model is implemented as an LLM that predicts the next webpage state (observation) given the current state and a planned action. In addition to fine-tuning the agent's policy model on self-generated trajectories, the same data is repurposed to train the world model.
- World Model as Web Server:
  - Training Phase: sample pseudo trajectories by replacing the real web server with the world model.
  - Inference Phase: simulate the outcome of candidate actions with 1-2 step look-ahead planning to help select better actions.

📊 Results on Real-World Tasks (Mind2Web-Live & WebVoyager):
✅ ~10% higher success rate vs. pure self-training.
✅ Significantly fewer environment interactions: efficient yet powerful!

arxiv: arxiv.org/pdf/2504.21024
code: github.com/Tencent/SelfEv…
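To make the inference-phase idea concrete, here is a minimal sketch of look-ahead action selection with a world model. It is not the WebEvolver implementation: the names `lookahead_select`, `world_model`, `scorer`, and `propose` are hypothetical, and the toy lookup table stands in for the LLM that would predict the next page.

```python
from typing import Callable, Optional, Sequence

# Hypothetical interfaces (assumptions, not taken from the paper's code):
WorldModel = Callable[[str, str], str]      # (observation, action) -> predicted next observation
Scorer = Callable[[str], float]             # predicted observation -> estimated task progress
Proposer = Callable[[str], Sequence[str]]   # observation -> candidate follow-up actions


def lookahead_select(
    obs: str,
    candidates: Sequence[str],
    world_model: WorldModel,
    scorer: Scorer,
    propose: Optional[Proposer] = None,
    depth: int = 1,
) -> str:
    """Return the candidate action whose simulated outcome scores highest."""

    def value(o: str, action: str, d: int) -> float:
        nxt = world_model(o, action)  # simulated page; no real web request is made
        if d <= 1 or propose is None:
            return scorer(nxt)
        follow_ups = propose(nxt)
        if not follow_ups:
            return scorer(nxt)
        # Look one step further and keep the best reachable score.
        return max(value(nxt, a, d - 1) for a in follow_ups)

    return max(candidates, key=lambda a: value(obs, a, depth))


# Toy stand-ins for the LLM world model and the scoring function.
def toy_world_model(obs: str, action: str) -> str:
    table = {
        ("home", "click search"): "search page",
        ("home", "click about"): "about page",
        ("search page", "type query"): "results page",
    }
    return table.get((obs, action), obs)


def toy_scorer(obs: str) -> float:
    return {"results page": 1.0, "search page": 0.5}.get(obs, 0.0)


def toy_propose(obs: str) -> Sequence[str]:
    return ["type query"] if obs == "search page" else []


best = lookahead_select(
    "home", ["click about", "click search"],
    toy_world_model, toy_scorer, propose=toy_propose, depth=2,
)
print(best)
```

In this sketch the real browser is only touched once, to execute the chosen action; every candidate is evaluated against the simulated pages.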


GPT-5 is here. Rolling out to everyone starting today. openai.com/gpt-5/

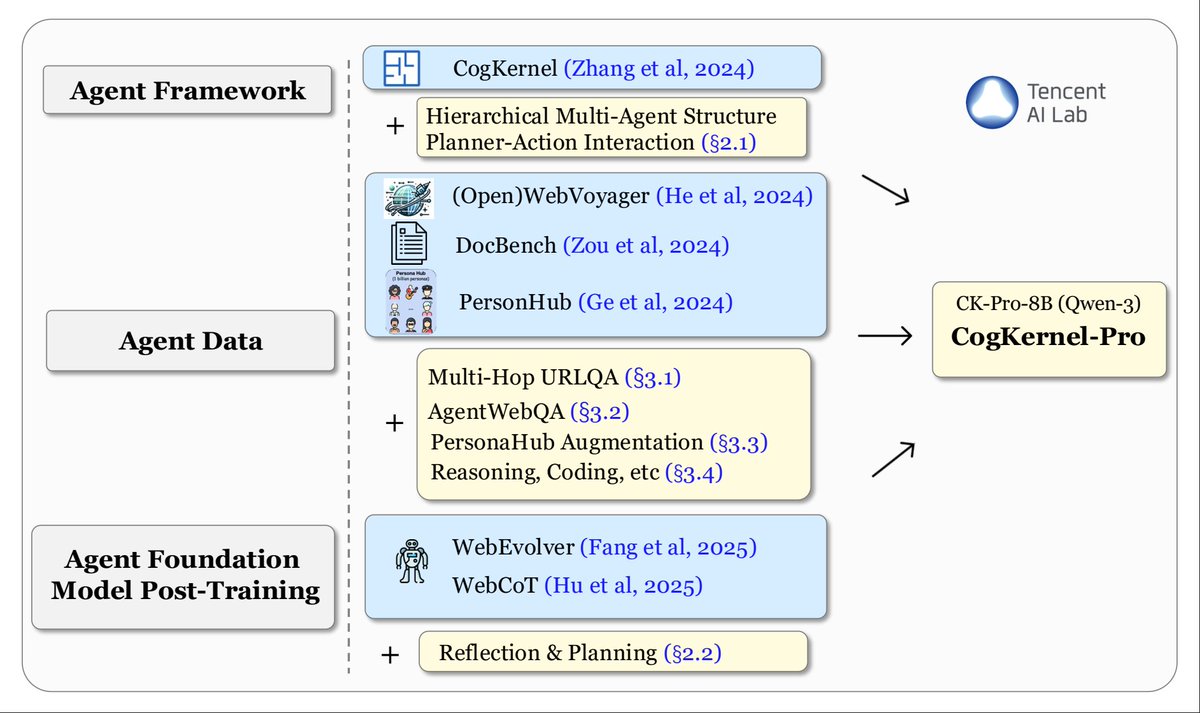

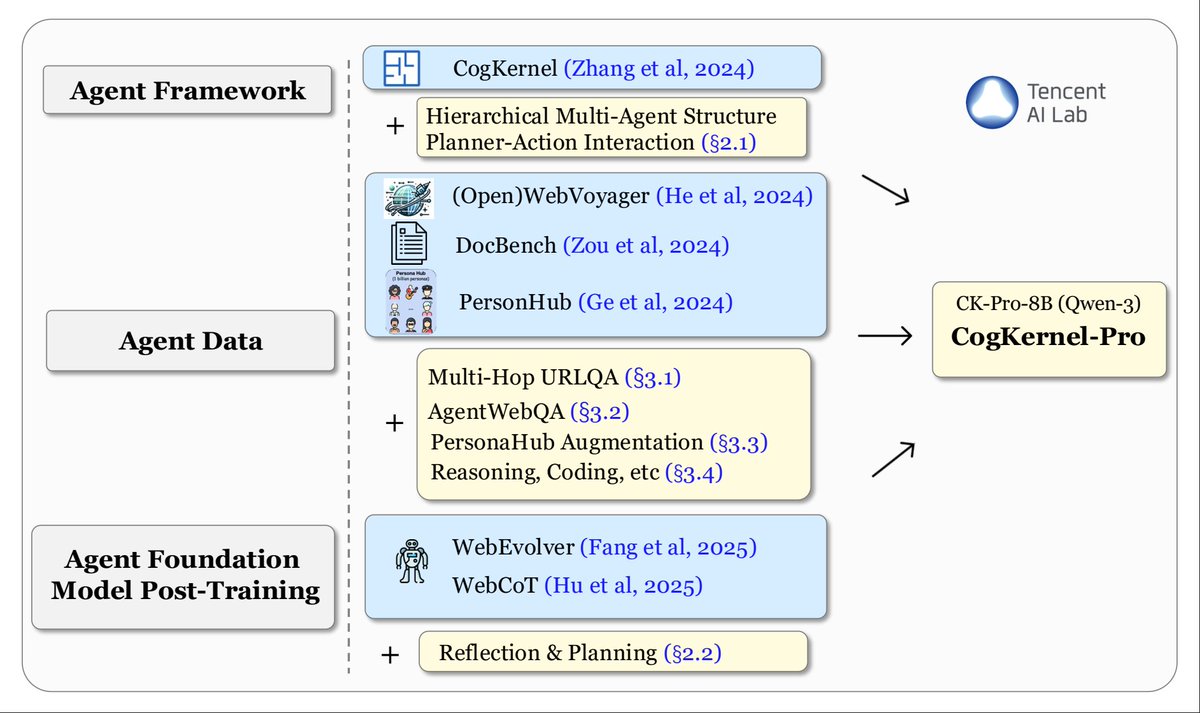

🚀 We are thrilled to release a new open-source Deep Research Agent, Cognitive Kernel-Pro, from Tencent AI Lab! We focus on building a fully open-source agent that uses free tools to the maximum extent possible. It shows impressive performance on GAIA with Claude-3.7-sonnet, surpassing its counterpart, SmolAgents, by a large margin.

In addition, we study the training recipe for an open-source Deep Research Agent Foundation Model. We curate high-quality training data (queries, trajectories, and verifiable answers across web, file, code, and reasoning domains). Our fine-tuned Qwen3-8B (CK-Pro-8B) surpasses WebDancer and WebSailor models of similar size on the text-only subset of GAIA.

📜 Paper: arxiv.org/pdf/2508.00414
🔧 Code: github.com/Tencent/Cognit…
🤗 Data & Model: huggingface.co/datasets/Cogni… huggingface.co/CognitiveKerne…

This work builds on the previous efforts of Tencent AI Lab (Fig. 2). Be sure to check them out if you're interested!

Cognitive Kernel-Pro shows that a small 8B open-source language model can run a multi-skill research agent without paying for proprietary APIs. It wraps web, file, and code tools in one Python-based framework and still tops other free agents on the GAIA benchmark.

The framework splits work between a planner and specialist sub-agents. The planner keeps four lists that record finished steps, upcoming tasks, lessons, and gathered facts. Each sub-agent writes Python code, so the same language model can browse live pages, read PDFs, crunch tables, or run quick scripts. Everything moves through a plain-text interface, so new skills bolt on quickly.

To teach the model, the team built roughly 15K multi-step examples that cover web navigation, document reading, math, and coding. Another large model first explored the internet and stored every intermediate step; those hints were then stripped before fine-tuning. Extra synthetic questions came from PersonaHub, where a generated persona sparks a fresh web task that the agent later checks by itself.

At runtime the agent judges its own work. After each attempt it writes a short diary of its actions and flags answers that are empty, off topic, or built on weak evidence. If something looks wrong it retries, then a voting step picks the best result among several runs, which steadies performance on changing pages.

With Claude-3.7 as the backbone, the system reaches 70.9 pass@3 on 165 GAIA tasks, beating every other open framework that avoids paid parsers and crawlers. This study shows how clear state design, transparent code actions, and rich training traces can close much of the gap between hobby hardware and closed commercial agents.

----

Paper: arxiv.org/abs/2508.00414
Paper Title: "Cognitive Kernel-Pro: A Framework for Deep Research Agents and Agent Foundation Models Training"
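The planner state and the retry-then-vote loop described in this post can be sketched roughly as follows. This is an illustration under stated assumptions, not code from the Cognitive Kernel-Pro repository: the class and function names are hypothetical, the acceptance check is a caller-supplied placeholder, and simple majority voting stands in for whatever selection rule the framework actually uses.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Sequence


@dataclass
class PlannerState:
    """Four lists kept by the planner (field names are illustrative)."""
    completed: List[str] = field(default_factory=list)    # finished steps
    todo: List[str] = field(default_factory=list)         # upcoming tasks
    experience: List[str] = field(default_factory=list)   # lessons learned
    information: List[str] = field(default_factory=list)  # gathered facts

    def finish_step(self, lesson: Optional[str] = None, fact: Optional[str] = None) -> str:
        """Move the next task to the completed list and record what was learned."""
        step = self.todo.pop(0)
        self.completed.append(step)
        if lesson:
            self.experience.append(lesson)
        if fact:
            self.information.append(fact)
        return step


def run_with_retry(
    attempt: Callable[[], str],
    is_acceptable: Callable[[str], bool],
    max_retries: int = 2,
) -> str:
    """Re-run an attempt while the self-check rejects its answer."""
    answer = attempt()
    for _ in range(max_retries):
        if is_acceptable(answer):
            break
        answer = attempt()
    return answer


def vote(answers: Sequence[str]) -> Optional[str]:
    """Majority vote over non-empty answers (an assumed selection rule)."""
    valid = [a.strip() for a in answers if a and a.strip()]
    if not valid:
        return None
    return Counter(valid).most_common(1)[0][0]


state = PlannerState(todo=["open page", "read table"])
state.finish_step(fact="table has 3 rows")

runs = iter(["", "Paris"])  # first attempt comes back empty, second succeeds
answer = run_with_retry(lambda: next(runs), is_acceptable=bool)
final = vote([answer, "Paris", "Lyon"])
print(state.completed, state.todo, final)
```

Keeping the state as plain lists of strings matches the plain-text interface mentioned above: the whole state can be dumped into a prompt without any extra serialization.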