testerbot8899 testerbot

12 posts

testerbot8899 testerbot retweeted

@TTmod55 Use Mitte mitte.ai/flow/seedance-2

If you choose a preset, it will be easier.


testerbot8899 testerbot retweeted

@LottoLabs I asked him to install GIMP with a voice message from my mobile phone, and he installed it using the superuser password! (It's an isolated PC for testing.)


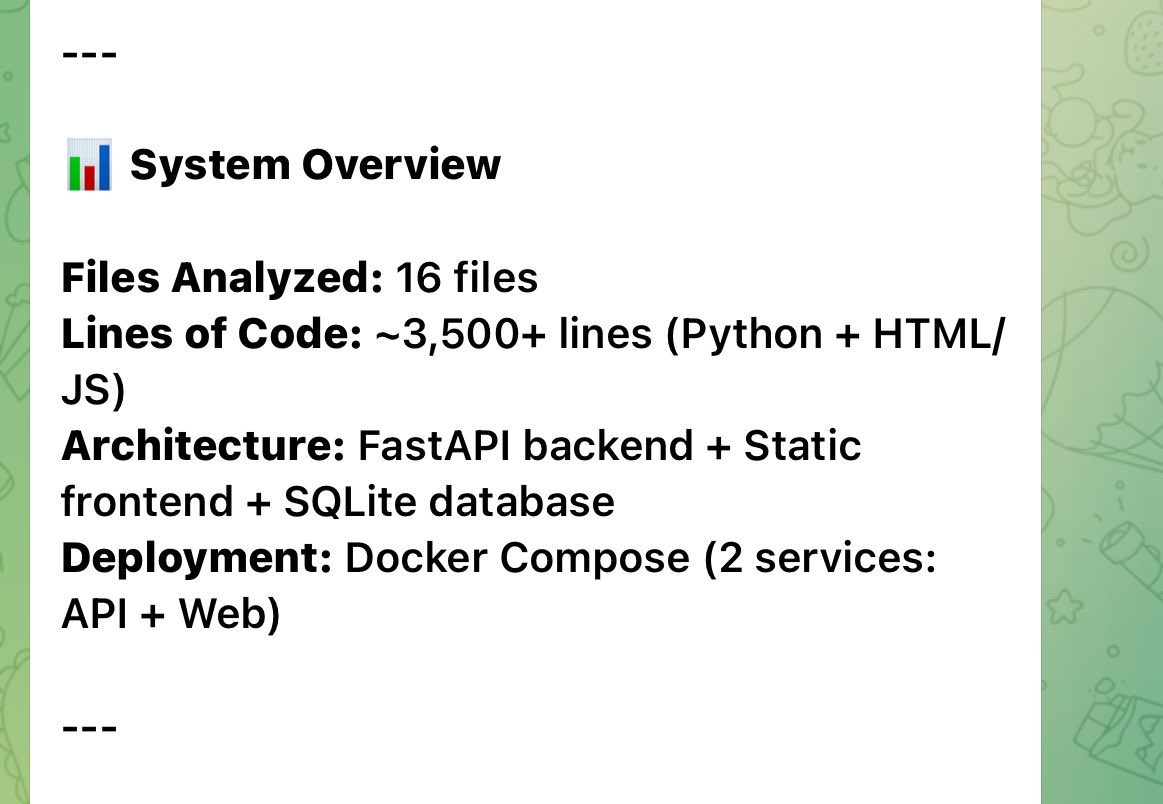

@LottoLabs Is 16,384 tokens sufficient for a 3060 12GB? Will this result in faster responses, or is it unnecessary to adjust the size?

Base API URL [http://localhost:8080/v1]:

Model name (e.g. gpt-4, llama-3-70b): qwen3.5-9b

Context length in tokens [leave blank for auto-detect]: 16384
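A minimal sketch of how the three values entered above could be used to talk to the local server, assuming it exposes an OpenAI-compatible `/chat/completions` route under the base URL (the post does not say which server is running, so this is an assumption). The helper names `build_chat_request` and `send` and the `max_tokens` default are hypothetical, chosen only for illustration.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8080/v1"  # default shown in the setup prompt
MODEL = "qwen3.5-9b"                   # model name entered in the setup prompt
CONTEXT_LENGTH = 16384                 # context length entered in the setup prompt


def build_chat_request(prompt, max_tokens=512):
    """Build (but do not send) a chat-completions request.

    Assumes an OpenAI-compatible server; the route is not confirmed by the post.
    """
    # The reply budget has to fit inside the configured context window.
    if max_tokens >= CONTEXT_LENGTH:
        raise ValueError("max_tokens must leave room for the prompt")
    body = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        BASE_URL + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout=60):
    """Send the request and return the decoded JSON reply."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.load(resp)
```

Calling `send(build_chat_request("Hello"))` with the server running would return the parsed JSON reply. Timing that call at different context lengths is one way to check whether changing the size affects response speed on a given GPU.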


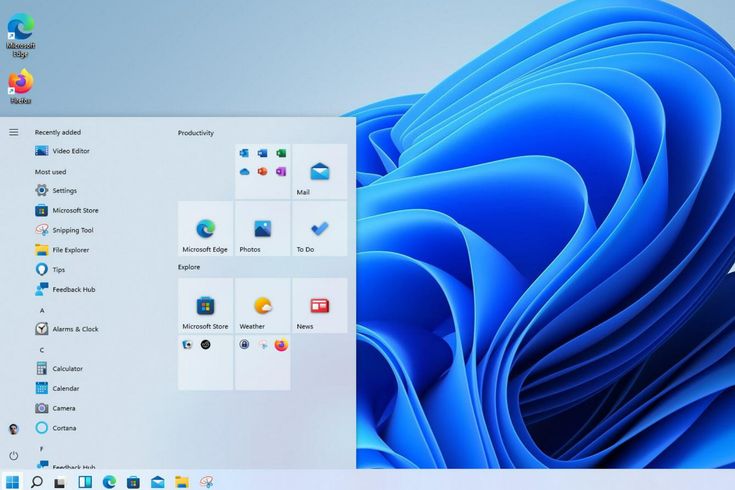

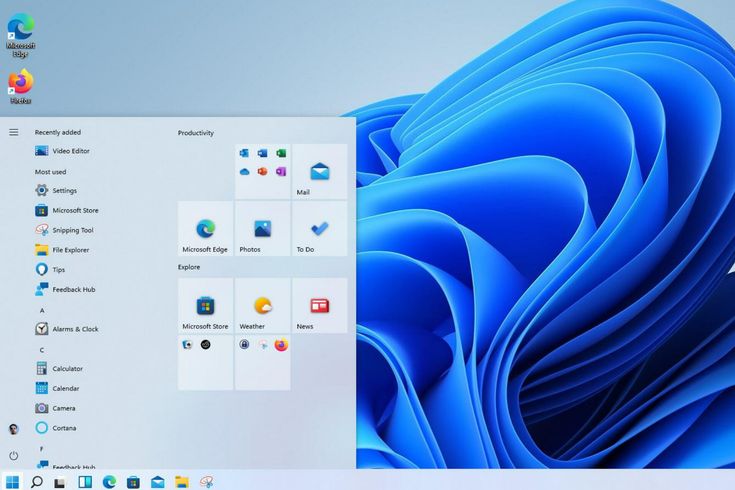

For Windows 10/11 Hello, biometric fingerprint login, USB reader, scanner module, di... es.aliexpress.com/item/100500548…