David Tao

173 posts

@Taodav

PhD candidate @BrownBigAI. MSc from the @rlai_lab.

AI/ML publication venues are broken beyond repair. I genuinely believe the only way to fix them is to completely devalue them (best to do that immediately, but perhaps slowly over time, since people have inertia). Then, start something new that encourages quality over quantity.

PPO has been cemented as the de facto RL algorithm for RLHF. But… is this reputation + complexity merited? 🤔 Our new work revisits PPO from first principles 🔎 📜 arxiv.org/abs/2402.14740 w/ @chriscremer_ @mgalle @mziizm @KreutzerJulia Olivier Pietquin @ahmetustun89 @sarahookr

The issue in the first paragraph is real when learning without bootstrapping (e.g., with REINFORCE). TD learning methods can already learn along the way and figure out what went well and what didn't, provided the value function has a good understanding of the world. This works even if rewards are delayed by hours. Adding planning updates to the mix allows agents to reason about actions that they did not take and could try in the future.
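A minimal sketch of the bootstrapping point, under stated assumptions: the toy environment below (a deterministic chain with a single reward on the final transition) and the function name `td0_chain` are hypothetical and not taken from the post. It only illustrates how a tabular TD(0) update lets credit for a delayed reward flow backward through the value estimates, one state per episode, without waiting for a full return.

```python
def td0_chain(n_states=5, episodes=200, alpha=0.1, gamma=1.0):
    """Tabular TD(0) on a hypothetical deterministic chain.

    State i always moves to state i + 1. The only reward (1.0) arrives
    on the transition into the terminal state, so it is delayed for
    every state except the last one.
    """
    # One extra slot for the terminal state, whose value stays at 0.
    values = [0.0] * (n_states + 1)
    for _ in range(episodes):
        for s in range(n_states):
            s_next = s + 1
            reward = 1.0 if s_next == n_states else 0.0
            # Bootstrapped target: immediate reward plus the current
            # estimate of the next state's value.
            td_target = reward + gamma * values[s_next]
            values[s] += alpha * (td_target - values[s])
    return values[:n_states]


if __name__ == "__main__":
    # After one episode only the last state has learned anything;
    # later episodes push that estimate back toward the start.
    print(td0_chain(episodes=1))
    print(td0_chain(episodes=2))
    print(td0_chain(episodes=200))
```

Each update uses only the next state's current estimate, so early states improve as soon as their successors do, even though they never observe the reward directly.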

Meet the recipients of the 2024 ACM A.M. Turing Award, Andrew G. Barto and Richard S. Sutton! They are recognized for developing the conceptual and algorithmic foundations of reinforcement learning. Please join us in congratulating the two recipients! bit.ly/4hpdsbD

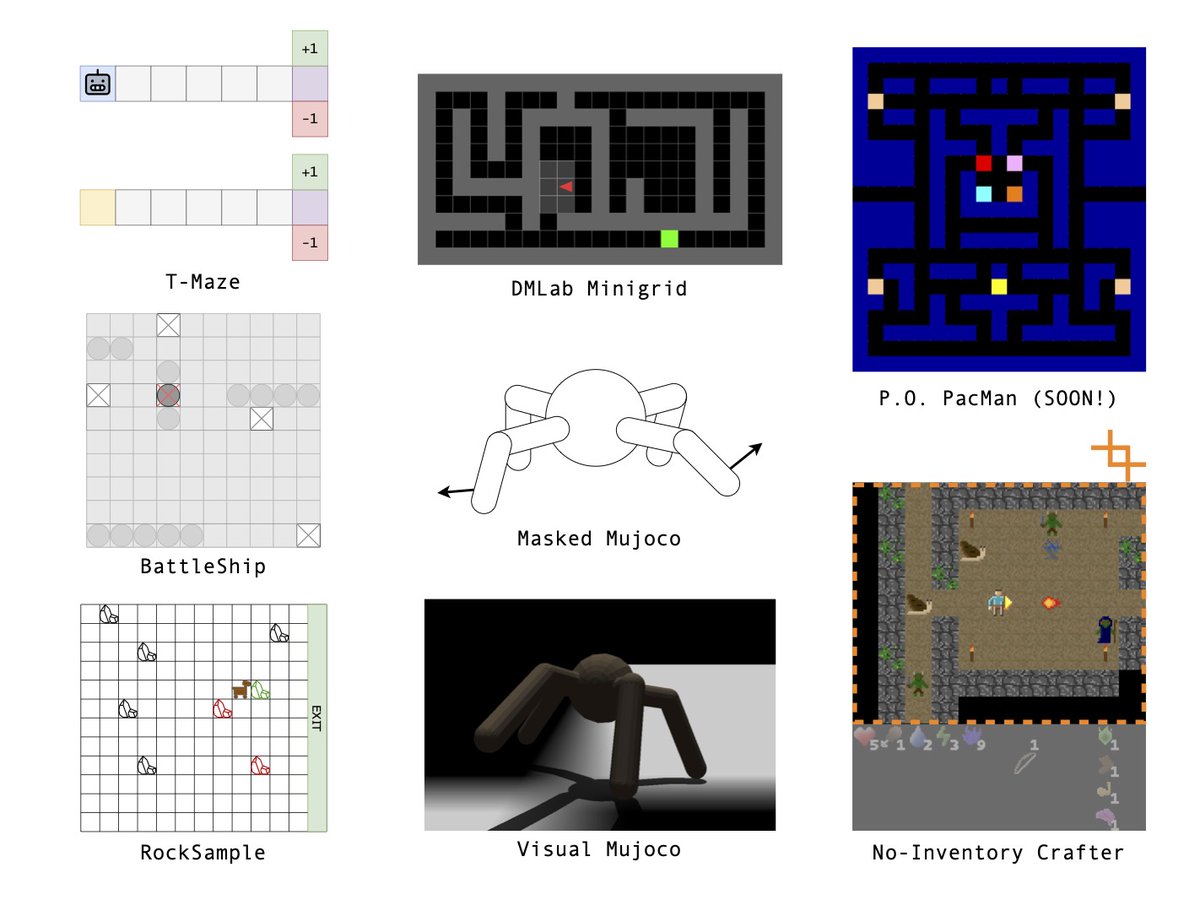

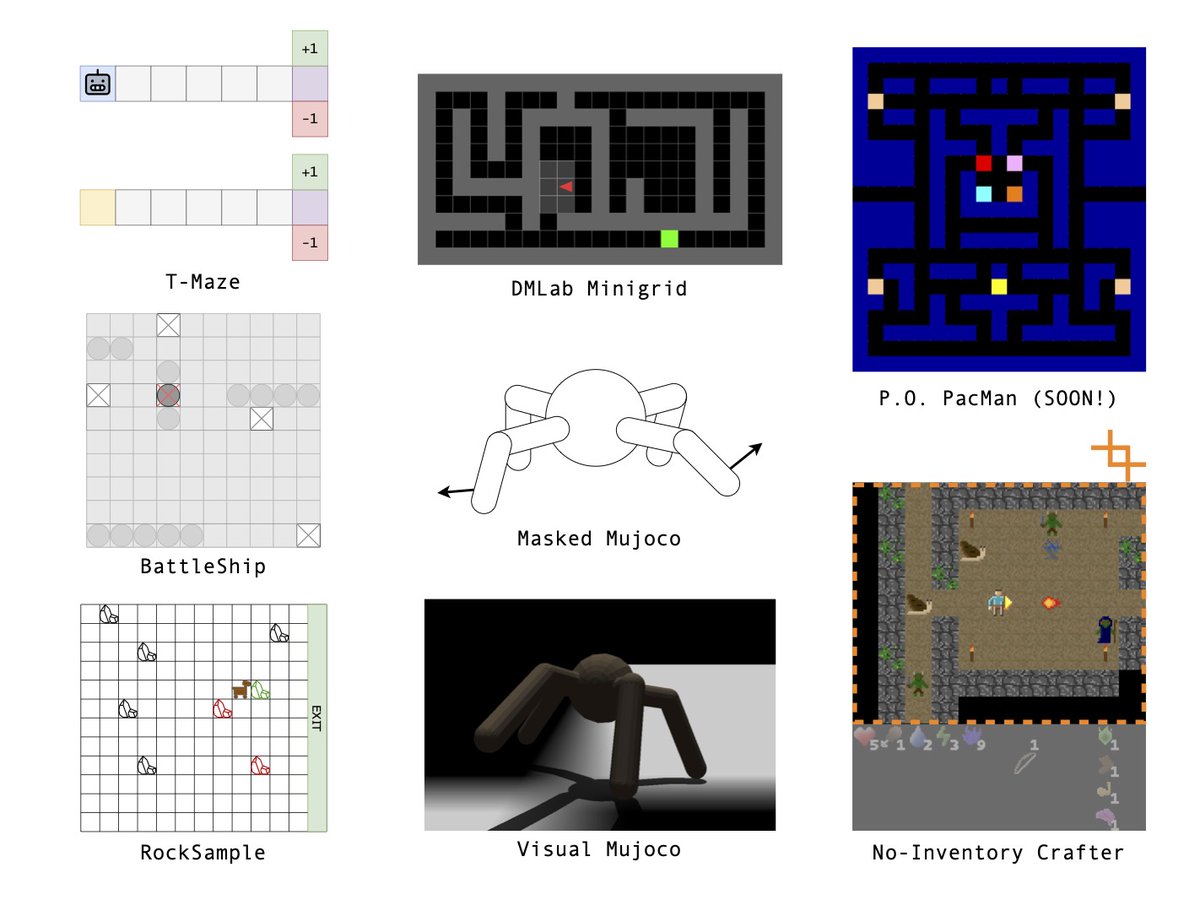

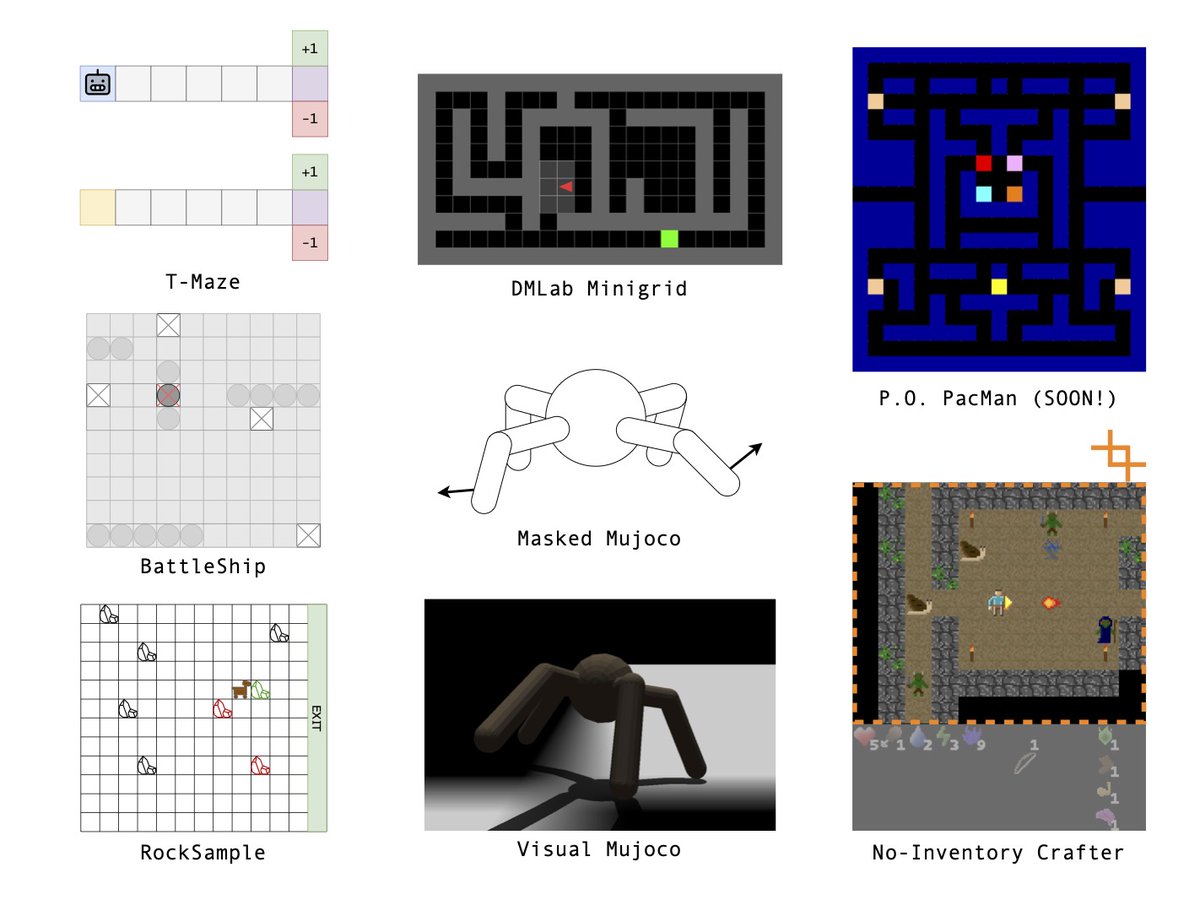

RL in POMDPs is hard because you need memory. Remembering *everything* is expensive, and RNNs applied naively can only get you so far. New paper: 🎉 we introduce a theory-backed loss function that greatly improves RNN performance! 🧵 1/n