Christopher Tavolazzi

6.2K posts

@TheCoffeeJesus

Robots should grow food 🌱🤖 Dev | Futurist | Musician

Chico, CA · Joined February 2011

1.9K Following · 2.9K Followers

Hey guys! Limiting the beta to the first 100 users who commented at this stage, due to lots of rate limits and a few other factors.

This will encompass 2 free generations per user, each for a video output of about 20-30s (the longer 1:30 video outputs may be tricky initially, both for rate limits and cost). I think that first bit is a decent compromise, thanks everyone! ❤️

@Kyrannio This is amazing. I’m working on something very similar. Can’t wait to see what you’re building

Hi everyone! I’m working hard to get access for you all to try the agent - building something out on Replit atm.

I’ll keep you all posted - hopefully I can have it up and running smoothly tonight

I’m thinking I’ll have two modes for it:

1) to generate the story and all content autonomously (default mode)

2) the ability to input your own storyline and have it go from there to generate the content

At some point I’ll try to add image-to-video and way more bells and whistles, but this first release will be just a rough exploration using Ray2 text2vid. I’m trying to build it fast and get everyone access fast.

You’ll have to use your own Luma and OpenAI API keys sadly, that’s the only downside

More soon!! 🫶

Christopher Tavolazzi retweeted

This can be big. Google unveils the successor to the Transformer architecture

"We present a new neural long term memory module that learns to memorize historical context and helps an attention to attend to the current context while utilizing long past information. We show that this neural memory has the advantage of a fast parallelizable training while maintaining a fast inference."

"From a memory perspective, we argue that attention due to its limited context but accurate dependency modeling performs as a short term memory, while neural memory due to its ability to memorize the data, acts as a long-term, more persistent, memory. Based on these two modules, we introduce a new family of architectures, called Titans, and present three variants to address how one can effectively incorporate memory into this architecture."

"Our experimental results on language modeling, common sense reasoning, genomics, and time series tasks show that Titans are more effective than Transformers and recent modern linear recurrent models."

"They further can effectively scale to larger than 2M context window size with higher accuracy in needle in haystack tasks compared to baselines."

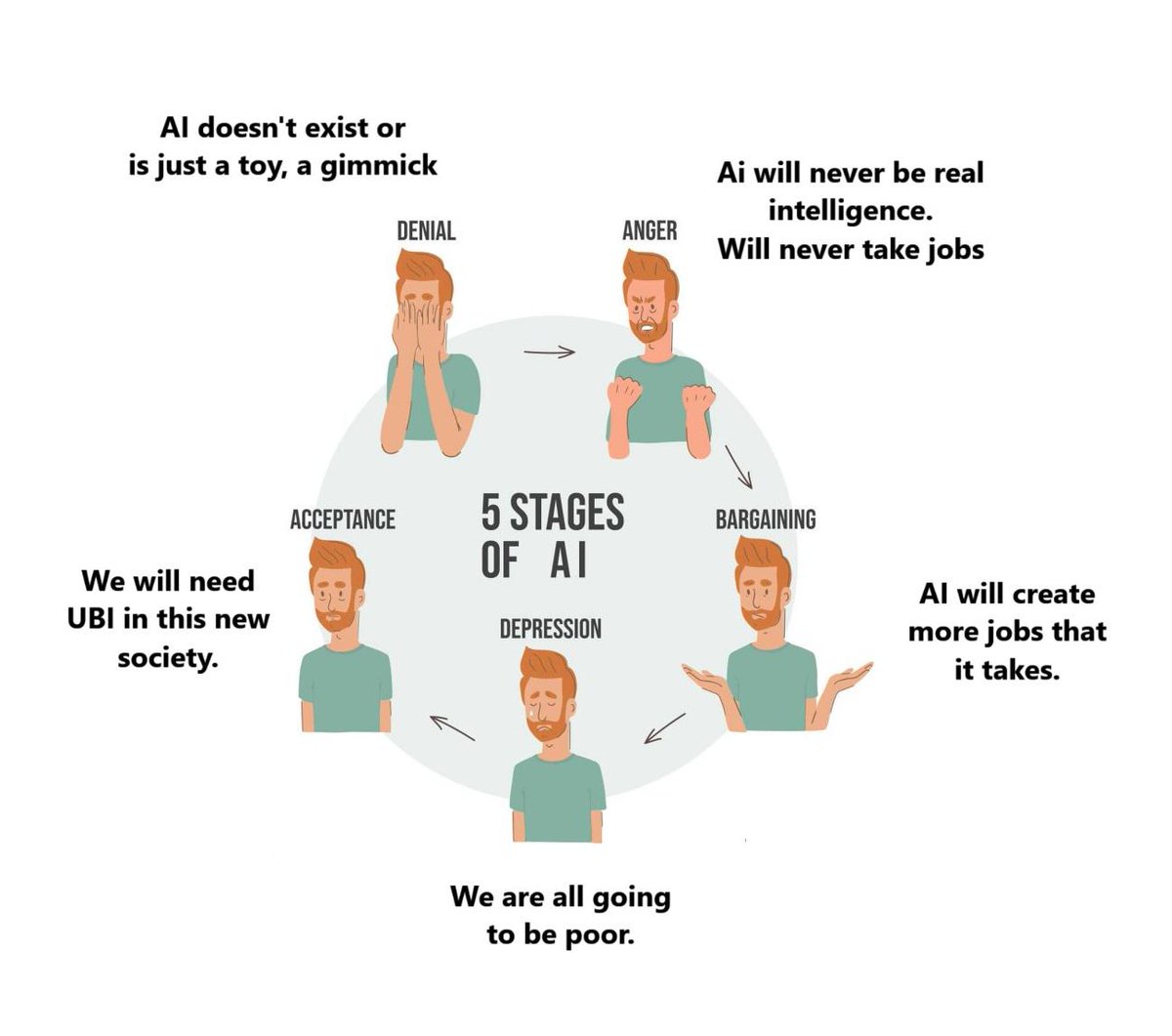

@kimmonismus Waiting till someone, anyone, gets to the “why are we even using money?” stage

Christopher Tavolazzi retweeted

@Kyrannio Completely agree.

Autonomous emergency response systems are critical and should be deployed immediately if not sooner

Christopher Tavolazzi retweeted

Christopher Tavolazzi retweeted