FelikZ

4.1K posts

@TheFelikZ

Voice from the Netherlands

The Netherlands · Joined June 2009

69 Following · 117 Followers

🩹 clawpatch 0.1.0 is live:

Clawpatch maps codebases into semantic feature slices, reviews them for bugs and quality issues, and records explicit fix attempts with validation.

You'll be surprised how much this will find.

npm install -g clawpatch

clawpatch.ai

@badlogicgames There is also plenty of room to trim the system prompt, for example with shorter references to docs. In fact, those could just be an embedded skill.

sneak peek of the new pi.dev no tools mode. you're gonna love it. you can try it with `pi -nbt`.

enjoy!

Mario Zechner@badlogicgames

people of pi.dev. i'm removing all tools from pi without replacement. get creative.

@mick__net @danielhanchen @UnslothAI Yeah, I have the same spec and I see either no difference or slightly slower results for both 27b and 35b. That's weird if only NVIDIA GPUs got a perf win here.

Testing Qwen 9B/27B Unsloth MTP GGUFs on Mac showed MTP to be slower for me.

Setup:

Apple M1 Max, 32GB

llama.cpp MTP fork build: b9173-a957b7747

Metal, -np 1, --no-mmproj

Model: unsloth/Qwen3.5-9B-MTP-GGUF

File: Qwen3.5-9B-Q5_K_M.gguf

Context: -c 100000

20k prompt, 2048 cap:

no MTP: 23.49 tok/s

ngram-mod,draft-mtp: 14.09 tok/s

acceptance: 55.6%

Also tried 512-token run:

no MTP: 24.61 tok/s

draft-mtp: 20.62 tok/s

ngram-mod,draft-mtp: 22.44 tok/s

Args:

--spec-type ngram-mod,draft-mtp --spec-draft-n-max 6 --spec-draft-p-min 0.75

Anything obvious I should change for Apple Silicon/Metal?
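To put the numbers above on one scale, here is a minimal sketch that expresses each speculative-decoding run as a fraction of the no-MTP baseline. The throughput values are copied from the post; the `runs` dictionary and the `relative_speed` helper are hypothetical names used only for illustration.

```python
# Throughput figures (tok/s) copied from the post above.
runs = {
    "20k prompt, 2048 cap": {
        "no MTP": 23.49,
        "ngram-mod,draft-mtp": 14.09,
    },
    "512-token run": {
        "no MTP": 24.61,
        "draft-mtp": 20.62,
        "ngram-mod,draft-mtp": 22.44,
    },
}


def relative_speed(results, baseline="no MTP"):
    """Return each non-baseline config's throughput as a fraction of the baseline."""
    base = results[baseline]
    return {name: tps / base for name, tps in results.items() if name != baseline}


for label, results in runs.items():
    for name, ratio in relative_speed(results).items():
        print(f"{label}: {name} runs at {ratio:.2f}x of no MTP")
```

On these figures every speculative configuration lands below 1.0x, at roughly 0.60x for the long-prompt run and 0.84x to 0.91x for the 512-token run.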

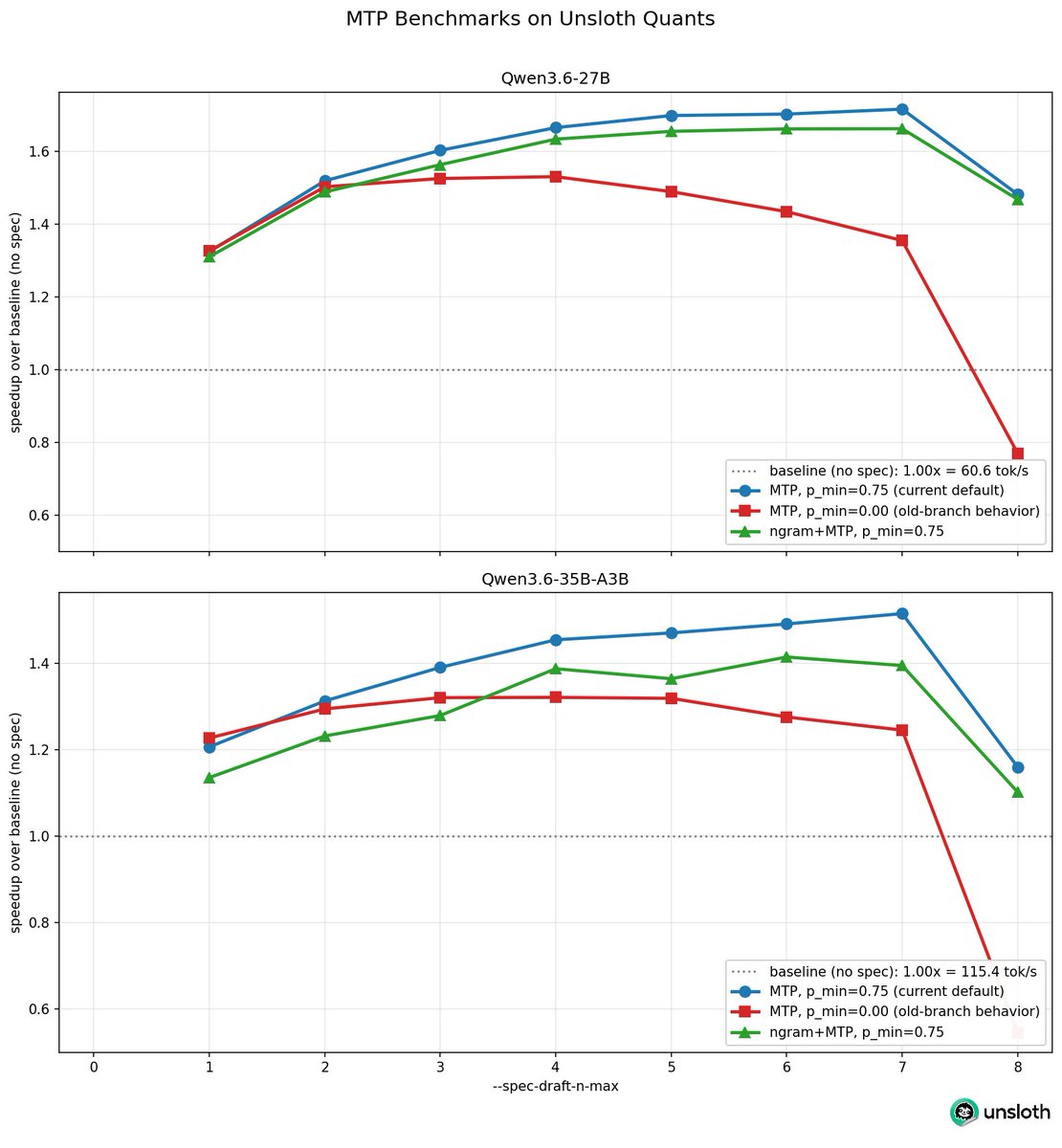

Qwen3.6 MTP Unsloth GGUFs now run 1.8x faster, increased from 1.4x just two days ago!

This is due to llama.cpp adding --spec-draft-p-min 0.75!

Args have also changed from

--spec-type mtp

to

--spec-type draft-mtp

Also increase --spec-draft-n-max 2 to 6

We also released Qwen3.6-0.8B, 2B, 4B, 9B MTP GGUFs! We'll be providing more soon!

For folks who find the new updated branch to have some perf regression, set --spec-draft-p-min to 0.0 to get the old behavior - we provided a plot of the old branch (red) vs the new branch (blue / green) as well.

Also you can use 2 speculative decoding algos - you can add ngram via --spec-type ngram-mod,draft-mtp - the perf isn't yet optimized so I'll do more benchmarks to find better numbers - see github.com/ggml-org/llama…

Guide for MTP: unsloth.ai/docs/models/qw…
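The flag changes described above can be collected in one place. This is a sketch only: the flag names and values are taken from the post, while `spec_args` is a hypothetical helper, and which llama.cpp binary or branch accepts these flags is not stated here, so the output is just an argument fragment to append to your own command.

```python
def spec_args(spec_type="draft-mtp", draft_n_max=6, draft_p_min=0.75):
    """Build the speculative-decoding flags quoted in the post as an argv fragment."""
    return [
        "--spec-type", spec_type,
        "--spec-draft-n-max", str(draft_n_max),
        "--spec-draft-p-min", str(draft_p_min),
    ]


# Defaults mirror the updated settings from the post.
print(" ".join(spec_args()))

# Fallback the post suggests if the new branch shows a perf regression.
print(" ".join(spec_args(draft_p_min=0.0)))

# Combining ngram with MTP drafting, as mentioned in the post.
print(" ".join(spec_args(spec_type="ngram-mod,draft-mtp")))
```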

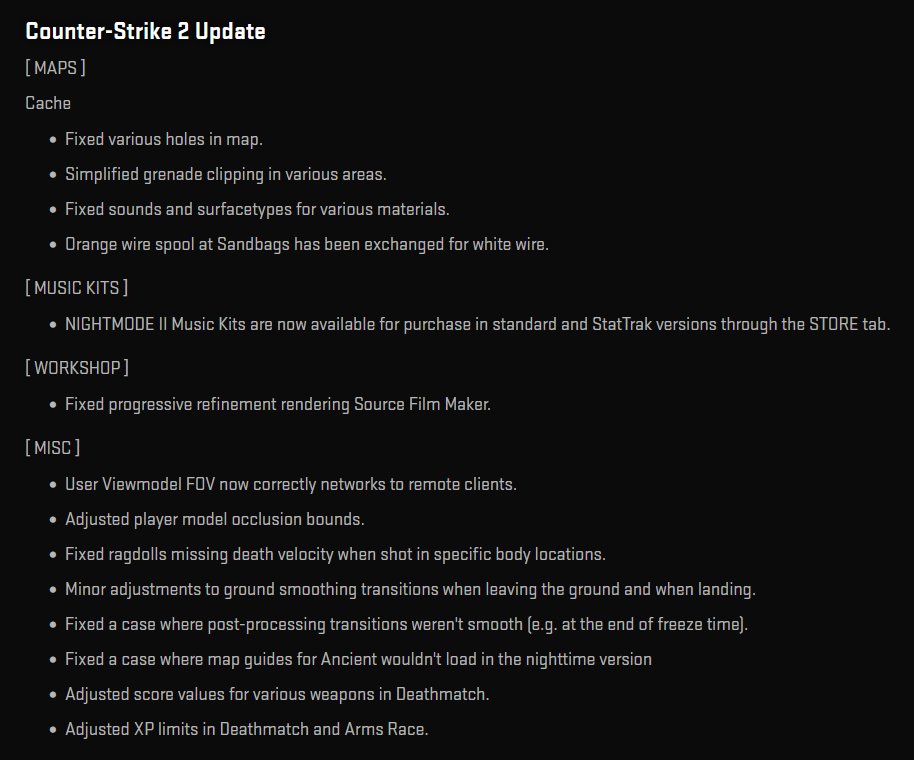

Release Notes for today are up: store.steampowered.com/news/app/730/v…

@fitchmultz What are the benefits of using this compared to playwright-cli + skill? Yes, it wraps tools as “native” tools, but the agent still has to draft a tool call, which is similar to drafting a CLI call.

If you use pi, try pi-agent-browser-native.

It makes agent-browser a native pi tool, so agents actually use browser automation instead of awkward bash glue.

In my runs it has materially improved tool uptake, speed, and token-efficient browser work.

github.com/fitchmultz/pi-…

everything in llm land is like that. context overflow signaling, retries, etc.

it's very sad and very horrible.

Armin Ronacher ⇌@mitsuhiko

Lifted straight from the codex code. Stringly typed errors are alive and well.

FelikZ retweeted

@badlogicgames I am pretty sure the pi audience on Mac uses brew. Just make it official, why not?

People of pi.dev. Do not install pi via any method other than what's shown on the website and in the docs.

E.g. we do not publish to brew and never will. Someone else did. We have zero control over what goes into the brew release.

@badlogicgames Any chance of avoiding JavaScript for good? A single Go binary would be amazing.

pi depends on this package, but not that version.

i'm sure we'll eventually get fucked by this too, by proxy.

i hate the npm model of dependency resolution.

Tobias Möritz@tobimori

@badlogicgames whoops

FelikZ retweeted