The era of Personal Sovereign AI is coming.

We're going to use personal hardware to build personal SaaS.

We stop handing our data over to corporations and start owning our own intelligence.

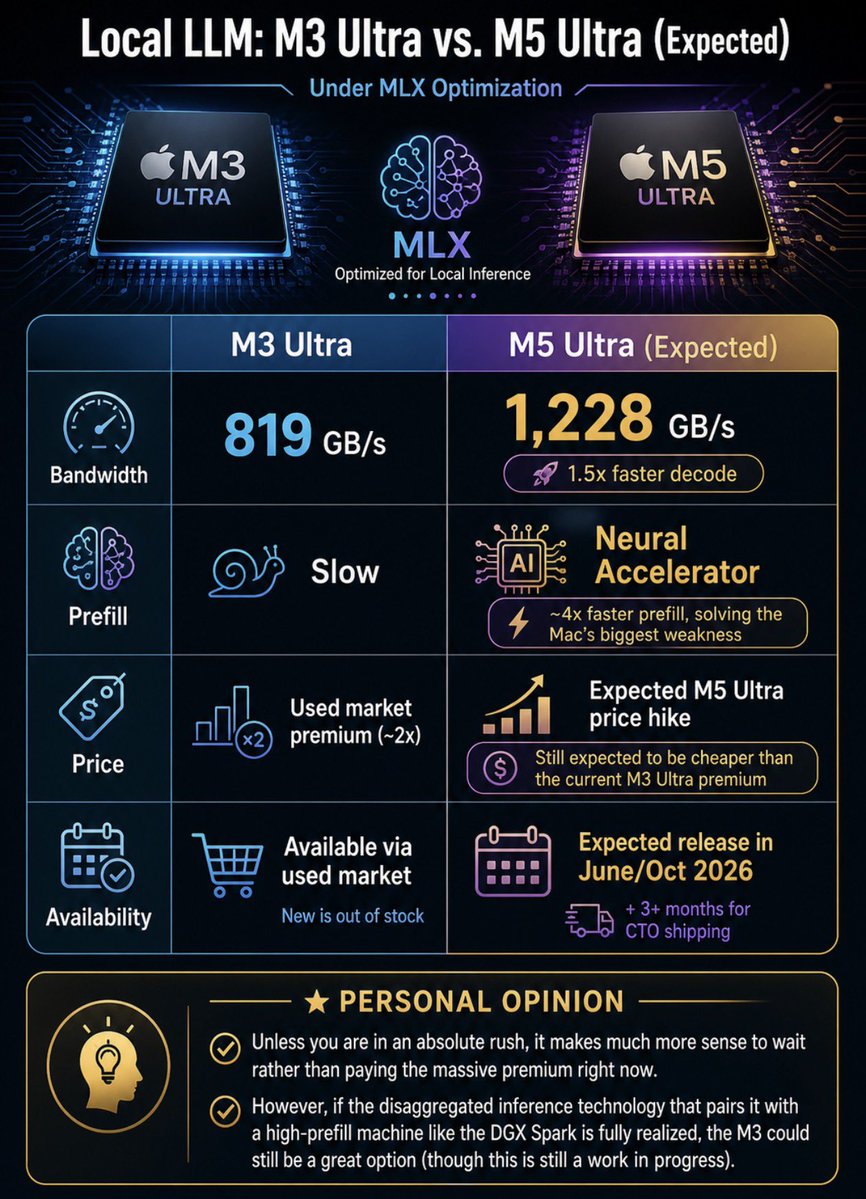

People are already waking up to this and spinning up local LLMs.

As Big AI adds more guardrails and raises prices, the push for AI sovereignty will only accelerate.

Bookmark this tweet.
