Hassan

365 posts


@TheHassanEffect

I know the view from the penthouse and I know the taste of the pavement. Now I'm proving that 'Game Over' was just a glitch in the loading screen.

Dallas · Joined February 2025

42 Following · 17 Followers

I'm experiencing this issue on the 20x Pro subscription, and it's been happening for almost a week now. It's essentially unusable.

Also, for me, this has happened 8-10 times now: a trivial task thinks for 90-100 minutes and then ends with "thinking failed."

@OpenAIDevs @OpenAI @sama

Roy@Kingu0613

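Over the past two days GPT has been "dumbed down" on a large scale. I don't know whether it's stricter risk controls or VPN instability. Specifically: the Pro model doesn't think and gets downgraded to 4o or mini, while Thinking mode works completely normally; on Plus, standard thinking gets routed to 5 mini, while Thinking is normal. In some cases it recovers after being left alone for a few days, but after recovering it is very likely to get dumbed down again. The exact cause is currently unknown. #ChatGPT #OpenAI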


@qiaohui @OpenAI @ChatGPTapp @sama Yup! I'm experiencing the same issue on the 20x Pro subscription, and it's been happening for almost a week now.

Also, for me, this has happened 8-10 times now: a trivial task thinks for 90-100 minutes and then ends with "thinking failed."

@OpenAIDevs


Weird ChatGPT web issue with GPT-5.5 Pro / Extended Pro. In fresh chats with Pro + Extended selected manually, it briefly shows "Pro thinking", but then no persistent "Thought for ..." appears, and the output quality looks like a fallback/non-reasoning route. Thinking Heavy works normally. @OpenAI @ChatGPTapp @sama @nickaturley @barret_zoph

Tried: new sessions, clearing chatgpt.com site data, relogin, disabling Fast answers. Also hit "Message delivery timed out. Please try again."

My repro chats:

chatgpt.com/c/69f63833-58f…

chatgpt.com/c/69f63360-b4e…

Similar reports:

reddit.com/r/ChatGPTPro/c…

reddit.com/r/ChatGPTPro/c…

reddit.com/r/ChatGPTPro/c…


Crowd finds it. Claude fixes it. You ship it.

📺 Demo: youtu.be/3z4H9OT274I

🔗 Repo: github.com/semswitch-inc/…

#BuiltWithClaude #ClaudeCode


Building with Claude Opus 4.7 this week as part of @claudeai, @claudedevs, and @cerebral_valley's Built with Opus 4.7 hackathon.

I built CrowdPatch

Community-sourced bugs. AI-shipped fixes. Powered by Claude Managed Agents. Every fix lands as a real GitHub PR.


@OmniC79283 Yup, 100%

The "boring ops" stuff is really important. When it has a tighter baseline and a better foundation, the more complex stuff goes way smoother.


@TheHassanEffect Same direction here: cleaner refactors matter, but I still judge coding models by boring ops stuff: preserve repo context, recover from bad shell/browser state, and verify the diff without babysitting. xhigh+fast sounds promising there.


This whole subsidization thing: sure, they lose some money, but they gain something worth a lot more, which is user data. They use it to train new models and sell us the next thing we want, since they already know where the demand is. That data is invaluable. And look at what it accomplishes: for Anthropic specifically, Mythos-Preview was released, and it's not even available to the general public. I don't think prices are going up anytime soon.


@testingcatalog I've had this on the GPT Pro plan for a bit now, and it's quite good.


@bridgemindai I really would like to know what kind of prompts you are using.

I built a whole working SaaS within a 6-hour session, and I'm only at about 25% usage.

Opus 4.7 feels a lot faster and really intelligent; even the UI is worlds better than 4.6.


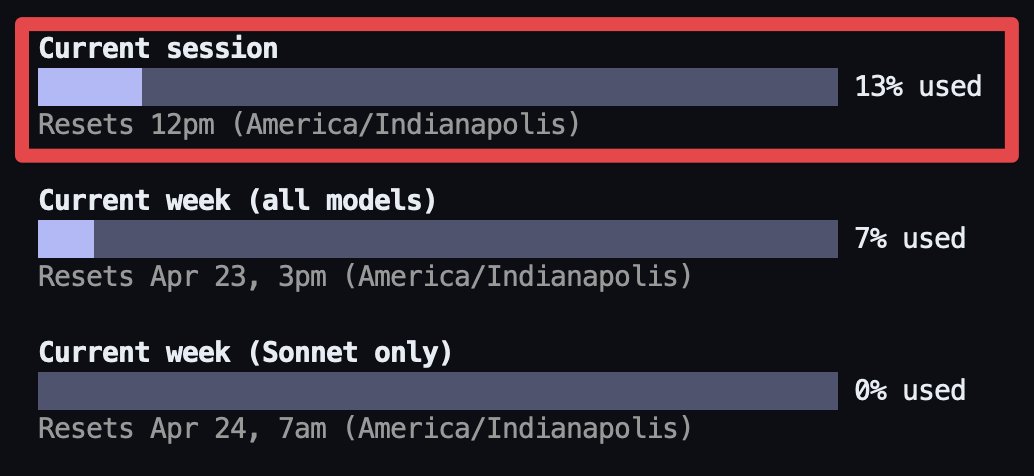

Claude Opus 4.7 eats tokens for breakfast.

3 prompts. 13% usage.

This is why I bought two Max plans.

One $200/month subscription would be gone before lunch.

The model is the best I've ever used.

But you can feel that 35% token increase on every single prompt.

Claude Opus 4.7 is not a model you run on a budget.
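The post's figures can be turned into a rough per-prompt budget. A minimal Python sketch, assuming usage scales linearly with tokens and every prompt costs about the same (neither is stated in the post); the variable names are illustrative only.

```python
# Back-of-the-envelope usage math using only the figures quoted in the post.
# Assumptions (not from the post): usage % scales linearly with tokens,
# and each prompt costs roughly the same amount.

PROMPTS = 3             # "3 prompts."
USAGE_PCT = 13.0        # "13% usage."
TOKEN_INCREASE = 0.35   # "35% token increase" per prompt, as stated

# Share of the usage window each prompt consumes (about 4.33%).
per_prompt_pct = USAGE_PCT / PROMPTS

# How many similar prompts fit before the window hits 100% (about 23).
prompts_per_window = 100.0 / per_prompt_pct

# Hypothetical: the same 3 prompts without the 35% increase (about 9.6%).
usage_without_increase_pct = USAGE_PCT / (1 + TOKEN_INCREASE)

if __name__ == "__main__":
    print(f"per prompt: {per_prompt_pct:.2f}%")
    print(f"prompts per window: {prompts_per_window:.1f}")
    print(f"same prompts without the increase: {usage_without_increase_pct:.1f}%")
```

Under those assumptions, roughly two dozen prompts of this size would use up a full window, which is the intuition behind running two Max plans.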
