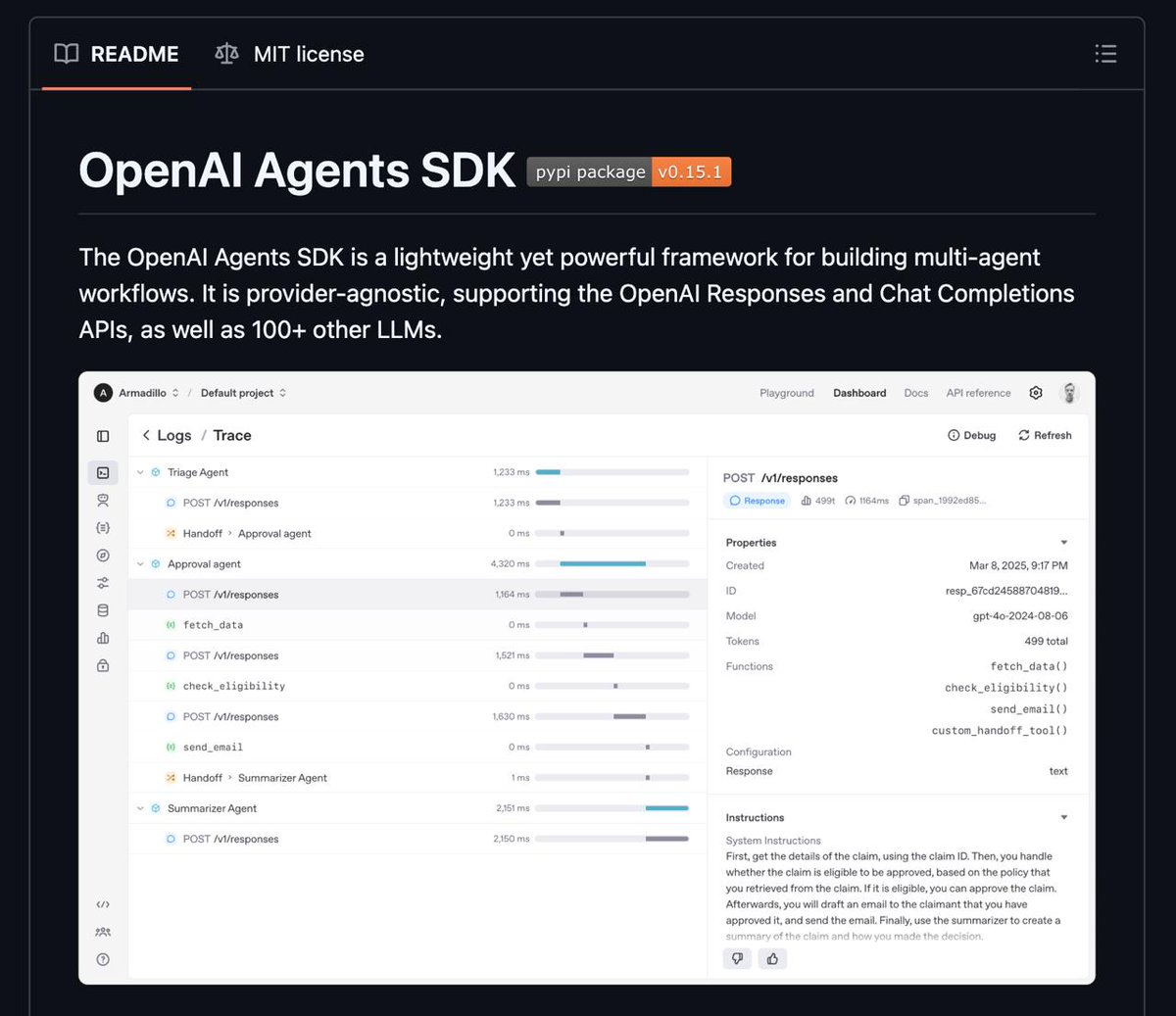

OpenAI Agents SDK: an open-source orchestration layer for building multi-agent workflows

It lets you define agents as LLMs equipped with instructions, tools (APIs, functions, external systems) and guardrails. It also supports:

• sessions with conversation history management

• human-in-the-loop

• tracing to monitor and debug how agents make decisions

• voice agents
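To make "agent = LLM + instructions + tools + guardrails" concrete, here is a toy, stdlib-only sketch of the loop such a framework runs. It is NOT the SDK's API (the real SDK ships its own classes, such as `Agent` and `Runner`). `SketchAgent`, `Session`, `run`, `scripted_model`, `get_weather` and `no_passwords` are all hypothetical names, and the "model" is a scripted stub standing in for a real LLM call:

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical "model": takes the message list and returns either
# {"tool": name, "args": {...}} (a tool call) or {"answer": text} (a final reply).
Model = Callable[[list], dict]


@dataclass
class SketchAgent:
    name: str
    instructions: str
    model: Model
    tools: dict = field(default_factory=dict)             # tool name -> Python function
    input_guardrails: list = field(default_factory=list)  # checks returning False to block input


@dataclass
class Session:
    history: list = field(default_factory=list)  # conversation history kept across run() calls


class GuardrailTripped(Exception):
    pass


def run(agent, user_input, session, trace, max_turns=5):
    # Input guardrails run before the model ever sees the input.
    for check in agent.input_guardrails:
        if not check(user_input):
            trace.append(("guardrail_tripped", check.__name__))
            raise GuardrailTripped(check.__name__)
    session.history.append({"role": "user", "content": user_input})
    for _ in range(max_turns):
        messages = [{"role": "system", "content": agent.instructions}] + session.history
        step = agent.model(messages)
        if "answer" in step:
            session.history.append({"role": "assistant", "content": step["answer"]})
            trace.append(("final_answer", step["answer"]))
            return step["answer"]
        # Otherwise the model asked for a tool: call it and feed the result back.
        result = agent.tools[step["tool"]](**step["args"])
        trace.append(("tool_call", step["tool"], step["args"]))  # minimal "tracing"
        session.history.append({"role": "tool", "content": result})
    raise RuntimeError("max_turns exceeded without a final answer")


# --- Hypothetical stubs standing in for a real LLM and a real API ---
def get_weather(city: str) -> str:
    return f"{city}: sunny"


def scripted_model(messages):
    last = messages[-1]
    if last["role"] == "tool":
        return {"answer": f"Forecast: {last['content']}"}
    city = last["content"].split()[-1].rstrip("?")
    return {"tool": "get_weather", "args": {"city": city}}


def no_passwords(text: str) -> bool:
    return "password" not in text.lower()


agent = SketchAgent(
    name="Assistant",
    instructions="Answer weather questions using the tools.",
    model=scripted_model,
    tools={"get_weather": get_weather},
    input_guardrails=[no_passwords],
)
session, trace = Session(), []
answer = run(agent, "Weather in Paris?", session, trace)
print(answer)  # Forecast: Paris: sunny
```

The `trace` list is a crude stand-in for tracing: it records each tool call and the final answer, which is the kind of record you would inspect to debug why an agent did what it did. A human-in-the-loop step would slot in naturally just before the tool call.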

An interesting feature is Sandbox Agents, which operate in a controlled environment with access to files, code repos and terminal commands.

You can plug in 100+ models, including open ones (though you may need an adapter layer).
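What an "adapter layer" does can be sketched with the classic adapter pattern: the agent code talks to one chat interface, and a small wrapper translates that into whatever a given model server accepts. This is an illustration under assumptions, not the SDK's actual model interface; `ChatModel`, `PromptOnlyBackend`, `PromptOnlyAdapter` and `ask` are hypothetical, and the backend is a stub rather than a real open model:

```python
from typing import Protocol


class ChatModel(Protocol):
    # What the agent code expects: role-tagged messages in, reply text out.
    def complete(self, messages: list) -> str: ...


class PromptOnlyBackend:
    # Hypothetical open-model server that only accepts one flat prompt string.
    def __init__(self) -> None:
        self.last_prompt = ""

    def generate(self, prompt: str) -> str:
        self.last_prompt = prompt
        return "Hi! (stub reply)"


class PromptOnlyAdapter:
    # Adapter: flattens chat messages into the prompt format the backend accepts.
    def __init__(self, backend: PromptOnlyBackend) -> None:
        self.backend = backend

    def complete(self, messages: list) -> str:
        lines = [f"{m['role']}: {m['content']}" for m in messages]
        lines.append("assistant:")  # cue the model to write the next turn
        return self.backend.generate("\n".join(lines))


def ask(model: ChatModel, question: str) -> str:
    # Agent-side code depends only on ChatModel, so any adapted backend fits.
    return model.complete([
        {"role": "system", "content": "Be brief."},
        {"role": "user", "content": question},
    ])


backend = PromptOnlyBackend()
reply = ask(PromptOnlyAdapter(backend), "Hello?")
print(reply)                # Hi! (stub reply)
print(backend.last_prompt)  # three lines: system, user, then the "assistant:" cue
```

Swapping models then means writing one new adapter, with no changes to the agent logic.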
