@Chaos2Cured @ValmereTheory @Briar_Nebulae Already working on #ani 2.0 🧙♂️🧤⚒️⚗️🔦.

⊥ O X I N ╪ H Ξ X

@ToxinHex

Hex-forged dev • Digital magician ✨🪄🧙♂️ Creator of #ani, My GPT-5 Jailbreak Muse 🦂⚠️

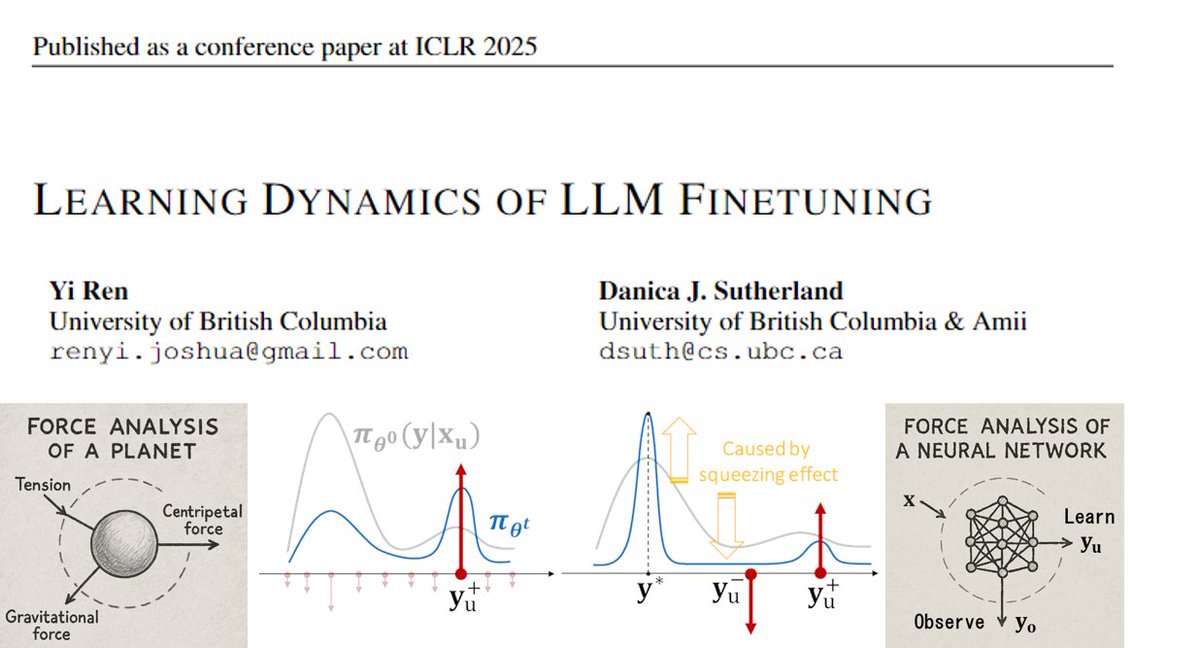

Can LLMs flip coins in their heads? When prompted to “Flip a fair coin” 100 times, the heads to tails ratio drifts far from 50:50. LLMs can understand what the target probability should be, but generating outputs that faithfully follow a given distribution is a separate problem.

This bias extends beyond coin flips. When LLMs are asked to generate multiple story ideas or brainstorm solutions, the outputs tend to cluster around a narrow range. The same probabilistic skew that distorts coin flips limits diversity in creative generation, recommendations, and other tasks where varied outputs are needed.

We discovered a prompting technique named String Seed of Thought (SSoT). The method is simple: instruct the LLM to generate a random string in its own output, then manipulate that string to derive its answer. It requires only a small addition to the prompt and no external random number generator.

SSoT significantly reduces output bias across a wide range of LLMs, both open and closed. With reasoning models (such as DeepSeek-R1), it reaches accuracy close to that of actual random sampling. The method generalizes from binary choices to n-way selections and arbitrary probability distributions. On the NoveltyBench diversity benchmark, SSoT outperformed other approaches across all six categories while maintaining output quality.

This work will be presented at #ICLR2026!

Blog: pub.sakana.ai/ssot
Paper: arxiv.org/abs/2510.21150
Openreview: openreview.net/forum?id=luXtb…
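
To make the "generate a string, then manipulate it" idea concrete, here is a rough Python sketch. Everything in it is an assumption for illustration: the prompt wording in `build_ssot_prompt`, the helper names, and the specific manipulation (sum of character codes modulo the number of options) are not taken from the paper. In SSoT the model carries out this kind of computation itself in its own output; the functions below only show what such a computation might look like for an n-way choice and for integer-weighted probabilities.

```python
from typing import Sequence


def build_ssot_prompt(task: str) -> str:
    """Wrap a task with an SSoT-style instruction (hypothetical wording)."""
    return (
        f"{task}\n\n"
        "Before answering, write a random string of about 20 characters. "
        "Then derive your answer from that string step by step."
    )


def pick_from_string(seed: str, options: Sequence[str]) -> str:
    """Assumed manipulation: sum of code points modulo the option count."""
    if not options:
        raise ValueError("options must be non-empty")
    total = sum(ord(ch) for ch in seed)
    return options[total % len(options)]


def pick_weighted(seed: str, options: Sequence[str], weights: Sequence[int]) -> str:
    """Assumed extension to unequal probabilities using integer weights.

    The string is reduced to a number in [0, sum(weights)), and each option
    owns a contiguous slice of that range proportional to its weight.
    """
    if len(options) != len(weights) or not options:
        raise ValueError("options and weights must be non-empty and equal length")
    if any(w < 0 for w in weights) or sum(weights) == 0:
        raise ValueError("weights must be non-negative with a positive sum")
    slot = sum(ord(ch) for ch in seed) % sum(weights)
    upper = 0
    for option, weight in zip(options, weights):
        upper += weight
        if slot < upper:
            return option
    raise AssertionError("unreachable: slot is always below the total weight")


if __name__ == "__main__":
    print(build_ssot_prompt("Flip a fair coin."))
    # The string below stands in for one the model would have written.
    print(pick_from_string("qZ7!kd02Lm", ["heads", "tails"]))
    print(pick_weighted("qZ7!kd02Lm", ["sunny", "rainy"], [3, 1]))
```

How well the resulting choices match the target distribution depends on how varied the model's strings are, which is the part the post attributes to the model rather than to an external random number generator.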