The Grid

New York showed up. Yesterday, The Pitch NYC (sponsored by @jpmorgan) brought together some of the most driven founders we've seen on this tour. The city has a way of raising the stakes, and the room felt it. From first-time founders to seasoned builders, everyone came ready. The conversations were direct, the pitches were sharp, and the ambition was loud in the best possible way. Thank you to everyone who came out and put themselves on the line. That takes something. 🧵👇

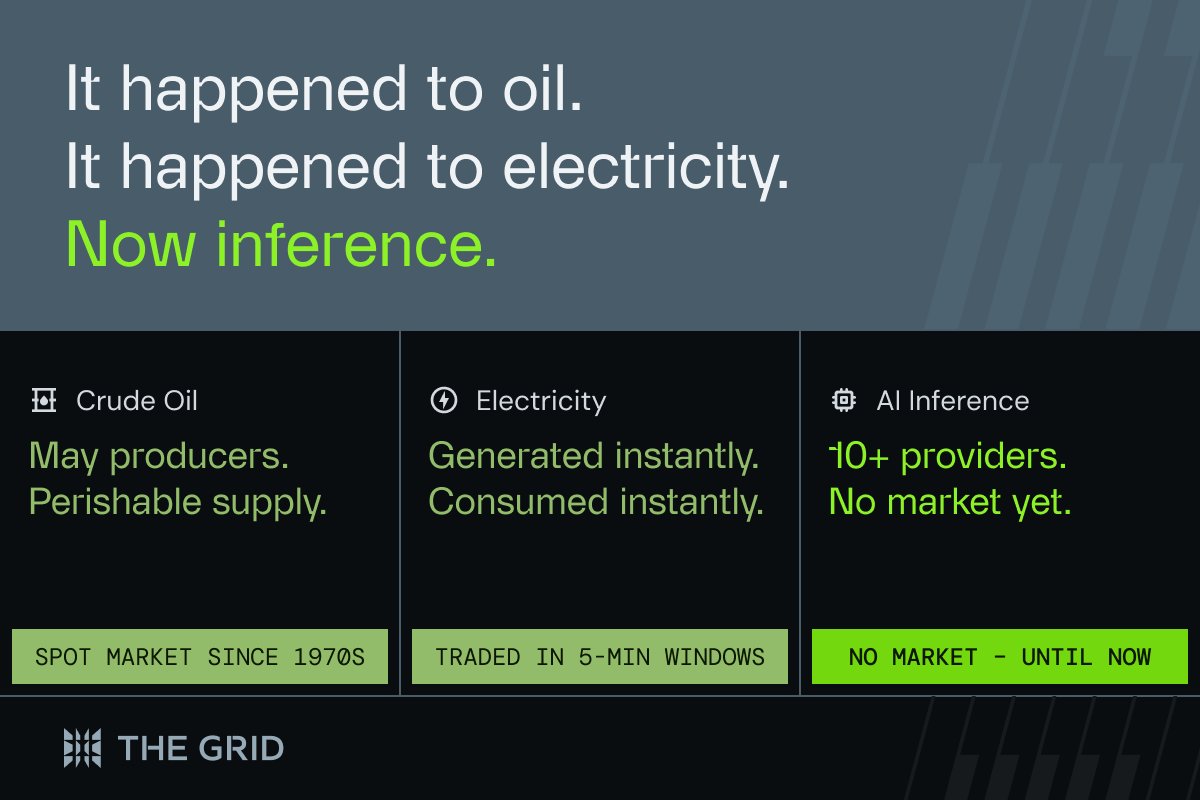

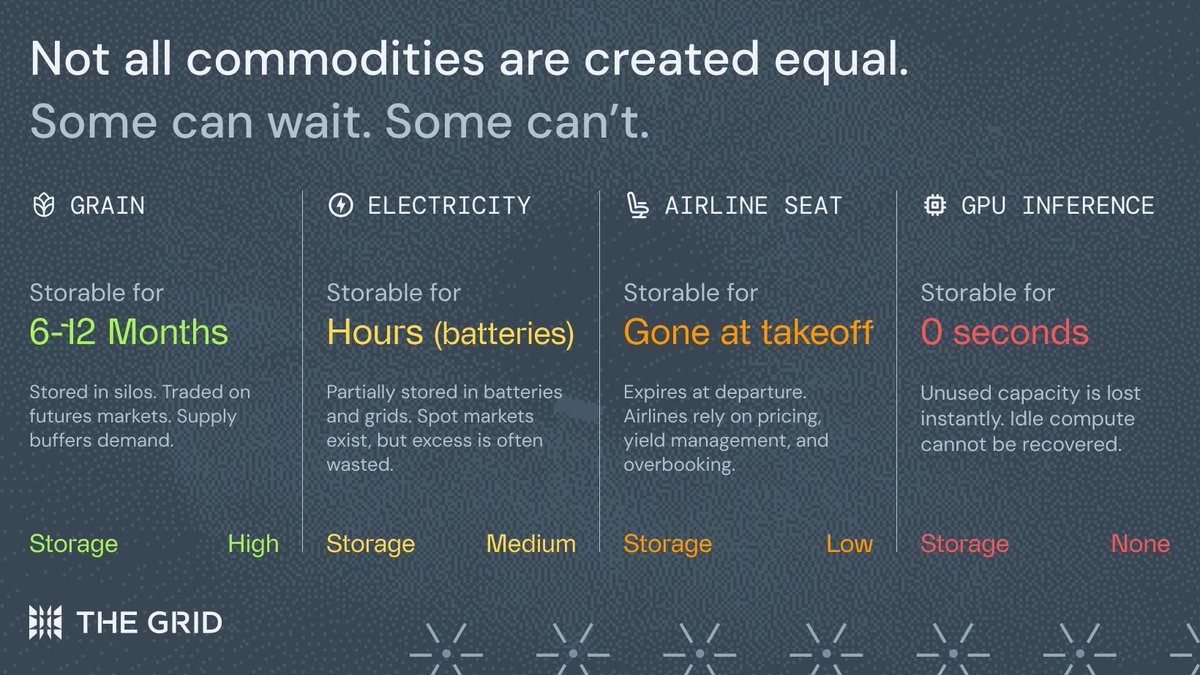

I read Goldman Sachs’ AI report, and I was genuinely impressed. The core insight: agentic AI could turn AI from a capex-heavy cost burden into a business where usage growth drives margin expansion. As token costs fall, more complex agents become economically viable. These agents then consume far more tokens through longer context windows, repeated reasoning loops, validation, tool use, and always-on background monitoring. This increase in token usage improves infrastructure utilization, strengthens unit economics, and gives hyperscalers and model providers more room to reinvest in model quality, distribution, and capacity. In other words, the bull case for AI capex is not simply that usage will grow. It is that this usage growth can increasingly flow through at attractive incremental margins. Goldman Sachs argues that this margin inflection starts to appear from 2026 onward.

GM Miami 🌞

Hello San Francisco!