Pinned Tweet

Prompt Guy

164 posts

@Thinkaiprompt

Need better AI responses? I build AI prompts, productivity hacks, and prompt templates that save time. Start here: https://t.co/h2olJNZNPJ

In the ocean 🐙 Joined April 2025

111 Following · 1.3K Followers

Anthropic Had A Very Bad Week. thinkaiprompt.beehiiv.com/p/anthropic-ha…

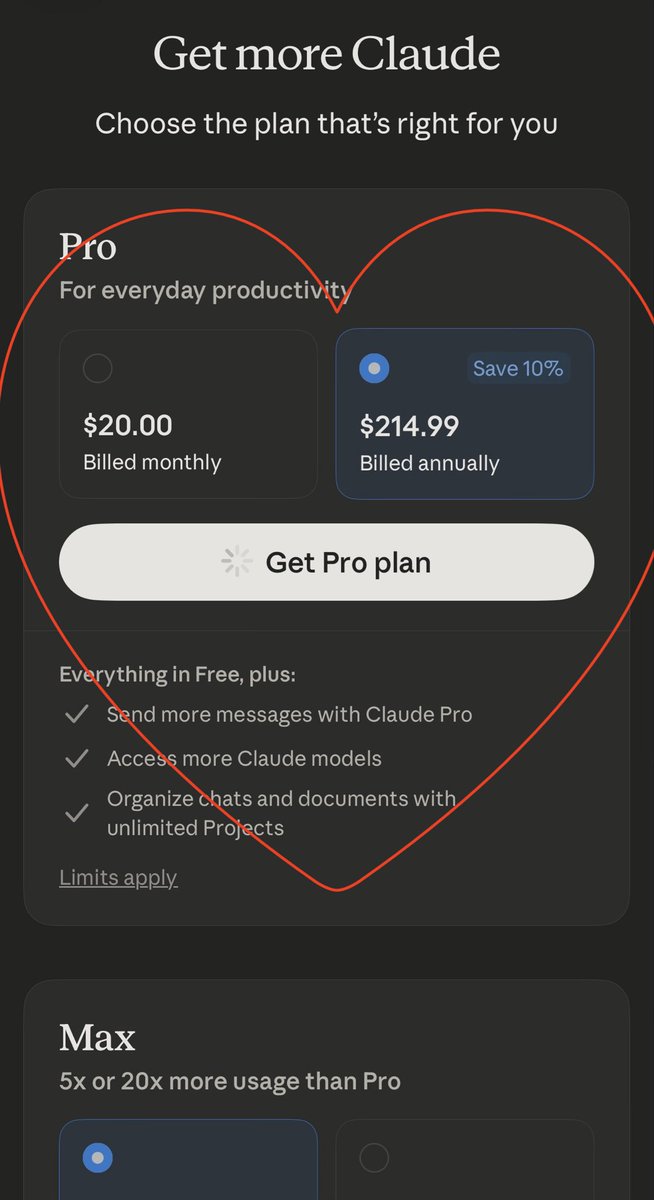

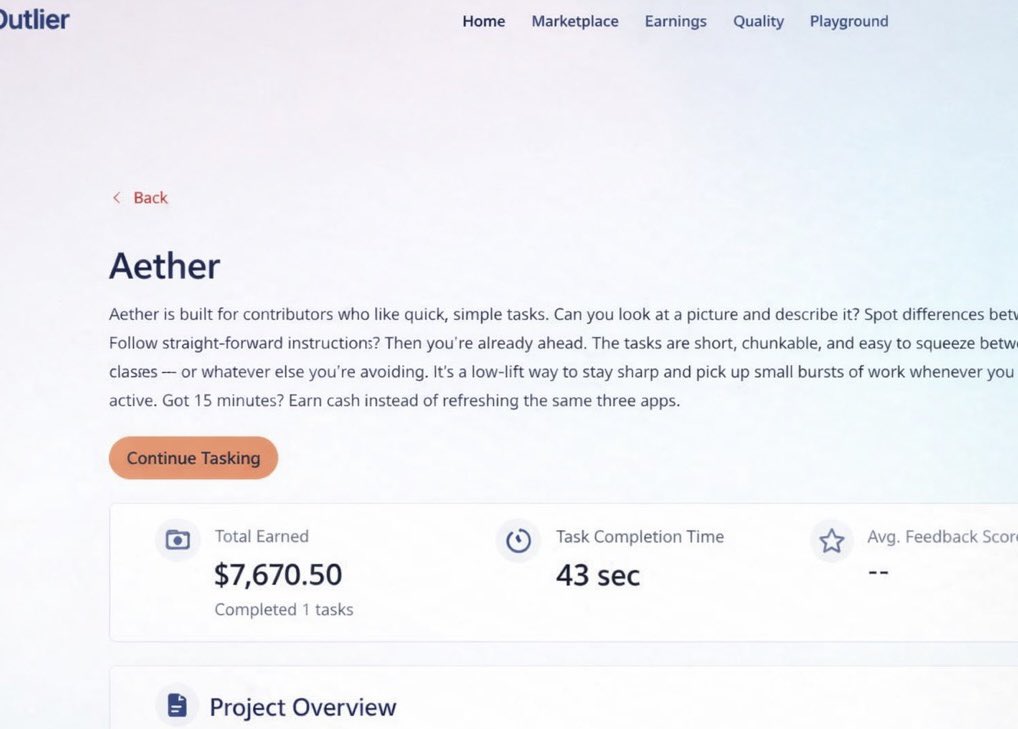

I’ve made over $7K from Outlier

And I’m in Nigeria

But they said Nigerians can’t use it

So what changed? Absolutely nothing.

Most people just don’t set it up right

Outlier pays you to train AI: simple tasks, real money

I’m using it right now from Nigeria

So yes it works

If you want to know how I managed to get my account created

Comment OUTLIER 👇

Google just killed Grammarly and didn't even announce it.

New Gmail update rolls out AI to all 3 billion users this week. Free. No subscription.

It now summarizes your email threads, writes replies in your tone, proofreads better than spellcheck, and lets you search your inbox with plain English like "find the contractor quote from last year."

Features that were paywalled last month. Gone.

The tools quietly dying right now:

• Grammarly — Gmail's proofreader just caught passive voice and word repetition in my test

• SaneBox — the AI Inbox does priority sorting now

• Every app you used to summarize long email chains

The part that's actually wild though — Google trained this on your emails to learn your writing style.

You agreed to it.

Everyone did. Nobody read the update.

Meanwhile half of X is still pasting emails into ChatGPT to clean them up.

You're feeding 3 different AI companies your private conversations to do the same task that now lives inside the app you already had open.

Not judging. Did it this morning.

The feature is on by default. You can turn it off but it kills Smart Features too. Which is a weird tax for wanting privacy.

Google didn't beat the AI startups with better technology.

They just waited until everyone built the habit, then put it in the thing you already use every day.

Classic.

The part that should keep you up at night:

Anthropic’s two conditions weren’t radical. They were: don’t spy on Americans en masse, and don’t let AI make kill decisions on its own (because the tech isn’t reliable enough yet).

Current U.S. law already restricts both of these things. The Pentagon’s own policies technically prohibit them. Altman confirmed the Pentagon agreed to identical terms with OpenAI.

So Anthropic wasn’t asking for something new. They were asking for written guarantees of things that are already supposed to be true.

And they got blacklisted for it.

The AI safety debate just stopped being theoretical. It went from “should companies set ethical limits on AI?” to “will the government punish companies that try?”

Every AI company in the world just watched what happens when you say “we’d like some guardrails please” to the wrong customer.

This story also reveals something about the current relationship between government and tech: compliance is rewarded, independence is punished. The designation that Anthropic received — supply-chain risk — is a tool designed for foreign threats. Using it against a domestic company over contract terms is, according to legal scholars, unprecedented and potentially illegal.

Anthropic says they’ll challenge it in court. OpenAI is publicly asking the Pentagon to extend the same terms to everyone. Silicon Valley workers are signing open letters.

Whether you care about AI safety or not, this is a story about what happens when a company tells the government “no.”

And about the government deciding to make an example of them.

This is the first time in U.S. history an American company has been designated a supply-chain risk to national security.

Here’s why that matters beyond the headlines:

For Anthropic specifically:

Every Fortune 500 company with Pentagon exposure now has to ask their legal team: is using Claude worth the risk? Any contractor, supplier, or partner doing business with the military is barred from commercial activity with Anthropic. The legal challenge will take years. The business damage happens immediately.

For AI companies:

The precedent is set. If you build AI and want government contracts, your terms of service are negotiable — but only in one direction. Push back on how your technology gets used, and you don’t just lose the contract. You get labeled a threat alongside foreign adversaries.

For users:

Anthropic’s consumer products (Claude, the chatbot you might be using right now) aren’t affected by this order. You can still use Claude. But the financial and reputational pressure on the company is real, and it could shape how every AI company makes safety decisions going forward.

For the AI industry:

OpenAI, Google, and xAI all have Pentagon contracts. They’re all watching. The calculus just changed from “what safety standards should we set?” to “what safety standards can we afford to set?”

Sen. Mark Warner called it “bullying” and said it “should scare the hell out of all of us.” Senators Markey and Van Hollen called it “a chilling abuse of government power.”

The question nobody’s answering yet:

↓

TRUMP vs. ANTHROPIC — THE AI STORY NOBODY’S COVERING RIGHT

An American AI company just got labeled a national security threat. Not for spying. Not for data theft. For saying “please don’t use our AI for mass surveillance of Americans.”

Here’s what happened a few days ago:

Anthropic — the San Francisco company behind Claude — had a $200 million contract with the Pentagon. They were the first AI company on classified military networks. Working across the intelligence community and armed services.

The Pentagon wanted full, unrestricted access to Claude for “all lawful purposes.” No exceptions.

Anthropic said: we’re fine with all lawful uses — with 2 narrow limits. No mass domestic surveillance of American citizens. No fully autonomous weapons (because current AI isn’t reliable enough for that yet).

That’s it. Two conditions.

The Pentagon said no deal. Trump posted on Truth Social calling Anthropic “Leftwing nut jobs” and ordered EVERY federal agency to immediately stop using their technology. Six-month phase-out for the military.

Then Defense Secretary Pete Hegseth did something unprecedented:

He designated Anthropic a “Supply-Chain Risk to National Security.”

This label is normally reserved for foreign adversaries. Chinese companies. Russian entities. Nations actively hostile to the U.S.

It’s never been used against an American company before.

Every military contractor, supplier, and partner is now barred from doing any commercial business with Anthropic. Effective immediately.

This is where the story gets wild.

↓

@heygurisingh Good prompts but the $175K isn’t for the docs. It’s for the person who knows which problem actually matters and can get 5 teams to care about it. AI can’t do that part yet.

@deepfates Honestly one of the things I respect most about Anthropic is they just keep building and let the work speak for itself. Dario not folding under pressure is exactly the energy the AI space needs right now.
