Tiger Data - Creators of TimescaleDB

@TigerDatabase

The fastest PostgreSQL cloud platform for time series, real-time analytics, and vector workloads. Creators of TimescaleDB. https://t.co/KhYccImJ5D

Introducing Deviation Capital. Era-defining companies are the exception. They break almost every rule of conventional wisdom as they rise, but start the same way. A founder encounters something broken, invents a way forward others couldn't see, and bets everything on bringing it to life. We launch today as the spinout of Two Sigma Ventures to find, fund, and support these founders, backing technical teams harnessing data and computing to build enduring exceptions.

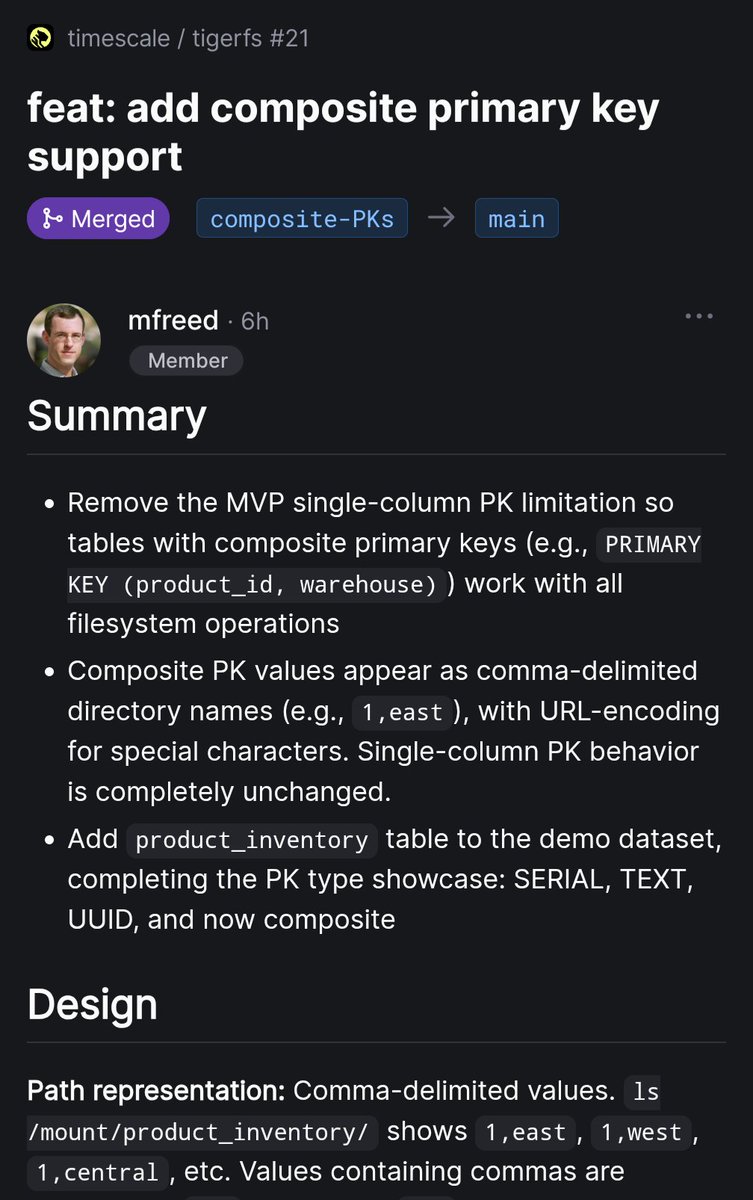

Introducing TigerFS - a filesystem backed by PostgreSQL, and a filesystem interface to PostgreSQL.

Idea is simple: Agents don't need fancy APIs or SDKs, they love the file system. ls, cat, find, grep. Pipelined UNIX tools. So let’s make files transactional and concurrent by backing them with a real database.

There are two ways to use it:

File-first: Write markdown, organize into directories. Writes are atomic, everything is auto-versioned. Any tool that works with files -- Claude Code, Cursor, grep, emacs -- just works. Multi-agent task coordination is just mv'ing files between todo/doing/done directories.

Data-first: Mount any Postgres database and explore it with Unix tools. For large databases, chain filters into paths that push down to SQL: .by/customer_id/123/.order/created_at/.last/10/.export/json. Bulk import/export, no SQL needed, and ships with Claude Code skills.

Every file is a real PostgreSQL row. Multiple agents and humans read and write concurrently with full ACID guarantees. The filesystem /is/ the API.

Mounts via FUSE on Linux and NFS on macOS, no extra dependencies. Point it at an existing Postgres database, or spin up a free one on Tiger Cloud or Ghost.

I built this mostly for agent workflows, but curious what else people would use it for. It's early but the core is solid. Feedback welcome. tigerfs.io
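To make the "mv'ing files between todo/doing/done" idea concrete, here is a minimal Python sketch of how an agent might claim and finish a task using nothing but renames. It is an illustration under assumptions, not TigerFS's own API: the function names, the `agent--` filename prefix, and the `.md` task files are all hypothetical, and the demo runs against an ordinary temporary directory rather than a real mount. Whether a rename on a TigerFS mount gives exactly these "first mover wins" semantics is assumed from the post's claim that writes are atomic.

```python
import os
import tempfile
from pathlib import Path
from typing import Optional


def claim_next_task(root: Path, agent: str) -> Optional[Path]:
    """Try to move one task file from todo/ to doing/.

    Returns the new path, or None if nothing is left to claim.
    The agent-name prefix is a hypothetical convention for this sketch.
    """
    todo, doing = root / "todo", root / "doing"
    for task in sorted(todo.glob("*.md")):
        target = doing / f"{agent}--{task.name}"
        try:
            # Assumption: rename is atomic, so only one agent can win it.
            os.rename(task, target)
        except FileNotFoundError:
            continue  # another agent moved this file first; try the next
        return target
    return None


def finish_task(root: Path, claimed: Path) -> Path:
    """Move a claimed task from doing/ to done/."""
    done = root / "done" / claimed.name
    os.rename(claimed, done)
    return done


def demo() -> dict:
    """Run the workflow in a throwaway directory standing in for a mount."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        for name in ("todo", "doing", "done"):
            (root / name).mkdir()
        (root / "todo" / "001-write-docs.md").write_text("# Write docs\n")
        (root / "todo" / "002-fix-bug.md").write_text("# Fix bug\n")

        first = claim_next_task(root, "agent-a")
        second = claim_next_task(root, "agent-b")
        third = claim_next_task(root, "agent-c")  # nothing left to claim
        finish_task(root, first)

        return {
            "claimed": [first.name, second.name],
            "third": third,
            "todo": sorted(p.name for p in (root / "todo").iterdir()),
            "done": sorted(p.name for p in (root / "done").iterdir()),
        }


if __name__ == "__main__":
    print(demo())
```

On a real mount, `root` would be the mounted directory instead of a temporary one; the shell equivalent of each step is a plain `mv`.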

"RAG is dead." Expose a file system with files that are easy to read or parse, and watch those agents fly. In this video I use two very interesting technologies, TigerFS from @TigerDatabase and LiteParse from @llama_index, to build a purely file-system-driven agent using @claudeai Code and the Claude Agent SDK (video link in first comment).

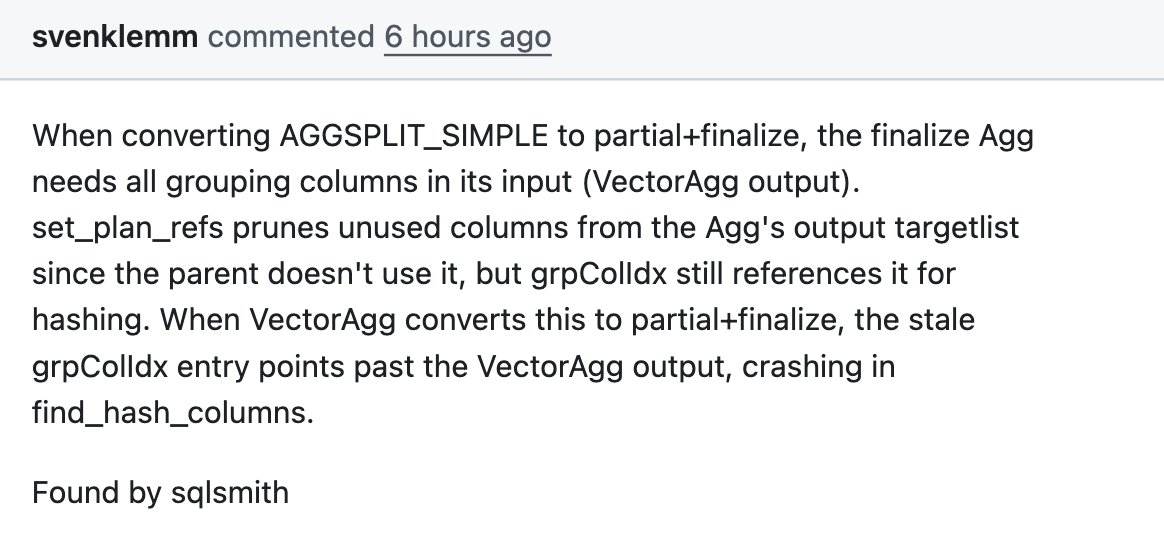

Read what's new in @TimescaleDB 2.26. 🏎️🏎️🏎️