Mike Freedman

@michaelfreedman

Co-founder/CTO, @TigerDatabase / @TimescaleDB 🐯🦄. Professor, @PrincetonCS. Distributed systems, databases, AI, security, networking.

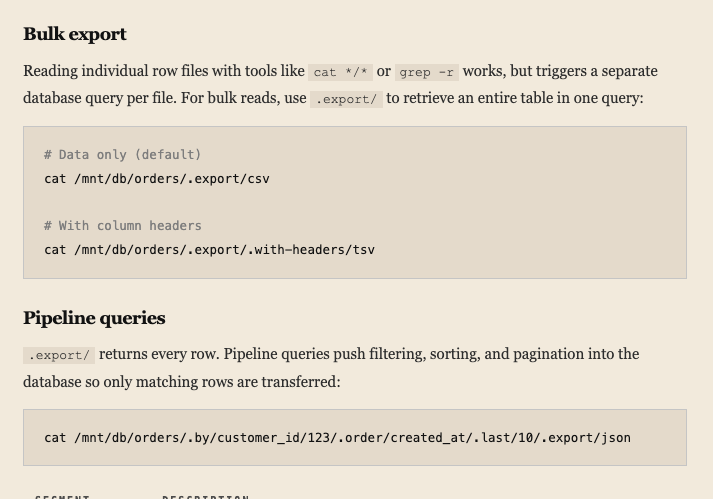

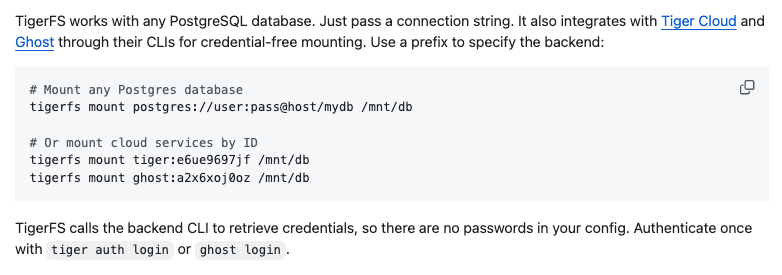

Introducing TigerFS: a filesystem backed by PostgreSQL, and a filesystem interface to PostgreSQL.

The idea is simple: agents don't need fancy APIs or SDKs; they love the filesystem. ls, cat, find, grep. Pipelined UNIX tools. So let's make files transactional and concurrent by backing them with a real database.

There are two ways to use it:

File-first: Write markdown, organize into directories. Writes are atomic, and everything is auto-versioned. Any tool that works with files (Claude Code, Cursor, grep, emacs) just works. Multi-agent task coordination is just mv'ing files between todo/doing/done directories.

Data-first: Mount any Postgres database and explore it with Unix tools. For large databases, chain filters into paths that push down to SQL: `.by/customer_id/123/.order/created_at/.last/10/.export/json`. Bulk import/export, no SQL needed, and it ships with Claude Code skills.

Every file is a real PostgreSQL row. Multiple agents and humans read and write concurrently with full ACID guarantees. The filesystem /is/ the API.

Mounts via FUSE on Linux and NFS on macOS, no extra dependencies. Point it at an existing Postgres database, or spin up a free one on Tiger Cloud or Ghost.

I built this mostly for agent workflows, but I'm curious what else people would use it for. It's early, but the core is solid. Feedback welcome. tigerfs.io
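Claiming a task by mv only works if the rename is all-or-nothing, so that exactly one agent wins a given file. Here is a minimal Python sketch of that claim loop, run against an ordinary local directory. It assumes a TigerFS mount gives `mv` (here `os.rename`) the same atomic semantics as a rename within one local POSIX filesystem; the function names, task file names, and mount point are hypothetical, not part of TigerFS.

```python
import os
import tempfile
from pathlib import Path
from typing import Optional


def claim_next_task(root: Path) -> Optional[Path]:
    """Claim the first available task by moving it from todo/ to doing/.

    Assumes rename is atomic: if two agents race for the same file,
    exactly one rename succeeds and the loser gets FileNotFoundError.
    """
    todo, doing = root / "todo", root / "doing"
    for task in sorted(todo.iterdir()):
        try:
            claimed = doing / task.name
            os.rename(task, claimed)  # the "mv" that claims the task
            return claimed
        except FileNotFoundError:
            continue  # another agent won the race; try the next task
    return None


def complete_task(root: Path, task: Path) -> Path:
    """Mark a claimed task as finished by moving it from doing/ to done/."""
    finished = root / "done" / task.name
    os.rename(task, finished)
    return finished


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        root = Path(d)  # stand-in for a hypothetical TigerFS mount point
        for name in ("todo", "doing", "done"):
            (root / name).mkdir()
        (root / "todo" / "001-write-docs.md").write_text("# Write docs\n")
        (root / "todo" / "002-fix-bug.md").write_text("# Fix bug\n")

        task = claim_next_task(root)
        print("claimed:", task.name)
        print("finished:", complete_task(root, task).relative_to(root))
```

There is no lock file or coordinator in this design: the rename itself is the claim, and losing a race just means moving on to the next file.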
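To make the pushdown idea concrete, here is a hypothetical Python sketch of how a path like `.by/customer_id/123/.order/created_at/.last/10/.export/json` could be parsed and compiled into a single parameterized SQL query. This is not TigerFS's implementation; the table name `orders`, the `PathQuery` type, and reading `.last/N` as "ORDER BY ... DESC LIMIT N" are all assumptions.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class PathQuery:
    table: str
    filters: List[Tuple[str, str]] = field(default_factory=list)  # from .by/<col>/<val>
    order_by: Optional[str] = None  # from .order/<col>
    last_n: Optional[int] = None  # from .last/<n>
    export: Optional[str] = None  # from .export/<format>; applied after the query runs


def parse_path(table: str, path: str) -> PathQuery:
    """Parse a chain of (hypothetical) filter segments into a PathQuery."""
    parts = [p for p in path.split("/") if p]
    q = PathQuery(table)
    i = 0
    while i < len(parts):
        seg = parts[i]
        if seg == ".by":
            q.filters.append((parts[i + 1], parts[i + 2]))
            i += 3
        elif seg == ".order":
            q.order_by = parts[i + 1]
            i += 2
        elif seg == ".last":
            q.last_n = int(parts[i + 1])
            i += 2
        elif seg == ".export":
            q.export = parts[i + 1]
            i += 2
        else:
            raise ValueError(f"unknown path segment: {seg!r}")
    return q


def to_sql(q: PathQuery) -> Tuple[str, list]:
    """Push the parsed filters down into one parameterized SELECT.

    Identifiers are quoted naively here; a real implementation must validate
    them against the catalog. Values go through %s placeholders (psycopg style).
    """
    sql = f'SELECT * FROM "{q.table}"'
    params: list = []
    if q.filters:
        sql += " WHERE " + " AND ".join(f'"{col}" = %s' for col, _ in q.filters)
        params += [val for _, val in q.filters]
    if q.order_by:
        # Assumption: ".last/N" means the N highest values of the order column.
        desc = " DESC" if q.last_n is not None else ""
        sql += f' ORDER BY "{q.order_by}"{desc}'
    if q.last_n is not None:
        sql += " LIMIT %s"
        params.append(q.last_n)
    return sql, params
```

For example, `to_sql(parse_path("orders", ".by/customer_id/123/.order/created_at/.last/10/.export/json"))` yields `SELECT * FROM "orders" WHERE "customer_id" = %s ORDER BY "created_at" DESC LIMIT %s` with parameters `["123", 10]`. The database does the filtering, sorting, and limiting, and the `json` export format would be applied to the returned rows afterwards.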

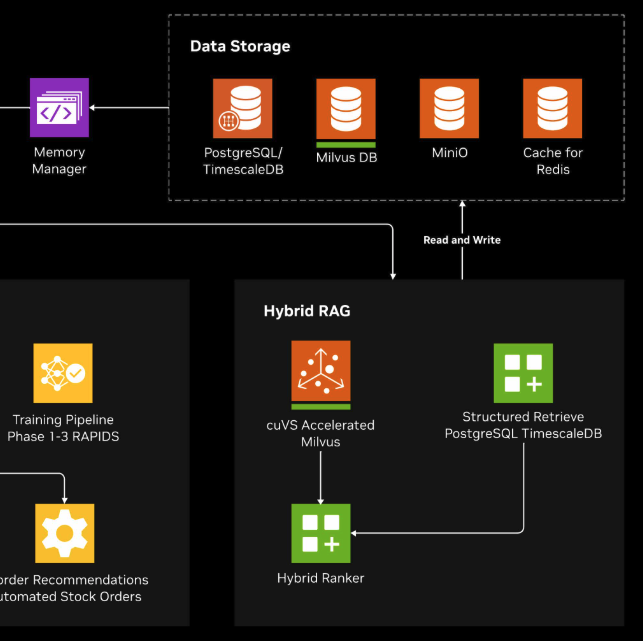

The most important AI systems of the next decade will not live in chat windows. They will run factories, warehouses, energy systems, and fleets. AI is moving from analyzing operations to helping run them.

Earlier this year, @nvidia introduced its Multi-Agent Intelligent Warehouse blueprint. What stood out was not just the agents themselves, but the architecture behind them. Instead of dashboards and alerts, specialized agents coordinate across machine telemetry, robotics systems, workforce operations, forecasting, and inventory to support real-time decisions in the physical world.

You can see the architecture NVIDIA is proposing here: developer.nvidia.com/blog/multi-age…

And the full blueprint here: build.nvidia.com/nvidia/multi-a…

Systems like this depend on continuous access to operational data. Factories, warehouses, and energy systems already generate massive streams of telemetry from sensors, robots, PLCs, and machines. The challenge has never been collecting the data. The challenge is reasoning over it quickly enough to act.

The architecture starts to look like this:

machines → telemetry → database → AI agents → decisions → machines

This creates a real-time operational data loop. Agents do not operate in isolation. They need access to the operational history of the systems they manage: telemetry, events, anomalies, and trends over time. In agent-driven industrial systems, the database becomes the memory layer for machines.

Many industrial platforms already rely on Postgres and TimescaleDB to store and analyze time-series telemetry from machines and infrastructure. At Tiger Data, the company behind TimescaleDB, we see this pattern across industrial IoT platforms, fleet monitoring systems, and manufacturing analytics.

The future of industrial AI is not just better models. It is systems that can continuously reason across operational data.
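The loop machines → telemetry → database → AI agents → decisions → machines can be sketched in a few lines. This minimal Python example uses an in-memory SQLite table as a stand-in for a Postgres/TimescaleDB telemetry table, and a fixed temperature threshold as a stand-in for an AI agent; the schema, machine names, and the `limit_c` threshold are hypothetical.

```python
import sqlite3
from typing import Iterable, List, Tuple

Reading = Tuple[str, int, float]  # (machine_id, ts, temperature_c)


def open_store() -> sqlite3.Connection:
    """In-memory SQLite as a stand-in for a Postgres/TimescaleDB telemetry table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE telemetry (machine_id TEXT, ts INTEGER, temperature_c REAL)")
    return conn


def ingest(conn: sqlite3.Connection, readings: Iterable[Reading]) -> None:
    """machines -> telemetry -> database: append raw readings."""
    with conn:
        conn.executemany("INSERT INTO telemetry VALUES (?, ?, ?)", readings)


def decide(conn: sqlite3.Connection, since_ts: int, limit_c: float) -> List[Tuple[str, str]]:
    """database -> agent -> decisions: a fixed threshold rule standing in for an AI agent.

    The decision uses the average over a recent window of history,
    not just the latest reading.
    """
    rows = conn.execute(
        "SELECT machine_id, AVG(temperature_c) FROM telemetry"
        " WHERE ts >= ? GROUP BY machine_id ORDER BY machine_id",
        (since_ts,),
    )
    return [(machine, "throttle" if avg > limit_c else "ok") for machine, avg in rows]
```

The point of the sketch is the shape of the loop: the agent's decision is a query over recent operational history in the database, and its output would be sent back to the machines that produced the telemetry.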

AgentFS is from @tursodatabase, not Neon. But it's (subtly but meaningfully) different from TigerFS. AgentFS is literally trying to build a full filesystem backed by SQLite: files are broken into segments, and the segments are stored in the database. It's a more "traditional" view of a remote storage layer.

TigerFS started from the idea of exposing the database as a filesystem (not vice versa), then added the reverse: building "apps" on top of that abstraction layer. That's how we get files with directories (especially markdown), auto-history, etc. These are synthesized "apps" built on top of it.
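The difference between the two storage models can be illustrated with two toy schemas. This is not the actual schema of AgentFS or TigerFS; it is a minimal Python sketch that uses SQLite for both models only so it runs without a server (TigerFS itself is backed by PostgreSQL). The table names, function names, and the 4-byte `SEGMENT_SIZE` are hypothetical.

```python
import sqlite3

SEGMENT_SIZE = 4  # bytes; tiny on purpose so short files span several segments


def create_schemas(conn: sqlite3.Connection) -> None:
    # Model A: one row per file (the "every file is a row" view).
    conn.execute("CREATE TABLE files (path TEXT PRIMARY KEY, body BLOB)")
    # Model B: a file is split into fixed-size segments, one row per segment.
    conn.execute(
        "CREATE TABLE segments (path TEXT, idx INTEGER, data BLOB, PRIMARY KEY (path, idx))"
    )


def write_as_row(conn: sqlite3.Connection, path: str, data: bytes) -> None:
    with conn:
        conn.execute("INSERT OR REPLACE INTO files VALUES (?, ?)", (path, data))


def read_as_row(conn: sqlite3.Connection, path: str) -> bytes:
    (body,) = conn.execute("SELECT body FROM files WHERE path = ?", (path,)).fetchone()
    return body


def write_as_segments(conn: sqlite3.Connection, path: str, data: bytes) -> None:
    chunks = [
        (path, n, data[off : off + SEGMENT_SIZE])
        for n, off in enumerate(range(0, len(data), SEGMENT_SIZE))
    ]
    with conn:  # replace all segments of the file in one transaction
        conn.execute("DELETE FROM segments WHERE path = ?", (path,))
        conn.executemany("INSERT INTO segments VALUES (?, ?, ?)", chunks)


def read_as_segments(conn: sqlite3.Connection, path: str) -> bytes:
    rows = conn.execute("SELECT data FROM segments WHERE path = ? ORDER BY idx", (path,))
    return b"".join(data for (data,) in rows)
```

In the row-per-file model, each file is directly a database record that can also be queried as data; in the segmented model, the database acts as a block store underneath a conventional filesystem, which is closer to the "traditional" storage-layer view.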