Tim Salzmann

19 posts

@TimSalzmann

PhD Student at TUM and Google DeepMind

Currently toying with the idea of switching to an iPhone 15 Pro. I use a Pixel 8 Pro as my daily driver, but since I am developing iOS-only apps, not having an iPhone in my pocket is kind of annoying tbh. Love the Pixel so much, but that Natural Titanium Pro looks rather good... 👀 What do you think?

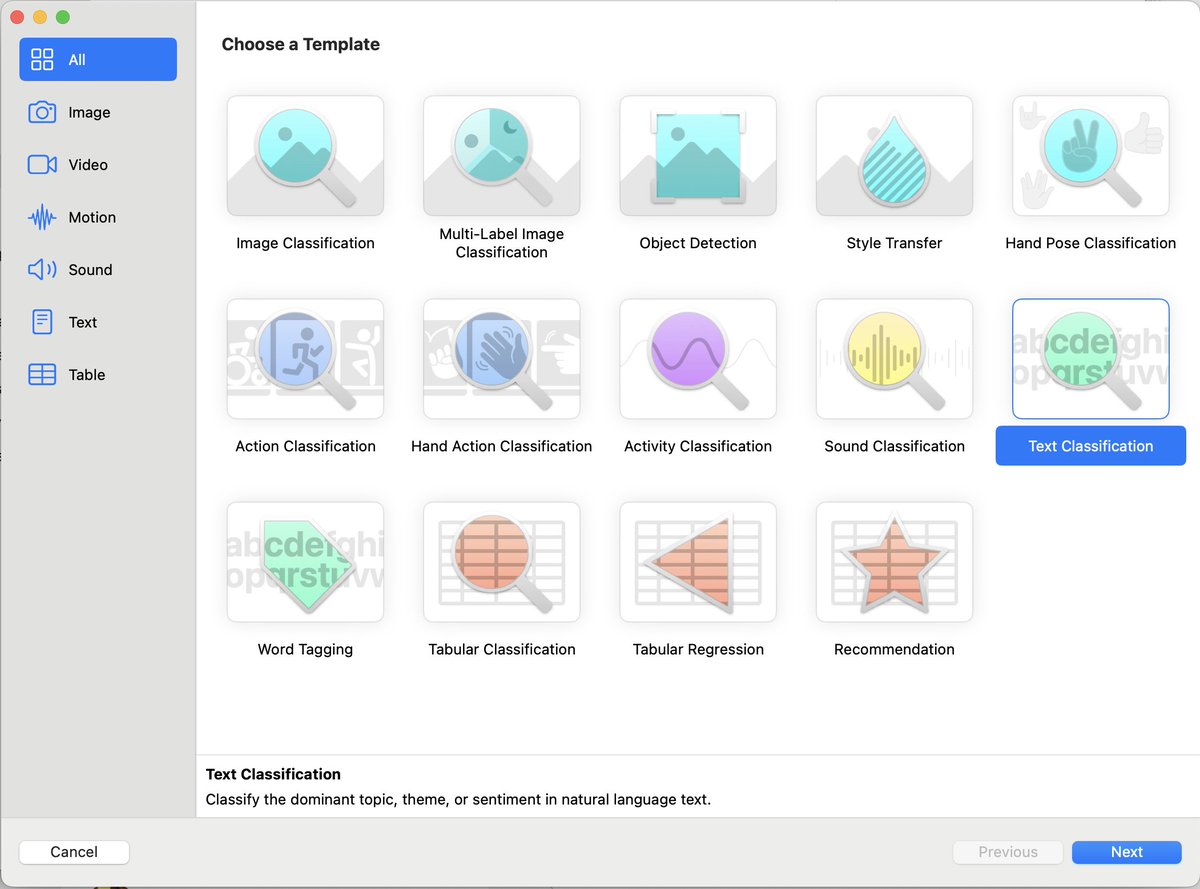

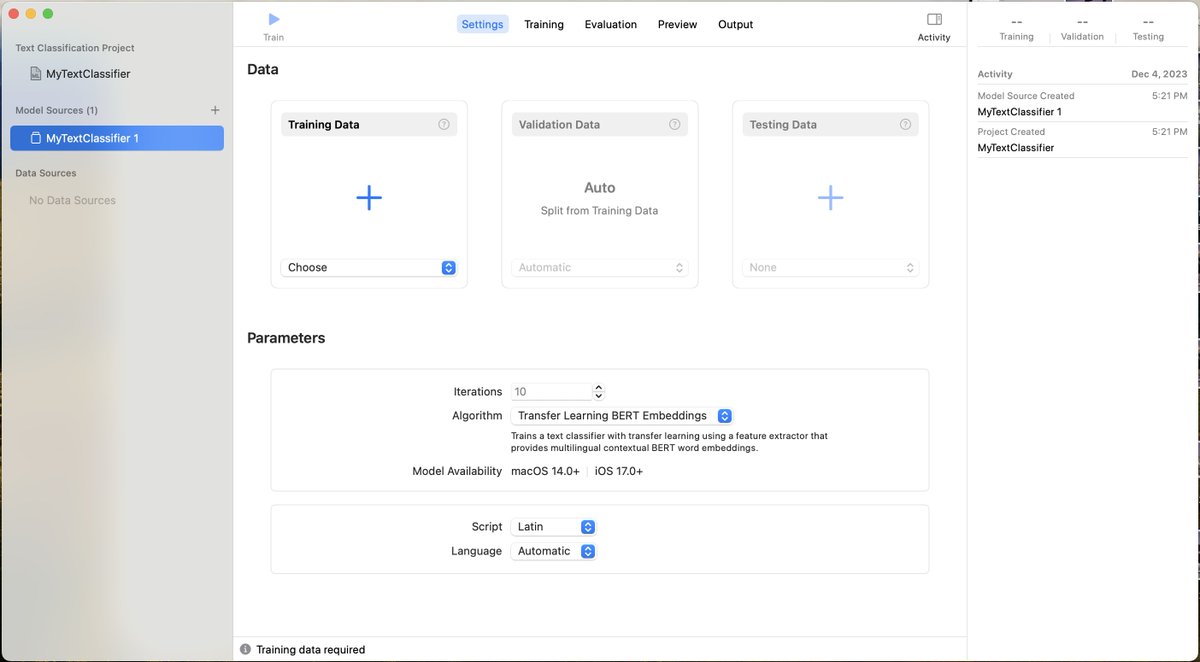

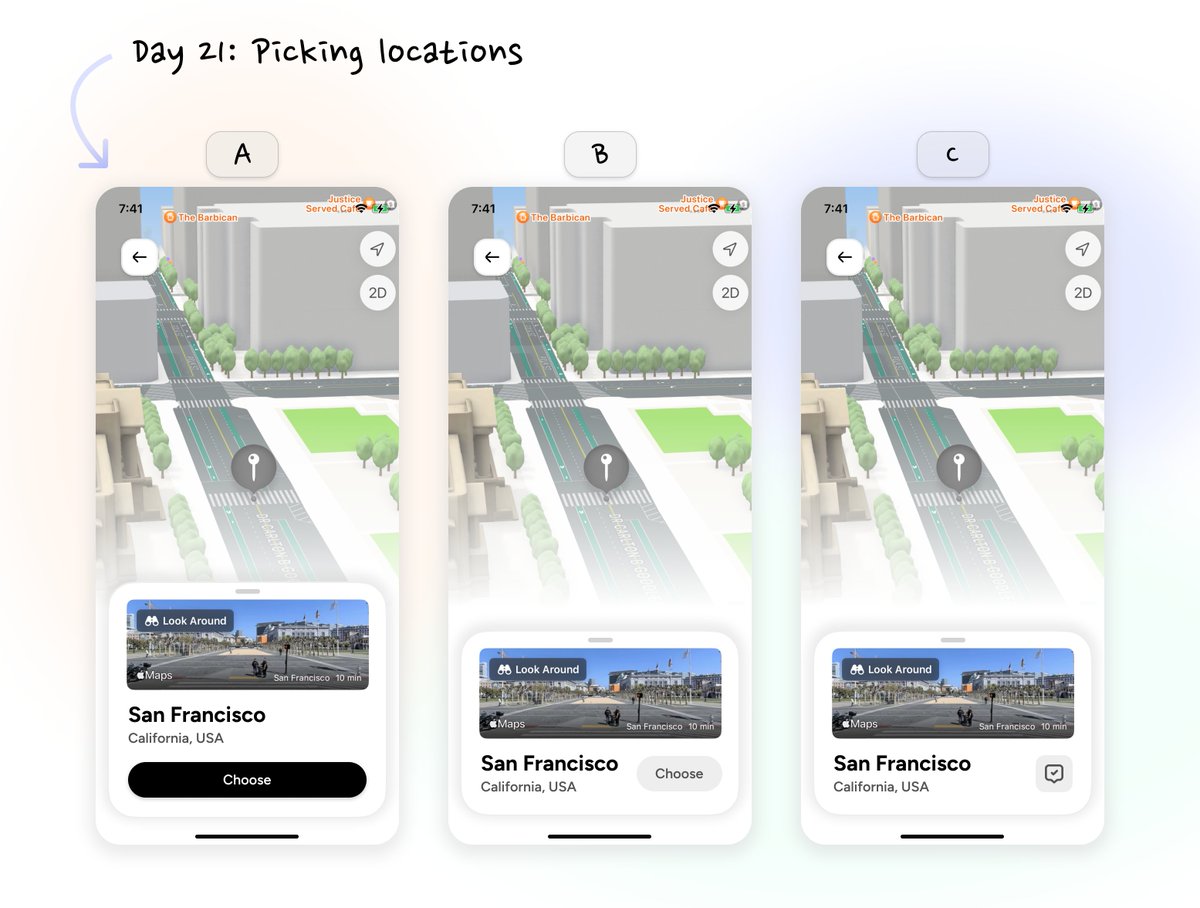

One thing left is tagging user entries. Yesterday, I generated a huge dataset using GPT and then trained a CoreML model to use in the app. However, sometimes it works great, sometimes not so much. Does anyone have a good idea for a tagging system or a great picker UI? #buildinpublic

In our Real-time Neural MPC paper, we use networks with 4000x larger capacity than prior implementations inside optimization problems. We are now releasing L4CasADi, which makes it easy to integrate PyTorch models into optimization problems on CPU and GPU, with fast C code generation and seamless integration in Acados.