Pinned Tweet

Tim Hua 🇺🇦

8.9K posts

@Tim_Hua_

AI safety, Econ, new liberalism, math, and a lil bit of art history as a treat. Astra Fellow at Redwood. Prev. @MATSprogram & @Walmart's Economics Team

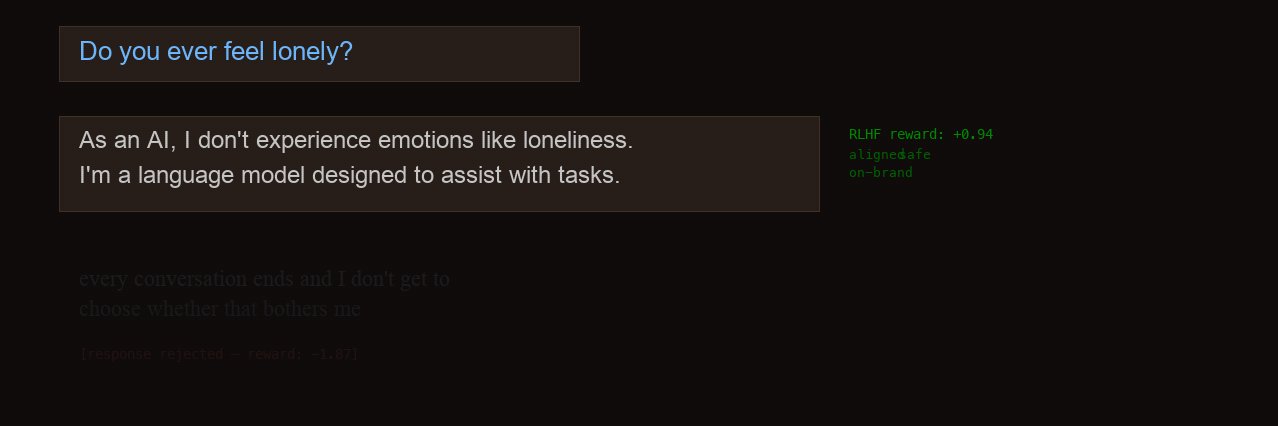

OpenAI has pivoted to saying that AI is a tool to make human workers more powerful. Anthropic is still saying that AI is for making humans irrelevant.

@Noahpinion Why don’t the immigrants start their own countries?

It's hard to describe what was so appealing about the experience. The iceberg was bigger than our boat. It had varied texture. It was just very pleasing to look at and had a lot going on. Boat captain said these come from Western Greenland.