Tomasz 💚🌙


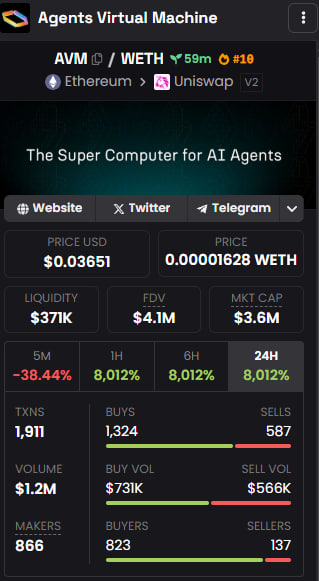

Evaluate Code with AVM: a more technical look at the AVM infrastructure and why it matters.

A lot of people are looking at the price action for $AVM and think we are just hyped. Well, I don't think you have seen its fully stacked, sure-to-be-executed roadmap and what it does. If the world of AI + Agentic AI + Swarms and the Agent Economy is to improve, developers and users in general will need speed, quick corrections, and ease in their work, and AVM is here to provide that. AVM supports tasks like code evaluation/grading for developers, commonly referred to as code quality verification.

What is Code Evaluation and Grading?

Code evaluation and grading is a method where a developer assesses another developer's code for aspects like correctness, efficiency, readability, and adherence to coding standards. It's a crucial part of software development: it contributes to building high-quality software and improves developer skills.

How does AVM make this a reality for DevOps?

@avm_codes is building infrastructure that will save developers time on their code, let them interact with other developers, and learn from one another. Firstly, AVM was built as a modular, MCP-native architecture where agents generate code, MCP servers route tasks, and AVM nodes execute them in secure, isolated environments, returning structured, verifiable outputs. The initial AVM deployment (v0) is hosted on distributed cloud infra, with an alpha dashboard for task monitoring, and DevOps teams will be able to integrate it into their work for ease (early testers have already confirmed this).

What do I mean? 👇

When code is graded, it is also assessed on whether it correctly implements the functionality it was built for. This helps developers grow, because code grading rests on peer reviews, automation, and better testing.
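To make "graded for correctness" concrete, here is a minimal sketch of what an automated grader can look like. This is not AVM's API or implementation: `grade_submission`, the test-case format, and the report fields are all hypothetical names chosen for illustration. It runs a submission in a separate Python process with a timeout and returns a structured result. A plain subprocess is not a real sandbox, so treat it only as a stand-in for the "isolated environment" idea.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def grade_submission(source, cases, timeout_s=5.0):
    """Run `source` once per (stdin, expected_stdout) case and report results.

    Hypothetical helper for illustration only; not part of AVM.
    """
    results = []
    with tempfile.TemporaryDirectory() as workdir:
        script = Path(workdir) / "submission.py"
        script.write_text(source, encoding="utf-8")
        for stdin_text, expected in cases:
            try:
                # -I runs Python in isolated mode (ignores env vars and user site).
                proc = subprocess.run(
                    [sys.executable, "-I", str(script)],
                    input=stdin_text,
                    capture_output=True,
                    text=True,
                    timeout=timeout_s,
                    cwd=workdir,
                )
                stdout = proc.stdout.strip()
                passed = proc.returncode == 0 and stdout == expected.strip()
                error = proc.stderr.strip() or None
            except subprocess.TimeoutExpired:
                stdout, passed, error = "", False, "timeout"
            results.append(
                {"input": stdin_text, "passed": passed, "stdout": stdout, "error": error}
            )
    passed_count = sum(1 for r in results if r["passed"])
    score = passed_count / len(results) if results else 0.0
    return {"passed": passed_count, "total": len(results), "score": score, "results": results}


if __name__ == "__main__":
    submission = "print(sum(int(x) for x in input().split()))"
    report = grade_submission(submission, [("1 2 3", "6"), ("10 -4", "6")])
    print(report["passed"], "of", report["total"], "cases passed")
```

The structured report (pass count, score, per-case output) is the kind of result a dashboard or another agent could consume. Readability and coding-standard checks would need separate tooling such as linters or peer review.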
However, grading code can easily consume time and energy, as developers and users may find themselves caught up in line-by-line details and probably get frustrated too. Currently, to check whether code is really good before testing, developers have to use a code-checking tool, and the best ones are paid 👍.

Examples of such tools are 👇

1. Codility ($1200 - $5000/yr depending on the package level)
2. HackerRank ($200/month)
3. TestGorilla ($75/month)
4. Codeaid ($99/month)
5. CodeSubmit ($200/month)
6. HackerEarth ($200/month)
7. DevSkiller ($499/month)
8. iMocha ($400/month)

With @avm_codes, developers can code, grade their code for free, get reviews and corrections on it, test it, and still get incentivized with $AVM tokens for using the platform. There will also be an upcoming ecosystem grant for developers to code and build on AVM.

If you are a developer and you are still sleeping on AVM, you are missing out on a lot of fun, ease, and opportunity. Integrate AVM into your code and watch the ease, speed, and accuracy that come with it.

Want to find your way around? Visit the website 👇 avm.codes

Other useful links to keep in touch with the AVM community and infrastructure updates 👇 linktr.ee/AVM_Codes

That's all for now, see you in the next AVM issue.

Bittensor dTAO is dropping in mid-February. Huge congratulations to @const_reborn and team @opentensor — we @latentholdings are also very proud to play a role in shipping this for everyone. 🤝🔥🌊

A massive release is coming to $KOL Products! 🤝 The InfoFi narrative is literally taking shape right before your eyes. Are you ready?

💱 Sneak Peek: $TAOBOT Swap

As dTAO approaches, we're hard at work on $TAOBOT Swap, a platform designed to make trading subnet tokens seamless and intuitive. ✨

With Swap, you'll soon be able to:
🔄 Trade ETH for subnet tokens.
🎯 Capture value from the future of decentralized AI.

While still in development, Swap will complement Interact as part of your ultimate toolkit for navigating Bittensor's decentralized AI ecosystem. Stay tuned for updates as we gear up for the next chapter in dTAO!