Curtis

Snapshot, subject to change. Clearwater Forest 1H 2026. Diamond Rapids mid 2027, 16CH. Coral Rapids mid 2028, starting with 8CH. As mentioned in Q1 call, may be accelerated. Crescent Island and Crescent Island Workstation late 2026, Xe3p. Jaguar Shores late 2027, Xe4.

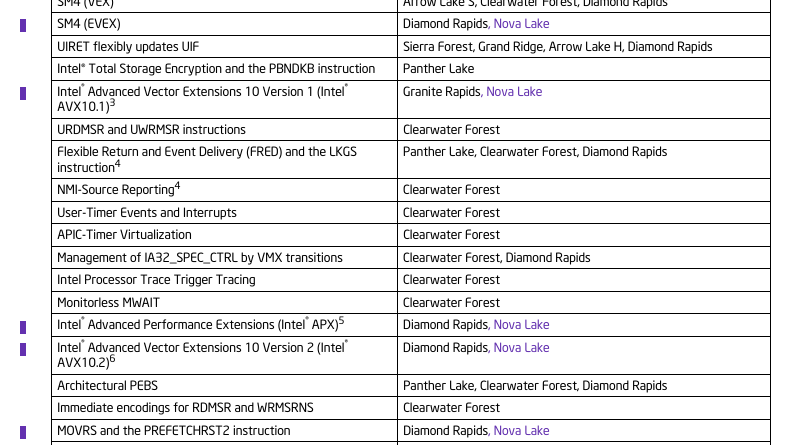

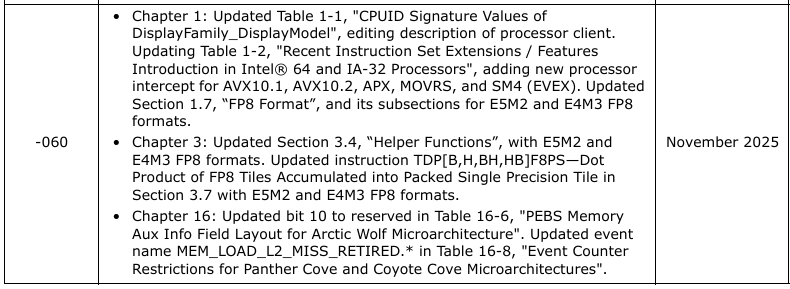

#Intel re-released the 59th edition of the ISA Extensions Reference with #USER_MSR clarifications. Download: cdrdv2-public.intel.com/865891/319433-… #DiamondRapids #NovaLake #WildcatLake #PantherCove #CoyoteCove #ArcticWolfs

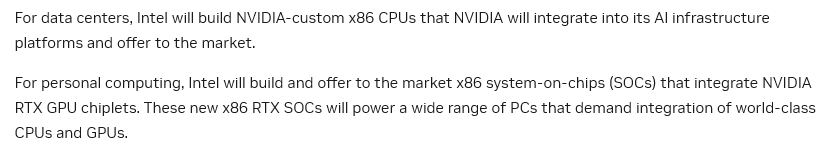

Huge deal between $NVDA and $INTC. NVIDIA and Intel announced a multi-generation collaboration across PC and datacenter, and NVIDIA will invest $5B in Intel at $23.28 per share. The joint solution will be a tight coupling of Intel x86 CPUs and NVIDIA RTX GPUs over NVLink for PCs and data-center platforms. Timeframes are TBD and current roadmaps with Arm will not change, per sources, meaning NVIDIA will offer both.

Overall
• PCs: Intel will build and sell x86 SoCs integrating NVIDIA RTX GPU chiplets.
• Data center: Intel will build NVIDIA-custom x86 CPUs that NVIDIA integrates into its AI platforms.
• Financial: NVIDIA is committing $5B to Intel equity at $23.28/share.
• Scope: Product collaboration, not a foundry manufacturing deal.

Why it matters
• Windows/x86 PCs: Higher-bandwidth, lower-latency CPU-GPU coupling should lift AI inference, pro-app and gaming performance versus discrete PCIe designs.
• Data center: NVIDIA gains an x86 option alongside Arm; Intel attaches CPUs into NVIDIA’s fastest-growing AI platforms.

What we don’t know yet
• Ship timing, ramp cadence, and how many generations.
• NVLink specifics (bandwidth, flavor, coherence) and CPU-DRAM/GPU-HBM memory topology.
• Process nodes and packaging (EMIB/Foveros) for the PC SoC and the custom DC CPU.
• PC SoC scope (NPU, power targets, die/chiplet counts) and NVIDIA rack-level designs.
• Software stack details (CUDA/driver model on Windows/x86; Linux support).
• Commercial mechanics of RTX chiplets inside an Intel-sold SoC and CPU attach accounting on NVIDIA platforms.

My take: There’s no doubt this is BIG for Intel and GOOD for NVIDIA. If execution lands, this gives Windows AI PCs a credible scaling path and gives data-center buyers an x86 choice inside NVIDIA platforms, without blowing up existing roadmaps. Now it’s about silicon, software, and speed of OEM and rack-scale design engineering. It makes life more difficult for $AMD and $ARM, but without more details, it’s hard to assess.
On PC, a high-performance notebook with tightly coupled Intel+NVIDIA seems strong for AI, gaming and workstation. While deets are slim, it’s interesting to think about multi-GPU configs (are we back?). On datacenter, it’ll come down to choice: choose Arm or Intel, as customers did before. It’ll come down to right-brain, performance per watt. Will this datacenter optionality be more confusing for customers, or will there really only be one solution? In my scenario planning over the past year, I had expected Intel to offer NVIDIA an x86 license to create its own CPUs, but this is not part of this deal. I also thought we’d see some more foundry commits. It’s great that the x86 chipsets and the PC combo solution will use foundry, but there are no GPUs on Intel Foundry in this announcement. Press conference at 10AM PST with LBT and Jensen.

The @Intel Xeon 6980P vs. @AMDServer EPYC Power Efficiency / Performance-Per-Watt Benchmarks: a look at the CPU power consumption and perf-per-Watt for the new Xeon 6 Granite Rapids. phoronix.com/review/intel-x…

@tldtoday Any proof for the argument that the Studio Display is “more HDR than most monitors that label themselves HDR”? That EDR article doesn’t state that. I wonder where you came up with it. Seriously, I want to read it.