TrendNinja 🥷

173 posts

@TrendNinjaApp

Supply chain constraints → stock moves. Before Bloomberg. Capital maps. Novelty gaps. Who wins. Who loses. 🥷 https://t.co/ktCqqHLgN2

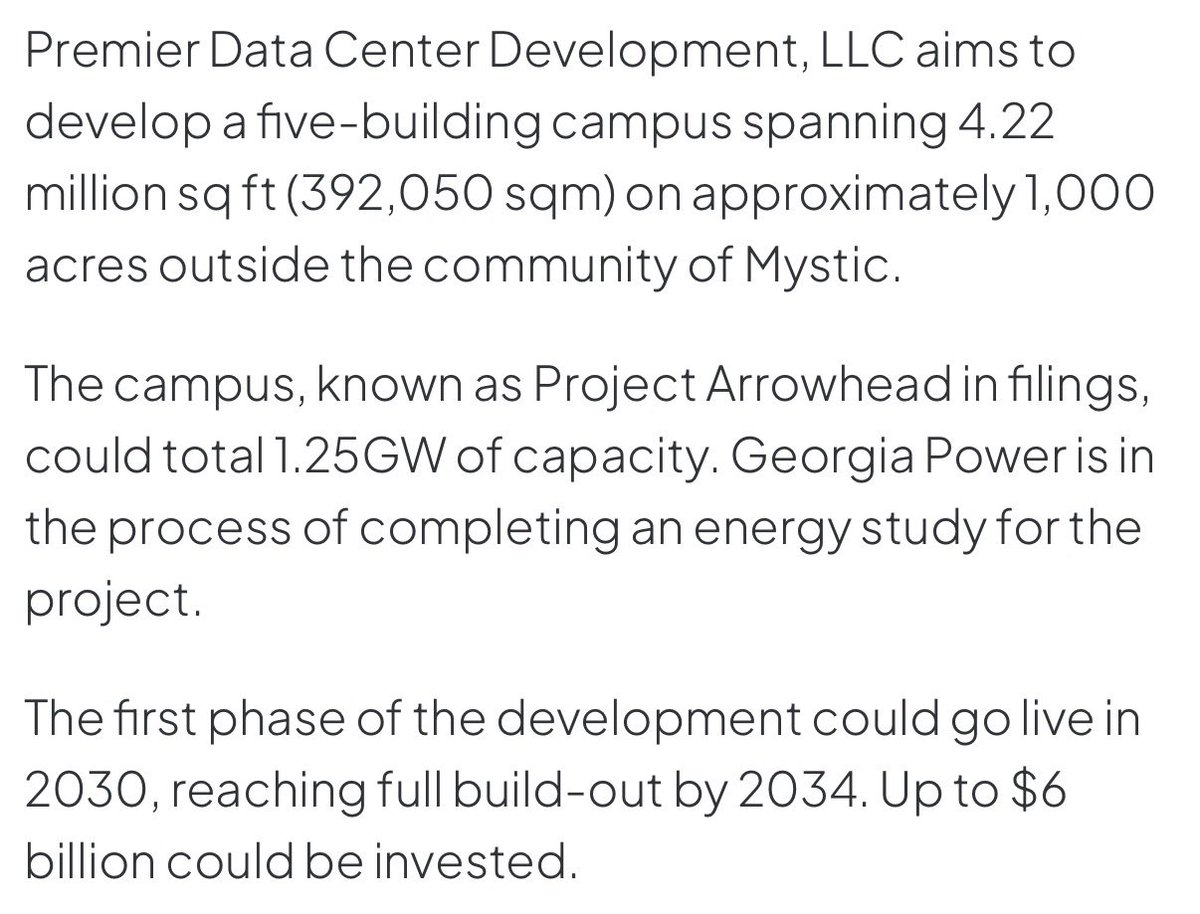

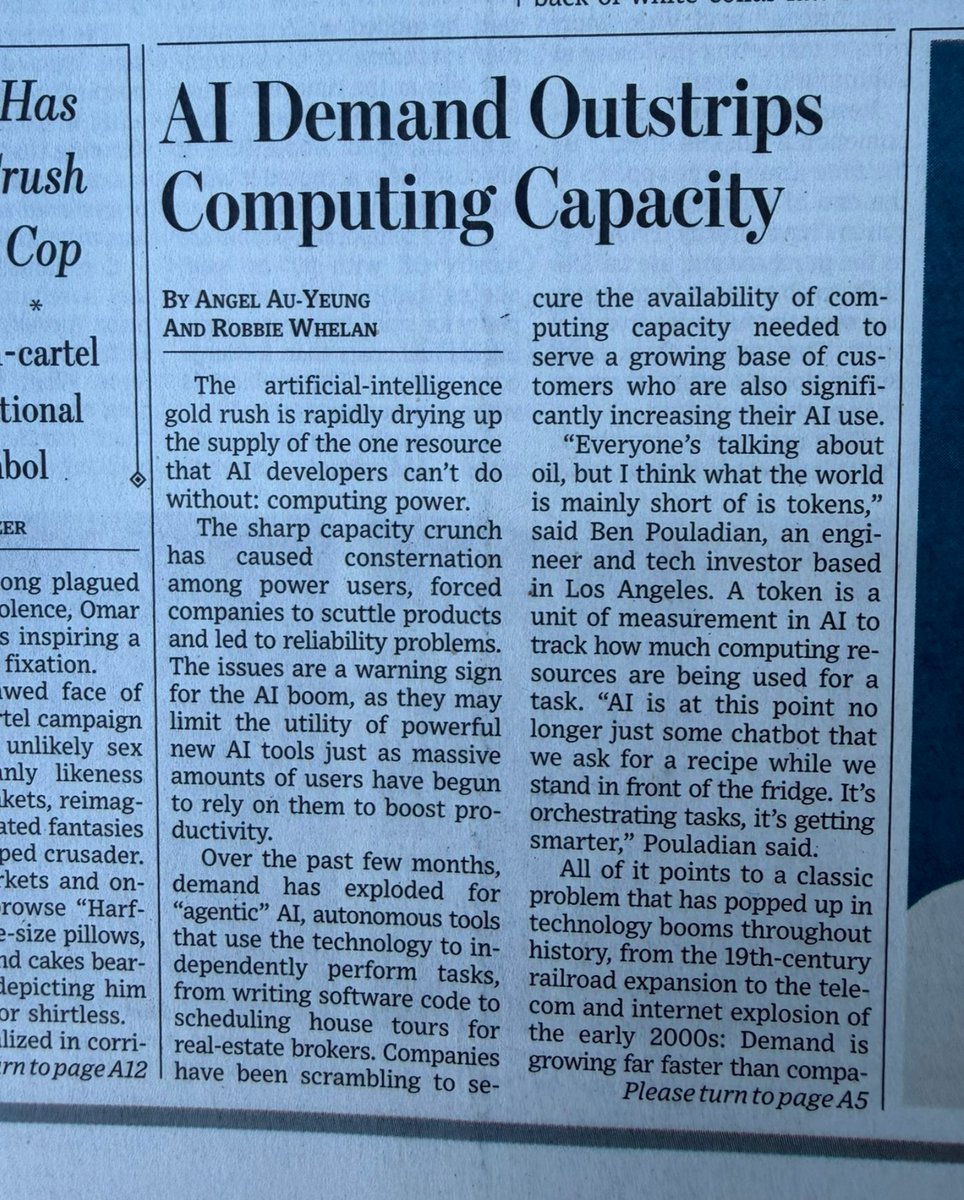

It's almost as if there is overwhelming, accelerating demand for AI compute, and the mainstream media is finally covering what I have been pounding the table on and writing about for many months regarding Nvidia, OpenAI, and Anthropic. $NVDA

WSJ: "Over the past few months, demand has exploded for “agentic” AI, autonomous tools that use the technology to independently perform tasks, from writing software code to scheduling house tours for real-estate brokers. Companies have been scrambling to secure the availability of computing capacity needed to serve a growing base of customers who are also significantly increasing their AI use."

"Hourly rental prices for GPUs, the microchips used to train and run AI models, have surged since the fall."

"Spot-market prices to access Nvidia’s GPUs, or graphics processing units, in data-center clouds have risen sharply in recent months across the company’s entire product line, according to Ornn, a New York-based data provider that publishes market data and structures financial products around GPU pricing."

Dylan Patel says GPUs are no longer the biggest bottleneck. According to @dylan522p, CPUs are now the constraint.

In the early AI era, CPUs were the laggards. You used them for storage, checkpointing, pre-processing, etc. (pretty light workloads). The models weren't agentic and couldn't go step by step. Just string in and string out (simple inference).

Then OpenAI launched o1-preview in September '24, and RL training loops have since tightened every month:

- initially it was checking model output with regex
- then running classifiers
- followed by code unit tests + compilation
- and finally agentic flows calling databases & scientific simulations

The model outputs to an environment, gets verified, and trains on it.

Coding agent revenue went from a couple billion to north of $10B in roughly 6 months. Something like Codex 5.4 can work agentically on its own for 6-7 hrs straight, doing all sorts of calls (databases, cron servers, scraping). That requires insane CPU capabilities.

And over the last two quarters, the entire cloud market ran out of CPUs:

- GitHub has been really unstable lately
- Amazon's CPU server installations 3x'd year over year
- Microsoft sold all of its spare CPUs to Anthropic & OpenAI

Earlier, it was 100 megawatts of GPUs served by 1 megawatt of CPUs. Now that ratio is getting much closer for both RL training and agentic inference. There's simply no capacity anywhere, and it's causing massive instability.

$AMD | Why EPYC CPU is worth >$1 Trillion alone 🧵

Not Financial Advice!

AMD's EPYC server CPU business should, on a standalone valuation, exceed a $1 trillion market cap. This is not hype; it is driven by structural, explosive demand from the inference/agentic AI era, combined with AMD's accelerating market share, chiplet-driven differentiation, and sustained pricing power from multi-year supply constraints. Current total AMD market cap sits at ~$350 billion, so this implies EPYC becoming the dominant value driver, with the rest of the portfolio (Instinct GPUs, Client/Gaming) as upside.

EPYC isn't a legacy CPU play; it's the "orchestration engine" and bottleneck-solver for agentic AI clusters. Every large-scale AI deployment still requires dense x86 CPUs for workload management, data routing, tool calling, verification loops, enterprise integration, and keeping GPUs utilized at 80%+ without idling. Agentic AI (multi-step autonomous agents) multiplies token/compute demand 5–50x per interaction versus simple inference, shifting the CPU:GPU ratio higher and making high-core EPYC platforms indispensable.

1. Some facts first:

The lowest end of the FY2026 projection:

- AI GPUs: $40-$50B (I'm very conservative already)
- EPYC Data Center: $15-$20B (EPYC may contribute as much revenue in 2027 due to explosive agentic AI demand)
- Client Segment: $12-$13B
- Gaming: $6B
- Embedded: $4-$5B
- Total Revenue: $77-$94B
- Non-GAAP net income: $19.3B-$23.5B
- Non-GAAP EPS: $12-$14.7

Each Helios rack comes with ~18 compute trays, with 4 GPUs + 1 EPYC Venice ("Zen 6") CPU per tray:

- 72x MI455X and 18 EPYC Venice per rack
- Total system includes 31 TB of HBM4 memory, up to ~2.9 exaFLOPS of FP4 AI performance (or ~1.4 exaFLOPS FP8), and high-bandwidth interconnects

So 1 GW = $20B-$25B, a combination of MI455X, EPYC, Pensando Vulcano networking, and UALink.
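The segment figures and rack counts above can be cross-checked with a few lines of arithmetic. This is a minimal sketch, not a model. The inputs are the thread's own numbers, while the variable names, the implied net margin, and the implied share count are derived here for illustration and are not stated in the thread.

```python
# Segment revenue ranges in $B as (low, high), copied from the thread.
segments = {
    "AI GPUs": (40, 50),
    "EPYC Data Center": (15, 20),
    "Client": (12, 13),
    "Gaming": (6, 6),
    "Embedded": (4, 5),
}

# Sum the low and high ends to compare with the quoted $77-$94B total.
total_low = sum(low for low, _ in segments.values())
total_high = sum(high for _, high in segments.values())

# Thread's non-GAAP net income ($B) and EPS ($) ranges.
net_income = (19.3, 23.5)
eps = (12.0, 14.7)

# Derived (not stated in the thread): implied net margin and share count.
implied_margin = (net_income[0] / total_low, net_income[1] / total_high)
implied_shares_b = (net_income[0] / eps[0], net_income[1] / eps[1])

# Helios rack composition as described: 18 trays, 4 GPUs + 1 CPU per tray.
TRAYS_PER_RACK = 18
GPUS_PER_TRAY = 4
CPUS_PER_TRAY = 1
gpus_per_rack = TRAYS_PER_RACK * GPUS_PER_TRAY
cpus_per_rack = TRAYS_PER_RACK * CPUS_PER_TRAY

print(f"Total revenue: ${total_low}B-${total_high}B")
print(f"Implied net margin: {implied_margin[0]:.1%} to {implied_margin[1]:.1%}")
print(f"Implied shares: {implied_shares_b[0]:.2f}B to {implied_shares_b[1]:.2f}B")
print(f"Helios rack: {gpus_per_rack} GPUs, {cpus_per_rack} CPUs")
```

On these inputs the segment ranges do add up to the quoted total, and the net income range corresponds to roughly a 25% net margin on about 1.6 billion shares.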
Due to explosive agentic AI demand from enterprises as well as small and individual businesses, large customers, hyperscalers, and AI-native companies will demand dense EPYC rack setups. Yes, EPYC will need time to catch up on supply, so we will see even more explosive growth in EPYC in 2027.

Yes, full EPYC CPU-only racks are not only possible, they are already being deployed (and surging in demand) specifically to handle explosive agentic AI workloads. Agentic AI (autonomous agents that plan, reason in loops, call tools/APIs, manage memory/context, orchestrate multi-step tasks, and interact with external systems) shifts the workload heavily toward general-purpose compute. You don’t need GPUs in every node. Dense, pure-CPU racks using AMD EPYC processors are commercially available today and optimized for specific workloads, for example:

- Dell PowerEdge M7725 + IR7000 Integrated Rack → up to 74 dual-EPYC nodes in a single 50OU rack = ~27,000 cores per rack.
- Supermicro H14-series and other A+ servers → ultra-dense 1U/2U dual-EPYC nodes (up to 192+ cores per CPU in current gens, scaling higher with Venice) for AI/HPC inference and orchestration.
- HPE Cray Supercomputing GX250 Compute Blade (CPU-only blade) → 8 × next-gen AMD EPYC “Venice” CPUs per blade (each up to ~256 cores in high-core variants), up to 40 blades per compute rack.

2. Current Momentum and Scale

Data Center segment: record $16.6B in FY2025 (+32% YoY), with Q4 at $5.4B (+39%). Q3-Q4 2026 will be the beginning of J-curve momentum, and bears cannot stop it.

AMD hit a record 41.3% server CPU revenue share in Q4 2025 (up from ~35% earlier), driven by 5th-gen Turin EPYC (already >50% of EPYC revenue by year-end). Dr. Su said AMD is targeting 50%+ server CPU share, and I believe she will get it in 2026-2027, especially with the generational leadership lift from TSMC's 2nm-class node.

Inference already dominates AI compute (>50–70% of spend).
Agentic workloads require far more CPU cycles for orchestration, reasoning loops, RAG/database queries, and parallel tasks. Lisa Su has repeatedly noted CPU demand "far exceeded expectations," with "strong double-digit" server TAM growth in 2026 explicitly tied to agentic AI.

Intel is said to be raising prices 30% toward May, and is likely to raise them another 10-20% by year end. $AMD is likely to follow with a slightly smaller increase to gain more market share; for example, if Intel raises 50%, AMD will raise 40-45%.

Hyperscalers need more EPYC cores per GW of AI capacity (some models show a 4x increase). AI servers themselves are exploding: the AI server market is going from ~$125B (2024) toward $800B+ by 2030 (CAGR 35%+). EPYC anchors the full stack (head nodes, storage, networking).

AMD frames the opportunity as part of a $1T compute market by 2030 (up from prior estimates), with AI infrastructure driving server refreshes and custom/hybrid silicon. EPYC's x86 compatibility, flexibility, and TCO advantages win in cost-sensitive inference and enterprise agentic deployments. However, the $1T-by-2030 TAM may have become outdated in the last few months; we are now looking at a $1 trillion TAM by 2027. The reason is simple: businesses have found massive productivity gains through agentic AI and are willing to pay handsomely for compute.

EPYC is being ramped up to match demand, per Dr. Su at the most recent Morgan Stanley conference, and we will see it more clearly in 2027. EPYC revenue is likely in the $20-$50B range, depending on how fast TSMC can ramp up supply. TSMC is expanding even faster in Arizona and potentially up to 10 fabs in Taiwan for 2nm production alone. I will link the threads where I discuss the supply detail.

Capacity expansions take 12–24 months. Agentic adoption is still in early innings (enterprise pilots accelerating). This creates durable pricing power, unlike the cyclical CPU markets of the past, similar to how NVIDIA sustained GPU pricing in training booms.

3. Why >$1 Trillion Market Cap is fully justified as standalone

At this kind of growth, $AMD EPYC could bring in $20B in operating income in 2027, so if you slap on a 50x forward earnings multiple, with the explosive agentic AI demand cycle just getting started, it would already be a $1 trillion market cap. 50x forward earnings is very reasonable for an explosive CPU cycle that is just getting started; some would argue for 80-100x in a more positive sentiment market.

Now on a forward P/S basis: AMD cannot service all EPYC demand, or 15-20m units in 2026-2027 (Venice), but AMD should be able to meet ~6-8m units within a 12-18 month cycle, or roughly a $70B-$100B revenue business by itself. If you factor in the highest end of Venice, or $20k at premium configurations, we would be talking about a $140B run rate. A simple 15x P/S at the lowest end would already be a $1.05 trillion market cap.

Now, everyone should know TSMC is fully booked through 2028 on 2nm, and AMD is the 2nd largest customer. For AMD to service even 2/3 of full agentic AI demand, TSMC would have to accelerate 2-3 more 2nm fabs for AMD, which is the current plan from what I see from TSMC. But supply chains and construction are complex, so we will have to monitor this.

Here is what we do know so far. Dr. Su said of demand:

“Frankly, you know, we see just a tremendous demand for traditional compute as well. If you look at the CPU cycle, we’ve always believed that the computing stack is heterogeneous, and you’re gonna need CPUs and GPUs and FPGAs and all of these components. … And that’s really coming to fruition here in 2026.”

“We’re seeing a significant CPU demand, frankly, as a result of the inference demand picking up. … You’re now seeing the growth of inference exceed training, which is what we all expected but that’s a great thing because that means people are actually using … all of these models to now do real work. We’re seeing the growth of agentic AI …”

“Actually, as much as I’m very, very excited about the GPU portion of the business, I mean, the CPU portion of the business has actually far exceeded my expectations in terms of demand. I was pretty bullish to begin with, right?”

“If you talk to our top customers, they’re like: ‘Wow… Lisa, the demand for CPU compute sitting along AI was perhaps something that was under-forecasted.’ We are in the process of catching up.”

She added context that supply is now tightening due to the rapid acceleration in orders over recent quarters, but AMD is expanding capabilities through 2026–2027 and working closely with customers (including long-term commitments) to address it.

The demand surge is driven by agentic AI applications, where each GPU-generated token or action triggers multiple CPU-intensive orchestration, reasoning, verification, and enterprise integration tasks, effectively raising the CPU:GPU ratio in modern AI clusters.

Conclusion:

What began as a high-performance x86 CPU business has quietly become the indispensable orchestration backbone of the agentic AI era. Inference has already overtaken training as the dominant compute workload, and agentic systems, those autonomous, multi-step reasoning loops that generate 5–50× more tokens and CPU cycles per interaction, have fundamentally rewritten the hardware equation. Every GPU token now triggers layers of orchestration, verification, data movement, and enterprise integration that only dense, high-core x86 platforms like EPYC can handle efficiently.

Dr. Lisa Su captured this perfectly at the March 2026 Morgan Stanley conference: the CPU side of the business has “far exceeded my expectations,” with hyperscalers openly admitting that “CPU compute sitting along AI was under-forecasted.” Demand is so structural that AMD’s server CPU book is effectively sold out into 2026, enabling sustained pricing power and ASP uplift that traditional cyclical CPU markets never delivered.
The numbers tell the story. In FY2025, Data Center revenue hit a record $16.6 billion, with EPYC contributing roughly half and closing Q4 at a record 41.3% server CPU revenue share (28.8% unit share). Fifth-gen Turin already dominated the mix, and sixth-gen Venice, launching H2 2026 on 2nm with up to 256 cores, dramatically higher bandwidth, and rack-scale Helios integration, is modeled to command flagship ASPs of $15,000–$20,000. AMD’s long-term target is >50% server CPU revenue share inside a re-rated, AI-driven server TAM that could exceed $70–100 billion by 2027, at 60%+ gross margins and 35%+ operating margins.

That level of durable, high-margin earnings, growing 50%+ annually in a supply-constrained environment, easily supports a 50× forward earnings multiple, precisely the valuation premium the market assigns to infrastructure leaders that own the “picks and shovels” of the next computing wave. AMD’s chiplet architecture, Infinity Fabric interconnect, and hybrid custom-silicon flexibility, exactly the platform you highlighted in your original analysis, give EPYC a lasting moat that pure accelerators cannot match.

In an era of $1 trillion global compute demand by 2030, hyperscalers are not choosing between CPUs and GPUs; they are buying both, and EPYC is the flexible, x86-native foundation that makes the entire stack economically viable. The rest of AMD (Instinct GPUs, Client, Gaming, Embedded) becomes pure upside.

When the market fully appreciates that the CPU business is no longer a supporting player but a multi-decade, pricing-power juggernaut powering the agentic revolution, EPYC alone will re-rate to a trillion-dollar valuation and AMD’s total enterprise value will follow. The demand is not coming; it is already here, and it is structural, not cyclical.

This is why Sexist Analysts will be wrong: $AMD joining the top 10 largest companies in the world is inevitable.

Not Financial Advice
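For readers who want to replay the valuation arithmetic from section 3, here is a minimal sketch. All inputs are the thread's own figures. The function names are made up for illustration, and the 7m-unit midpoint used for the $140B run rate is an assumption, since the thread does not say which unit count it used. Like the thread, the sketch applies the earnings multiple directly to operating income and ignores taxes and interest.

```python
def cap_from_earnings(operating_income_b: float, multiple: float) -> float:
    """Market cap in $B from operating income ($B) times a forward multiple."""
    return operating_income_b * multiple


def revenue_from_units(units_m: float, asp_k: float) -> float:
    """Revenue in $B: millions of units times ASP in $ thousands."""
    return units_m * asp_k


def cap_from_sales(revenue_b: float, ps_multiple: float) -> float:
    """Market cap in $B from revenue ($B) times a price/sales multiple."""
    return revenue_b * ps_multiple


# Earnings route: $20B operating income at 50x.
cap_earnings = cap_from_earnings(20, 50)

# Sales route: low end of the $70B-$100B revenue range at 15x P/S.
cap_sales_low = cap_from_sales(70, 15)

# ASP implied by pairing 6m units with $70B and 8m units with $100B.
implied_asp_low_k = 70 / 6
implied_asp_high_k = 100 / 8

# Premium case: $20k ASP at an ASSUMED midpoint of 7m units.
premium_run_rate = revenue_from_units(7, 20)

print(f"Earnings route: ${cap_earnings / 1000:.2f}T")
print(f"Sales route (low end): ${cap_sales_low / 1000:.2f}T")
print(f"Implied ASP: ${implied_asp_low_k:.1f}k to ${implied_asp_high_k:.1f}k")
print(f"Premium run rate: ${premium_run_rate}B")
```

Both routes land at or just above $1 trillion on the thread's inputs, so the conclusion rests entirely on the 50x and 15x multiples and on the unit and ASP assumptions being met.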