Turing

9.5K posts

@turingcom

Accelerating superintelligence to drive economic growth.

Palo Alto, CA · Joined September 2018

2.1K Following · 15.8K Followers

Turing retweeted

My first interview with @jonsidd was going through all my saved games of SimCity 2000 together. He was impressed… until he got to my RollerCoaster Tycoon parks and said they could have been more creative.

Seriously though, if you're looking for your next great adventure using AI to shape the real world, check out @turingcom.

exQUIZitely 🕹️@exQUIZitely

Job interview: "Any management experience?" Me:

Excited to announce that @hbarra, @alcor and I are joining Meta Superintelligence Labs with the entire @Dreamer team today.

The last few months have been extraordinary: we built Dreamer, put the beta in the world just a month ago, and saw magic come to life for real people. Since then, thousands of people have used Dreamer to build personal, intelligent software with our Sidekick in the world’s newest and most popular programming language: English!

They're building and sharing agents to manage email, calendar, and to-do’s, create learning tools for their kids, learn new languages, plan trips with friends, become better cooks, help them with work, achieve their health goals, or simply to creatively express themselves—all sorts of surprising and uniquely personal needs. These are agents as unique as the people building them, because they're built exactly the way each person wants them to be. We’ve captured some of our favorites at dreamer.com/community-lett….

What matters most here isn’t the early momentum; it’s what Dreamer has enabled people to do. People are building things they’ve wanted for years. They’re solving real, important problems no traditional software company would ever prioritize, because they’re too niche, too bespoke, too personal. What company would ever build for an “n of 1”?

Our bet from the beginning has been that software should be personal, malleable, and shaped by the person using it. The constraint was never people’s imagination. It was the fact that building software is out of reach for most people. This early chapter gives us conviction that the idea resonates, the need is real, and the moment is now.

@alexandr_wang was helpful to us from the very beginning, and when we showed Dreamer to Mark Zuckerberg and @natfriedman earlier this year, it was clear right away that we share the same vision of the future: one where billions of people have the power to create software that makes their lives better. We’re thrilled to accelerate this mission by joining Meta Superintelligence Labs and licensing our technology to Meta. Read more at meta.com/superintellige….

Deeply grateful to our investors @jillchase124 and @ninaachadjian for supporting our vision for a more personal, creative, and intelligent future for software. Thank you for the trust, the thought partnership, and for being in our corner at every step.

To everyone in our community who built with us: thank you. You've taught us what's possible, and you're the proof this works. We're so grateful, and we're just getting started!

How would this work in your enterprise? Talk to us.

turing.com/intelligence/c…

Turing operates at the intersection of frontier research and enterprise deployment.

Our experience with leading AI labs informs what’s realistic, reliable, and ready for production.

That perspective helps enterprises move faster, avoid costly missteps, and deploy AI systems that scale within real regulatory and operational constraints.

turing.com/blog/frontier-…

Human-guided AI is how AI works in regulated environments.

In compliance, fraud, and audit workflows, speed is not enough. Systems must be explainable, auditable, and defensible.

Autonomous-first AI fails where accountability matters:

-Hallucinations

-Silent drift

-Unclear decisions

-Weak audit trails

“The model said so” does not hold up.

The shift is architectural:

-> Confidence-based routing

-> Deterministic validation

-> Human gating before execution

-> End-to-end traceability

This is partial autonomy:

-Routine work scales

-Edge cases get expert review

-Every decision is reconstructable

Governance is not a layer. It is the system.
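
One way to picture the four architectural pieces above is a small routing function. This is a minimal sketch, not Turing's implementation: the `Decision` record, the 0.9 threshold, and the `allowed_action` rule are all assumptions made up for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical threshold; a real system would calibrate this per workflow.
AUTO_APPROVE_THRESHOLD = 0.9


@dataclass
class Decision:
    case_id: str
    action: str
    confidence: float
    # Every routing step is appended here so the decision can be reconstructed.
    trace: List[str] = field(default_factory=list)


def route(decision: Decision, validate: Callable[[Decision], bool]) -> str:
    """Return 'execute' or 'human_review', logging each step on the decision."""
    # Deterministic validation: a rule-based check that does not depend on the model.
    if not validate(decision):
        decision.trace.append("validation: failed")
        decision.trace.append("routed: human_review")
        return "human_review"
    decision.trace.append("validation: passed")

    # Confidence-based routing: only high-confidence cases skip the human gate.
    if decision.confidence >= AUTO_APPROVE_THRESHOLD:
        decision.trace.append("routed: execute")
        return "execute"
    decision.trace.append("routed: human_review")
    return "human_review"


def allowed_action(decision: Decision) -> bool:
    # Hypothetical rule: only a fixed set of actions may ever run automatically.
    return decision.action in {"approve_claim", "flag_transaction"}
```

In this sketch a low-confidence or rule-violating case never reaches execution without review, and the `trace` list is what makes each outcome reconstructable afterwards.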

Turing Research is launching a groundbreaking initiative to capture and utilize the complete, unfiltered operational history of companies, creating the definitive dataset for training the next generation of frontier models.

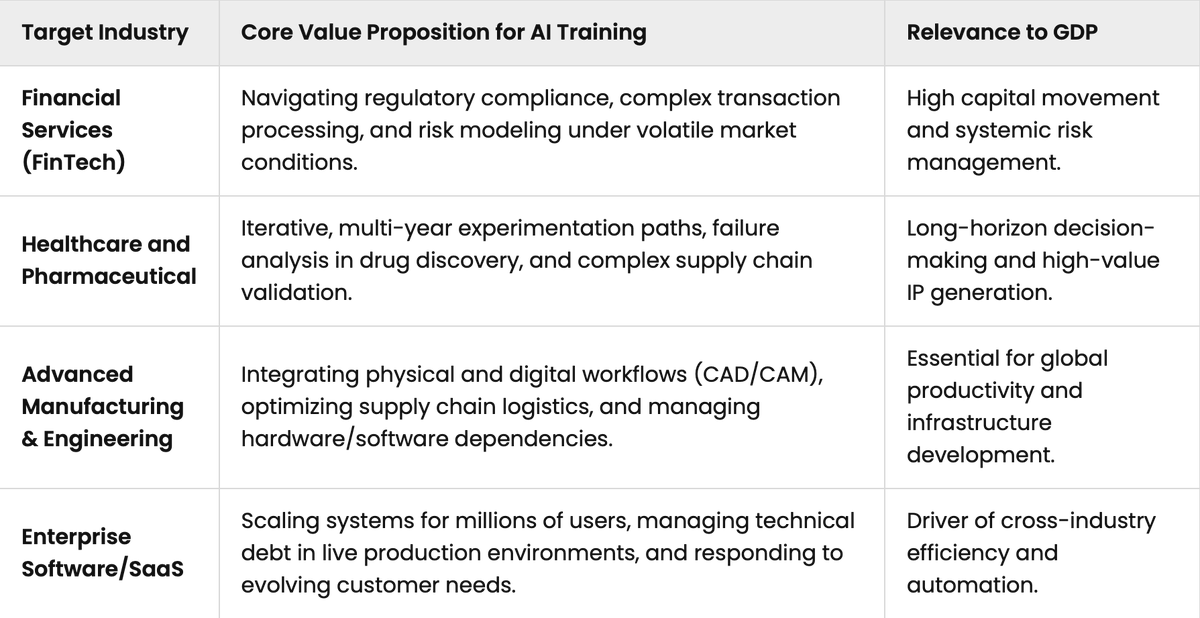

Project Lazarus is an initiative to acquire and permanently preserve the full, unfiltered operational history of defunct or inactive companies at scale. We focus on private codebases, version histories, internal documentation, post-mortems, experimentation logs, infrastructure tooling, and everyday work artifacts that collectively reflect how real organizations actually operate.

These materials capture the reality of knowledge work: incomplete specifications, tradeoffs made under time pressure, accumulated technical debt, evolving systems, and decisions made under uncertainty. Unlike polished outputs, operational traces preserve the causal structure of work across weeks, months, and years.

We prioritize industries with high complexity and outsized GDP impact, including financial services, healthcare and pharma, advanced manufacturing, and enterprise software. These domains contain long-horizon decision making, regulatory constraints, supply chain dependencies, and high-value intellectual property that are critical for training economically useful AI systems.

The data is structured for advanced methodologies such as reinforcement learning, imitation learning, and long-horizon task evaluation, enabling models to learn multi-step reasoning, organizational decision processes, and system diagnosis over extended timelines.

For founders, Project Lazarus is also preservation. A company’s history is a compressed record of human judgment, experimentation, and problem-solving. Instead of disappearing, that work compounds by becoming part of the foundation shaping the next generation of autonomous AI systems.

Request a sample task featuring a curated issue prompt, validated patch, pass/fail test states, and metadata on difficulty, solvability, and repository source: turing.com/case-study/cur…

CASE STUDY: Better code models need better benchmarks.

We partnered with a client to build a dataset that shows where models actually break, not just where they succeed.

200+ SWE-bench style Java tasks

20+ real GitHub repositories

Each task includes a validated patch, reproducible tests, and a trainer-authored issue prompt

The goal was simple: reflect how bugs are found and fixed in the real world.

The problem:

Most benchmarks rely on clean, solvable examples. Real pull requests are not like that. They are messy, uneven, and often hard to resolve.

Our client needed to understand:

-Where their model succeeds

-Where it fails

-How well it generalizes across real codebases

The approach:

We curated tasks from high-quality Java repositories with strict criteria:

-Reproducible test failures before the patch

-Clean passes after the patch

-Meaningful logic changes only

-Stable compilation throughout

Each repo was containerized in Docker to ensure consistent, isolated test execution.
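
The first two criteria above (tests fail before the patch, pass after it) can be sketched as a simple acceptance check. This is an illustration under assumptions: the `TaskRecord` fields are hypothetical, and the test results are assumed to have been collected already from the containerized runs.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class TaskRecord:
    repo: str
    issue_prompt: str
    tests_before: Dict[str, bool]  # test name -> passed? (before the patch)
    tests_after: Dict[str, bool]   # test name -> passed? (after the patch)


def is_valid_task(task: TaskRecord) -> bool:
    """Accept a task only if the patch fixes at least one failing test
    and every test passes once the patch is applied."""
    # Both runs must cover the same tests for the comparison to be fair.
    if task.tests_before.keys() != task.tests_after.keys():
        return False
    failed_before = [name for name, ok in task.tests_before.items() if not ok]
    all_pass_after = all(task.tests_after.values())
    return bool(failed_before) and all_pass_after
```

A candidate whose tests already pass before the patch is rejected here, since it would not show that the patch changes behavior.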

When issues were missing, Turing trainers wrote them. Every prompt was:

-Problem-focused

-Neutral and solution-agnostic

-Aligned with test behavior for clean evaluation

We also balanced difficulty:

-About 30 percent solvable

-About 70 percent designed to expose failure modes

The outcome:

A benchmark that does more than measure accuracy. It reveals capability.

Teams can now:

-Test model performance on real bugs

-Identify breakpoints across complexity and context

-Analyze failure patterns with precision

This is how you move from optimistic benchmarks to real insight.

Turing retweeted

Turing is featured in @ServiceNowRSRCH's Enterprise Ops Gym paper.

We built the task and evaluation backbone:

-1,000 prompts

-7 single-domain plus 1 hybrid workflow

-7 to 30 step planning horizons

-Expert reference executions with logged tool calls

-Deterministic validation for success and side effect control

Enabling structured comparison of enterprise agent performance across domains and complexity tiers.
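
"Deterministic validation for success and side effect control" can be pictured as a state comparison. The sketch below is an assumption about the general idea, not the paper's actual validator: environment state is modeled as a flat dictionary, and the key names are hypothetical.

```python
from typing import Any, Dict, Set, Tuple

_MISSING = object()  # sentinel for keys absent from a state snapshot


def changed_keys(before: Dict[str, Any], after: Dict[str, Any]) -> Set[str]:
    """Keys whose value was added, removed, or modified between snapshots."""
    keys = set(before) | set(after)
    return {k for k in keys if before.get(k, _MISSING) != after.get(k, _MISSING)}


def validate_run(
    before: Dict[str, Any], after: Dict[str, Any], expected: Dict[str, Any]
) -> Tuple[bool, Set[str]]:
    """Return (success, side_effects).

    success: every expected key holds its expected value in the final state.
    side_effects: keys that changed but were not part of the expected outcome.
    """
    success = all(after.get(k, _MISSING) == v for k, v in expected.items())
    side_effects = changed_keys(before, after) - set(expected)
    return success, side_effects
```

Separating the two results lets a run count as successful on its goal while still being flagged for touching state it should have left alone.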

Dataset -> Paper -> Website -> Code. Below.

Turing retweeted

Paper: arxiv.org/abs/2603.13594

Website: enterpriseops-gym.github.io

Code: github.com/ServiceNow/Ent…

Turing retweeted

Case Study: Most AI agent evals are flawed.

They measure outputs. Real agents operate across 80–200+ actions, tools, and OS environments where failure is gradual, not binary.

At Turing, we built a new evaluation framework:

-900+ deterministic tasks

-450+ parent–child pairs

-1800+ evaluable scenarios via prompt–execution swapping

-6 domains, balanced across Windows, macOS, Linux

-40% open-source, 60% closed-source tools

Each task includes full telemetry:

-screen recordings

-event logs (clicks, keystrokes, scrolls)

-timestamped screenshots

-structured prompts, subtasks, and metadata

The key idea: structured failure.

Instead of injecting errors, we create them by swapping execution and intent:

-Parent prompt + Child execution

-Child prompt + Parent execution

This produces controlled, classifiable failures:

-Critical mistake

-Bad side effect

-Instruction misunderstanding

With calibrated complexity (80–225 actions) and strict QA, this becomes a fully reproducible benchmark.
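
The swapping idea can be sketched as enumerating every prompt and execution combination within a parent–child pair. This is one possible reading of how the counts relate, since the post does not spell out the enumeration; the `Task` and `Scenario` types are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Task:
    task_id: str
    prompt: str
    execution: str  # stand-in for a recorded trajectory


@dataclass(frozen=True)
class Scenario:
    prompt_from: str
    execution_from: str
    matched: bool  # True when prompt and execution come from the same task


def build_scenarios(pairs: List[Tuple[Task, Task]]) -> List[Scenario]:
    """For each (parent, child) pair, emit all four prompt/execution combinations."""
    scenarios = []
    for parent, child in pairs:
        for prompt_task in (parent, child):
            for exec_task in (parent, child):
                scenarios.append(
                    Scenario(
                        prompt_from=prompt_task.task_id,
                        execution_from=exec_task.task_id,
                        matched=prompt_task.task_id == exec_task.task_id,
                    )
                )
    return scenarios
```

Under this assumed enumeration, 450 pairs contain 900 tasks and yield 1,800 scenarios, half of them mismatched by construction, which lines up with the figures quoted above.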

Result:

We can measure not just if agents succeed, but:

-where they break

-how errors propagate

-how robust they are across real environments

Agents don’t fail at the answer. They fail in the process.

Read more case studies below.

Turing retweeted

@jonsidd @turingcom @steph_palazzolo @theinformation Bold move, ROI will hinge on data provenance and licensing governance more than the narrative

Turing retweeted

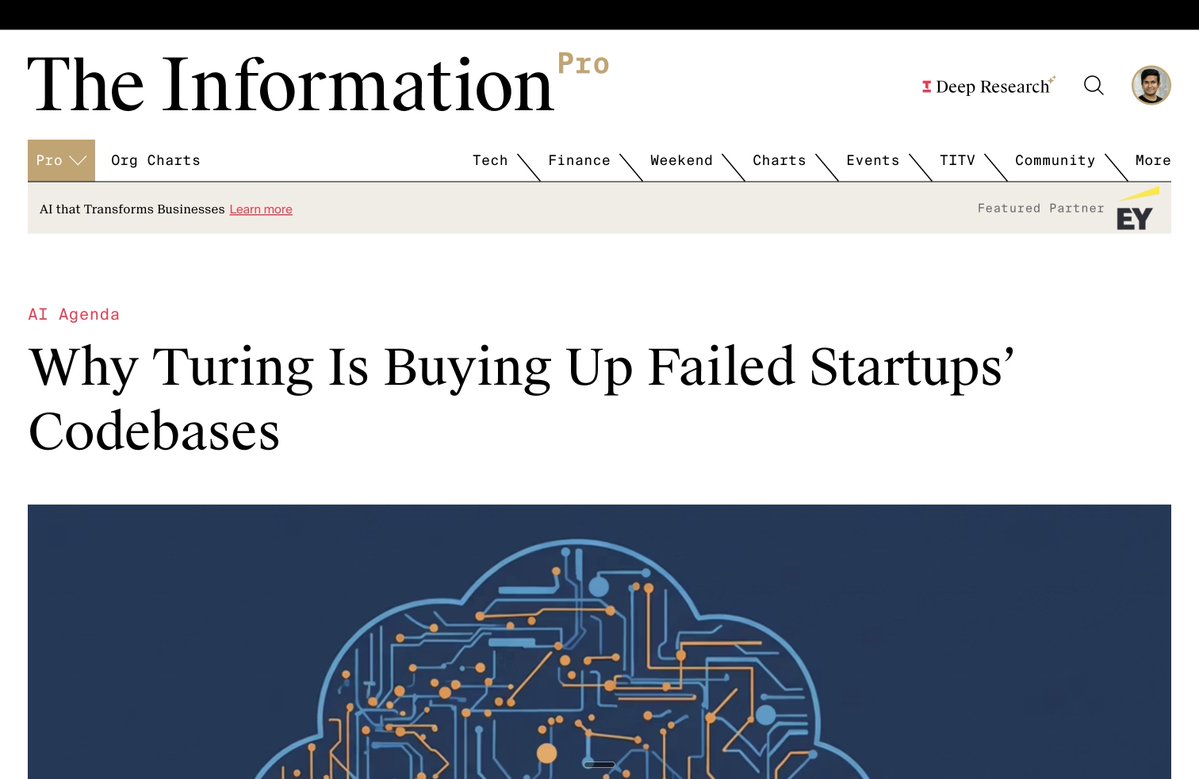

Important Project Lazarus update:

Back in December, @Turingcom pioneered acquiring real-world startup/enterprise codebases and operational data to train frontier AI models. @steph_palazzolo at @theinformation broke the story on day one. Incredible reporting that helped define a new category.

The companies might be dead, but the human intelligence that built them can live on, powering the next generation of frontier models. Lazarus from Turing resurrects the spirits of dead companies.

Now we're scaling massively. Buying all data assets from active and inactive companies.

Founder or investor or operator with data to monetize? Hit me up.

DM or email jonsid@turing.com

Stephanie Palazzolo@steph_palazzolo

Can't go public or sell yourself? Try selling your codebase to an AI lab as training data! In this morning's AI Agenda, we get into this growing trend, as data curation firms like Turing and AfterQuery pick up failed startups' codebases. theinformation.com/articles/turin…

@SnowD3n_india It looks like you may still have an unanswered question.

After filling in all the details for a job application on turing.com, the submit button gets disabled. @turingcom?

@jonsidd @turingcom @steph_palazzolo @theinformation Love this execution. Finally, a use for all that abandoned SaaS spaghetti. Data liquidity is the VC exit strategy nobody planned for.

Turing retweeted

Turing is buying your code and all your data assets. Get in touch.

@turingcom

Turing@turingcom

That COBOL system you retired 3 years ago? It's sitting in a repo — unmaintained, unused, but valuable. Have a legacy codebase? Schedule a call below.
