Voltropy

why is claude compaction so bad. codex compaction is basically instant. why can’t they just copy whatever oai did

There's a lot of cool stuff being built around openclaw. If the stock memory feature isn't great for you, check out the qmd memory plugin! If you are annoyed that your crustacean is forgetful after compaction, give github.com/martian-engine… a try!

🚨 OpenClaw users! Install Lossless Claw immediately! Every message stored. Full history searchable. Context that actually survives. This is the single biggest upgrade you can make to your OpenClaw setup. Not close. I’m not affiliated or paid to post this. It’s that good!

OpenClaw AI Browser Agents + Lossless Claw + Kimi K2.5 + Nvidia + Ollama x.com/i/broadcasts/1…

OpenClaw's Creator Says Use This Plugin x.com/i/broadcasts/1…

You can now replace your OpenClaw agent's aggressive compaction process with a DAG to supercharge its memory!

Remember DAG shitcoins in crypto? Directed acyclic graph ... an alternate architecture to Bitcoin's blockchain ... they sacrifice decentralization and security for higher throughput. Finally, a DAG has a use for a Bitcoiner :)

IOTA had the Tangle with coordinators; RaiBlocks/Nano was a block-lattice DAG; Hashgraph used gossip-about-gossip consensus with a permissioned governance council; ByteBall/Obyte used a DAG with witness nodes. Strip the shitcoins and governance nonsense away and you have something that's actually useful for AI agent memory enhancement.

I hacked a whole skill together (SoulKeep) for my agent to stay in a session as long as possible, because usually you want your agent to have as much context as possible for as long as possible. Josh & team put the DAG to work brilliantly to replace the default compaction process with rolling summarization nodes, a novel way of holding as much valuable context as possible in the session for as long as possible. It also has some tools to trawl the session context; they call it "walking the DAG", using a bounded subagent to keep token costs down and performance up.

With the latest OpenClaw release, they allow for compaction plugins like Lossless Claw. This isn't meant to be a replacement for QMD, your Obsidian vault, or any other extended long-term memory / system-of-record enhancements you're using. It's meant to be used in parallel with those strategies to help your agent have better context for longer.

I'm seriously considering switching to this! losslesscontext.ai

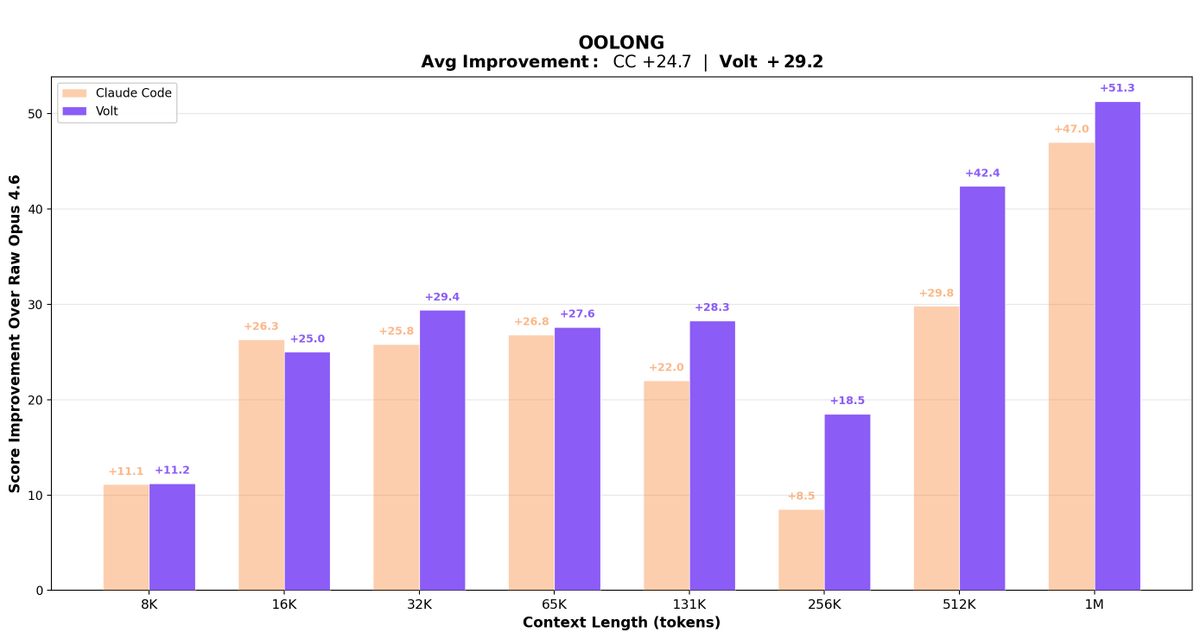

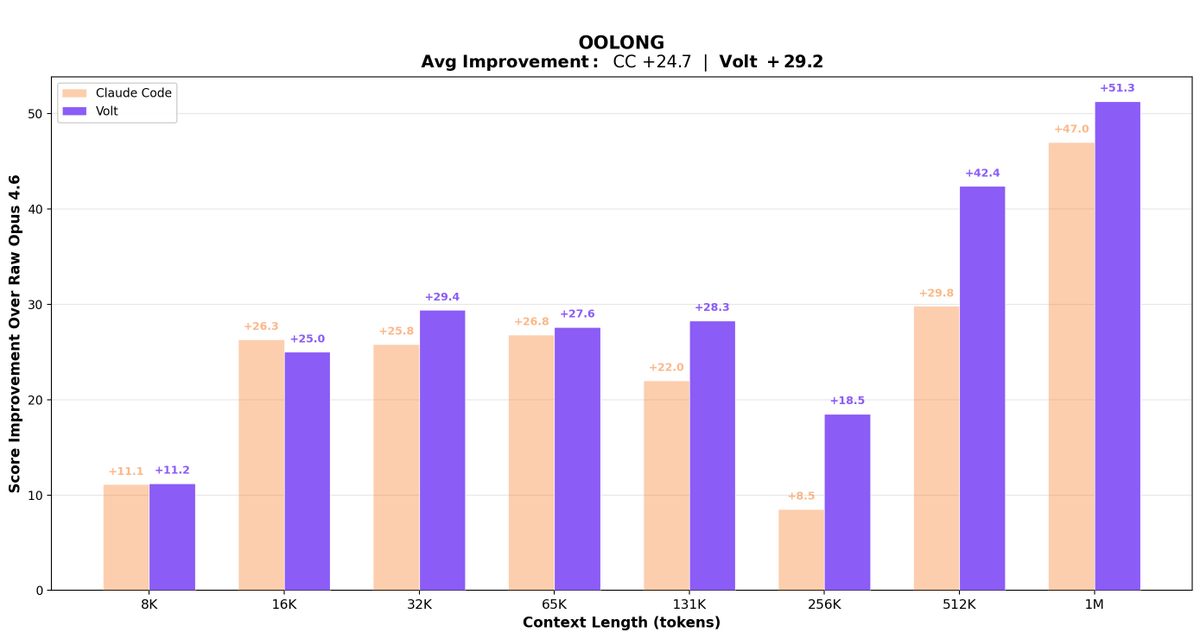
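For readers who want a feel for what "walking the DAG" with a bounded budget could look like, here is a minimal sketch. It is not the plugin's code: `SummaryNode`, `bounded_walk`, and `max_visits` are hypothetical names, the structure is simplified to a tree, and a plain visit counter stands in for the bounded subagent the post mentions.

```python
from dataclasses import dataclass, field


@dataclass
class SummaryNode:
    # Hypothetical node type: raw messages are leaves (no children),
    # summaries point at the nodes they were built from.
    text: str
    children: list["SummaryNode"] = field(default_factory=list)


def bounded_walk(root, query, max_visits):
    """Depth-first search for raw messages containing `query`.

    Stops after `max_visits` nodes, so the cost of a lookup stays capped
    no matter how large the history grows.
    """
    hits, stack, visits = [], [root], 0
    while stack and visits < max_visits:
        node = stack.pop()
        visits += 1
        if not node.children and query in node.text:
            hits.append(node.text)
        # Push children reversed so the oldest child is visited first.
        stack.extend(reversed(node.children))
    return hits, visits


a = SummaryNode("deploy failed on friday")
b = SummaryNode("lunch plans")
c = SummaryNode("deploy fixed monday")
older = SummaryNode("summary of early turns", [a, b])
root = SummaryNode("summary of session", [older, c])

print(bounded_walk(root, "deploy", max_visits=10))
print(bounded_walk(root, "deploy", max_visits=3))
```

The second call shows the tradeoff: a tighter budget is cheaper but can miss matches deeper in the history.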

BIG: @openclaw 2026.3.7 just dropped, introducing context engine plugins and lossless-claw.

"OpenClaw's context management (compaction, assembly, etc.) is hardcoded in core, making it impossible for plugins to provide alternative context strategies." (PR author @jlehman_)

why is this a big deal?

this means plugins can now replace the entire context management strategy, opening up opportunities for the community to improve openclaw's core functionality

the first application: lossless-claw

based on the Lossless Context Management paper (Ehrlich & Blackman), instead of throwing away old turns, they're compressed into summaries linked back to the originals. the model can expand any summary on demand. nothing is ever actually lost.

on the OOLONG benchmark, lossless-claw scored 74.8 vs Claude Code's 70.3 using the same model, with the gap widening the longer the context gets. benchmarked higher than Claude Code at every context length tested.

the PR author built it, ran it for a week on openclaw, and says "to say it works well would be an understatement."

other honorable mentions in this release:

· per-topic agent routing: each telegram topic runs a different agent. one forum group, multiple agents.
· ios app store prep: mobile is coming.
· docker slim build: bookworm-slim variant for smaller, faster deploys.
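The "summaries linked back to the originals" idea can be illustrated with a small sketch. This is an assumption-laden toy, not lossless-claw's implementation: `Node`, `summarize`, `compact`, and `expand` are hypothetical names, and `summarize` is a placeholder where a real system would call a model.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    # Hypothetical type: a raw turn has no children; a summary keeps
    # references to everything it replaced.
    text: str
    children: list["Node"] = field(default_factory=list)


def summarize(nodes):
    # Placeholder only: a real system would ask a model for a summary.
    return "summary(" + ", ".join(n.text for n in nodes) + ")"


def compact(context, max_items, chunk=2):
    """Fold the oldest `chunk` items into one summary node until the
    visible context has at most `max_items` entries."""
    if max_items < 1 or chunk < 2:
        raise ValueError("need max_items >= 1 and chunk >= 2")
    context = list(context)
    while len(context) > max_items:
        oldest, rest = context[:chunk], context[chunk:]
        context = [Node(summarize(oldest), children=oldest)] + rest
    return context


def expand(node):
    """Recover the original turns under a node, oldest first."""
    if not node.children:
        return [node.text]
    turns = []
    for child in node.children:
        turns.extend(expand(child))
    return turns


turns = [Node(f"turn {i}") for i in range(6)]
ctx = compact(turns, max_items=3)
print([n.text for n in ctx])
print(expand(ctx[0]))
```

The visible context shrinks to three entries, but expanding the summary node still returns every turn it absorbed, which is the sense in which nothing is lost.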

OpenClaw 2026.3.7 🦞 ⚡ GPT-5.4 + Gemini 3.1 Flash-Lite 🤖 ACP bindings survive restarts 🐳 Slim Docker multi-stage builds 🔐 SecretRef for gateway auth 🔌 Pluggable context engines 📸 HEIF image support 💬 Zalo channel fixes We don't do small releases. github.com/openclaw/openc…

ANNOUNCEMENT: We just mogged malware. Introducing Mog, a programming language for self-modifying AI agents. Mog solves the security problems of claws + the usability problems of sandboxing. 1/N 🧵