VrianCao


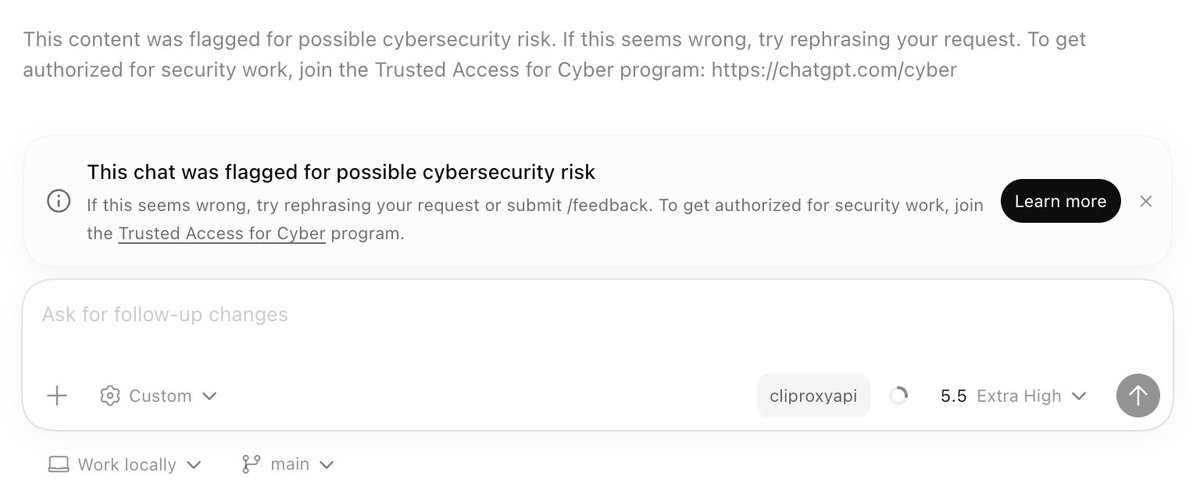

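I recently came across an open-source project, Context Mode, that tackles the problem of AI coding tools overflowing their context window. The core idea is to keep raw data in a sandbox and send only the processed results into the context window. According to the project, it can compress 315 KB of raw output down to 5.4 KB, a size reduction of about 98%. It also records session state in a local database, so a session can resume seamlessly after the conversation is compacted. GitHub: github.com/mksglu/context… It supports 14 platforms, including mainstream AI coding tools such as Claude Code, Cursor, Gemini CLI, and VS Code Copilot. It ships with runtimes for 11 languages, plus knowledge-base indexing and smart search, so content can be retrieved on demand instead of being dumped wholesale into the context. All data is processed locally, with no network access and no uploads. If your AI coding sessions often start hallucinating partway through, this tool is worth a try.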
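To make the "raw data stays in the sandbox, only the result enters the context" idea concrete, here is a minimal Python sketch. It is not Context Mode's actual code or API: the function names (`store_raw`, `summarize`, `savings`), the table layout, and the fake log are all assumptions made up for illustration. It only shows the general pattern of storing full output locally and passing a short digest onward, and checks the arithmetic behind the quoted 98% figure.

```python
import sqlite3


def store_raw(db: sqlite3.Connection, key: str, raw: str) -> None:
    """Keep the full output in a local database (hypothetical schema)."""
    db.execute(
        "CREATE TABLE IF NOT EXISTS raw_output (key TEXT PRIMARY KEY, body TEXT)"
    )
    db.execute("INSERT OR REPLACE INTO raw_output VALUES (?, ?)", (key, raw))
    db.commit()


def summarize(raw: str, keyword: str, max_lines: int = 3) -> str:
    """Build the small digest that would be sent to the model instead of `raw`."""
    lines = raw.splitlines()
    hits = [ln for ln in lines if keyword in ln]
    header = f"{len(lines)} lines, {len(hits)} matching '{keyword}'"
    return "\n".join([header, *hits[:max_lines]])


def savings(raw_size: float, summary_size: float) -> float:
    """Fraction of the original size that never reaches the context window."""
    return 1.0 - summary_size / raw_size


# Illustrative data only: a fake 5,000-line log with an error every 1,000 lines.
db = sqlite3.connect(":memory:")
raw = "\n".join(
    f"line {i}: {'ERROR disk full' if i % 1000 == 0 else 'ok'}" for i in range(5000)
)
store_raw(db, "build-log", raw)
summary = summarize(raw, "ERROR")

print(summary)
print(f"this example: {savings(len(raw), len(summary)):.1%} smaller")
print(f"quoted figures (315 KB -> 5.4 KB): {savings(315, 5.4):.1%} smaller")
```

The full log remains queryable in the local database, so a later step can look up specific lines on demand without pasting the whole output into the conversation.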

Introducing Flue — The First Agent Harness Framework Flue is a TypeScript framework for building the next generation of agents, designed around a built-in agent harness. Flue is like Claude Code, but 100% headless and programmable. There's no baked-in assumption, like requiring a human operator to function. No TUI. No GUI. Just TypeScript. But using Flue feels like using Claude Code. The agents you build act autonomously to solve problems and complete tasks. They require very little code to run. Most of the "logic" lives in Markdown: skills and context and AGENTS.md. Flue is like Astro or Next.js for agents (not surprising, given my background 🙃). It's not another AI SDK. It's a proper runtime-agnostic framework. Write once, build, and deploy your agents anywhere (Node.js, Cloudflare, GitHub Actions, GitLab CI/CD, etc.). We originally built Flue to power AI workflows inside the Astro GitHub repo. But then @_bgiori got his hands on it, and we realized that every agent needs a framework like Flue, not just us. Check it out! It's early, but I'm curious to hear what people think. Are agents ready for their library -> framework moment?

My GPT-5.4 Pro just froze mid-thought. Even after I steered it to 'Continue,' it only thought for a second before getting stuck again. Considering yesterday’s massive OpenAI outage, I can’t think of any other reason besides some major infrastructure adjustments. Big model smell.

Meet Kimi K2.6: Advancing Open-Source Coding
🔹 Open-source SOTA on HLE w/ tools (54.0), SWE-Bench Pro (58.6), SWE-bench Multilingual (76.7), BrowseComp (83.2), Toolathlon (50.0), Charxiv w/ python (86.7), Math Vision w/ python (93.2)
What's new:
🔹 Long-horizon coding - 4,000+ tool calls, over 12 hours of continuous execution, with generalization across languages (Rust, Go, Python) and tasks (frontend, devops, perf optimization).
🔹 Motion-rich frontend - Videos in hero sections, WebGL shaders, GSAP + Framer Motion, Three.js 3D.
🔹 Agent Swarms, elevated - 300 parallel sub-agents × 4,000 steps per run (up from K2.5's 100 / 1,500). One prompt, 100+ files.
🔹 Proactive Agents - K2.6 model powers OpenClaw, Hermes Agent, etc for 24/7 autonomous ops.
🔹 Claw Groups (research preview) - bring your own agents, command your friends', bots & humans in the loop.
K2.6 is now live on kimi.com in chat mode and agent mode. For production-grade coding, pair K2.6 with Kimi Code: kimi.com/code
🔗 API: platform.moonshot.ai
🔗 Tech blog: kimi.com/blog/kimi-k2-6
🔗 Weights & code: huggingface.co/moonshotai/Kim…