Venkat

306 posts

@Whit3f4ng_

Design @quillaudits_ai | Wabi Sabi

Introducing EVMbench—a new benchmark that measures how well AI agents can detect, exploit, and patch high-severity smart contract vulnerabilities. openai.com/index/introduc…

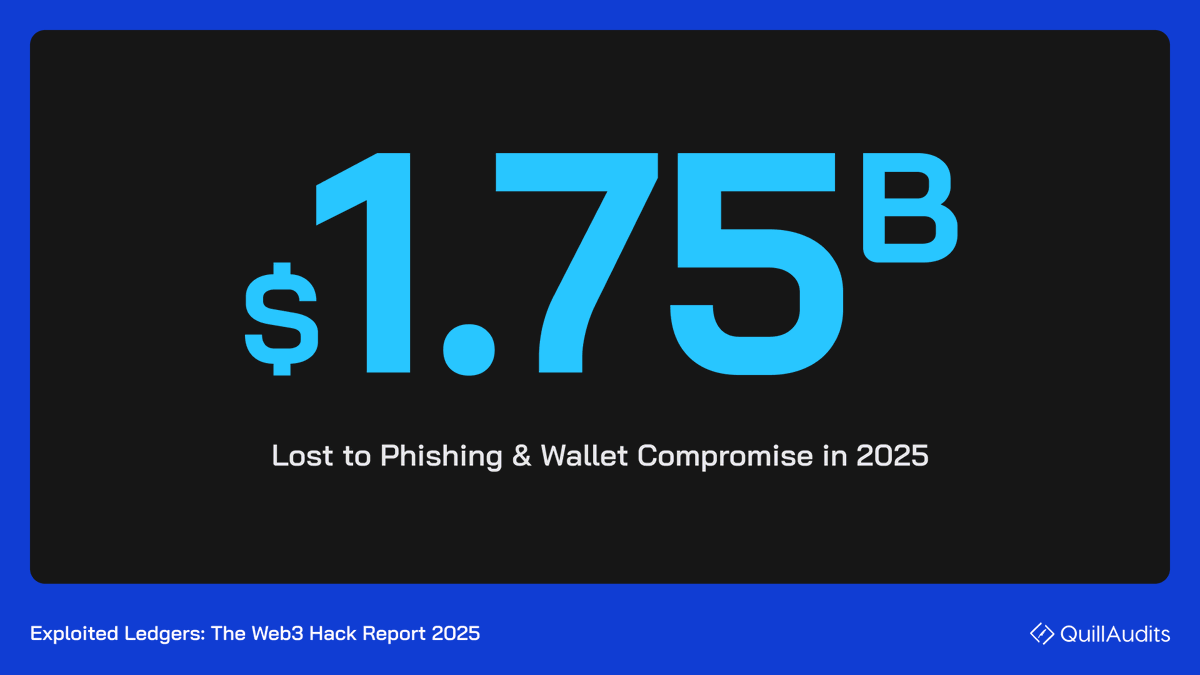

Compromised and revoked TEE machines could pass dstack's attestation verification as perfectly valid, due to missing checks.

What's more? This gap has existed since the library's first commit. @PhalaNetwork Cloud and every protocol built on it inherited this behaviour from day one.

Their GHSA marks this as Critical and notes that it "bypasses entire remote attestation model".

My team at @bluethroat_labs reported this and 5 other vulnerabilities, and this is the response we got:

+ $2,500 in bounty offered
+ disclosure timelines framed as "threat"
+ wiped shared Notion
+ severities downgraded in a public blog post

Here's the full story: 🧵👇🏻

audits might be one of the worst things that happened to this industry - extracting millions for a false sense of security

balancer got 11 audits, still hacked for $128M

cetus hacked for $223M, not a SC bug but a math library bug

it's pretty clear in 2026 that everyone understands the risks - aave / uniswap are considered safe, the rest is clearly not & you should do your due diligence

so honestly if i had a magic wand, i'd hope all protocols spare their audit costs & just use this for something useful

also the black or white distinction between audited vs non-audited is so funny, like the most cracked solidity eng coder you know is considered less secure than a vibe coded certik certified contract

Make it iconic. Halftone Dots, available today. Playground link in the thread.

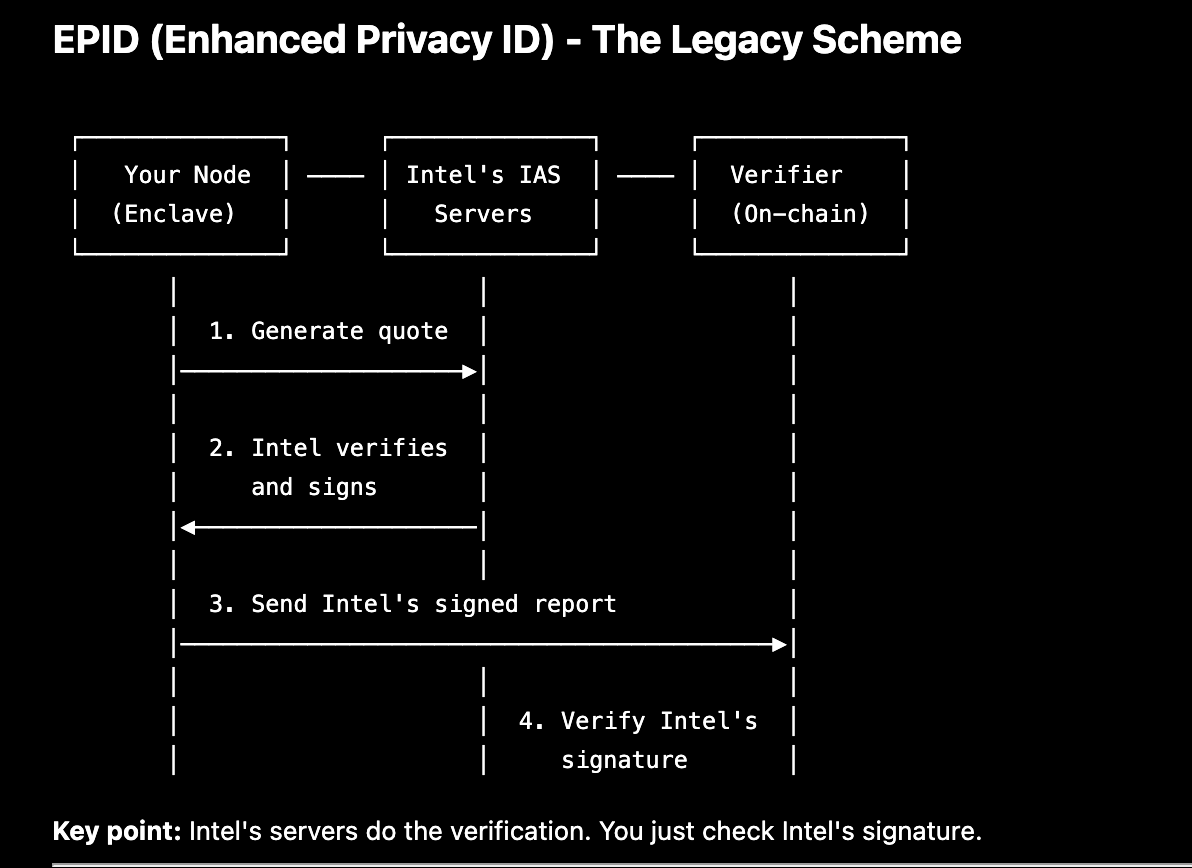

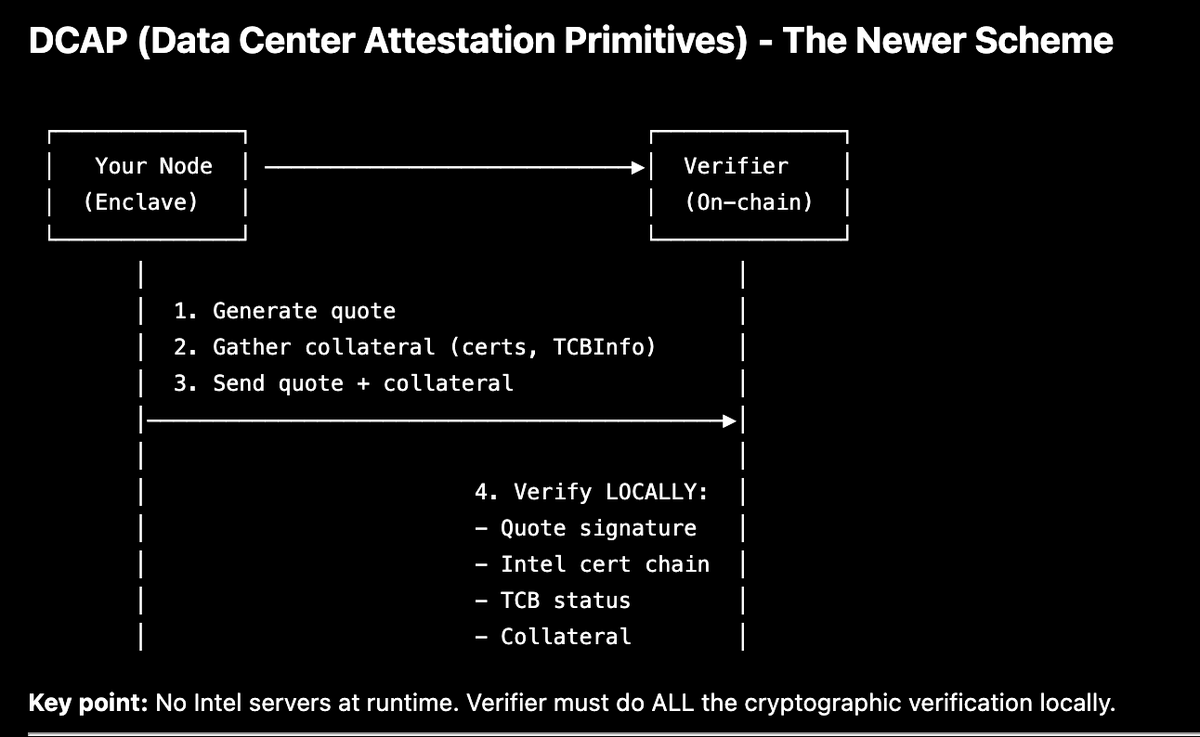

Intel removed itself as the bottleneck in online verification paths when it started moving away from (EPID quotes + IAS verification) to (DCAP collateral + local verification). It gave data centre customers more control of attestation, but also put the burden of correct verification on verifiers. Now, the correctness of attestation is only as good as the verifier implementation. I wonder if that was the right move. ---- For context, these are the EPID and DCAP schemes:
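To make "the burden of correct verification" concrete, here is a minimal Python sketch of the kind of checks a DCAP-style local verifier has to run itself once no online service does it for them. Everything in it is an assumption for illustration: the `Quote` and `Collateral` types, their field names, and the `verify_quote` function are hypothetical and do not mirror any real library's API, and `signature_valid` is a stand-in for the real certificate-chain and signature verification. The TCB status strings follow the values Intel publishes in its TCB info, but the accept-only-`UpToDate` policy is just one possible choice.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Assumed policy: accept only fully up-to-date platforms.
# A real deployment may deliberately allow other statuses.
ACCEPTED_TCB_STATUSES = {"UpToDate"}


@dataclass(frozen=True)
class Collateral:
    """Hypothetical, simplified view of DCAP collateral."""
    tcb_status: str                  # e.g. "UpToDate", "OutOfDate", "Revoked"
    revoked_cert_serials: frozenset  # serials listed in the CRL
    next_update: datetime            # collateral is stale from this time on


@dataclass(frozen=True)
class Quote:
    """Hypothetical, simplified view of an attestation quote."""
    pck_cert_serial: str
    signature_valid: bool  # stand-in for real chain and signature checks


def verify_quote(quote: Quote, collateral: Collateral, now: datetime) -> list:
    """Return a list of rejection reasons; an empty list means accept."""
    reasons = []
    if not quote.signature_valid:
        reasons.append("bad signature")
    # Skipping either of the next two checks lets a revoked machine
    # pass with a cryptographically valid quote.
    if quote.pck_cert_serial in collateral.revoked_cert_serials:
        reasons.append("PCK certificate revoked")
    if collateral.tcb_status not in ACCEPTED_TCB_STATUSES:
        reasons.append(f"TCB status {collateral.tcb_status}")
    # Stale collateral may hide a revocation published after it was fetched.
    if now >= collateral.next_update:
        reasons.append("collateral expired")
    return reasons
```

The point of the sketch is that a valid signature alone says nothing about revocation or TCB level: each of those is a separate check that the verifier can silently omit.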

1/ So you don’t trust your own computer… You’re running code on a machine where the OS, hypervisor, and even the cloud provider might be compromised. You still want to keep your keys, models, or data secret. That’s the problem Trusted Execution Environments (TEEs) try to solve.